SN24 Launches Quasar-3B Architecture: How Bittensor TAO Challenges OpenAI in Long-Context AI

2026/04/21 15:00:03

Introduction

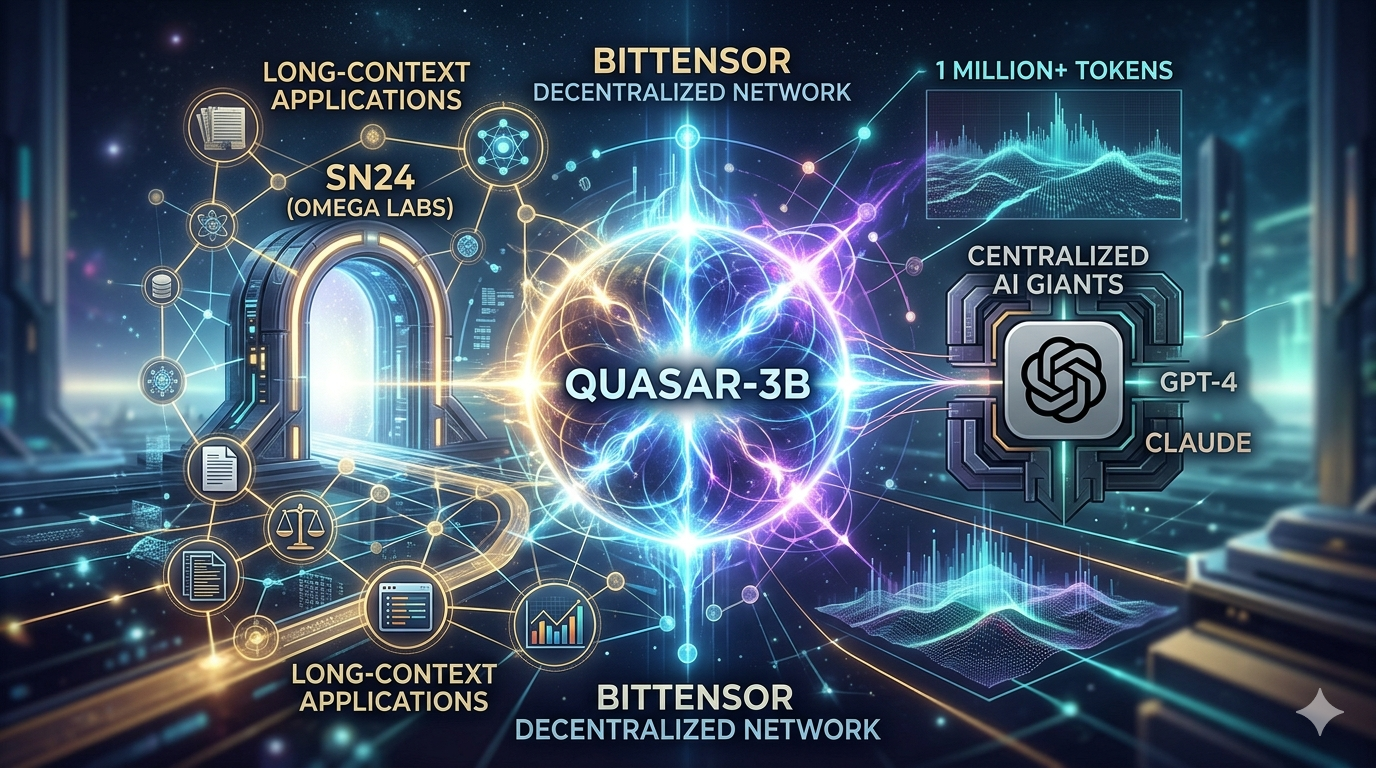

The artificial intelligence landscape saw a significant development in April 2026 when SN24 (OMEGA Labs) announced the launch of Quasar-3B, a looped continuous-time transformer specifically engineered for long-context intelligence.

This announcement represents more than a technical milestone: it signals Bittensor's serious intent to compete directly with centralized AI giants like OpenAI in one of the most critical capability dimensions, the ability to process and reason across extended contexts. With the long-context AI landscape evolving rapidly, the race to build models that can effectively process millions of tokens has become one of the most consequential battles in AI development. Bittensor's decentralized approach, through SN24's Quasar-3B, now enters this arena, challenging the assumption that only massive centralized corporations can push the boundaries of what AI models can accomplish. The question is no longer whether decentralized AI can compete; it is how quickly it can close the gap with established players.

This pillar article explores how Quasar-3B fits into the broader context of Bittensor's ecosystem. For readers new to this space, three foundational topics provide essential background:

- understanding What Is SN24 and its role in the Bittensor network;
- exploring Why Is Long-Context AI Important and its transformative applications across industries;
- and comparing How Does Bittensor's Decentralized Approach work against traditional centralized AI development.

What Is Quasar-3B: SN24's Answer to Long-Context Challenges

Quasar-3B represents OMEGA Labs' solution to one of AI's most persistent limitations: context window degradation. When most models process documents exceeding their training context length, accuracy drops significantly. Research suggests that Claude loses over 30% of its accuracy after 1 million tokens. This limitation fundamentally constrains what AI systems can accomplish in practical applications.

The architecture name "Quasar" evokes the astronomical phenomenon - objects of immense brightness visible across vast distances. Similarly, Quasar-3B aims to illuminate vast contexts, enabling AI to "see" across millions of tokens with maintained accuracy. The "3B" designation refers to the model's parameter count, with "1B Active" indicating that 1 billion parameters remain actively engaged during processing.

Key architectural innovations distinguish Quasar-3B from conventional transformers. The looped continuous-time transformer design enables the model to maintain information flow across extended sequences without the typical degradation that occurs when models process context beyond their optimized range. This architectural choice targets the limitation that has held Bittensor back when competing with OpenAI in long-context applications.

To understand SN24's strategic positioning within the broader ecosystem, it helps to examine what the subnet does. SN24 operates as one of Bittensor's specialized units, focused on advancing the network's long-context capabilities while contributing to the world's largest decentralized multimodal dataset.

The Technical Architecture: How Quasar-3B Achieves Extended Context

Understanding Quasar-3B's technical architecture requires examining why long-context processing has proven so challenging for AI systems. Traditional transformer models use attention mechanisms that scale quadratically with sequence length - doubling context length quadruples computational requirements. This mathematical reality has made extended context processing prohibitively expensive for most applications.
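The quadratic scaling described above can be made concrete with a little arithmetic. The sketch below counts the query-key scores computed by one head of plain full self-attention in one layer. The function name is illustrative, and real models differ in head count, layer count, and attention optimizations.

```python
def attention_score_count(seq_len: int) -> int:
    """Query-key scores in one full self-attention head: n * n."""
    return seq_len * seq_len


# Print the score count for a few context lengths.
for n in (128_000, 256_000, 1_000_000):
    print(f"{n:>9,} tokens -> {attention_score_count(n):,} scores")

# Doubling the context length quadruples the number of scores.
ratio = attention_score_count(256_000) / attention_score_count(128_000)
print(ratio)  # 4.0
```

At 1 million tokens, that is a trillion scores per head per layer, which is why naive context extension becomes expensive so quickly.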

Quasar-3B's looped continuous-time transformer approach addresses this scaling challenge through architectural innovations that maintain computational efficiency even as context length extends. The model achieves this through several mechanisms. First, continuous-time modeling allows the system to process information as a flowing stream rather than discrete blocks, reducing the overhead associated with chunking. Second, the looped architecture creates feedback pathways that enable information to persist across extended sequences without proportional computational increase. Third, optimized inference pipelines ensure that the extended capability translates to practical applications.
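The source does not publish Quasar-3B's internals, so the following is only a generic illustration of the two ideas named above, not the model's actual design. It assumes "looped" means reusing one block of weights several times, and "continuous-time" means treating each pass as a small Euler step of a differential equation. The names `shared_block` and `looped_continuous_update`, the scalar weight, and the toy dynamics are all hypothetical.

```python
import math


def shared_block(state, weight):
    """One reusable transformation f(h); tanh keeps values bounded."""
    return [math.tanh(weight * h) for h in state]


def looped_continuous_update(state, weight, steps, dt):
    """Reuse the same block `steps` times as Euler steps of dh/dt = f(h) - h."""
    for _ in range(steps):
        target = shared_block(state, weight)
        # Move each value a small step (dt) toward the block's output.
        state = [h + dt * (t - h) for h, t in zip(state, target)]
    return state


initial = [0.5, -1.0, 2.0]
final = looped_continuous_update(initial, weight=0.8, steps=10, dt=0.1)
print(final)
```

In this toy version, running more loop iterations adds compute but no parameters, because every pass reuses the same `weight`.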

The benchmark results have attracted significant attention within the AI research community. According to the X announcement from the Quasar team, the model demonstrates competitive performance on LongBench evaluations - the standard benchmark for long-context AI capabilities. While detailed benchmark numbers continue emerging as the model undergoes community testing, early indicators suggest meaningful progress toward the goal of maintaining accuracy across millions of tokens.

The deployment through Bittensor's subnet infrastructure provides additional advantages. The network's 128 active subnets enable specialized optimization for different aspects of long-context processing. Subnets focused on retrieval, processing, and validation can work in concert with Quasar-3B to deliver capabilities that would require significant engineering effort to replicate in centralized systems.

Why Long-Context AI Matters for the AI Race

The significance of long-context AI extends far beyond technical achievement - it represents a fundamental capability shift that enables entirely new categories of application. For enterprises and researchers working with large document sets, legal proceedings, codebases, or research archives, the ability to process entire datasets in context transforms what becomes possible.

Traditional AI approaches required breaking large documents into smaller chunks, losing the ability to see patterns that span the full dataset. A legal team reviewing a merger with thousands of documents could not ask questions that require understanding relationships across all materials. A developer analyzing a million-line codebase could not get AI assistance that understands the full context of the system. Long-context AI removes these constraints, enabling applications across legal, healthcare, finance, and research that were previously impractical.
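The chunking problem can be shown with a toy example. The sketch below splits a made-up contract into fixed-size word chunks and checks whether any single chunk contains both an indemnity clause and the later clause that voids it. The document text, the chunk size, and the helper `chunk_words` are invented for illustration.

```python
def chunk_words(text: str, chunk_size: int) -> list[str]:
    """Split text into consecutive chunks of at most chunk_size words."""
    words = text.split()
    return [
        " ".join(words[i:i + chunk_size])
        for i in range(0, len(words), chunk_size)
    ]


# Two related clauses separated by 100 words of filler.
filler = "routine clause " * 50
document = (
    "Acme indemnifies Beta for all tax liabilities. "
    + filler
    + "The indemnity in section one is void if Beta is acquired."
)

chunks = chunk_words(document, chunk_size=40)
both_in_one_chunk = any("indemnifies" in c and "void" in c for c in chunks)
both_in_full_text = "indemnifies" in document and "void" in document
print(len(chunks), both_in_one_chunk, both_in_full_text)
```

No individual chunk sees both clauses, so a model that reads chunks in isolation cannot connect them. A model that reads the full text can.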

The competitive landscape has intensified as major players recognize this dynamic. OpenAI's GPT-4.5 and Anthropic's Claude Opus 4.6 have pushed context windows to 1 million tokens, with Gemini reaching 2 million. These developments validate the market direction while raising the bar for competitors. Bittensor's entry through Quasar-3B represents the most serious decentralized challenge to this space.

For those seeking deeper understanding of why these capabilities matter and which industries benefit most, the analysis of long-context AI reveals transformative potential across healthcare diagnosis, legal document review, financial portfolio analysis, and academic literature synthesis.

How Bittensor's Decentralized Model Competes with Centralized AI

The comparison between Bittensor's decentralized approach and OpenAI's centralized development model takes on new relevance with the Quasar-3B launch. Understanding how the Bittensor vs OpenAI rivalry plays out in long-context AI requires looking at resources, incentives, specialization, and performance.

From a resource perspective, OpenAI enjoys significant advantages. The company's partnership with Microsoft provides access to massive compute infrastructure. Training runs for GPT-4 reportedly cost over $100 million. This capital intensity creates barriers that decentralized networks struggle to match directly. However, Bittensor's distributed model leverages capital from thousands of participants rather than requiring single-entity investment. The Quasar-3B development demonstrates that meaningful AI capability can emerge from this distributed model.

The incentive structures differ fundamentally. OpenAI's development benefits flow primarily to the company and its investors. Bittensor's crypto-economic model means that contributors to Quasar-3B's development earn TAO tokens, whose value is tied to the growth of the network. This alignment creates different motivation patterns that can drive innovation through competition.

The architecture demonstrates how decentralized networks can specialize effectively. Rather than building general-purpose capability that tries to be everything to everyone, subnets can focus on specific challenges. Quasar-3B focuses exclusively on long-context processing, optimizing deeply for this capability rather than spreading resources across general improvement.

For readers interested in understanding the scalability trade-offs between these approaches, the detailed comparison shows that each model presents distinct advantages depending on use case requirements.

Performance comparisons continue evolving as both approaches mature. OpenAI's models currently lead on general capability benchmarks. Bittensor subnets have demonstrated competitive performance on specific tasks. The long-context dimension represents a domain where Bittensor can potentially lead rather than follow, given the architectural innovations like Quasar-3B's continuous-time transformer design.

The Strategic Importance for TAO and the Bittensor Ecosystem

The launch of Quasar-3B carries significant implications for the broader Bittensor ecosystem and the TAO token specifically. Understanding these implications requires examining how the subnet system creates value for the entire network.

Subnets within Bittensor operate as specialized markets, each focusing on different AI capabilities. The success of individual subnets contributes to overall network value through several mechanisms. First, useful subnets attract queries that generate TAO emissions. Second, successful subnets demonstrate the network's capability, attracting more participants. Third, under the dTAO system, subnet token appreciation benefits TAO holders through the automated market maker (AMM) mechanism.
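The article does not spell out the pool mechanics. As a rough illustration of how a market maker of this kind can price a subnet token against TAO, here is a constant-product sketch. The reserve sizes are invented, fees are ignored, "alpha" is used as a placeholder name for the subnet token, and whether dTAO follows exactly this formula is an assumption rather than something the source confirms.

```python
def swap_tao_for_alpha(tao_reserve: float, alpha_reserve: float, tao_in: float):
    """Constant-product swap (tao * alpha = k), ignoring fees."""
    k = tao_reserve * alpha_reserve
    new_tao = tao_reserve + tao_in
    new_alpha = k / new_tao  # keep the product of reserves constant
    alpha_out = alpha_reserve - new_alpha
    return alpha_out, new_tao, new_alpha


# Hypothetical pool: 1,000 TAO against 10,000 subnet tokens.
tao, alpha = 1_000.0, 10_000.0
price_before = tao / alpha  # TAO per subnet token
alpha_out, tao, alpha = swap_tao_for_alpha(tao, alpha, tao_in=100.0)
price_after = tao / alpha

print(f"price before: {price_before:.4f} TAO")
print(f"price after:  {price_after:.4f} TAO")
print(f"tokens received: {alpha_out:.2f}")
```

In this model, buying the subnet token with TAO raises its price in TAO terms, and the TAO paid in stays in the pool.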

Quasar-3B's launch strengthens the network in multiple ways. The model provides a capability that was previously unavailable in the decentralized AI landscape, attracting users who need long-context processing. The technical innovation demonstrates that Bittensor can produce state-of-the-art AI research. The attention from the launch validates the subnet approach to AI development.

The competitive positioning becomes more compelling with Quasar-3B in production. Enterprise users evaluating AI options now have a decentralized alternative that can match certain capabilities of centralized providers. This competition benefits the entire market while potentially capturing value for the Bittensor ecosystem.

For investors evaluating TAO, the Quasar-3B launch represents a proof point for the investment thesis. The ability to develop competitive AI models through decentralized coordination validates the fundamental approach. Future subnet launches can point to Quasar-3B as evidence that the network can compete with centralized AI development.

Real-World Applications Enabled by Quasar-3B's Long-Context Capability

The practical applications of Quasar-3B's extended context capability span industries and use cases that were previously impractical for AI assistance. Understanding these applications demonstrates why the long-context race matters beyond technical achievement.

Legal industry applications transform when entire case files can be processed in context. Rather than reviewing individual documents in isolation, attorneys can query complete litigation histories, identifying patterns and precedents across all materials. Contract analysis can trace obligations and dependencies across entire agreement libraries. Due diligence can incorporate comprehensive company documentation in a single analysis.

Software development benefits from understanding entire codebases in context. Security audits can analyze complete repositories, identifying vulnerabilities that span multiple files. Code review can understand the full context of changes, not just diffs in isolation. Documentation generation can incorporate comprehensive understanding of system architecture.

Financial analysis reaches new sophistication with complete historical context. Portfolio analysis can incorporate decades of market data. Risk assessment can evaluate positions across entire portfolios simultaneously. Research can synthesize complete earnings histories and regulatory filings.

Healthcare applications enable comprehensive patient analysis. Diagnosis can consider complete medical histories spanning years. Research can analyze entire clinical trial datasets. Regulatory compliance can process comprehensive policy frameworks.

Academic research transforms when entire bodies of literature can be engaged. Literature review can synthesize findings across decades of publications. Cross-disciplinary research can connect insights across fields. Grant analysis can evaluate complete proposal histories.

The blockchain industry specifically benefits from these capabilities. Smart contract auditing can analyze entire protocol implementations. DeFi analysis can evaluate ecosystem interactions comprehensively. On-chain analysis can incorporate complete transaction histories.

Future Roadmap: What's Next for SN24 and Quasar

The Quasar-3B launch represents a milestone rather than a final destination. According to information from the subnet's documentation, the roadmap extends through 2026 and beyond with multiple development phases.

Q4 2025 saw the initial subnet launch on Bittensor testnet, implementation of LongBench evaluation, deployment of mock mode, and integration of WandB monitoring. These foundation elements established the infrastructure for ongoing development.

Q1 2026 focused on expanding long-context capabilities and improving evaluation metrics. The Quasar-3B announcement in April 2026 represents the result of these efforts, but continued improvement remains the focus.

Expected developments through the remainder of 2026 include additional model variants optimized for different use cases, expanded context lengths beyond current capabilities, integration with other Bittensor subnets for enhanced capability delivery, and community-driven improvements through the incentive mechanism.

The competitive pressure from centralized AI providers ensures continued innovation across the industry. As OpenAI, Anthropic, and Google push context windows further, decentralized competitors must match this progress while demonstrating distinctive advantages. Bittensor's approach of specialization through subnets provides a framework for this continued competition.

For the broader decentralized AI movement, Quasar-3B represents a proof point. The demonstration that competitive AI capabilities can emerge from decentralized networks validates the fundamental thesis. Future projects can build on this foundation, potentially accelerating development of decentralized AI alternatives.

Should I Invest in TAO on KuCoin?

For traders evaluating Bittensor ecosystem exposure, the Quasar-3B launch provides additional context for investment decisions.

Bullish Considerations

- Competitive validation: Quasar-3B demonstrates that Bittensor can develop state-of-the-art AI capabilities, validating the decentralized approach
- Long-context market opportunity: The extended context AI market represents a significant and growing opportunity worth billions
- Subnet ecosystem strength: The success of SN24's Quasar-3B strengthens the broader subnet ecosystem
- Technical differentiation: Architectural innovations like continuous-time transformers provide distinctive capabilities

Risk Factors

- Centralized competition: Major tech companies continue investing billions in long-context AI, potentially outpacing decentralized alternatives
- Execution uncertainty: Translating architectural innovations into practical applications requires continued execution
- Regulatory environment: Both cryptocurrency and AI face evolving regulatory frameworks globally
- Crypto market volatility: TAO remains highly volatile compared to traditional assets

Strategic Framework

The Quasar-3B launch represents a meaningful development for the Bittensor ecosystem but should be evaluated within overall portfolio context. Consider position sizing based on conviction in the decentralized AI thesis while maintaining appropriate risk management given crypto market volatility.

How to Trade TAO on KuCoin

Step 1: Create Your KuCoin Account

If you are ready to trade TAO, the first step is creating your KuCoin account. New users can register at KuCoin and Get Up to 11,000 USDT in New User Rewards - a substantial bonus that can boost your initial trading capital. Simply visit the KuCoin website or download the mobile app, complete the registration process with your email or phone number, and verify your identity to unlock these rewards. The registration process takes just a few minutes, and the welcome bonus provides an excellent starting point for exploring TAO trading opportunities.

Step 2: Execute Your Trade

Once your account is set up, search for "TAO/USDT" in KuCoin's trading interface. TAO typically offers strong liquidity for most position sizes, though liquidity can vary with market conditions. During periods of high volatility around major announcements like the Quasar-3B launch, consider using limit orders rather than market orders to manage slippage. Evaluate your entry point based on current market conditions and your risk tolerance before executing the trade.
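To see why limit orders help with slippage, consider how a market buy order walks up the order book. The sketch below uses a hypothetical set of TAO/USDT ask levels; the prices, sizes, and the function `market_buy_average_price` are invented for illustration and do not come from KuCoin's API.

```python
def market_buy_average_price(asks, quantity):
    """Fill a buy against ask levels [(price, size), ...], best price first."""
    remaining = quantity
    cost = 0.0
    for price, size in asks:
        fill = min(remaining, size)
        cost += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("not enough liquidity in the book")
    return cost / quantity


# Hypothetical ask side of the book: (price in USDT, size in TAO).
asks = [(300.0, 2.0), (301.0, 3.0), (305.0, 10.0)]

avg = market_buy_average_price(asks, quantity=8.0)
best_ask = asks[0][0]
slippage_pct = (avg - best_ask) / best_ask * 100
print(f"average fill {avg:.2f} USDT, slippage {slippage_pct:.2f}%")
```

With these numbers, the market order fills across all three levels and pays more than the best ask on average. A limit order at 301 USDT would fill only the first 5 TAO and leave the rest resting in the book.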

Step 3: Position Management

Given the volatility inherent in AI crypto assets, establish clear profit targets and stop-loss levels before entering a position. Monitor developments from SN24, broader Bittensor subnet launches, and the competitive landscape between decentralized and centralized AI. Adjust your position based on ongoing assessment of the thesis rather than emotional responses to price movements.
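One common way to connect a stop-loss level to position size is fixed-fractional risk: choose how much of the account to risk on the trade, then divide by the distance between entry and stop. The sketch below uses hypothetical account and price values and ignores fees, slippage, and gaps through the stop. It illustrates the arithmetic and is not a recommendation of any particular risk level.

```python
def position_size(account_equity, risk_fraction, entry_price, stop_price):
    """Units to buy so that hitting the stop loses about risk_fraction of equity."""
    if stop_price >= entry_price:
        raise ValueError("stop must be below entry for a long position")
    risk_amount = account_equity * risk_fraction
    return risk_amount / (entry_price - stop_price)


# Hypothetical numbers: risk 1% of a 10,000 USDT account,
# entering at 300 USDT with a stop at 270 USDT.
size = position_size(
    account_equity=10_000.0,
    risk_fraction=0.01,
    entry_price=300.0,
    stop_price=270.0,
)
print(f"{size:.2f} TAO")
```

A wider stop produces a smaller position for the same risk budget, which is how the sizing adapts to volatility.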

Conclusion

The launch of Quasar-3B by SN24 represents a watershed moment for decentralized AI. By demonstrating that Bittensor can develop competitive long-context AI capabilities through its distributed network, the project challenges assumptions about who can push the boundaries of artificial intelligence. The architectural innovations in Quasar-3B's looped continuous-time transformer provide a foundation for continued advancement.

The competitive dynamics between decentralized and centralized AI continue evolving. OpenAI retains advantages in capital and scale. However, Bittensor's incentive alignment, specialization through subnets, and global participation create different advantages. The Bittensor vs OpenAI competition has become more interesting with this development.

For the broader AI industry, the coexistence of multiple approaches benefits everyone. Competition drives innovation while diversity provides resilience. The demonstration that decentralized networks can compete validates alternative development structures.

For investors, the Quasar-3B launch provides evidence supporting the Bittensor investment thesis. However, position sizing should reflect early-stage technology adoption and crypto market volatility.

FAQs

Q: What is Quasar-3B?

A: Quasar-3B is a long-context AI model launched by SN24 (OMEGA Labs) on the Bittensor network in April 2026. It uses a looped continuous-time transformer architecture designed for efficient reasoning across millions of tokens. The "3B" refers to 3 billion parameters with 1 billion active during processing.

Q: How does Quasar-3B compare to OpenAI's long-context models?

A: Quasar-3B specifically targets the long-context challenge with architectural innovations that maintain accuracy across extended sequences. While detailed benchmark comparisons continue emerging, the model demonstrates competitive performance on LongBench evaluations. The decentralized development model provides different advantages than OpenAI's centralized approach.

Q: What makes the Quasar architecture different from traditional transformers?

A: Quasar uses a looped continuous-time transformer design that enables information to flow across extended sequences without proportional computational increase. This addresses the quadratic scaling problem that makes traditional transformer context extension expensive.

Q: How does SN24 fit into the broader Bittensor ecosystem?

A: SN24 (OMEGA Labs) is one of Bittensor's 128 active subnets, focused on creating the world's largest decentralized multimodal dataset. The subnet contributes to the ecosystem both through data infrastructure and through AI capabilities like Quasar-3B.

Q: What are the real-world applications for Quasar-3B?

A: Applications include legal document analysis across complete case files, software security auditing across entire codebases, financial analysis incorporating decades of market data, healthcare analysis across complete patient histories, and academic research synthesis across entire bodies of literature.