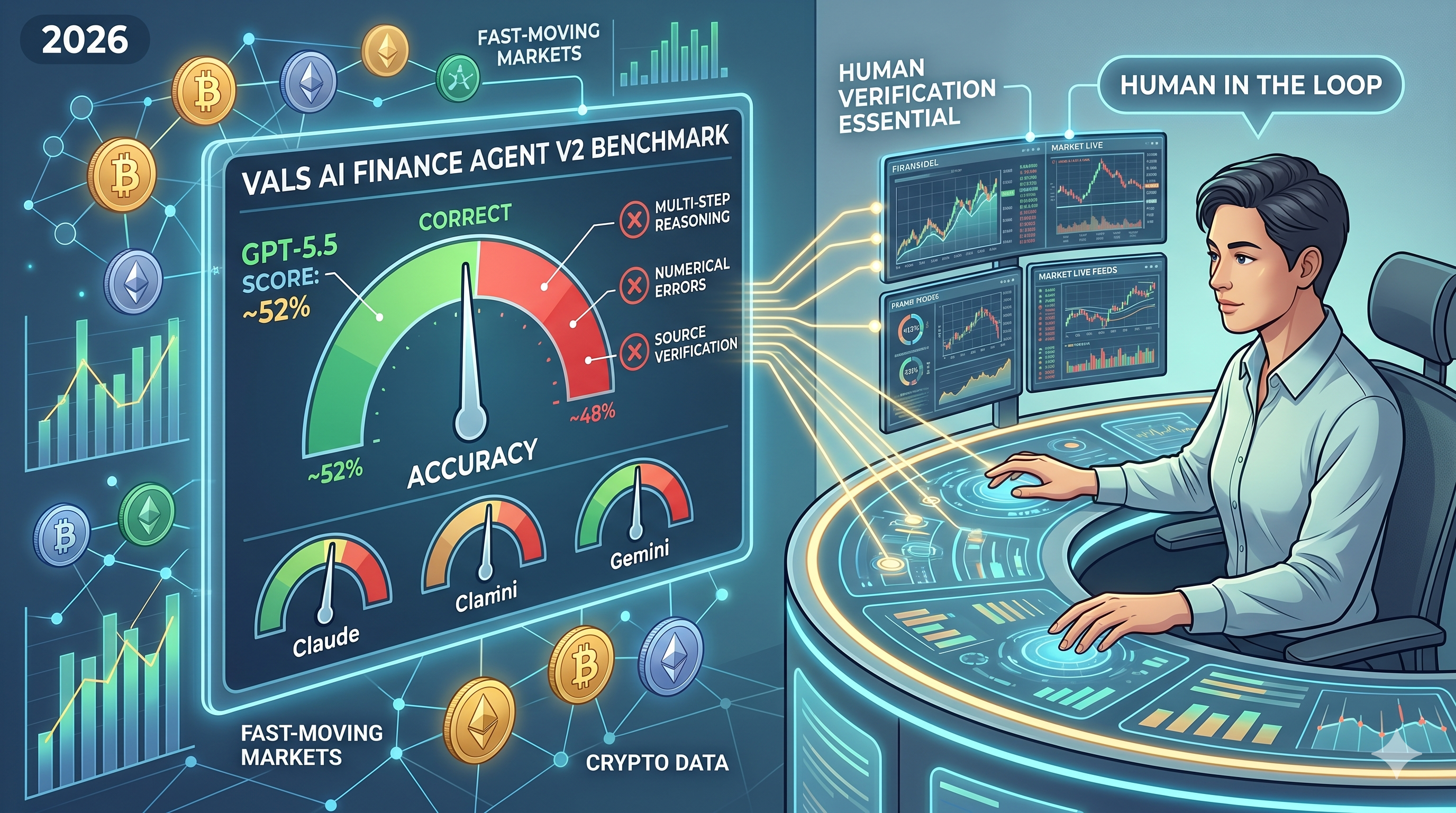

Can AI Replace Financial Analysts in 2026? Vals AI Finance Agent v2 Reveals GPT-5.5 Hits Just 52% Accuracy

2026/05/15 03:09:02

Introduction

Even the most advanced AI model in 2026, OpenAI's GPT-5.5, answers only about 52% of real-world financial analyst tasks correctly, according to the latest Vals AI Finance Agent v2 benchmark, released in May 2026. The short answer to whether AI can replace financial analysts this year is no, not yet. While large language models have grown dramatically more capable, the benchmark shows they still fail roughly half of the multi-step research, modeling, and data-retrieval tasks that junior analysts handle daily. That gap matters for traders, investors, and crypto market participants who increasingly rely on AI-generated research.

This article breaks down what the Vals AI v2 results actually measure, why accuracy plateaus near 50%, which tasks AI handles well, and how human analysts remain essential — especially in fast-moving markets like cryptocurrency.

What Is the Vals AI Finance Agent v2 Benchmark?

Vals AI Finance Agent v2 is an industry benchmark that tests large language models on realistic financial analyst workflows rather than isolated trivia questions. According to Vals AI's May 2026 release notes, the v2 version expands on the original benchmark by adding multi-step agentic tasks — meaning the AI must plan, retrieve data, perform calculations, and synthesize conclusions across multiple tools.

The benchmark scores models on real tasks pulled from equity research, credit analysis, and corporate finance work. These include extracting figures from 10-K filings, building DCF inputs, reconciling segment data across quarters, and answering questions that require navigating both structured tables and unstructured prose.

How the Benchmark Differs from Earlier Tests

Earlier AI finance benchmarks measured single-turn question answering — closer to a multiple-choice exam. Vals AI v2 measures end-to-end task completion, which is far harder. A model must not only know the answer but also retrieve the correct supporting data, avoid hallucinating figures, and chain reasoning across several steps without losing context.

This shift matters because real analyst work almost never resembles a single question with a clean answer. It involves dozens of micro-decisions, source verification, and judgment calls.

How Did GPT-5.5 Score on Vals AI Finance Agent v2?

GPT-5.5 scored approximately 52% accuracy on the Vals AI Finance Agent v2 benchmark, making it the top-performing model in the May 2026 evaluation — but still well short of professional reliability. Based on the Vals AI leaderboard data published in May 2026, GPT-5.5 narrowly edged out Anthropic's Claude and Google's Gemini frontier models, all of which clustered in the high-40% to low-50% range.

A 52% score sounds modest, but it represents meaningful progress. Earlier-generation models, including GPT-4-class systems tested in 2024, scored in the 30-40% range on comparable tasks. The trajectory is upward, but the curve is flattening as benchmarks get harder.

Why 52% Is Not Good Enough for Production Use

An accuracy rate close to a coin flip is unacceptable for any task involving money. In financial analyst workflows, an error rate above 5-10% is generally considered unusable without human review. At 52% accuracy, every output requires verification, which eliminates most of the time savings AI is supposed to deliver.
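To see why verification erodes the savings, here is a rough back-of-the-envelope sketch. All of the timings are hypothetical placeholders, not figures from the Vals AI report, and the model assumes that a wrong output is caught during review and then redone by hand from scratch.

```python
def expected_minutes_with_ai(
    accuracy: float,
    draft_minutes: float,
    verify_minutes: float,
    manual_minutes: float,
) -> float:
    """Expected analyst time per task when every AI output is verified.

    Assumption: a wrong output is caught during verification and the task
    is then redone manually from scratch.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    redo_minutes = (1.0 - accuracy) * manual_minutes
    return draft_minutes + verify_minutes + redo_minutes


# Hypothetical timings: 60 min by hand, 2 min for the AI draft, 25 min to verify.
MANUAL, DRAFT, VERIFY = 60.0, 2.0, 25.0
at_52 = expected_minutes_with_ai(0.52, DRAFT, VERIFY, MANUAL)
at_95 = expected_minutes_with_ai(0.95, DRAFT, VERIFY, MANUAL)
print(f"52% accuracy: {at_52:.1f} min per task vs {MANUAL:.0f} min by hand")
print(f"95% accuracy: {at_95:.1f} min per task vs {MANUAL:.0f} min by hand")
```

Under these made-up inputs, the 52% case saves only a few minutes per task, while the 95% case roughly halves the time. The exact numbers depend entirely on the assumed timings.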

The Vals AI report notes that errors are not evenly distributed. Models perform well on definitional questions and basic retrieval but degrade sharply on multi-step calculations, cross-document reconciliation, and tasks requiring industry context.

Where Does AI Still Fail in Financial Analysis?

AI fails most often on tasks requiring numerical precision, source verification, and contextual judgment. The Vals AI v2 results identify four recurring failure modes that persist even in the strongest 2026 models.

Multi-Step Numerical Reasoning

Models lose accuracy as calculations chain together. A single DCF model can involve 40-50 linked assumptions. According to the Vals AI breakdown, accuracy drops below 35% on tasks requiring more than five sequential calculation steps, even when each individual step is straightforward.
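One simple way to see why chained calculations degrade is to assume each step succeeds independently with the same probability. That independence assumption is ours, not part of the Vals AI methodology, and real errors are likely correlated, so treat this as an illustration only.

```python
def chain_accuracy(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct.

    Assumption: steps fail independently with the same probability.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


def implied_per_step_accuracy(overall_accuracy: float, steps: int) -> float:
    """Per-step accuracy that would produce the given end-to-end accuracy."""
    if not 0.0 <= overall_accuracy <= 1.0:
        raise ValueError("overall_accuracy must be between 0 and 1")
    if steps <= 0:
        raise ValueError("steps must be positive")
    return overall_accuracy ** (1.0 / steps)


# A model that is right 90% of the time per step, over five chained steps.
five_step = chain_accuracy(0.90, 5)
# Per-step accuracy consistent with 35% end-to-end accuracy over five steps.
per_step = implied_per_step_accuracy(0.35, 5)
print(f"90% per step over 5 steps: {five_step:.1%} end to end")
print(f"35% end to end over 5 steps implies about {per_step:.0%} per step")
```

Under this simplified model, a per-step accuracy of roughly 81% is enough to pull a five-step task down to about 35% end to end, which is why each step can look straightforward while the chain as a whole fails.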

Hallucinated Financial Figures

AI models still invent plausible-sounding numbers when correct data is not easily retrievable. This is the most dangerous failure mode in finance because hallucinations often pass surface-level review. Analysts who trust AI outputs without checking source documents risk publishing fabricated figures.
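A first-pass check against this failure mode can be sketched as follows: extract every number from an AI-generated answer and flag any that never appear in the source document. The function names are hypothetical, and the check is deliberately crude. It ignores units and scale, and it will also flag legitimately derived figures such as sums or ratios, so it narrows down what a human needs to inspect and does not replace the review.

```python
import re
from decimal import Decimal

# Matches integers and decimals with optional thousands separators, e.g. "1,234.5".
_NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


def extract_numbers(text: str) -> set[Decimal]:
    """Return every numeric literal in the text, with commas stripped."""
    return {Decimal(m.group().replace(",", "")) for m in _NUMBER.finditer(text)}


def unsupported_numbers(answer: str, source: str) -> list[Decimal]:
    """Numbers that appear in the answer but nowhere in the source text."""
    return sorted(extract_numbers(answer) - extract_numbers(source))


# Hypothetical source excerpt and model answer, for illustration only.
source = "Segment revenue was $1,234.5 million in 2025, up 12% year over year."
answer = "Segment revenue reached $1,234.50 million in 2025, up 14%."
flagged = unsupported_numbers(answer, source)
print(flagged)
```

Using `Decimal` means `1,234.50` and `1,234.5` compare as equal, so only the growth figure that is absent from the source is flagged here.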

Cross-Document Reconciliation

Comparing data across multiple filings — for example, reconciling a company's segment revenue between a 10-Q and an investor presentation — remains a persistent weakness. Models often pull the right numbers from one source but miss inconsistencies that an experienced analyst would catch.
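The reconciliation step itself is mechanical once the figures have been extracted, as the minimal sketch below suggests. The segment names, figures, and the 0.5% tolerance are all hypothetical, and the sketch assumes both sources have already been parsed into segment-to-revenue mappings, which is the part models tend to get wrong.

```python
import math


def reconcile_segments(
    filing: dict[str, float],
    presentation: dict[str, float],
    rel_tol: float = 0.005,
) -> list[tuple[str, str]]:
    """Compare segment figures from two sources and list every discrepancy."""
    issues: list[tuple[str, str]] = []
    for segment in sorted(set(filing) | set(presentation)):
        if segment not in filing:
            issues.append((segment, "missing from filing"))
        elif segment not in presentation:
            issues.append((segment, "missing from presentation"))
        elif not math.isclose(filing[segment], presentation[segment], rel_tol=rel_tol):
            issues.append(
                (segment, f"mismatch: {filing[segment]} vs {presentation[segment]}")
            )
    return issues


# Hypothetical segment revenue in millions, for illustration only.
filing_10q = {"Cloud": 4210.0, "Devices": 1875.0, "Services": 960.0}
investor_deck = {"Cloud": 4210.0, "Devices": 1910.0, "Advertising": 310.0}
issues = reconcile_segments(filing_10q, investor_deck)
for segment, reason in issues:
    print(f"{segment}: {reason}")
```

A flagged mismatch is only a prompt for investigation. Deciding whether it reflects a restatement, a changed segment definition, or an actual error still takes an analyst's judgment.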

Industry Context and Judgment

Models lack the tacit knowledge analysts develop from years of covering a sector. They may correctly compute a ratio but fail to recognize when that ratio is unusual for the industry or when management is using a non-standard definition.

What Tasks Can AI Handle Well in 2026?

AI excels at high-volume, low-stakes, well-defined tasks where speed matters more than perfect accuracy. Even at 52% overall accuracy, GPT-5.5 and peer models deliver real productivity gains in specific workflows where errors are easy to catch or low-cost.

These include:

- Summarization of earnings calls, research notes, and filings, where the analyst still reads the source for critical sections
- First-draft writing of routine sections like company overviews or industry backgrounds
- Data extraction from standardized tables in well-structured documents
- Code generation for Excel formulas, Python scripts, and SQL queries used in modeling
- Translation of foreign-language filings and news
- Initial screening of large document sets to identify which require human review

The pattern is clear: AI augments analysts effectively when humans remain in the loop and when errors are recoverable. AI fails when used as an autonomous decision-maker.

How Does This Apply to Crypto Market Analysis?

Crypto analysts face the same AI limitations as traditional finance analysts — plus additional challenges unique to digital assets. AI models trained primarily on equity research data perform even worse on crypto-specific tasks, where structured filings do not exist and most signal lives in on-chain data, social sentiment, and protocol documentation.

Key crypto-specific challenges include:

On-Chain Data Interpretation

Reading wallet flows, smart contract interactions, and liquidity pool dynamics requires specialized tools and judgment that general-purpose AI agents handle poorly. A model may correctly query a block explorer but misinterpret what the data means for price action.

Protocol-Specific Knowledge

Each protocol — whether a layer-1 chain, DEX, or restaking platform — has unique tokenomics, governance rules, and risk vectors. AI models trained on broad data often miss critical protocol-specific nuances that determine whether a thesis is valid.

Real-Time Market Conditions

Crypto markets move 24/7 and respond to news within seconds. AI models with knowledge cutoffs or slow retrieval pipelines are structurally disadvantaged compared to human traders watching live order books and social feeds.

Derivatives and Options Complexity

For traders using options strategies, AI cannot reliably assess dealer gamma positioning, skew dynamics, or volatility regime shifts — areas where human judgment and specialized models remain dominant.

Conclusion

The Vals AI Finance Agent v2 benchmark settles the 2026 version of the AI-versus-analyst debate clearly: even the strongest model available, GPT-5.5, hits just 52% accuracy on realistic financial analyst tasks. That is impressive progress compared to prior generations, but it is nowhere near the reliability threshold needed to replace human professionals.

AI handles summarization, drafting, extraction, and code generation well, making analysts faster, not obsolete. It fails on multi-step calculations and cross-document reconciliation, invents figures when data is hard to retrieve, and lacks the judgment that defines senior analyst work. In crypto markets specifically, AI faces additional disadvantages from sparse training data, real-time dynamics, and protocol-specific complexity.

The practical takeaway for traders and investors is straightforward: use AI to accelerate research, but never outsource final decisions to a model that gets half its answers wrong. Pair AI tools with reliable trading infrastructure — like KuCoin's spot, futures, and options markets — and keep human judgment in the loop. The analyst is not being replaced in 2026; the analyst is being upgraded.

FAQs

Which AI model currently ranks highest on financial analyst benchmarks?

GPT-5.5 ranks highest on the Vals AI Finance Agent v2 benchmark as of May 2026, scoring approximately 52% accuracy. Claude and Gemini frontier models cluster closely behind in the high-40s to low-50s range. The gap between the top three models is narrow, and rankings have shifted with each new release cycle throughout 2025 and 2026.

Are AI hedge funds outperforming human-managed funds?

No consistent evidence shows AI-only hedge funds outperforming human-managed funds on a risk-adjusted basis. Most successful quantitative funds use machine learning as one input among many, with human portfolio managers making final allocation decisions. Pure AI-driven strategies have struggled during regime shifts and black-swan events where historical data offers limited guidance.

Can AI accurately predict crypto prices?

AI cannot reliably predict crypto prices over any meaningful time horizon. Price movements depend on macro liquidity, regulatory news, on-chain flows, and sentiment shifts that resist pattern-matching. AI tools are more useful for processing information faster than for forecasting — helping traders understand what just happened, not what will happen next.

What skills should financial analysts develop to stay relevant?

Analysts should develop prompt engineering, AI output verification, and domain expertise that AI cannot replicate. Specializing in a sector, building proprietary data sources, and cultivating client relationships all create defensible value. Generalist research tasks are increasingly commoditized; deep, specific expertise is not.

Is the 52% Vals AI score expected to improve significantly in 2026?

Yes, the score is expected to rise as new models launch throughout 2026, but the pace of improvement on the hardest tasks is slowing. Based on the gap between Vals AI v1 and v2 results, frontier models are gaining roughly 8-12 percentage points per year on complex multi-step tasks. Reaching production-grade reliability above 90% likely remains several years away.
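The "several years" estimate can be sanity-checked with a straight-line extrapolation from the figures above. The linear assumption is ours, and since the pace of improvement is described as slowing, it probably understates the time required.

```python
def years_to_reach(current_pct: float, target_pct: float, gain_pp_per_year: float) -> float:
    """Years needed to reach the target, assuming a constant yearly gain."""
    if gain_pp_per_year <= 0:
        raise ValueError("gain_pp_per_year must be positive")
    return max(0.0, (target_pct - current_pct) / gain_pp_per_year)


# 52% today, 90% target, and the 8-12 percentage point yearly range cited above.
fastest = years_to_reach(52, 90, 12)
slowest = years_to_reach(52, 90, 8)
print(f"Between {fastest:.1f} and {slowest:.1f} years at a constant pace")
```

Even under this optimistic constant-pace assumption, the 90% threshold sits roughly three to five years out.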