How Does Bittensor’s Decentralized Approach Compare to OpenAI’s Centralized Model in Scalability and Performance

2026/04/21 12:09:02

Introduction

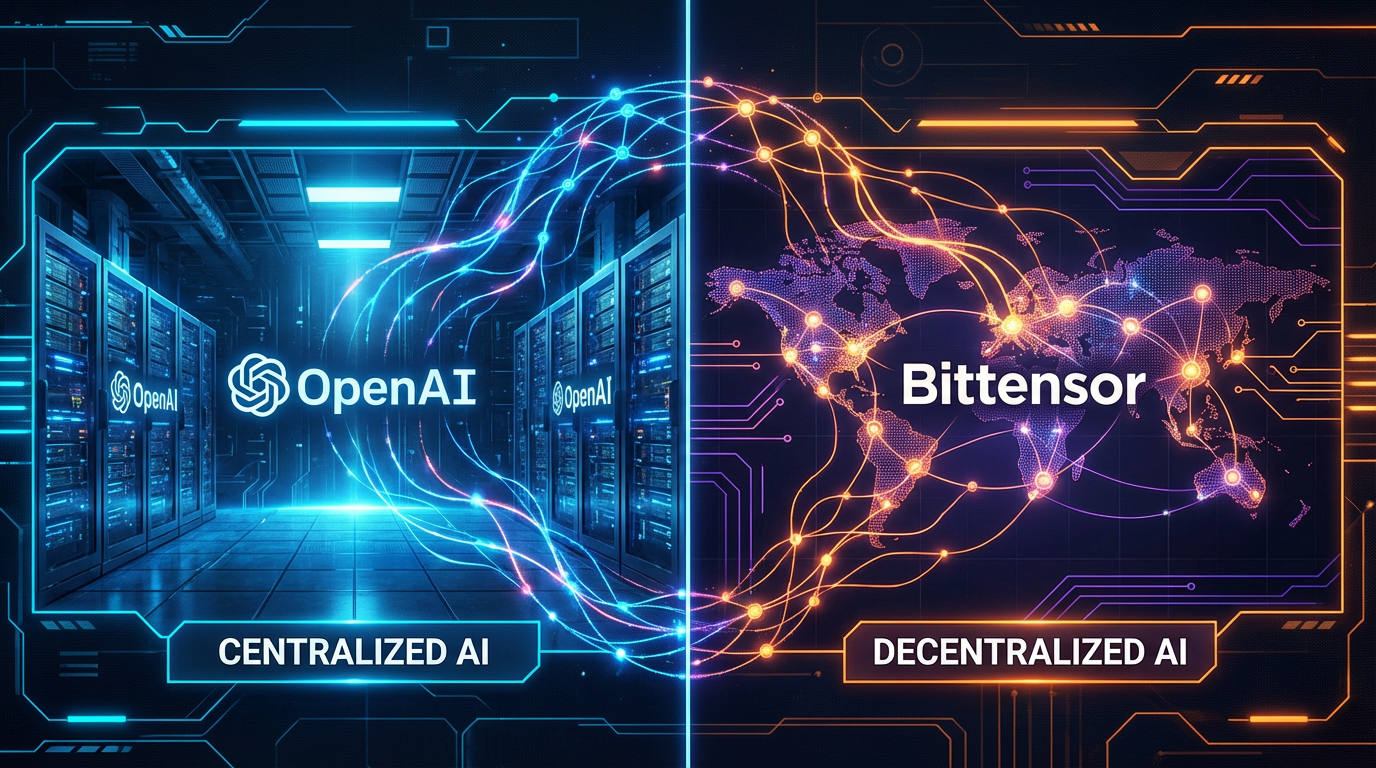

The artificial intelligence landscape is changing rapidly, and a fundamental debate has emerged: should AI development remain in the hands of centralized corporations, or can decentralized networks challenge the status quo?

This question sits at the heart of the comparison between Bittensor and OpenAI. While OpenAI has become synonymous with centralized AI development, backing its GPT models with billions of dollars in computing resources, Bittensor takes a radically different approach by creating a decentralized marketplace where machine intelligence emerges from global participant contributions. The implications extend beyond technology - they touch fundamental questions about who controls the future of artificial intelligence. With Bittensor’s 128 active subnets processing diverse AI tasks and OpenAI’s massive centralized infrastructure powering ChatGPT for hundreds of millions of users, the comparison reveals trade-offs that will shape the AI industry for years to come.

What Is Bittensor: The Decentralized AI Marketplace

Bittensor represents a fundamental rethinking of how artificial intelligence can be developed and deployed. Launched in 2019, the protocol creates a decentralized marketplace for machine intelligence where contributors are rewarded in TAO tokens for their computational resources and AI capabilities. Unlike traditional AI development where a single entity controls the model, Bittensor’s network operates through thousands of distributed nodes, each contributing to the collective intelligence.

The architecture centers on a blockchain-based incentive system. Validators stake TAO and score the quality of miners’ AI responses, while miners provide computational resources and run AI models to serve queries. This crypto-economic design aligns participant incentives with network quality - those who contribute valuable intelligence earn more TAO, while poor performers earn less and risk being deregistered. The result is a self-regulating ecosystem where competition drives improvement.
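The incentive loop above can be sketched as follows, assuming a plain stake-weighted average: each validator scores each miner between 0 and 1, each validator’s scores count in proportion to its stake, and one block’s miner emission is split in proportion to the combined scores. This is a simplification; Bittensor’s actual mechanism (Yuma Consensus) adds clipping and bonding steps not modeled here, and the function and participant names are hypothetical.

```python
from typing import Dict


def miner_rewards(
    validator_stake: Dict[str, float],
    scores: Dict[str, Dict[str, float]],
    emission: float,
) -> Dict[str, float]:
    """Split `emission` among miners by stake-weighted validator scores.

    Hypothetical simplification, not Bittensor's Yuma Consensus.
    `scores[v][m]` is validator v's score (0..1) for miner m.
    """
    total_stake = sum(validator_stake.values())
    if total_stake <= 0:
        raise ValueError("total validator stake must be positive")
    consensus: Dict[str, float] = {}
    for validator, stake in validator_stake.items():
        weight = stake / total_stake  # validators with more stake count more
        for miner, score in scores.get(validator, {}).items():
            consensus[miner] = consensus.get(miner, 0.0) + weight * score
    total_score = sum(consensus.values())
    if total_score == 0:
        return {miner: 0.0 for miner in consensus}
    # Each miner earns emission in proportion to its combined score
    return {miner: emission * s / total_score for miner, s in consensus.items()}


rewards = miner_rewards(
    {"v1": 300.0, "v2": 100.0},
    {"v1": {"m1": 0.9, "m2": 0.1}, "v2": {"m1": 0.5, "m2": 0.5}},
    emission=1.0,
)
```

With 300 and 100 TAO staked, the first validator’s view dominates: m1 receives about 0.8 of the emission and m2 about 0.2.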

A defining feature is the subnet system. As of April 2026, Bittensor supports 128 active subnets, each specialized for different AI tasks. These subnets range from language models to compute resources to data generation. The modular design allows the network to scale by adding specialized components without disrupting existing functionality. Each subnet operates independently while contributing to the broader ecosystem.

The TAO token mirrors Bitcoin’s economics with a fixed 21 million supply and halving mechanism. This scarcity model contrasts sharply with traditional tech companies where value accrues to shareholders rather than contributors. For participants, TAO represents not just a cryptocurrency but a stake in the network’s intelligence production.
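A Bitcoin-style cap follows from a geometric series: if the per-block reward starts at r and halves every N blocks, total issuance approaches r·N·(1 + 1/2 + 1/4 + …) = 2rN. The sketch below assumes a starting reward of 1 TAO per block and a halving interval of 10.5 million blocks, illustrative parameters chosen so that 2rN equals 21 million; the live network’s exact halving schedule may differ, so this only illustrates the arithmetic.

```python
def cumulative_supply(
    blocks: int, initial_reward: float = 1.0, halving_interval: int = 10_500_000
) -> float:
    """Total tokens issued after `blocks` blocks under a halving schedule.

    Illustrative assumptions: `initial_reward` tokens per block, with the
    reward halving every `halving_interval` blocks.
    """
    supply = 0.0
    reward = initial_reward
    remaining = blocks
    while remaining > 0 and reward > 1e-12:
        era_blocks = min(remaining, halving_interval)
        supply += era_blocks * reward  # constant reward within one era
        remaining -= era_blocks
        reward /= 2  # halving at the end of each era
    return supply


print(cumulative_supply(21_000_000))  # two full eras
```

`cumulative_supply(21_000_000)` returns 15,750,000: half of the 21 million cap is issued in the first era, a quarter in the second, and so on, so the total approaches but never exceeds the cap.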

The February 2025 introduction of dynamic TAO (dTAO) transformed the ecosystem further. Each subnet gained its own token trading against TAO, creating liquid markets for subnet participation. This innovation added asymmetric opportunities - early participants in successful subnets benefit from token appreciation alongside service rewards.
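Each subnet pool prices its token (often called an alpha token) against TAO through an automated market maker. As a minimal sketch, assuming a textbook constant-product pool (x · y = k) with no fees, buying subnet tokens with TAO raises their price as reserves shift. The reserve figures and class name are hypothetical, and the live dTAO pool implementation may differ in detail.

```python
from dataclasses import dataclass


@dataclass
class ConstantProductPool:
    """Hypothetical TAO/subnet-token pool obeying x * y = k (no fees)."""

    tao_reserve: float
    alpha_reserve: float

    def spot_price(self) -> float:
        """TAO per subnet token implied by the current reserves."""
        return self.tao_reserve / self.alpha_reserve

    def buy_alpha(self, tao_in: float) -> float:
        """Deposit `tao_in` TAO and withdraw subnet tokens, keeping k constant."""
        if tao_in <= 0:
            raise ValueError("tao_in must be positive")
        k = self.tao_reserve * self.alpha_reserve
        new_tao = self.tao_reserve + tao_in
        new_alpha = k / new_tao
        alpha_out = self.alpha_reserve - new_alpha
        self.tao_reserve, self.alpha_reserve = new_tao, new_alpha
        return alpha_out


pool = ConstantProductPool(tao_reserve=1_000.0, alpha_reserve=10_000.0)
received = pool.buy_alpha(100.0)  # spot price moves from 0.1 TAO upward
```

Starting at 0.1 TAO per token, spending 100 TAO returns about 909 tokens (an average of about 0.11 TAO each), and the spot price rises to about 0.121 TAO. That price response is what lets early buyers of a subnet that attracts demand benefit from appreciation.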

What Is OpenAI: The Centralized AI Powerhouse

OpenAI represents the conventional approach to AI development - centralized control, massive capital investment, and proprietary model development. Founded in 2015 as a nonprofit research organization, OpenAI transitioned to a capped-profit structure in 2019 to attract investment. Today, it stands as one of the most well-funded AI companies globally, with Microsoft providing billions in infrastructure support.

The GPT model family exemplifies centralized AI development. Each iteration - from GPT-3 through GPT-4 and beyond - represents massive investments in training compute. Training GPT-4 reportedly cost over $100 million in computational resources. This capital intensity creates significant barriers to entry, concentrating AI capability within a handful of well-funded organizations.

OpenAI’s infrastructure operates through centralized data centers. The company controls training pipelines, model architectures, and deployment infrastructure. This centralization enables tight integration between components but creates single points of failure and dependency. Users access models through OpenAI’s API, with pricing based on token usage.

The organizational structure has evolved significantly. While originally founded as a nonprofit with open research principles, OpenAI’s partnership with Microsoft and transition to a “capped profit” model has led to increasing proprietary development. The release of GPT-4 omitted technical details that would enable independent verification or reproduction.

Market position demonstrates the centralized approach’s success. ChatGPT reached an estimated 100 million users within about two months of its November 2022 launch, at the time the fastest adoption recorded for a consumer application. Enterprise adoption of API access continues to grow, and OpenAI’s models power numerous third-party applications through API integrations. This scale creates feedback loops - more users generate more usage and feedback data, which helps improve the models further.

However, this success comes with trade-offs. Centralized control means OpenAI makes all significant decisions about model capabilities, safety, and access. The company’s content policies determine what users can create. Pricing changes affect entire application ecosystems. Outside contributors, such as users whose feedback helps improve the models, receive no direct economic benefit.

Scalability: Distributed Versus Centralized Architecture

Scalability represents one of the most significant differences between Bittensor’s decentralized approach and OpenAI’s centralized model. Each architecture presents distinct advantages and limitations that affect how each system handles growth.

Bittensor’s subnet architecture enables horizontal scaling. Adding new subnets increases network capacity without requiring changes to existing infrastructure. As of April 2026, the network maintains 128 active subnets with plans to expand to 256 later in 2026. Each subnet specializes in specific AI tasks, allowing the network to handle diverse workloads simultaneously. New subnets can launch to address emerging use cases, with low-performing subnets replaced through market competition.
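The cap-and-replace dynamic can be sketched as a fixed-size registry: once every slot is full, registering a new subnet deregisters the lowest-scoring one. This is a simplified model under stated assumptions; the single performance score per subnet, the function name, and the eviction rule are hypothetical, and the network’s real deregistration rules include details, such as immunity periods for new subnets, that are not modeled.

```python
from typing import Dict, Optional

MAX_SUBNETS = 128  # cap cited in the article; treated as a fixed constant here


def register_subnet(
    registry: Dict[str, float], name: str, score: float, cap: int = MAX_SUBNETS
) -> Optional[str]:
    """Register `name` with an initial performance `score`.

    Hypothetical model: if the registry is full, the lowest-scoring subnet
    is deregistered to make room. Returns the evicted subnet name, or None.
    """
    if name in registry:
        raise ValueError(f"{name} is already registered")
    evicted = None
    if len(registry) >= cap:
        evicted = min(registry, key=lambda subnet: registry[subnet])
        del registry[evicted]
    registry[name] = score
    return evicted


subnets: Dict[str, float] = {}
for name, score in [("text", 0.9), ("code", 0.7), ("data", 0.2)]:
    register_subnet(subnets, name, score, cap=3)
replaced = register_subnet(subnets, "vision", 0.5, cap=3)  # evicts "data"
```

Using a small cap of 3 keeps the example readable; with the cap full, the new "vision" subnet displaces "data", the weakest performer, while existing subnets are untouched.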

The decentralized nature provides resilience benefits. Because miners and validators run independently, no single operator’s failure takes the network down - it continues operating even if individual nodes go offline. Geographic distribution can reduce latency for some users while providing redundancy against regional outages. This resilience comes without requiring massive redundant infrastructure investments.

However, decentralized scaling faces coordination challenges. Network upgrades require consensus among participants. Security considerations introduce overhead that centralized systems avoid. The incentive mechanism must balance participant rewards against network sustainability, a balance that requires ongoing tuning.

OpenAI’s centralized architecture enables highly optimized scaling. The company can deploy massive compute clusters, optimizing hardware utilization across training and inference. Dedicated engineering teams focus exclusively on performance improvement. The tight integration between components enables optimizations impossible in distributed systems.

The trade-off is capital intensity. Scaling OpenAI’s infrastructure requires billions in ongoing investment. Data center expansion follows traditional capacity planning, with lead times measured in years. Geographic distribution is limited to regions where OpenAI chooses to deploy.

Direct performance comparisons are harder to draw. Bittensor subnets have demonstrated competitive performance on specific benchmarks, with some reportedly achieving results that rival centralized models. However, the comparison is complex - Bittensor’s distributed network optimizes for different metrics than OpenAI’s unified system.

| Aspect | Bittensor (Decentralized) | OpenAI (Centralized) |
| --- | --- | --- |
| Active Components | 128 subnets (expansion to 256 planned) | Single unified model family |
| Scaling Mechanism | Add new subnets | Increase compute capacity |
| Infrastructure Control | Distributed across participants | Centralized company control |
| Geographic Distribution | Global node network | Company-selected data centers, including Microsoft Azure |
| Upgrade Coordination | On-chain governance | Internal decision-making |
| Capital Requirements | Participant-funded | Billions in corporate investment |

Performance: Quality, Speed, and Reliability

Performance encompasses multiple dimensions - output quality, response speed, and reliability. Comparing Bittensor and OpenAI requires examining each dimension while acknowledging their different optimization targets.

Quality represents the most visible comparison point. OpenAI’s GPT-4 and its successors have achieved state-of-the-art results across many evaluations, demonstrating strong capabilities on reasoning, coding, and knowledge tasks. The company’s scale enables training on massive datasets with extensive human feedback. Bittensor’s network achieves competitive results on specific tasks through specialized subnets, though no single subnet matches the general capability of OpenAI’s flagship models.

The Bittensor approach emphasizes specialization. Subnets can optimize for specific domains rather than general capability. A subnet focused on code generation might outperform general models on programming tasks. This specialization enables targeted excellence while the network collectively provides broad capability.

Response latency differs significantly between systems. OpenAI’s centralized infrastructure enables consistent low-latency responses through optimized inference pipelines, and cloud regions worldwide, including Microsoft Azure’s, keep latency reasonable for most users. Bittensor’s decentralized network introduces latency variability depending on node distribution and network conditions.

However, Bittensor’s architecture enables optimization strategies unavailable to centralized systems. Multiple miners can compete to serve the same queries, with validators scoring their responses and, in subnets that measure it, rewarding speed. Users can choose between subnets based on their speed requirements. The competitive dynamic creates incentives for performance optimization.
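The racing pattern behind this competition can be illustrated with Python’s asyncio: a query fans out to several miners, the first response to arrive is used, and slower requests are cancelled. This sketch assumes speed is the only criterion; the miners and their latencies are simulated, the names are hypothetical, and it does not use the Bittensor SDK.

```python
import asyncio
from typing import Dict, Tuple


async def query_miner(name: str, latency: float, prompt: str) -> Tuple[str, str]:
    """Simulated miner (hypothetical): waits `latency` seconds, then answers."""
    await asyncio.sleep(latency)
    return name, f"{name} answer to {prompt!r}"


async def fastest_response(miners: Dict[str, float], prompt: str) -> Tuple[str, str]:
    """Send `prompt` to every miner; return the first (name, answer) to finish."""
    tasks = [
        asyncio.create_task(query_miner(name, latency, prompt))
        for name, latency in miners.items()
    ]
    done, pending = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
    for task in pending:
        task.cancel()  # slower miners get no credit for this query
    await asyncio.gather(*pending, return_exceptions=True)  # let cancellations finish
    return done.pop().result()


if __name__ == "__main__":
    winner, answer = asyncio.run(
        fastest_response({"miner-a": 0.30, "miner-b": 0.05, "miner-c": 0.20}, "hello")
    )
    print(winner, "->", answer)
```

Here miner-b, with the lowest simulated latency, wins. In practice a validator would also check answer quality before crediting the fastest responder, which is why speed alone rarely determines rewards.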

Reliability presents distinct trade-offs. OpenAI’s centralized control enables consistent service levels but creates single points of failure. API outages affect all users simultaneously. Bittensor’s distributed design provides resilience against individual node failures but introduces complexity that can affect consistency.

Cost structures differ fundamentally. OpenAI operates through API pricing, with costs scaling with usage. This model provides predictability for users willing to pay but creates barriers for high-volume applications. Bittensor’s token-based economy means costs depend on TAO value and subnet dynamics, creating different cost exposure for participants.

The competitive landscape is evolving rapidly. Bittensor’s subnet tokens reached a combined market capitalization of approximately $1.4 billion as of March 2026, a sign of substantial market interest. Ecosystem growth has also been rapid, with a reported 84% quarter-over-quarter increase in Q3 2025.

Economic Models and Incentive Structures

The economic foundations underlying Bittensor and OpenAI represent fundamentally different philosophies about how AI development should be funded and who should benefit from its success.

Bittensor’s crypto-economic model distributes value to participants. Miners earn TAO for providing computational resources and AI capabilities. Validators earn through stake-based emissions. Delegators participate by staking to trusted validators. The TAO token’s fixed supply and halving mechanism create scarcity similar to Bitcoin.
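Delegation can be sketched as a two-step split: the validator keeps a commission (its "take") from the emission it earns, and the remainder is shared among everyone staked to it in proportion to stake. This is a hypothetical, simplified accounting; the 18% take is an assumed example value, the validator’s own stake is treated like a delegator’s, and the network’s exact calculation may differ.

```python
from typing import Dict, Tuple


def split_validator_emission(
    emission: float, stakes: Dict[str, float], take: float = 0.18
) -> Tuple[Dict[str, float], float]:
    """Distribute one validator's emission among everyone staked to it.

    Hypothetical, simplified accounting: the validator first keeps `take`
    of the emission as commission, then the remainder is shared pro rata
    by stake. Returns (payout per staker, commission kept by the validator).
    """
    if not 0.0 <= take <= 1.0:
        raise ValueError("take must be between 0 and 1")
    total_stake = sum(stakes.values())
    if total_stake <= 0:
        raise ValueError("total stake must be positive")
    commission = emission * take
    shared = emission - commission
    payouts = {who: shared * stake / total_stake for who, stake in stakes.items()}
    return payouts, commission


payouts, commission = split_validator_emission(
    10.0, {"validator": 1_000.0, "alice": 3_000.0, "bob": 1_000.0}
)
```

From 10 TAO of emission, the validator keeps 1.8 TAO as commission, and the remaining 8.2 TAO is split 1.64 / 4.92 / 1.64 by stake, so the validator earns 3.44 TAO in total and a delegator like alice earns 4.92 TAO without running any infrastructure.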

This distribution model has profound implications. Contributors directly benefit from network growth through token appreciation. Early participants in successful subnets gain through token allocation. Aligning participant incentives with network success is intended to sustain the network’s economics without corporate funding.

However, crypto-economic models face challenges. Token volatility creates uncertainty for participants. Regulatory uncertainty affects token-based systems globally. The complexity of incentive mechanisms can produce unintended behaviors. Market dynamics don’t always align with network utility.

OpenAI’s centralized model operates through traditional corporate economics. The company raises capital from investors, spends on development, and captures value through API pricing. This approach provides predictable funding for large-scale development but concentrates value within the company and its shareholders.

The partnership with Microsoft illustrates centralized AI economics. Microsoft provides billions in compute infrastructure in exchange for exclusive deployment rights. This vertical integration enables massive investment but creates dependency for users on Microsoft’s infrastructure choices.

Market positioning reflects these economic differences. OpenAI commands significant enterprise value through its proprietary position. Bittensor’s market cap reached approximately $3.43 billion as of April 2026, representing roughly 20% of the AI crypto sector - a meaningful position but far smaller than OpenAI’s enterprise value.

Network Effects and Ecosystem Development

Network effects drive long-term success in both systems, though through different mechanisms. Understanding these dynamics reveals how each approach might evolve.

OpenAI benefits from classic network effects. More users generate more usage and feedback data; consumer ChatGPT conversations may be used for training unless users opt out, while API data is not used for training by default. Third-party developers build applications on the platform, increasing its utility. Enterprise adoption creates switching costs that retain users. The brand recognition from ChatGPT drives continued growth.

These network effects are reinforced by capital availability. Revenue from API sales funds model improvements, attracting more users. The cycle creates increasing returns that benefit the centralized player. Competitors must match both capability and network effects.

Bittensor’s network effects emerge from its decentralized structure. More subnets create a more comprehensive AI marketplace. Each subnet’s success attracts participants to the broader ecosystem. The dTAO mechanism means subnet growth contributes to TAO value, reinforcing network participation.

The subnet model creates unique ecosystem dynamics. Successful subnets demonstrate viable models, attracting new subnet launches. Competition between subnets drives quality improvement. The current 128-subnet cap creates scarcity that rewards early participation in successful subnets.

Integration developments affect both systems. Bittensor’s subnets increasingly connect with traditional blockchain and AI infrastructure. OpenAI’s enterprise features expand through Microsoft partnerships. The competitive landscape continues evolving as both approaches mature.

Should I Invest in TAO on KuCoin

For traders evaluating Bittensor ecosystem exposure, understanding the distinction between TAO and subnet tokens is essential for portfolio construction.

Bullish Considerations for TAO

- Ecosystem diversification: TAO provides exposure to the entire Bittensor network of 128 subnets, capturing broad ecosystem growth rather than individual subnet performance
- Proven network: Bittensor has established itself as the leading decentralized AI protocol with significant market validation
- dTAO mechanism: The dynamic TAO system means each successful subnet launch potentially adds value to the TAO token
- Growing institutional interest: Decentralized AI as a sector has attracted increasing institutional attention, with major firms exploring Bittensor partnerships

Bullish Considerations for Bittensor Subnet Tokens

- Higher risk, higher return: Individual subnet tokens can experience significant appreciation when subnets achieve success
- Targeted exposure: Traders can focus on specific AI use cases rather than general ecosystem exposure
- dTAO liquidity: The automated market maker provides trading opportunities beyond TAO

Risk Factors to Consider

- Centralized AI competition: Major tech companies continue investing billions in AI development, potentially outpacing decentralized alternatives
- Regulatory uncertainty: Both cryptocurrency and AI face evolving regulatory frameworks globally
- Technical challenges: Decentralized AI must overcome significant technical hurdles to match centralized performance
- Crypto market volatility: TAO and subnet tokens remain highly volatile compared to traditional assets
- Network execution: Bittensor must continue executing on its roadmap while maintaining network quality

Strategic Framework

Position sizing should reflect the binary nature of early-stage technology adoption. Consider TAO for diversified ecosystem exposure with relatively lower risk, and subnet tokens for targeted exposure with higher risk but potentially higher returns. The Bittensor ecosystem may warrant a meaningful allocation for those bullish on decentralized AI infrastructure, but allocation size should reflect overall portfolio risk tolerance.

How to Trade TAO on KuCoin

Step 1: Create Your KuCoin Account

If you are ready to trade TAO, the first step is creating your KuCoin account. New users can register at KuCoin and Get Up to 11,000 USDT in New User Rewards - a substantial bonus that can boost your initial trading capital. Simply visit the KuCoin website or download the mobile app, complete the registration process with your email or phone number, and verify your identity to unlock these rewards. The registration process takes just a few minutes, and the welcome bonus provides an excellent starting point for exploring TAO trading opportunities.

Step 2: Execute Your Trade

Once your account is set up, search for “TAO/USDT” in KuCoin’s trading interface. TAO typically offers strong liquidity for most position sizes, though liquidity can vary with market conditions. During periods of high volatility, consider using limit orders rather than market orders to manage slippage. Evaluate your entry point based on current market conditions and your risk tolerance before executing the trade.
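To see why limit orders help when liquidity is thin, the sketch below walks a hypothetical order book to estimate the average fill price of a market buy and its slippage against the best ask. The book levels and function name are invented for illustration; real depth on any exchange, including KuCoin, changes constantly.

```python
from typing import List, Tuple


def market_buy_fill(
    asks: List[Tuple[float, float]], quantity: float
) -> Tuple[float, float]:
    """Simulate a market buy against ask levels (price, size), best price first.

    Returns (average fill price, slippage versus the best ask as a fraction).
    """
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    remaining, cost = quantity, 0.0
    for price, size in asks:
        fill = min(remaining, size)  # take as much as this level offers
        cost += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("order book too thin to fill the order")
    average = cost / quantity
    return average, average / asks[0][0] - 1.0


# Hypothetical book: 2 units at 100.0, 3 at 100.5, 5 at 102.0
avg_price, slippage = market_buy_fill([(100.0, 2.0), (100.5, 3.0), (102.0, 5.0)], 6.0)
```

Buying 6 units at market fills at an average of about 100.58, roughly 0.58% above the best ask. A limit buy capped at 100.5 would instead fill at most 5 units and wait for the rest, trading fill certainty for price control.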

Step 3: Position Management

Given the volatility inherent in AI crypto assets, establish clear profit targets and stop-loss levels before entering a position. The Bittensor ecosystem continues evolving rapidly, with new subnet launches and ecosystem developments occurring regularly. Monitor Bittensor documentation, subnet launches, and broader AI market sentiment. Adjust your position size based on ongoing risk assessment rather than emotional responses to price movements.
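Setting the stop-loss before entry also determines position size. One common rule of thumb, fixed-fractional sizing, risks a set fraction of the account on each trade: size = (account × risk fraction) / (entry − stop). The 1% risk fraction and the prices below are illustrative assumptions, not recommendations.

```python
def position_size(account: float, risk_fraction: float, entry: float, stop: float) -> float:
    """Units to buy so that hitting `stop` loses `risk_fraction` of `account`.

    Fixed-fractional sizing for a long position; all inputs are user choices.
    """
    if not 0 < risk_fraction < 1:
        raise ValueError("risk_fraction must be between 0 and 1")
    if stop >= entry:
        raise ValueError("for a long position the stop must be below entry")
    risk_per_unit = entry - stop  # loss per unit if the stop is hit
    max_loss = account * risk_fraction  # total loss you are willing to accept
    return max_loss / risk_per_unit


units = position_size(account=10_000.0, risk_fraction=0.01, entry=100.0, stop=90.0)
```

Risking 1% of a 10,000 USDT account with a stop 10 below entry gives 10 units, a 1,000 USDT position whose worst planned loss is 100 USDT. A profit target can be set the same way, for example entry + 2 × (entry − stop) for a 2:1 reward-to-risk ratio.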

Conclusion

The comparison between Bittensor’s decentralized approach and OpenAI’s centralized model reveals fundamental trade-offs in AI development. OpenAI’s centralized architecture enables massive scale, optimized performance, and rapid iteration through billions in capital investment. However, this concentration creates single points of control and excludes contributors from economic participation.

Bittensor’s decentralized model distributes AI development across global participants, aligning incentives through crypto-economic mechanisms. The subnet architecture enables specialized capability while maintaining network-level integration. With 128 active subnets and a combined subnet-token market capitalization of approximately $1.4 billion as of March 2026, the approach has demonstrated meaningful market validation.

Both approaches likely coexist rather than one displacing the other. Centralized AI will continue serving use cases requiring maximum capability. Decentralized alternatives will appeal to those seeking economic participation and architectural alternatives. The AI industry is large enough to accommodate multiple approaches.

For investors, TAO provides diversified ecosystem exposure. Individual subnet tokens offer targeted opportunities with higher risk. Both carry significant crypto market risk.

FAQs

Q: What is the main difference between Bittensor and OpenAI?

A: Bittensor is a decentralized AI network where participants earn TAO tokens for contributing computational resources and AI capabilities. OpenAI is a centralized company that develops proprietary AI models through corporate investment and research. The fundamental difference is control - Bittensor distributes decision-making while OpenAI maintains central control.

Q: How many subnets does Bittensor have?

A: As of April 2026, Bittensor supports 128 active subnets, each specialized for different AI tasks. Registration is currently capped at 128 subnets, so new subnets replace low performers. Expansion to 256 subnets is projected for later in 2026.

Q: Is Bittensor’s AI performance comparable to OpenAI’s models?

A: Bittensor subnets have demonstrated competitive performance on specific benchmarks, with some achieving results rivaling centralized models in targeted domains. However, no single subnet currently matches the general capability of OpenAI’s flagship GPT models across all tasks. The comparison is complex due to different optimization targets.

Q: What is dTAO in the Bittensor ecosystem?

A: Dynamic TAO (dTAO) was introduced in February 2025, giving each subnet its own token and an automated market maker pool that prices it against TAO. This innovation created liquid markets for subnet participation and added token appreciation as a potential return source alongside service rewards.

Q: How does Bittensor’s scalability compare to centralized AI systems?

A: Bittensor scales horizontally through subnet addition - new subnets can launch to address emerging use cases without disrupting existing infrastructure. OpenAI scales vertically through compute addition, requiring massive capital investment. Each approach has trade-offs around coordination complexity versus capital intensity.