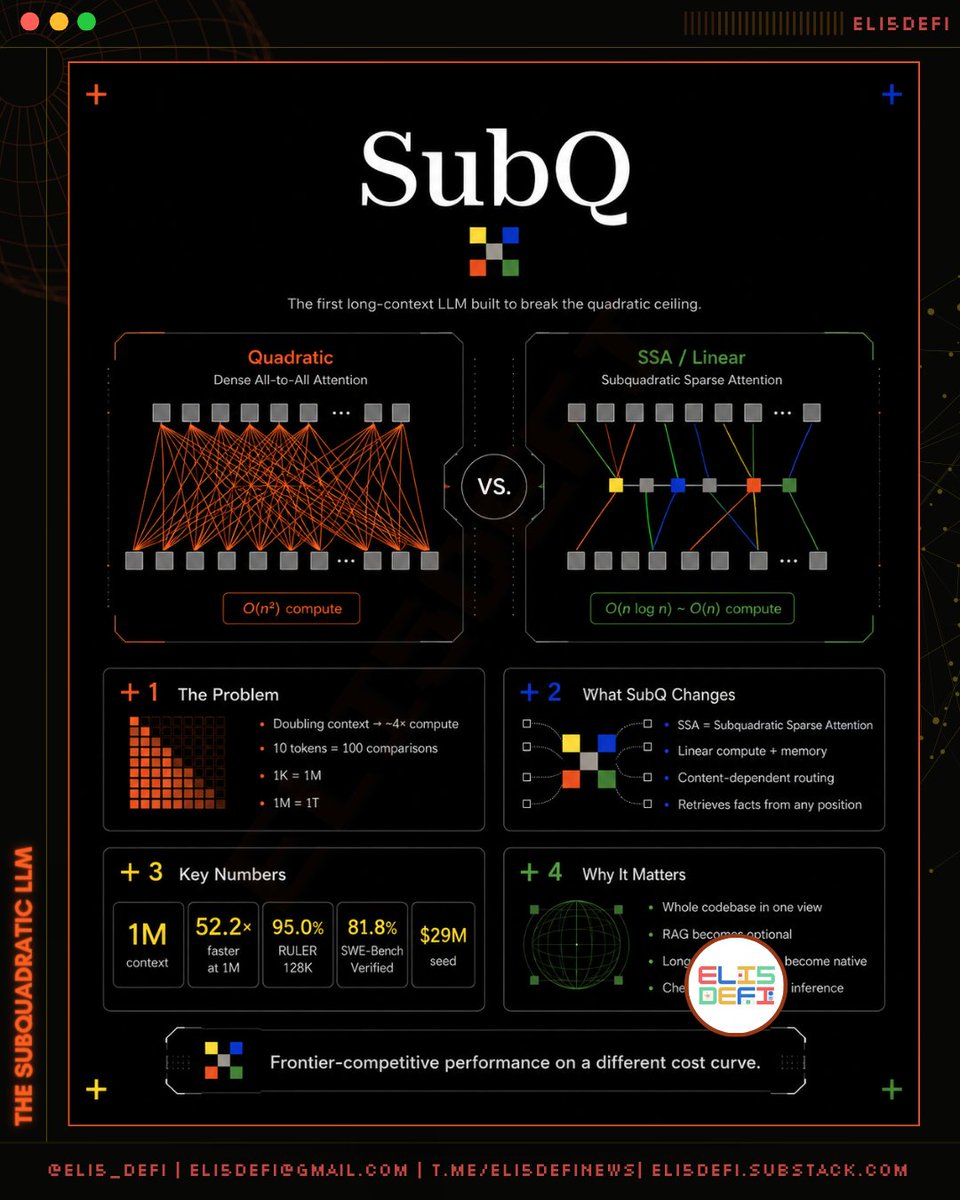

A new AI company called @subquadratic just released a model that breaks one of the oldest limits in modern AI. To understand why it matters, you need to understand a single math problem that's been silently shaping every chatbot you've ever used. - ➠ The Problem: AI Reads in Pairs, and Pairs Don't Scale Every modern LLM (ChatGPT, Claude, Gemini) reads text by checking how every word relates to every other word. That sounds fine until you do the math: ▸ 10 words → 100 comparisons ▸ 1,000 words → 1 million comparisons ▸ 1 million words → 1 trillion comparisons Doubling the input doesn't double the work. It quadruples it. This is called quadratic scaling, and it's been baked into AI since 2017. What that means for you: ▸ Long documents get expensive fast ▸ Models often miss facts buried deep in long inputs ▸ Whole codebases or research libraries don't fit Now you know why, the longer the context, the dumber and more expensive your LLMs become. - ➠ How Today's AI Hides the Problem The industry built workarounds instead of fixing the math: ▸ RAG: a search engine grabs a few relevant snippets, feeds only those to the model ▸ Chunking: long documents get sliced into small pieces ▸ Agent systems: multiple AI calls handle different parts, glued together with code ▸ FlashAttention: clever memory tricks that make the same expensive math run faster These work but none of them fix the actual problem. The whole modern AI stack (vector databases, retrieval pipelines, prompt engineering) exists because models can't just hold the whole thing in view. — ➠ What SubQ Does Differently SubQ uses a new approach called SSA (Subquadratic Sparse Attention). The idea in one sentence: instead of comparing every word to every other word, the model figures out which words actually matter for the question, and ignores the rest. That changes scaling from quadratic to linear. Doubling the input now doubles the work instead of quadrupling it. The hard part isn't the idea since people have tried this before. Every previous attempt sacrificed something: either accuracy, or the ability to find facts buried far back in the text, or the efficiency itself. Subquadratic, co-founded by @alex_whedon claims they finally cracked all three at once. — ➠ The Receipts Third-party verified benchmarks: ▸ Ties Claude Opus 4.6 on RULER 128K (a long-context reasoning test) ▸ Beats Opus 4.7, GPT 5.4, and Gemini 3.1 Pro on MRCR v2 (multi-evidence retrieval), but loses to Opus 4.6 and GPT 5.5 ▸ Beats Opus 4.6 and Gemini 3.1 Pro on SWE-Bench (real coding tasks), trails Opus 4.7 ▸ 52× faster than FlashAttention at 1 million tokens ▸ A research version handles 12 million tokens with roughly 1,000× less attention compute than other frontier models To put it succinctly, this is not "best model in the world." It's frontier-level accuracy at a fundamentally cheaper cost curve. — ➠ Where Sam Altman Comes In Two of Altman's biggest public claims point at the same problem SubQ is solving. On cost: In his February 2025 blog post Three Observations, Altman wrote that the cost of using AI drops about 10× every 12 months. He called this "unbelievably stronger" than Moore's Law. His thesis: cheaper inference is the dominant force shaping what AI can become. On size: Going back to 2023, Altman has said the era of bigger and bigger models is ending, and the real competition is capability per dollar. He compared the parameter count race to the GHz race in 1990s chips. Wrong axis. SubQ takes both of those bets literally. Their tagline is Efficiency is intelligence. The catch: Altman's stated path to cheaper AI is hardware progress, software optimization, and model distillation. He has not publicly endorsed redesigning the attention math itself. So SubQ's pitch aligns with his economics, but it's also a bet that the big labs left an architectural dollar on the table. — ➠ Why This Matters If SubQ delivers at production scale: ▸ Codebases as a single conversation. No more multi-agent systems juggling files. The model holds the whole repo. ▸ RAG becomes optional. A lot of today's AI infrastructure exists to compensate for the quadratic ceiling. Remove the ceiling, and the scaffolding becomes baggage. ▸ Long-running agents stop being a hack. Days-long sessions with persistent memory become native. ▸ New apps become possible. Workloads that were too expensive (full document review, exhaustive code search, compliance scanning) become routine. — ➠ The Honest Caveats ▸ It's in private beta. Real-world reliability hasn't been stress-tested. So until then, treat the announcement as a teaser, even many concerned that this is performative act only. ▸ The MRCR v2 score (65.9%) is good but trails Opus 4.6 (78.3%) and GPT 5.5 (74%). SSA is more efficient, not strictly more capable. ▸ Benchmarks are self-published with third-party verification. Academic replication is the real test. ▸ The 12M-token result is a research model, not the shipping product (which is 1M). — ➠ Bottom Line For nine years, every transformer-based AI has paid the same quadratic tax. Subquadratic claims they finally figured out how not to. The benchmarks suggest they're at least directionally right. Altman has been telling the industry for three years that capability per dollar is the new battleground. SubQ is one of the first companies trying to win that fight by changing the underlying math instead of stacking workarounds. Whether they pull it off is now a public empirical question.

Share

Source:Show original

Disclaimer: The information on this page may have been obtained from third parties and does not necessarily reflect the views or opinions of KuCoin. This content is provided for general informational purposes only, without any representation or warranty of any kind, nor shall it be construed as financial or investment advice. KuCoin shall not be liable for any errors or omissions, or for any outcomes resulting from the use of this information.

Investments in digital assets can be risky. Please carefully evaluate the risks of a product and your risk tolerance based on your own financial circumstances. For more information, please refer to our Terms of Use and Risk Disclosure.