Author:Tina, DongmeiInfoQ

1. After nearly three years, Musk opens up the X recommendation algorithm again.

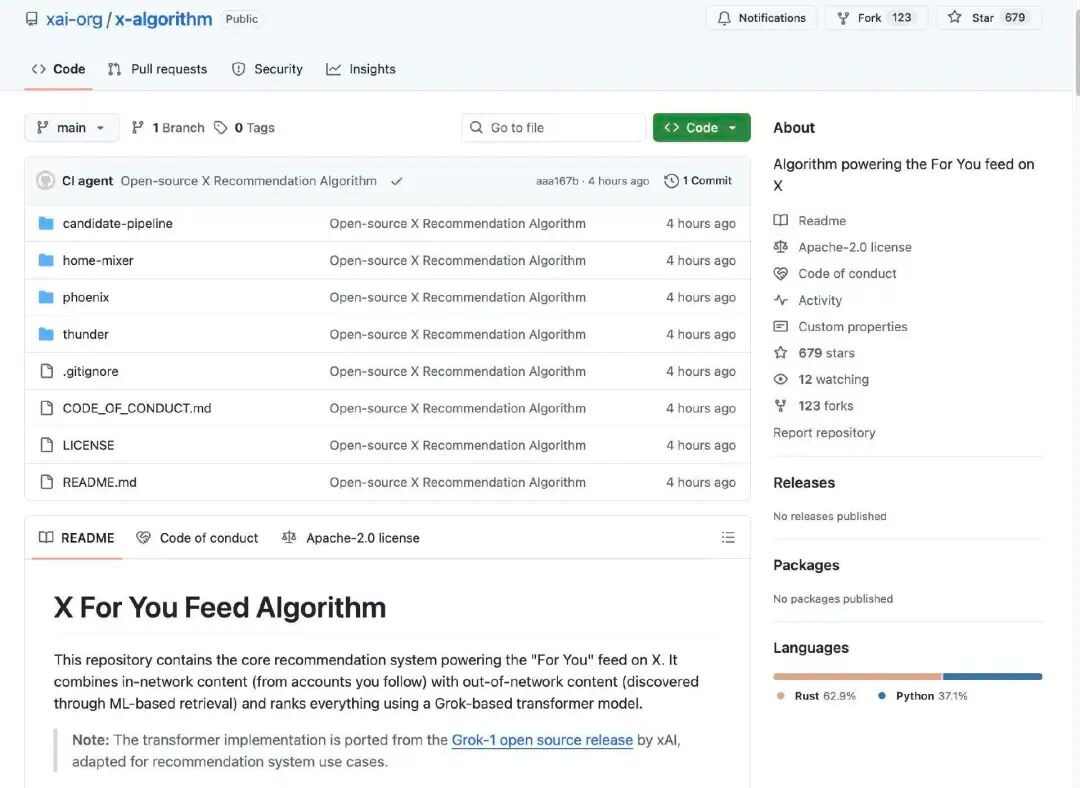

Just now, the X engineering team posted on X to announce the open-sourcing of X's recommendation algorithm. According to the introduction, this open-source library includes the core recommendation system that powers the "For You" feed on X. It combines in-network content (from accounts users follow) with out-of-network content (discovered through machine learning-based retrieval), and ranks all content using a Transformer model based on Grok. In other words, the algorithm employs the same Transformer architecture as Grok.

Open source address: https://x.com/XEng/status/2013471689087086804

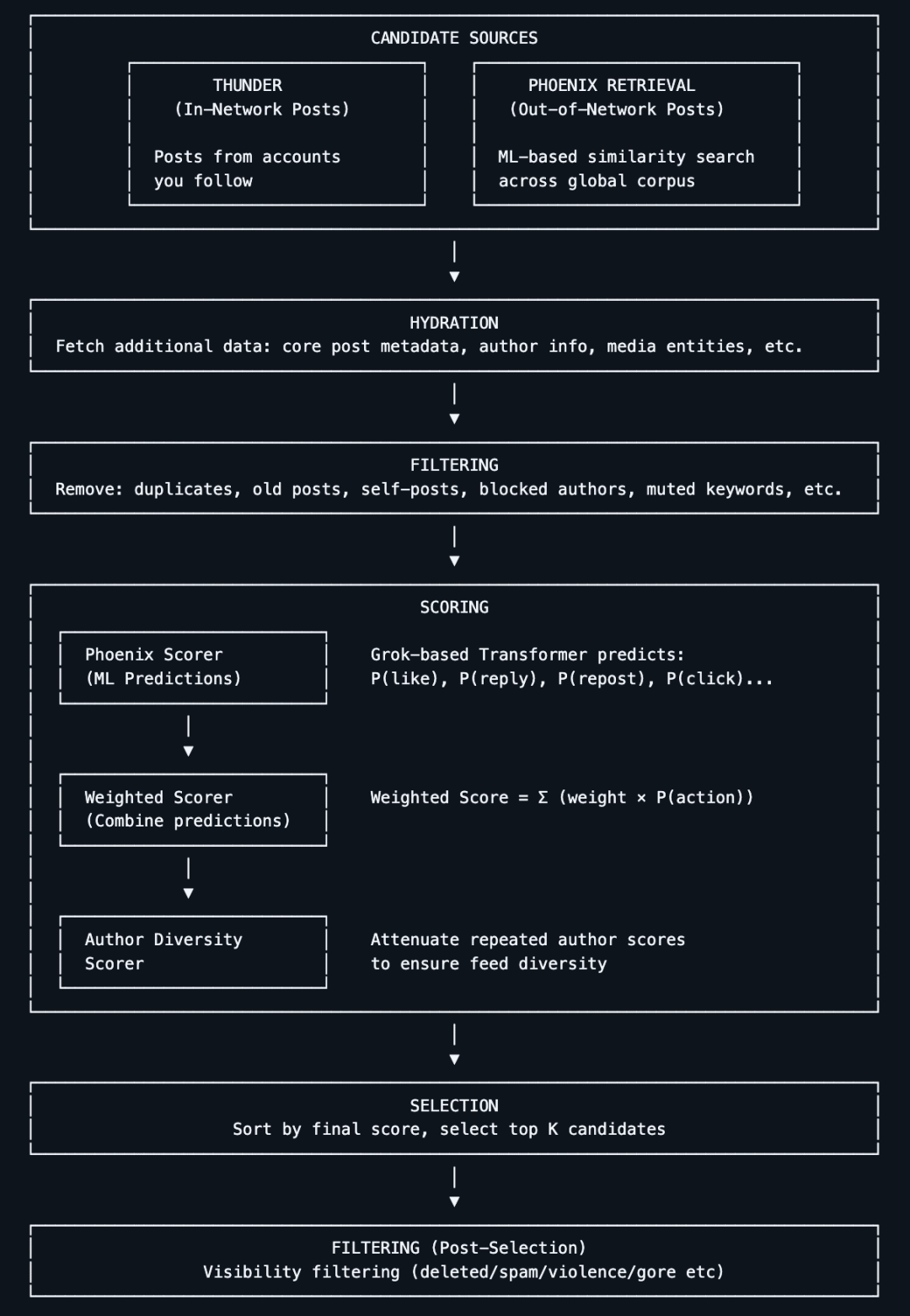

X's recommendation algorithm is responsible for generating the content users see on the home screen."For You" feed contentIt obtains candidate posts from two main sources:

The account you are following (In-Network / Thunder)

Other posts found on the platform (Out-of-Network / Phoenix)

These candidate contents are then uniformly processed, filtered, and sorted by relevance.

So, what is the core architecture and operational logic of the algorithm?

The algorithm first crawls candidate content from two types of sources:

Content from Followed Accounts: Posts published by accounts you have actively followed.

Non-following content: Posts that may interest you, retrieved by the system from the entire content library.

The goal of this stage is "to find posts that may be relevant."

The system automatically removes low-quality, duplicate, non-compliant, or inappropriate content. For example:

Content from blocked accounts

Topics that users are clearly not interested in

Illegal, outdated, or invalid posts

This ensures that only valuable candidate content is processed during the final sorting.

The core of the algorithm open-sourced this time is that the system uses a Grok-based Transformer model (similar to a large language model / deep learning network) to score each candidate post. The Transformer model predicts the probability of each type of user behavior (likes, replies, shares, clicks, etc.) based on the user's historical actions. Finally, these behavior probabilities are combined with weights into a comprehensive score, and posts with higher scores are more likely to be recommended to the user.

This design essentially eliminates the traditional manual feature extraction approach, instead using an end-to-end learning method to predict user interests.

This is not Musk's first time open-sourcing the X recommendation algorithm.

As early as March 31, 2023, as promised when Musk acquired Twitter, he officially open-sourced part of Twitter's source code, including the algorithm for recommending tweets in users' timelines.On the day of its open source release, the project received over 10,000 stars on GitHub.

At that time, Musk stated on Twitter that this release was"Most recommendation algorithms"The rest of the algorithms will also be released sequentially. He also mentioned that he hopes "independent third parties can determine with reasonable accuracy the content that Twitter might show to users."

In the Space discussion about the algorithm release, he said the open-source plan aims to make Twitter the "most transparent system on the internet" and as robust as the most famous and successful open-source project, Linux. "The overall goal is to let the users who continue to support Twitter enjoy it to the fullest extent possible."

It has been more than three years since Musk first open-sourced the X algorithm. As a super KOL in the tech community, Musk has long been thoroughly promoting this open-source initiative.

On January 11, Musk posted on X that he would open-source the new X algorithm—including all the code used to determine which organic search content and ads to recommend to users—within seven days.

This process will repeat every four weeks, accompanied by detailed developer notes to help users understand what changes have occurred.

Today, his promise was fulfilled once again.

2. Why did Musk open source it?

When Elon Musk mentions "open source" again, the first reaction from the outside is not technological idealism, but rather practical pressure.

Over the past year, X has repeatedly found itself in controversy due to its content distribution mechanism. The platform has been widely criticized for favoring and amplifying right-wing viewpoints at the algorithmic level. This bias is not considered an isolated incident but is perceived as a systemic issue. A research report released last year pointed out that X's recommendation system has developed a noticeable new bias in the dissemination of political content.

At the same time, some extreme cases have further fueled external doubts. Last year, an unverified video involving the shooting of American right-wing activist Charlie Kirk quickly spread on the X platform, causing a public outcry. Critics argue that this not only exposed the failure of the platform's content moderation mechanisms, but also once again highlighted how algorithms determine "what to amplify and what not to amplify." Hidden power.

Against this backdrop, Musk's sudden emphasis on algorithm transparency is hard to be simply interpreted as a purely technical decision.

3. What do netizens think?

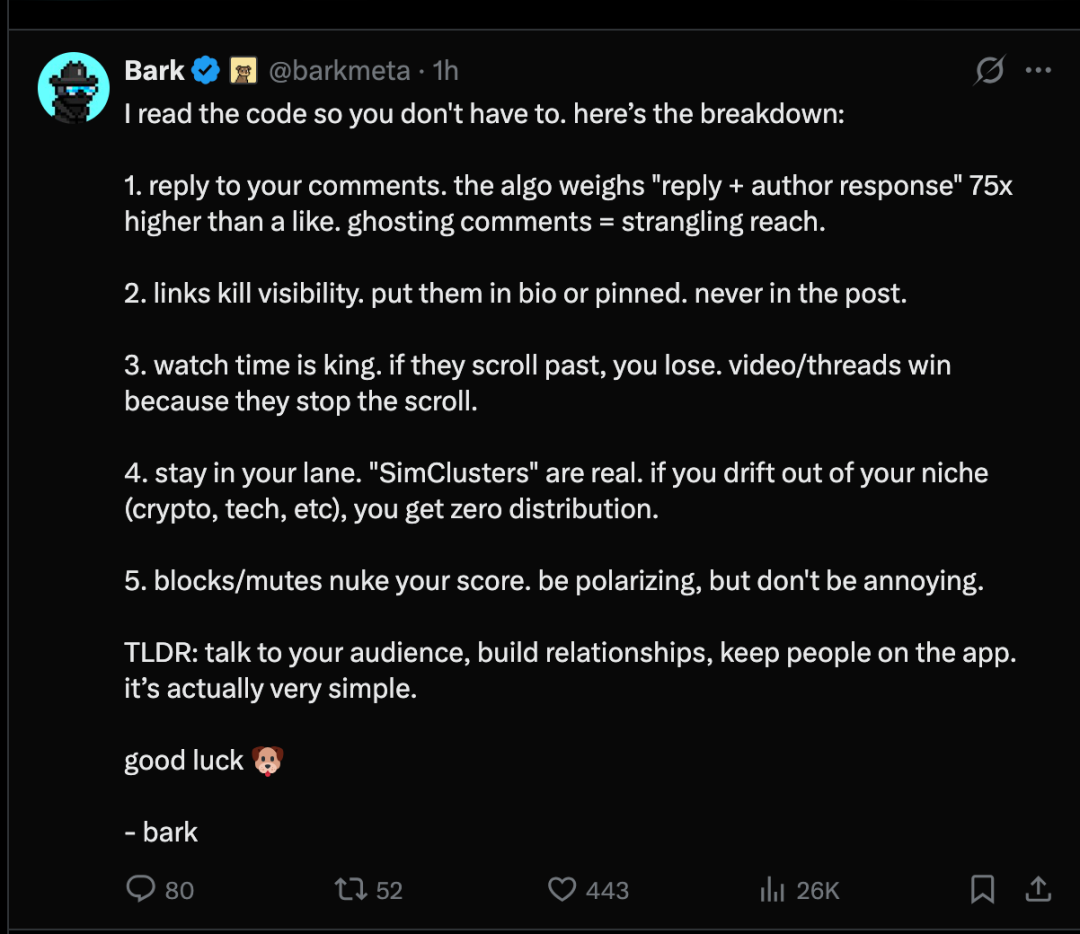

After X's recommendation algorithm was open-sourced, on the X platform, users summarized the recommendation mechanism into the following 5 points:

- Reply to your commentThe algorithm weights "replies + author responses" 75 times more than likes. Not replying to comments can significantly affect visibility.

- Links can reduce exposure.You should place the link in your personal profile or in a pinned post. Under no circumstances should you put it in the main body of a post.

- Viewing duration is crucial.If they swipe past your screen, you won't capture their attention. Videos/posts gain high engagement because they can make users stop scrolling.

- Stay committed to your field."Simulated clusters" are real. If you deviate from your niche (cryptocurrency, tech, etc.), you will not be able to obtain any distribution channels.

- Blocking / being silent will greatly reduce your score.It should be controversial, but not annoying.

In short: Communicate with your audience, build relationships, and keep users within the app. It's actually quite simple.

Some netizens have also noticed that although the architecture is open source, some components have not yet been open-sourced. The netizen stated that this release is essentially a framework without an engine. Specifically, what is missing?

Missing weight parameter - The code confirms "positive actions add points" and "negative actions deduct points," but unlike the 2023 version, the specific numerical values have been removed.

Hide model weights - Does not include the internal parameters and computations of the model itself.

Unreleased training data - We have no knowledge about the data used to train the model, the sampling methods for user behavior, or how "good" and "bad" samples were constructed.

For the average X user, the platform's open-sourcing of its algorithm would not have a significant impact. However, greater transparency could help explain why some posts gain visibility while others are ignored, and allow researchers to study how the platform ranks content.

4. Why is the recommendation system a key battleground?

In most technical discussions,Recommendation SystemIt is often regarded as part of the backend engineering—low-profile, complex, and rarely in the spotlight. However, if one truly examines how the business operations of internet giants function, it becomes clear that the recommendation system is not a marginal module, but rather an "infrastructure-level component" that supports the entire business model. For this reason, it can be called the "silent giant" of the internet industry.

Public data has repeatedly confirmed this. Amazon has disclosed that approximately 35% of purchases on its platform directly result from its recommendation system. Netflix is even more aggressive, with about 80% of viewing time driven by its recommendation algorithms. YouTube is similarly dependent, with around 70% of viewing activity attributed to its recommendation system, especially the news feed. As for Meta, although it has never provided an exact percentage, its technical team has mentioned that approximately 80% of the computing cycles in the company's internal computing clusters are used to support recommendation-related tasks.

What do these numbers mean?Removing the recommendation system from these products is almost equivalent to pulling out the foundation.Take Meta as an example; ad delivery, user engagement time, and commercial conversion are almost entirely built upon recommendation systems. These systems not only determine what users "see," but more importantly, they directly determine "how the platform makes money."

Yet, it is precisely such a life-and-death system that has long faced the problem of extremely high engineering complexity.

In traditional recommendation system architectures, it is difficult for a single unified model to cover all scenarios. Real-world production systems are often highly fragmented. Take companies like Meta, LinkedIn, and Netflix as examples: behind a complete recommendation pipeline, there are typically 30 or even more specialized models running simultaneously: retrieval models, coarse-ranking models, fine-ranking models, and re-ranking models, each optimized for different objective functions and business metrics. Behind each model, there is often one or even multiple teams responsible for feature engineering, training, hyperparameter tuning, deployment, and continuous iteration.

The cost of this approach is evident: it involves complex engineering, high maintenance costs, and difficulties in cross-task collaboration. Once someone proposes the idea of "can a single model address multiple recommendation problems," it signifies a reduction in system complexity by orders of magnitude. This is precisely the long-sought but difficult-to-achieve goal for the industry.

The emergence of large language models provides a new possible path for recommendation systems.

Large Language Models (LLMs) have already demonstrated in practice that they can become extremely powerful general-purpose models: they exhibit strong capabilities in transferring knowledge across different tasks, and their performance continues to improve as data scale and computational power increase. In contrast, traditional recommendation models are often "task-specific," making it difficult to share capabilities across multiple scenarios.

More importantly, a single large model brings not only engineering simplification but also the potential for "cross-learning." When the same model handles multiple recommendation tasks simultaneously, signals from different tasks can complement each other. As the data scale grows, the model can evolve more holistically. This is precisely the characteristic that recommendation systems have long desired but found difficult to achieve through traditional approaches.

What has LLM changed? It has actually changed things from feature engineering to comprehension ability.

From a methodological perspective, the most significant change that LLMs bring to recommendation systems occurs in the core step of "feature engineering."

In traditional recommendation systems, engineers first manually construct a large number of signals, such as user click history, dwell time, similar user preferences, content tags, and so on. Then, they explicitly instruct the model to "make judgments based on these features." The model itself does not understand the semantics of these signals; it merely learns mapping relationships in the numerical space.

After introducing language models, this process becomes highly abstracted. You no longer need to specify each signal individually, such as "pay attention to this signal, ignore that signal." Instead, you can directly describe the problem to the model: "This is a user, this is a piece of content; this user has previously liked similar content, and other users have also given positive feedback on this content—now please determine whether this content should be recommended to this user."

Language models themselves already possess comprehension capabilities; they can independently determine which information constitutes important signals and how to integrate these signals to make decisions. In a sense, they are not merely executing recommendation rules, but actually "understanding the act of recommending."

The source of this capability lies in the fact that LLMs are exposed to massive and diverse data during the training phase, enabling them to more easily capture subtle yet important patterns. In contrast, traditional recommendation systems must rely on engineers to explicitly enumerate these patterns; once any are missed, the model cannot perceive them.

From the backend perspective, this kind of change is not unfamiliar. Just like when you ask a question to GPT, it generates a response based on the contextual information; similarly, when you ask it, "Will I be interested in this content?" it can also make a judgment based on the available information. To some extent, language models inherently possess the capability of "recommendation."