Could AI really have saved a toilet company?

Japan’s premium smart toilet manufacturer TOTO has seen its stock price surge over the past few months—not because its toilets are selling better, but because of a hidden business: high-purity ceramic electrostatic chucks, which are critical consumables used to hold wafers in place during chip manufacturing. TOTO has achieved a precision level of 1/80 the thickness of a human hair and boasts the highest purity in the industry.

Amid a surge in demand for memory chips and aggressive capacity expansion by upstream manufacturers, this business has become indispensable. As a result, investment banks such as Goldman Sachs have raised their price targets on TOTO, simply because orders for electrostatic chucks are already booked through 2027. This segment now accounts for over 40% of TOTO’s operating profit.

When even a toilet company has become an AI-related stock, it’s clear just how hot the AI storage sector has become. Behind the soaring stock prices of major storage players like Samsung, SK Hynix, Micron, and SanDisk is the most severe supply-demand imbalance the global memory chip market has seen in forty years.

In this article, we’ll break down this round of the storage “super cycle” and take an in-depth look with Samsung insiders and Wall Street investors: why this cycle is different from previous ones, why storage is so critical to the AI industry, how AI giants like Google are working to reduce their dependence on storage, and how long this shortage cycle will last—and how it will impact you and me.

01 Surge of over 1800%: HBM, More Expensive Than Gold

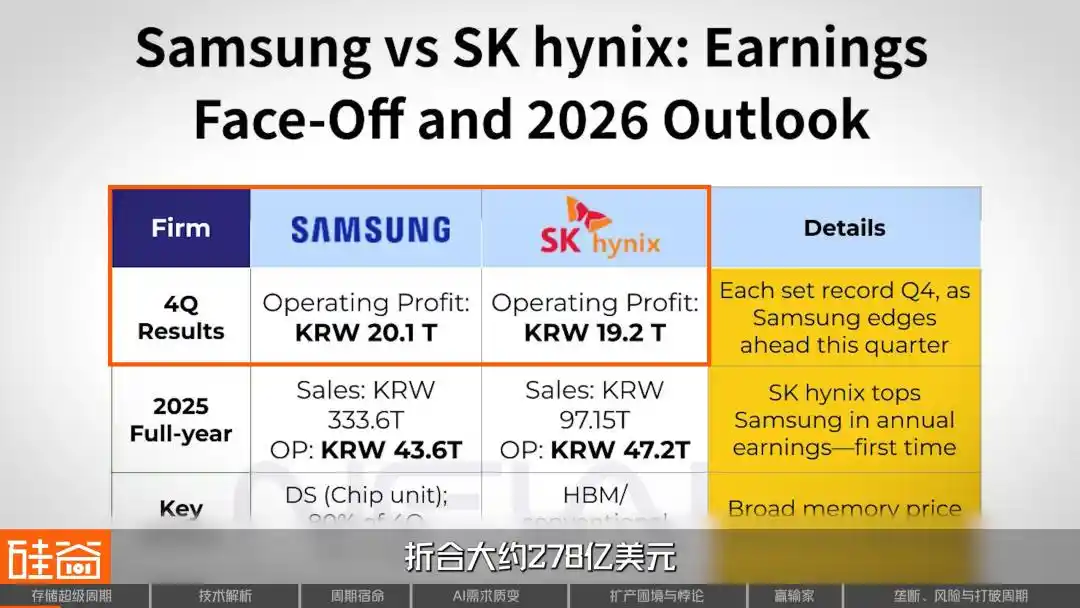

At the end of January 2026, South Korea’s storage giants Samsung Electronics and SK Hynix simultaneously released their fourth-quarter financial results for the previous year. How staggering were the numbers? Combined, the two companies posted an operating profit of nearly 40 trillion Korean won, equivalent to approximately $27.8 billion, or roughly $300 million in operating profit per day. Amid these record-breaking profits, SK Hynix’s year-end bonuses reached an average of RMB 640,000 per employee, setting a new company record.

The core product driving all of this to its peak is the HBM (High Bandwidth Memory) chip. A single HBM chip, no larger than a fingernail, sells for $400 to $500—more expensive than gold by weight. Only three companies worldwide can produce it: SK Hynix holds about 60%, with Samsung and Micron each holding 20%.

But HBM is just the tip of the iceberg—the entire industry is in panic as shortages hit everything from high-end to low-end, from DRAM to NAND.

From the end of 2024 to December 2025, the average spot price of DDR5 (16GB) rose from $4.6 to $28, an increase of over 500%; the older DDR4 surged from $3.2 to over $62, a cumulative gain of more than 1,800%; and 64GB server memory modules for data centers climbed from $255 to $700 within just six months last year, rising nearly 175%.
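The percentages above follow directly from the quoted start and end prices. A minimal sketch of the arithmetic, using only the figures in this paragraph (the function name `pct_increase` and the dictionary labels are illustrative):

```python
def pct_increase(start: float, end: float) -> float:
    """Percentage gain when a price moves from start to end."""
    return (end - start) / start * 100


# (start price, end price) in USD, as quoted in the paragraph above
prices = {
    "DDR5 16GB": (4.6, 28.0),
    "DDR4": (3.2, 62.0),
    "64GB server module": (255.0, 700.0),
}

for name, (start, end) in prices.items():
    print(f"{name}: +{pct_increase(start, end):.1f}%")
```

Because the DDR4 figure is quoted as "over $62", the gain computed from $62 is a lower bound.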

SK Hynix's 2026 capacity has already been fully sold out, and Samsung raised its NAND flash supply prices by 100%—doubling them—for the first quarter of 2026.

Candice Hu

Samsung Storage Products Marketing Manager

We are now seeing DRAM spot prices exceed the peak levels seen during the 2016–2018 cloud era. Our current shortage situation means nearly all inventory for 2026 has already been sold, and 2027 is also likely to be fully committed. For example, our quotes to a major GPU provider for SSDs have surged dramatically—doubling within just one week.

Meanwhile, a more significant signal emerged: At CES in early 2026, SanDisk told Wall Street that it was entering into entirely new long-term supply agreements (LTAs) with customers—this time requiring upfront payments that are non-refundable in case of breach. This has never happened in the decades-long history of the storage industry.

Rob Li

Managing Partner at Amont Partners, New York

Long-term agreements (LTAs) have existed historically, but over the past several decades they have not been enforceable in practice. When the market enters a downturn and a customer says it no longer recognizes the agreement, there is absolutely nothing you can do.

This time, the tone has changed. Powerful storage suppliers have established new rules.

Rob Li

Managing Partner at Amont Partners, New York

SanDisk told Wall Street or the market: "Our current LTAs with customers are very different from those in the past. These agreements are legally binding, and customers must make advance payments to us. If you make an advance payment and later decide to walk away without paying at the agreed price, your advance payment will not be refunded."

Rob's judgment is that if SanDisk can achieve this, then the other three giants—SK Hynix, Samsung, and Micron—have no reason not to as well. Under these circumstances, the entire supercycle could very well last until 2027.

02 Full Industry Chain Breakdown: How Does the Storage Industry Operate?

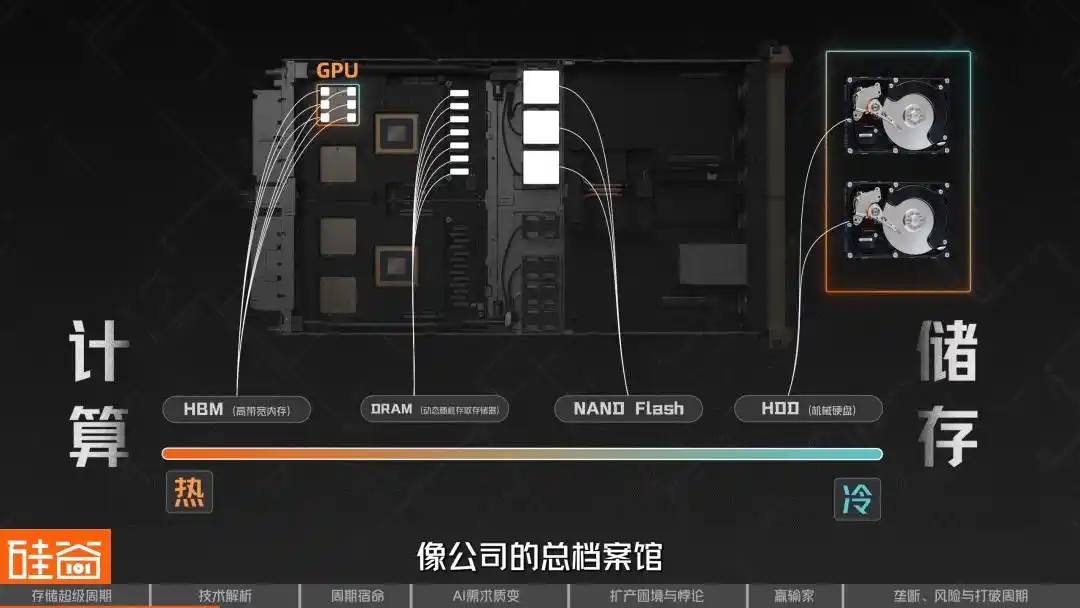

In the storage industry, we can categorize storage as hot or cold: the closer it is to computation, the hotter it is; the more it leans toward pure storage characteristics, the colder it is.

Therefore, the hottest is DRAM (Dynamic Random-Access Memory), the storage closest to computation, which can be understood as the "RAM" in computers and smartphones. When a chip is operating, data must first be loaded into DRAM before it can be processed. Its characteristics include extremely high speed, but it loses data when power is cut, making it a form of "short-term memory."

Among these, HBM (High Bandwidth Memory) is a specialized evolution of DRAM. It vertically stacks multiple layers of DRAM chips using through-silicon via (TSV) technology and integrates them on the same substrate with a GPU, significantly increasing bandwidth.

That’s why all top chips used for AI training—whether NVIDIA’s GPUs or Google’s TPUs—rely on HBM, making it the most prominent and scarce product of this supercycle.

Within the DRAM family, there is actually a wide variety of types, including GDDR (used in graphics cards), Low-Power DDR (LPDDR for smartphones and laptops), and more—each tailored to specific applications. A single DRAM chip cannot serve all devices; for example, HBM used in NVIDIA GPUs and LPDDR in your smartphone are both DRAM, but they differ entirely in manufacturing processes, packaging methods, and performance specifications.

On the other end of the spectrum is NAND. If DRAM is like short-term memory, then NAND Flash is long-term memory—it retains data even without power and serves as the core component in everyday devices such as solid-state drives (SSDs), phone storage, and USB drives. The photos stored on your phone and the games installed on your computer all reside on NAND.

The role of NAND in the AI era is also rapidly evolving. Once merely a "warehouse" responsible for long-term data storage, NAND is now transforming from a back-end repository into a frontline ammunition depot.

Moving even further into "colder" storage, we have traditional hard disk drives (HDDs), which read and write data using spinning magnetic disks. They are slower but more affordable and offer large capacities, making them ideal for cold storage and archival use cases in data centers today.

AI inference demands increasingly granular storage hierarchies, and the result now resembles a layered warehouse system. Data needed most urgently is stored in HBM, like items placed right at your fingertips; frequently used but less time-sensitive data resides in DRAM, like files kept in a desk drawer; colder, backup data is stored in NAND/SSD, like items stored in an office cabinet; and large volumes of long-term, collectively shared data are kept in backend enterprise-scale storage, like the company’s central archive.

Rob Li

Managing Partner at Amont Partners, New York

AI benefits whatever is "hot," meaning closest to computation, but colder storage is necessary too. I’ve used AI to generate many images and create numerous videos; due to regulations in various countries, these cannot be deleted and must be retained, which has significantly increased demand for storage. However, the most immediate impact will undoubtedly be in the area of computation: the products closest to computation will see the most noticeable short-term gains.

Next, let’s take a look at the key players across the entire storage industry chain.

At the upstream level are materials and silicon wafers, such as SUMCO from Japan, one of the world’s most important semiconductor wafer suppliers. In the manufacturing phase, key equipment providers include ASML, the leading lithography machine manufacturer, and Tokyo Electron, which covers multiple processes including photoresist coating and development, deposition, etching, and cleaning.

Meanwhile, at the chip design layer before manufacturing, companies like Cadence and Synopsys—providers of EDA, verification, and design IP—are equally indispensable; and interface IP firms such as Rambus play a critical role in high-speed memory architectures like HBM. Though less visible than GPUs, they are equally essential in this AI-driven supercycle.

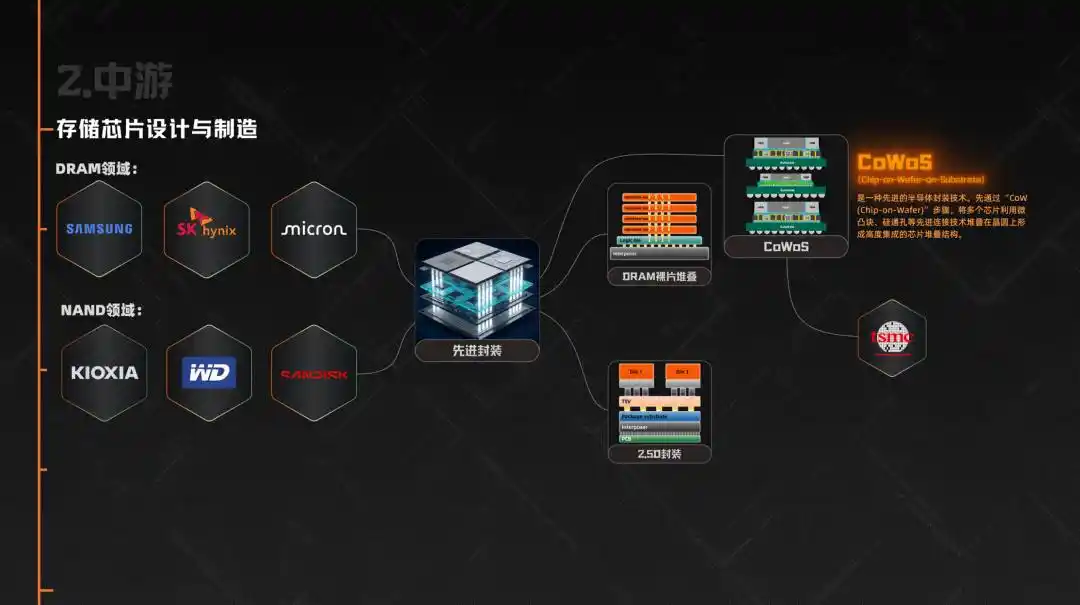

The midstream consists of the design and manufacturing of memory chips. In the DRAM sector, Samsung, SK Hynix, and Micron together account for 95% of the global market share. In the NAND sector, in addition to these three companies, Kioxia, Western Digital, and SanDisk are also key players.

Then there’s the particularly critical component in this cycle: advanced packaging. HBM doesn’t end with the production of DRAM; it requires stacking multiple layers of DRAM dies and integrating them with GPUs or other AI accelerators through 2.5D packaging. As a result, packaging technologies like CoWoS have become one of the most critical bottlenecks in the AI chip supply chain, directly limiting actual HBM shipments, with CoWoS capacity primarily supplied by TSMC.

Downstream consists of various end-user applications, including data centers and cloud providers such as Microsoft, Google, Amazon, and ByteDance, which are currently the largest investors. Next come smartphone manufacturers (Apple, Samsung, Xiaomi, OPPO), PC manufacturers (Lenovo, Dell, HP), automotive companies (Tesla, Li Auto, NIO), as well as gaming consoles and industrial equipment.

So you can see that although the entire supply chain is very long, pricing power is highly concentrated among just three midstream players: Samsung, SK Hynix, and Micron. They determine what products to make, who to supply, and at what price to sell. And in today’s market, where supply is far below demand, their bargaining power is unprecedented.

03 Why Prices Always Surge and Crash: The Inherent Cyclical Nature of the Storage Industry

Another major characteristic of the storage industry is its cyclicality. Historically, it has repeatedly swung between periods of massive growth and severe downturns. This behavior stems from two underlying factors: one rooted in physics, and the other in economics.

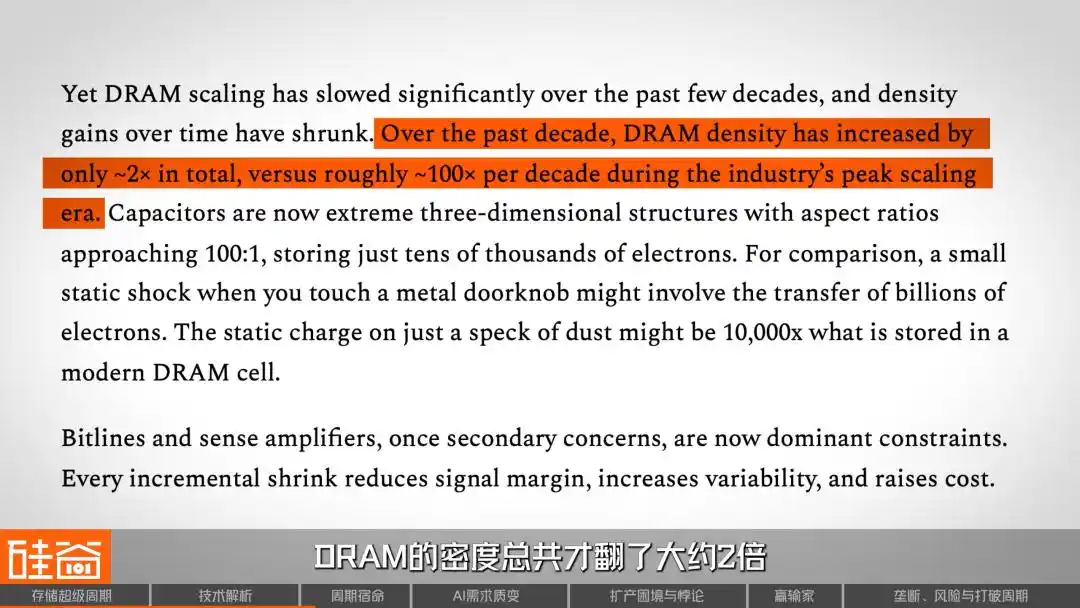

Let’s start with physics. DRAM, the "runtime memory" in phones and computers, stores data by holding electrical charge. For decades, engineers have been shrinking storage cells and packing more of them onto each chip to boost density. At its peak, DRAM density doubled roughly every 18 months.

But now, according to SemiAnalysis’s report, DRAM density has only increased by about 2 times over the past decade, compared to 100 times per decade in the past—scaling has significantly slowed. This means that cost reductions in memory chips are no longer automatically driven by technological advancements as they once were, but are now more dependent on fluctuations in production capacity and supply-demand dynamics.
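To see how far scaling has slowed, it helps to convert a doubling period into a per-decade multiple. The sketch below assumes an 18-month doubling period for the historical peak (an illustrative assumption, chosen because it lands close to the 100x-per-decade figure cited above) and compares it with the roughly 2x per decade seen recently:

```python
def growth_over(years: float, doubling_months: float) -> float:
    """Density multiple after `years` if density doubles every `doubling_months`."""
    return 2 ** (years * 12 / doubling_months)


# Assumed historical peak: doubling about every 18 months
peak_multiple = growth_over(10, 18)

# Recent pace: about 2x per decade, i.e. one doubling every 120 months
recent_multiple = growth_over(10, 120)

print(f"peak era:   ~{peak_multiple:.0f}x per decade")
print(f"recent era: ~{recent_multiple:.0f}x per decade")
```

Put differently, a doubling that once took about a year and a half now takes about ten years.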

Let’s talk about economics. Memory chip manufacturing is one of the most capital-intensive industries globally; building a state-of-the-art wafer fab typically costs billions, even tens of billions, of dollars and takes two to three years to complete. Once this money is invested, it becomes a sunk cost, so even when demand is weak, manufacturers tend to keep producing—because halting operations would lead to even greater losses.

More critically, the storage industry operates on a “build first, sell later” model, which is completely different from TSMC’s “take orders first, then expand capacity” approach. Storage manufacturers must guess future demand on their own and invest in production capacity two to three years in advance. If they guess right, everyone wins; if they guess wrong, it’s a disaster.

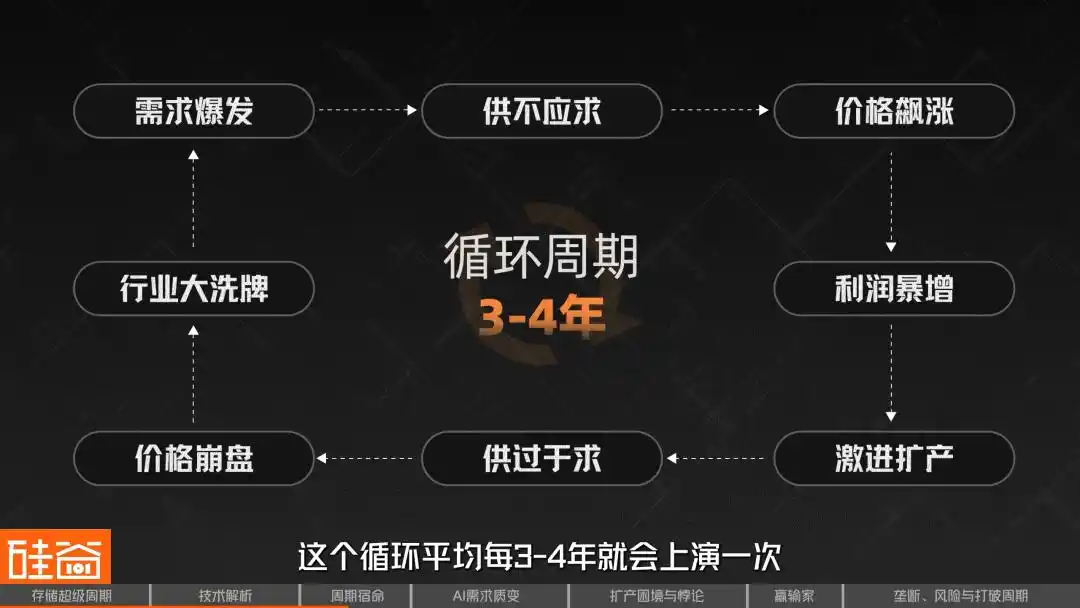

This structural contradiction has created a classic cycle repeatedly played out in the storage industry: surge in demand → supply shortage → price spike → profit explosion → aggressive capacity expansion → oversupply → price collapse → industry consolidation. Over the past three decades, this cycle has occurred every three to four years without exception.

As a result, the global DRAM suppliers have been reduced from more than 20 in the 1990s to just three major players today, along with challengers like China’s CXMT. Each cycle has eliminated competitors—such as Germany’s Qimonda, which went bankrupt, and Japan’s Elpida, which exited the market. These harsh lessons have instilled deep respect for the concept of “cycles” throughout the industry.

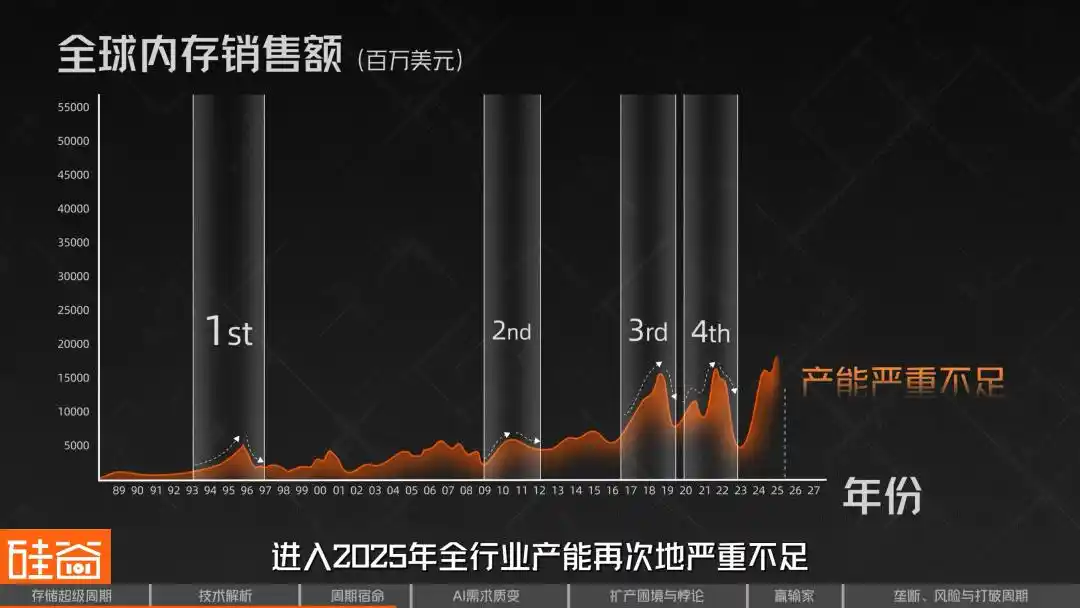

Over the past several decades, the storage industry has experienced four cycles.

The first time was in 1993, at the dawn of the Windows PC era. The widespread adoption of graphical interfaces caused memory demand to surge, while supply-side production capacity was severely insufficient, leading to a sharp price increase. As a result, approximately 50 new factories were built globally all at once; after overcapacity set in, prices plummeted and many players exited the market.

The second was in 2010, during the smartphone and cloud computing era. The iPhone and Android drove explosive growth, pushing server DRAM from single-digit gigabytes to tens of gigabytes. However, standardization accelerated commoditization, making it difficult for suppliers to differentiate themselves, resulting in a cycle that was shorter than expected.

The third cycle occurred from 2017 to 2018. Cloud providers upgraded their data centers, packing more DRAM into each server. Since server memory is more expensive and more profitable than consumer-grade memory, the gross margins of the three major manufacturers reached historical highs. However, the high profits spurred increased production, and once demand passed its peak, the industry slid back into a downturn by the end of 2018.

The fourth cycle occurred from 2020 to 2021, an unexpected boom driven by the pandemic. Remote work and cloud usage surged, but panic-driven double ordering created artificial demand. When the tide receded, inventory became severely overstocked, leading to a painful downturn from 2022 to 2023. Since then, production capacity has been significantly reduced, but this conservative period laid the groundwork for today’s shortage. Entering 2025, industry-wide capacity is once again severely insufficient.

So what is the core lesson history teaches us? Past so-called supercycles have never lasted more than two years—they always followed the pattern of “high profits → frantic expansion → oversupply → crash,” a rigid rule over the past four decades. After experiencing so many cycles, investors and industry participants have developed a deeply ingrained reflex: the faster it rises, the harder it falls.

But this time, increasing evidence suggests that historical patterns may be broken.

04 Why This Time Is Different: The Qualitative Shift from Training to Inference Demand

4.1 Let's start with the most basic intuition

Before diving into complex supply and demand models, let’s start with the simplest logic. Every day, you open ChatGPT or Gemini, upload files, save conversations, and let the AI remember your preferences—you may not realize that each interaction consumes storage resources. It’s not just computational power on the server side, but also vast amounts of memory and flash storage.

Most AI users today have little loyalty—they use whichever model works best and is cheapest. But imagine if one day your AI assistant truly “understood” you—remembering your work habits, communication preferences, and even details of projects you discussed three months ago. Would you still switch platforms so easily?

This "memory stickiness" is the core weapon for large model companies to build their moats, and the hardware infrastructure enabling this stickiness is storage—vast, multi-tiered storage.

Another equally intuitive logic is that video models are becoming increasingly powerful, and AI-generated video is drawing closer to practical application. Since video data volumes are tens to hundreds of times larger than text data, storage demands will experience an exponential surge.

Rob Li

Managing Partner at Amont Partners, New York

Memory is like a small chalkboard. In the past, we only calculated simple things like 1 + 1 = 2, so a normal-sized chalkboard was enough. But now, in the AI era, computations are much more intense and complex, involving many steps. If I were a small chalkboard, and you had to write, erase, write again, and erase again for each of 100 steps, you’d have to erase 100 times—that would waste a lot of time. That’s why we now need a massively large chalkboard, where I can write all 100 calculation steps at once, and erase them all at once, saving us time.

So, a blackboard that keeps growing larger—that’s the storage demand in the AI era.

4.2 From Training to Inference: A Qualitative Shift in Storage Requirements

In the early stages of generative AI, computing power and funding were heavily invested in model training, with the storage system primarily responsible for efficiently feeding data to thousands of GPUs and periodically creating model checkpoints to prevent training from being lost due to interruptions.

But today, inference is rapidly becoming the main battleground, and the storage demand patterns for inference are far more complex than those for training.

It requires loading the model from the storage layer into memory: active weights primarily reside in HBM, while some states and caches remain in DRAM; when the KV Cache exceeds the capacity of higher-level memory, a portion is offloaded to SSD/NAND and retrieved as needed; external knowledge relied upon by RAG queries is typically stored in more backend shared storage or data lakes, retrieved in real time by the retrieval system.
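The spill-and-retrieve behavior described above can be sketched as a toy tiered cache. This is only an illustration under simplifying assumptions: capacities are counted in entries rather than bytes, eviction is least-recently-used, and the class name `TieredStore` and tier labels are hypothetical, not any vendor's or framework's actual API.

```python
from collections import OrderedDict


class TieredStore:
    """Toy multi-tier cache: a full tier spills its least recently used entry downward."""

    def __init__(self, tiers):
        # tiers: list of (name, capacity), ordered hottest to coldest.
        # The coldest tier must be unbounded (capacity None) so spills always land somewhere.
        if tiers[-1][1] is not None:
            raise ValueError("coldest tier must have capacity None")
        self.names = [name for name, _ in tiers]
        self.caps = [cap for _, cap in tiers]
        self.data = [OrderedDict() for _ in tiers]

    def _insert(self, level, key, value):
        store = self.data[level]
        store[key] = value  # newest entries sit at the end
        cap = self.caps[level]
        if cap is not None and len(store) > cap:
            old_key, old_value = store.popitem(last=False)  # evict the oldest entry
            self._insert(level + 1, old_key, old_value)     # spill it one tier colder

    def put(self, key, value):
        for store in self.data:  # drop any stale copy first
            store.pop(key, None)
        self._insert(0, key, value)

    def get(self, key):
        """Return (value, tier it was served from) and promote the entry to the hottest tier."""
        for level, store in enumerate(self.data):
            if key in store:
                value = store.pop(key)
                self._insert(0, key, value)
                return value, self.names[level]
        raise KeyError(key)

    def where(self, key):
        for name, store in zip(self.names, self.data):
            if key in store:
                return name
        return None


store = TieredStore([("HBM", 2), ("DRAM", 2), ("SSD", None)])
for i in range(1, 6):
    store.put(f"kv{i}", f"block-{i}")
print(store.where("kv1"))  # tier holding the oldest block
print(store.get("kv1"))    # served from a colder tier, then promoted
```

Real inference stacks manage this by bytes, bandwidth, and latency targets rather than entry counts, but the shape is the same: the hotter the tier, the smaller it is, and overflow cascades downward.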

A larger variable is the rise of AI agents. In its latest research report, Morgan Stanley points out that 2026 will be the year when AI transitions from experimentation to core infrastructure, with agents becoming more reliable, retaining memory better, hallucinating less, and learning continuously. The report states: “Inference is becoming a memory challenge, not just a computational one.”

However, for agents to function effectively, they require maintenance of multiple layers of memory: short-term working memory (current conversations), long-term memory (user history across sessions), pre-trained knowledge bases, and tool invocation logs... Each layer relies on different levels of storage: from "hot data" in HBM, to "warm data" in DRAM, and finally "cold data" on NAND SSDs.

So the trend is clear: the next wave of AI advancement won’t come from stronger reasoning abilities, but from better context handling. An AI assistant that remembers everything is far more useful than a larger model that remembers nothing. What does this mean for storage?

4.3 Do the Math: How Much Storage Will AI Actually Consume?

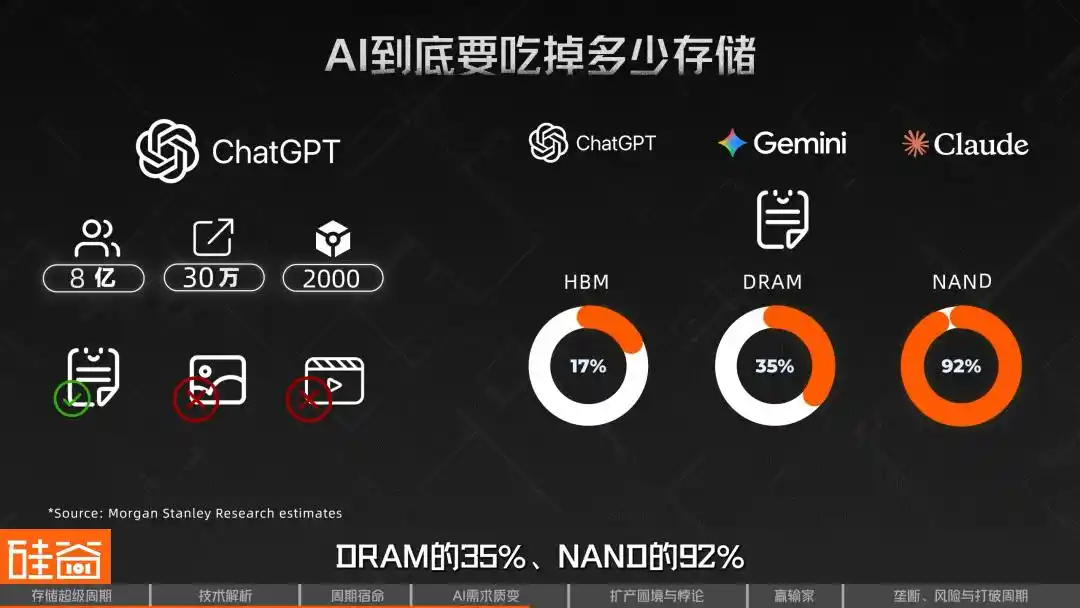

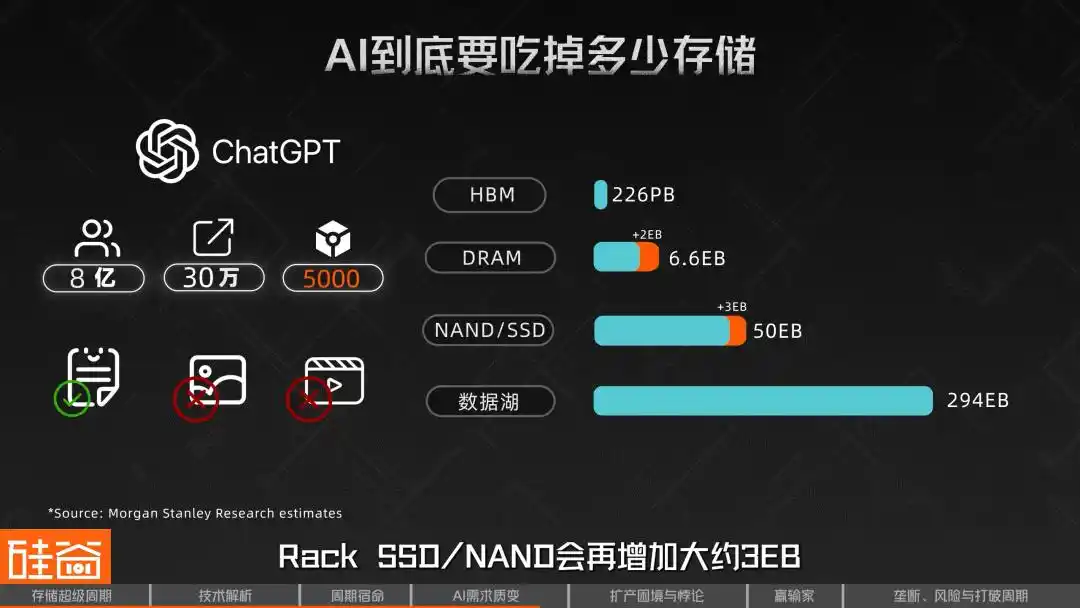

Morgan Stanley conducted a highly detailed layered calculation. Using a model of similar scale to ChatGPT as a baseline, they assumed approximately 800 million weekly active users, a peak of 300,000 requests per second, 2,000 input tokens per request, and considered text only, excluding images and video. Based on this detailed breakdown, such a system would require approximately 226 PB of HBM, 4.6 EB of DRAM, around 47 EB of NAND/SSD, and a data lake of approximately 294 EB.

This set of numbers means that if there were three models of this scale globally—such as ChatGPT, Gemini, and Claude—the purely text-based inference demand alone would account for 17% of global HBM supply, 35% of DRAM, and 92% of NAND in 2026. This does not even include multimodal demands such as images and videos.
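The three-model scenario can be reproduced as a back-of-the-envelope calculation. The sketch below uses only the per-model figures and percentage shares quoted above, and assumes decimal units (1 EB = 1,000 PB). The "implied supply" values are simply what those percentages imply when inverted; they are not independent supply data.

```python
PB_PER_EB = 1000  # assumption: decimal units

# Per-model requirement in EB, from the Morgan Stanley figures quoted above
per_model_eb = {"HBM": 226 / PB_PER_EB, "DRAM": 4.6, "NAND": 47.0}

# Share of 2026 global supply that three such models would consume, as quoted
share_of_supply = {"HBM": 0.17, "DRAM": 0.35, "NAND": 0.92}

MODELS = 3

demand_eb = {tier: need * MODELS for tier, need in per_model_eb.items()}
implied_supply_eb = {tier: demand_eb[tier] / share_of_supply[tier] for tier in demand_eb}

for tier in demand_eb:
    print(
        f"{tier}: demand {demand_eb[tier]:.2f} EB, "
        f"implied 2026 supply {implied_supply_eb[tier]:.1f} EB"
    )
```

The point of the exercise is the ratio, not the absolute numbers: text-only inference for a handful of frontier models already claims a large fraction of each tier.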

More importantly, this estimation is highly sensitive to context length. Morgan Stanley’s sensitivity analysis shows that if the input length is increased from 2,000 tokens to 5,000 tokens per request, with all other factors held constant, DRAM requirements per model would increase by approximately 2 EB, and Rack SSD/NAND requirements would increase by approximately 3 EB. In other words, as longer contexts and longer reasoning chains become standard, the pressure on storage will rapidly intensify.

SemiAnalysis calls this the "Memory Parkinson's Law": every time HBM capacity increases, developers immediately build larger models to fill it. Previously used techniques for compressing models are relaxed as soon as new space becomes available, until they hit the wall again—meaning storage will always be the next bottleneck.

This is why some in the industry believe that memory chip manufacturers may have collectively underestimated the demand surge brought about by the increase in large language model tokens.

Rob Li

Managing Partner at Amont Partners, New York

Previous cycles may have lasted only one and a half to two years, but this cycle could last much longer—when a cyclical industry transforms into a structurally growing industry, it is no longer cyclical.

Another decisive factor in this cycle is the expansion of production capacity—but why is increasing production so challenging?

05 The More You Produce, the Shorter the Supply: The HBM-DRAM Dilemma and Strategic Maneuvering

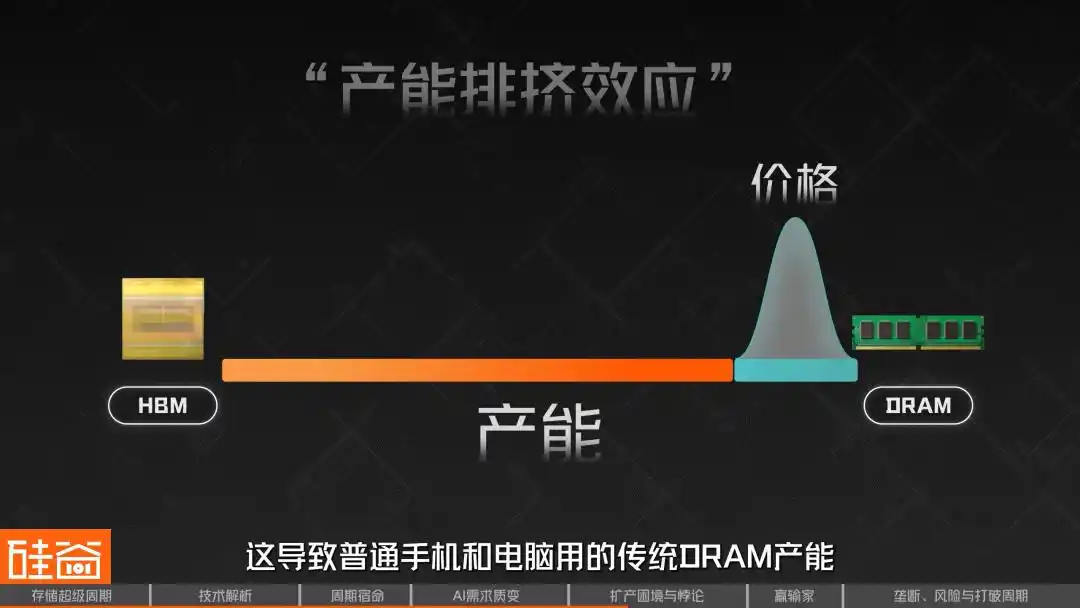

Another key to understanding this super cycle lies in grasping a seemingly contradictory phenomenon: the large-scale expansion of HBM production has not alleviated DRAM shortages—it has made them worse.

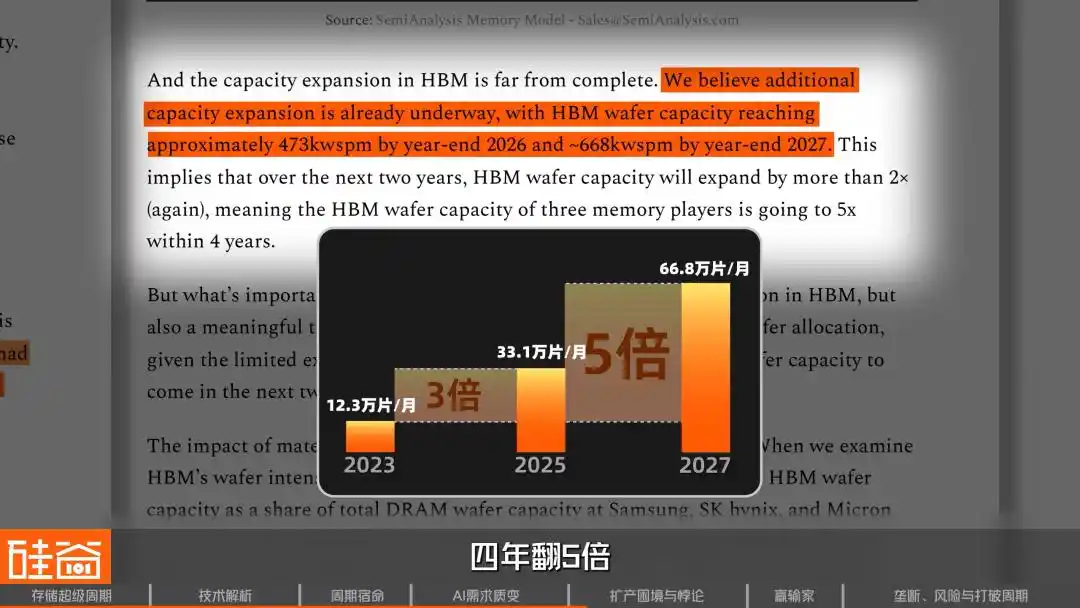

SemiAnalysis’s tracking data shows that at the end of 2023, the three major memory manufacturers allocated approximately 123,000 wafers per month to HBM production. By the end of 2025, this increased to 331,000 wafers per month, nearly tripling in two years. It is projected to rise further to 668,000 wafers per month by the end of 2027, a fivefold increase over four years.
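The growth multiples in this paragraph can be checked with a few lines. The sketch below uses only the wafer counts quoted above; the compound annual growth rate is an extra derived figure, not something stated in the SemiAnalysis data.

```python
# HBM wafer starts per month, as quoted above
wafers = {2023: 123_000, 2025: 331_000, 2027: 668_000}

two_year_multiple = wafers[2025] / wafers[2023]
four_year_multiple = wafers[2027] / wafers[2023]

# Derived: constant annual growth rate that would produce the four-year multiple
cagr = four_year_multiple ** (1 / 4) - 1

print(f"2023 to 2025: {two_year_multiple:.2f}x")
print(f"2023 to 2027: {four_year_multiple:.2f}x")
print(f"implied annual growth: {cagr:.1%}")
```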

Why is DRAM still in short supply despite such massive expansion? The key is that producing HBM consumes a huge amount of regular DRAM capacity—and it’s extremely inefficient.

HBM is an extremely wafer-intensive architecture. A wafer used for HBM3E 12-layer stacks produces only about one-third the bit output (i.e., storage capacity) of a standard DRAM wafer; for HBM4, this ratio may worsen further to one-quarter.

Candice Hu

Samsung Storage Products Marketing Manager

Compared to traditional DRAM, when producing HBM, our bit output per wafer is only one-third that of standard DRAM.

This means that for every additional 1 GB of HBM produced, the market loses the opportunity to produce 3–4 GB of standard DRAM.
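The displacement figure is just the inverse of the bit-output ratio. A minimal sketch, assuming the one-third (HBM3E 12-layer) and one-quarter (HBM4) ratios cited above and treating wafer capacity as fully interchangeable between HBM and standard DRAM, which is a simplification:

```python
def displaced_dram_gb(hbm_gb: float, bit_ratio: float) -> float:
    """Standard DRAM that the same wafer capacity could have produced.

    bit_ratio: bits per HBM wafer divided by bits per standard DRAM wafer.
    """
    return hbm_gb / bit_ratio


for label, ratio in [("HBM3E 12-high", 1 / 3), ("HBM4", 1 / 4)]:
    lost = displaced_dram_gb(1.0, ratio)
    print(f"{label}: 1 GB of HBM displaces about {lost:.0f} GB of standard DRAM")
```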

Why is the efficiency so low? Because the manufacturing complexity of HBM far exceeds that of standard DRAM—processes like TSVs (through-silicon vias), wafer thinning, and back-end processing all introduce additional yield losses. When stacking 8 or 12 layers, if even a single die is defective, the entire stack may be rendered unusable.

All these issues combined make HBM a product with “reverse scaling”—the more you produce it, the greater the demand on capacity.

This has led to the “HBM-DRAM dilemma,” known in the industry as the “capacity displacement effect.” Because HBM offers higher profits and is heavily pre-ordered by AI giants, manufacturers prioritize allocating their limited wafer capacity to HBM production lines. This has severely constrained the supply of traditional DRAM used in ordinary smartphones and computers, triggering a sharp rebound in prices.

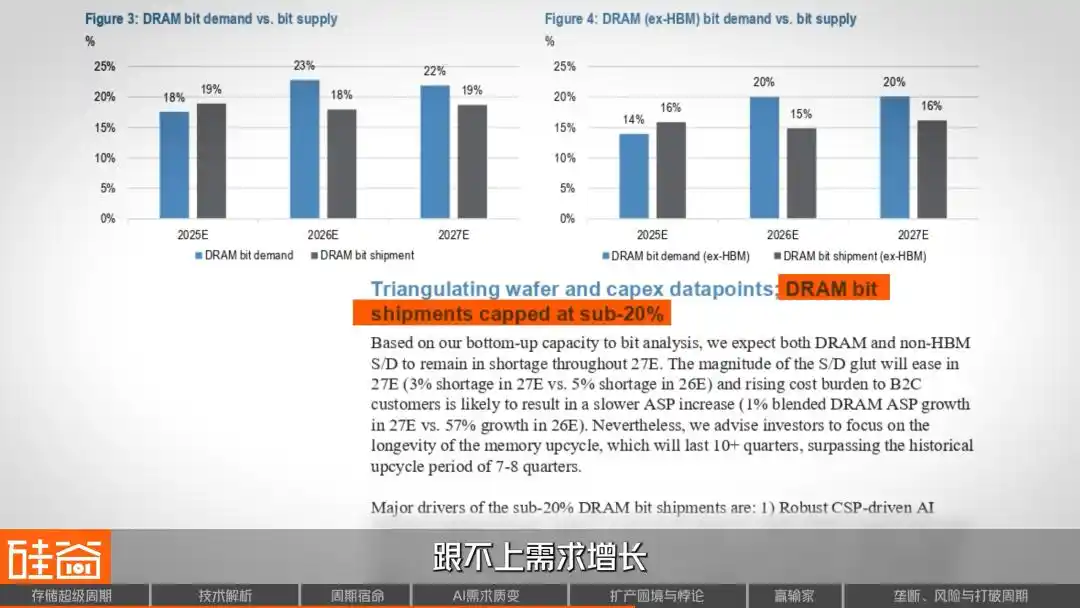

A supply and demand model from J.P. Morgan’s research report also reached a similar conclusion: DRAM supply growth will be capped at below 20% over the next two years, lagging behind demand growth.

Thus, another puzzling phenomenon has emerged: although standard DRAM is simpler to manufacture than HBM, constrained production and soaring prices meant that by Q4 2025 its profit margin had caught up to, and even surpassed, that of HBM. This is because HBM prices are largely locked in through long-term contracts, while spot prices for standard DRAM can quickly reflect supply-demand imbalances. This presents manufacturers with a difficult dilemma: should they continue aggressively expanding HBM production, or shift part of their capacity back to standard DRAM, which is now at least as profitable?

06 Three Major Challenges to Expansion: Cleanroom Shortages, Equipment Suppliers' Conservatism, and Process Friction

The demand side is already frantic, but supply-side constraints are even more suffocating.

The first bottleneck: insufficient production resources such as cleanrooms. Manufacturing chips requires cleanrooms, but after the pandemic, memory manufacturers collectively adopted a conservative approach during the industry’s downturn, leading to reduced investments and a severe shortage of cleanrooms in 2025 and 2026.

Candice Hu

Samsung Storage Products Marketing Manager

Because the environmental requirements for chip production are extremely high, we’re more concerned about whether we have enough clean rooms and sufficient power—since we might produce enough chips but lack the necessary electricity to operate them.

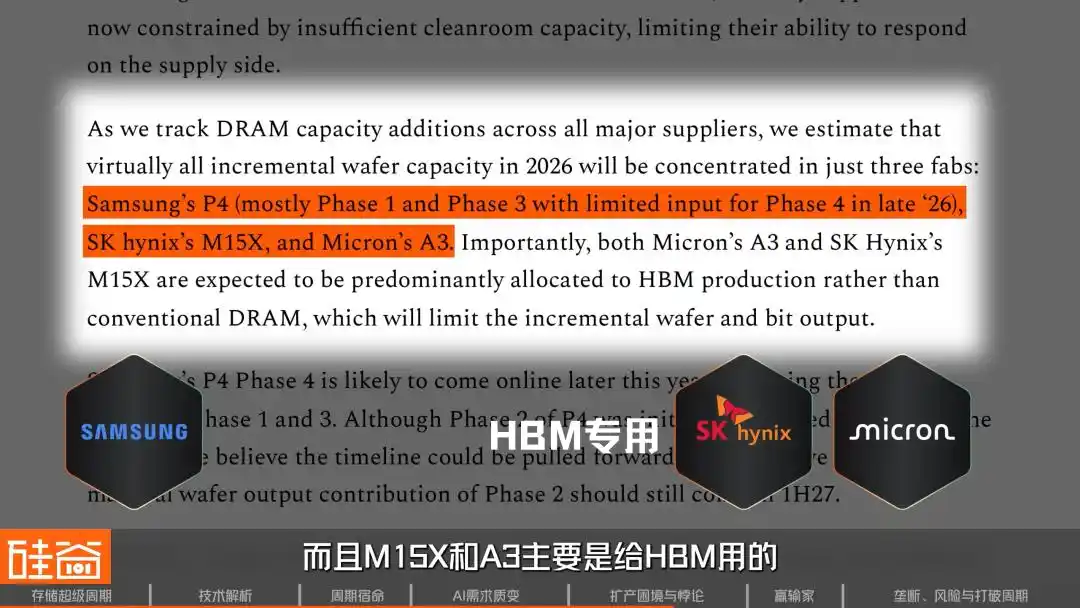

SemiAnalysis’s tracking shows that nearly all new wafer capacity in the industry in 2026 will be concentrated in three factories: Samsung’s P4, SK Hynix’s M15X, and Micron’s A3. Moreover, M15X and A3 are primarily dedicated to HBM, contributing very little to conventional DRAM.

What about truly meaningful new capacity? SK Hynix’s Yongin facility won’t come online until at least February 2027, and Micron’s Idaho facility is targeted for mid-2027. In other words, there will be virtually no supply increase over the next year and a half.

Second bottleneck: Upstream equipment manufacturers are unwilling to increase production.

Rob Li

Managing Partner at Amont Partners, New York

Many equipment manufacturers, such as numerous suppliers in Japan—including the large company Tokyo Electron—are reluctant to expand production, preferring a conservative approach. Having weathered many cycles over the past few decades, expanding capacity now would take several years; by the time that new capacity comes online, the AI cycle may already have peaked. Therefore, they’d rather not expand at all—content to earn $100 instead of chasing $500, and still enjoying a comfortable lifestyle.


This is a classic example of the "barrel effect"—even if storage manufacturers have the financial resources and determination to expand production, bottlenecks in the supply of upstream equipment will significantly slow down the ramp-up of production capacity.

Third bottleneck: The friction involved in migrating to advanced nodes. To maximize memory bit output amid limited wafer capacity, the three major manufacturers are accelerating their transition to the 1b node (currently the most advanced production node) and the 1c node (the next-generation node set to enter mass production), as more advanced processes enable finer circuit etching—allowing more memory chips to be produced from the same wafer size on the 1c node compared to the 1b node.

However, migrating this production line requires shutting down the machines and undergoing weeks or even months of reconfiguration and installation, which itself leads to fluctuations in yield and capacity loss over several quarters. At the critical juncture of surging AI demand in 2026, this approach is too slow to address immediate needs.

Candice Hu

Samsung Storage Products Marketing Manager

From the decision to increase capacity to building a fab, and then to having the back end capable of producing DRAM or NAND chips, it takes three years. At this point, HBM, a more challenging chip to produce, has emerged. As I mentioned, an HBM wafer delivers only one-third the bit output of a conventional DRAM wafer. So, if I was already waiting two to three years for capacity to increase, now the output of every wafer I move to HBM is also cut to one-third. As a result, supply and demand remain tight within this cycle.

Insufficient cleanroom capacity, equipment manufacturers not expanding production, and the inherent friction of transitioning to advanced nodes—these three bottlenecks together explain why, despite everyone knowing that memory chips are surging, the supply side remains powerless.

07 Reallocation of Industry Chain Profits: Who Is Enjoying the Banquet, and Who Is Facing the Winter

The surge in memory chip prices, of course, comes at a cost—it is redistributing profits across the entire electronics supply chain.

First, let’s look at the biggest winners along this profit chain: beyond the astronomical profits of South Korea’s two giants, Chinese domestic storage manufacturers are also soaring. Biwin Storage expects its profit to increase by 427% to 520% year-over-year in 2025, while Demingli projects a growth of 85% to 128%.

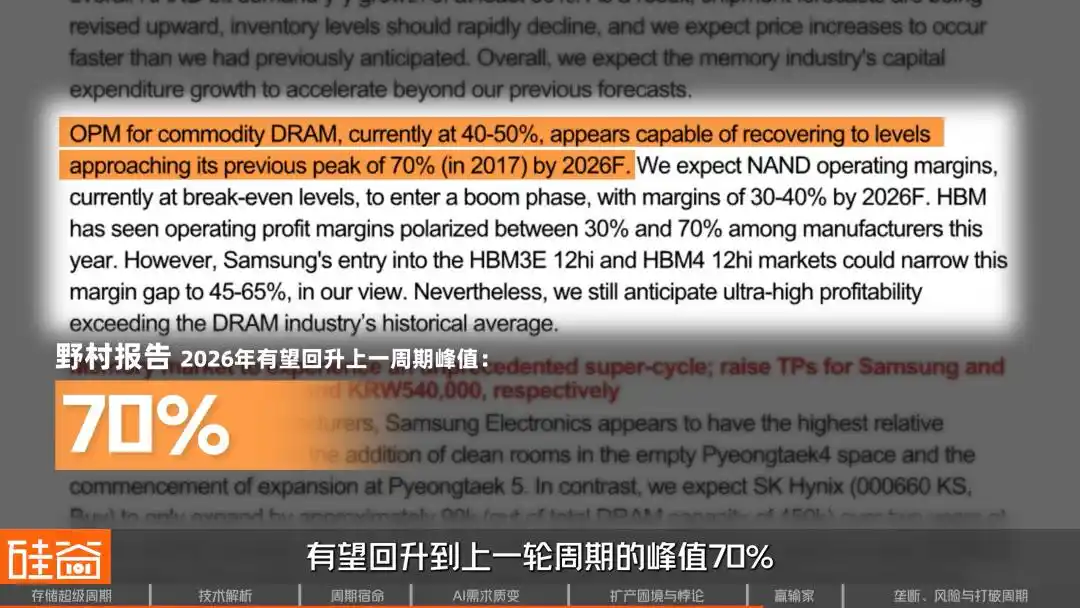

Regarding industry profit margins, Nomura estimates that the operating margin for generic DRAM manufacturers could rebound to the peak of 70% seen in the previous cycle by fiscal year 2026. J.P. Morgan is more aggressive, suggesting that by 2027, the operating margin could exceed 80%, even surpassing the peak of the previous cycle.

The losers along this supply chain are the hardware manufacturers. Morgan Stanley has estimated that for every 10% increase in memory chip prices, hardware OEMs’ gross margins decline by 45 to 150 basis points.
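To get a feel for what this sensitivity means at larger price moves, the sketch below scales the quoted range linearly. The linear scaling is an assumption made here for illustration; the estimate above is stated only per 10% price increase, and real margin effects also depend on how much of the cost OEMs pass on to buyers.

```python
def margin_hit_bps(price_increase_pct: float) -> tuple[float, float]:
    """Estimated (low, high) gross-margin decline in basis points.

    Linearly scales the quoted 45 to 150 bp per 10% memory price increase.
    """
    steps = price_increase_pct / 10
    return 45 * steps, 150 * steps


for pct in (10, 30, 50):
    low, high = margin_hit_bps(pct)
    print(f"memory +{pct}%: gross margin down {low:.0f} to {high:.0f} bp")
```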

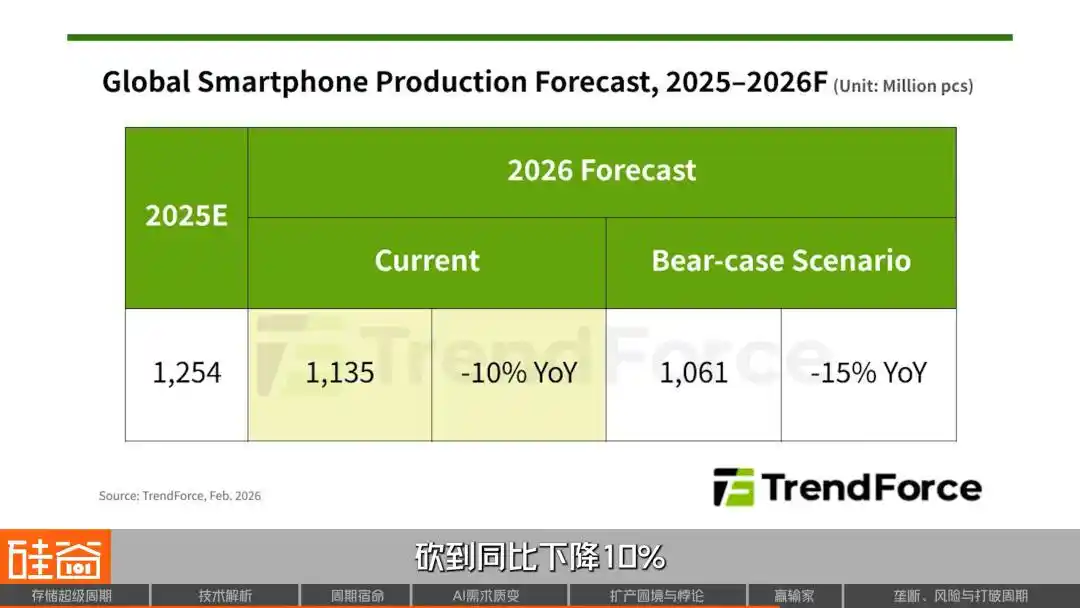

The mobile phone market was hit first, with Xiaomi and OPPO reducing their shipment forecasts by over 20%, and vivo cutting its forecast by nearly 15%. TrendForce slashed its global smartphone production forecast for 2026 to a 10% year-over-year decline. Meizu announced the cancellation of the Meizu 22 Air launch due to unsustainable costs. Nothing’s CEO, Carl Pei, remarked on social media: "Smaller companies must seek alternative paths."

The PC market is equally harsh: Lenovo has raised prices on some models by 500 to 1,500 yuan, while Dell and HP have explicitly announced upcoming price increases, primarily driven by rising storage costs. Dell’s COO, Clarke, bluntly stated, “I’ve never seen costs rise this quickly,” and HP’s CEO is even considering “reducing the amount of memory in products.”

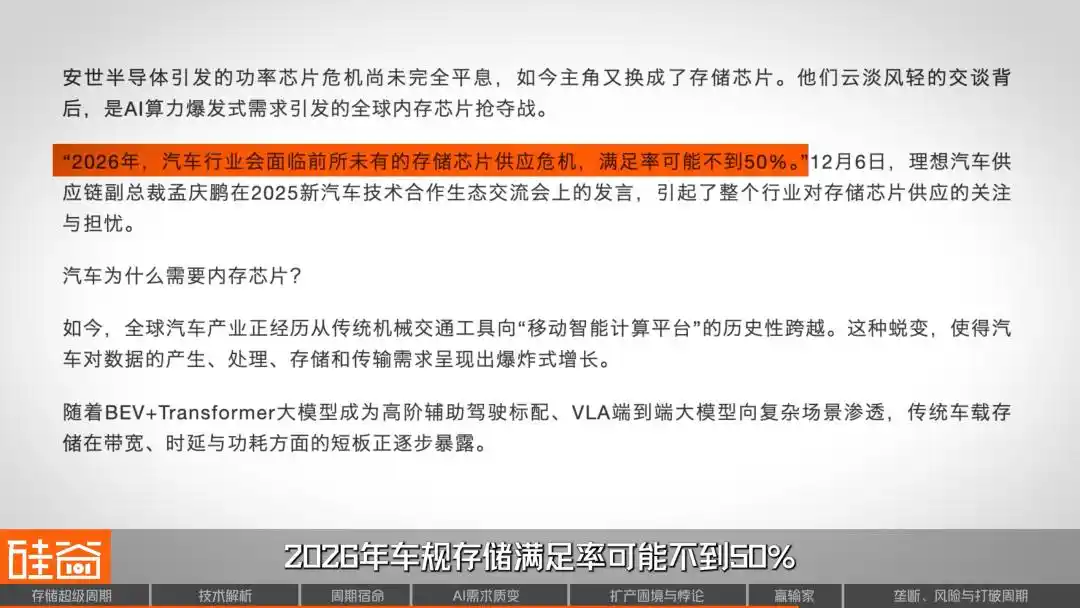

The automotive industry has not been spared: Li Auto’s supply chain vice president publicly warned that the fulfillment rate for automotive-grade storage may fall below 50% in 2026. NIO’s Li Bin stated, “This year, the biggest cost pressure is memory.” Xiaomi’s Lei Jun openly admitted during a livestream, “Just the cost of automotive memory alone will increase by thousands of yuan.”

Candice Hu

Samsung Storage Products Marketing Manager

Even though PC and smartphone makers have well-known names, they currently have little bargaining power with us because their margins are lower than those of cloud providers. For example, we recently heard that a domestic automaker may be removing its rear-seat in-car entertainment system because it cannot get enough memory.

Rob Li

Managing Partner at Amont Partners, New York

Smartphones and PCs will definitely drop by at least 5% this year, possibly more, but no one will care. The three giants, Micron especially, are saying they no longer care about this business, even if the market drops to zero.

On the other end of demand, cloud providers (Microsoft, Google, Amazon AWS) have shown remarkable price insensitivity.

Candice Hu

Samsung Storage Products Marketing Manager

Now, cloud providers have a marginal cost of zero for their software. Their finances and narratives are tied to their stock prices, so they are extremely price-insensitive—they don’t really care how much memory costs.

With cloud providers this insensitive to price, storage manufacturers wouldn’t mind even if the smartphone and PC markets dropped to zero, because the prospects for AI data centers are too compelling. So the final question is: how much longer can this super cycle last? Is this time truly different?

08 2026: What’s Next?

Today, the competitive landscape of the entire storage industry chain remains stable. HBM market share is currently split roughly “6:2:2”, with SK Hynix holding the largest share and Samsung and Micron each holding about a fifth. Of course, some investors believe that, in a seller’s market where supply falls far short of demand, debating market share differences is largely meaningless.

Rob Li

Managing Partner at Amont Partners, New York

Since all three giants are constrained in production capacity, market share depends solely on who can expand output—whoever can increase supply can capture more market share. However, this has little to do with which company has better technology, as the current market is one where supply is far below demand. Therefore, discussing market share—such as SK Hynix holding half the market, larger than the other two—is meaningless, since none of the three can expand their capacity.

The reality is that all three major storage manufacturers have sold out, and whoever can squeeze out a bit more production capacity gets to eat a little more. Interestingly, however, the big storage companies may not be pursuing a monopoly.

Candice Hu

Samsung Storage Products Marketing Manager

No storage player wants to monopolize the market; Samsung fears monopolization, and our customers also don’t want us to dominate. In a shortage like the current one, giving any single memory supplier 100% market share would put immense pressure on that supplier. Therefore, breaking up monopolies is precisely what storage players would rather see.

People often assume that monopolies equate to high premiums, but in an industry like storage, which experiences extreme cyclical fluctuations, a 100% market share means bearing 100% of the demand risk—when customers cancel orders, the company becomes highly vulnerable. As a result, storage manufacturers actually prefer to maintain a balanced competitive environment among three players.

So, how long will this cycle last?

Candice Hu

Samsung Storage Products Marketing Manager

For 2026, all capacity is already fully sold, leaving a supply-demand gap of 30% to as much as 50%. The shortage will persist in 2027, with meaningful improvement unlikely until 2028. This is a supply shortage expected to last the next two to three years.

Meanwhile, demand shows no signs of slowing. The upcoming surge in AI inference and agents, followed by demand for robotics and physical AI, will further drive exponential growth in storage throughput and capacity requirements.

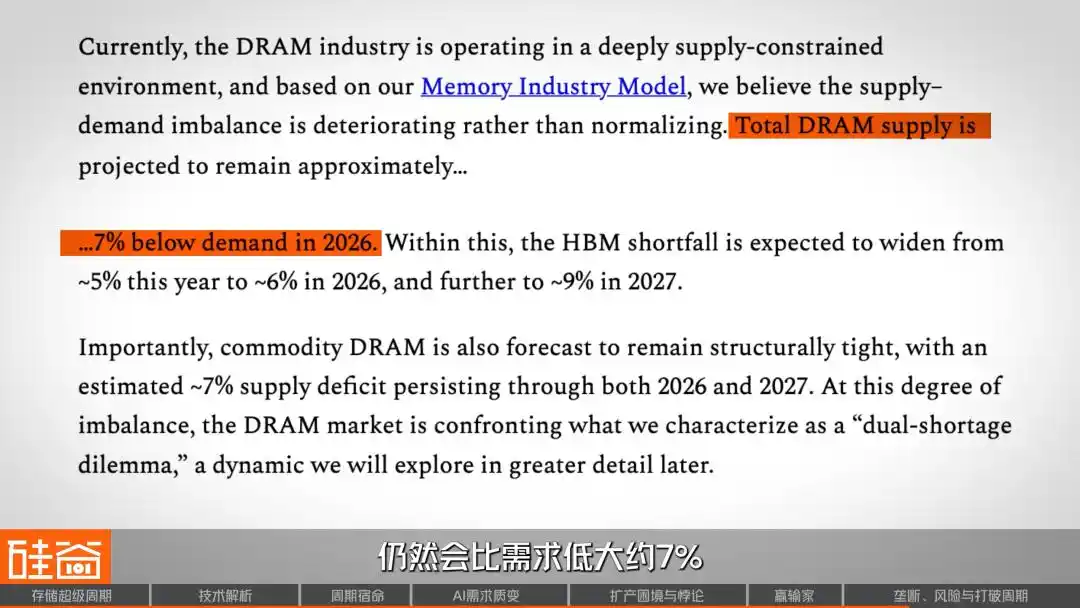

SemiAnalysis believes that total DRAM supply will still be approximately 7% lower than demand in 2026. On the HBM front, the supply-demand gap is expected to widen further through 2027. As for new supply, meaningful capacity additions are more likely to gradually emerge in the second half of 2027. According to Nomura Securities’ estimates, actual increases in production volume may not materialize until 2028.

But an even more important question is whether this industry will bid farewell to cycles for good. From a Wall Street perspective, Rob offered a deeply insightful view in the interview:

Rob Li

Managing Partner at Amont Partners, New York

This cycle could last for a very long time, or even transform the industry from a cyclical one into a structurally growing industry—no longer cyclical at all. If the industry now undergoes a major shift, evolving from a cyclical sector into a non-cyclical one characterized by stable, structural growth, the entire market’s perception of this industry could undergo a qualitative transformation.

For cyclical industries, a P/E ratio of 10 is already considered high; but if they become structurally growing industries and sustain that growth for many years, their P/E ratios could double again.

So, where are we currently in this supercycle?

The horizontal axis of this chart represents a timeline with the trough of each of the past five cycles set as zero, while the vertical axis shows market price gains. As shown, each cycle progresses through four phases: pessimism, skepticism, optimism, and euphoria, before returning to pessimism. In the current cycle, represented by the red line, we have entered the "optimism" phase, and the gain has far exceeded that of any previous cycle.

This aligns with what Rob just said—what if AI truly disrupts this cycle? It would mean that even without profit growth, simply reclassifying the valuation from a cyclical stock to a growth stock could double the stock price. Just as no one would describe Apple’s smartphone business over the past 20 years as a cyclical industry, if storage could reach a similar point, it would represent a paradigm shift in the entire semiconductor investment framework.
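
The rerating argument is simple arithmetic: a share price equals earnings per share times the P/E multiple, so doubling the multiple doubles the price even when earnings stay flat. A minimal sketch follows, in which the earnings figure and the growth-stock multiple of 20 are hypothetical illustration values; only the P/E of 10 as a cyclical reference point comes from the interview.

```python
def implied_price(eps, pe_multiple):
    """Share price implied by earnings per share and a P/E multiple."""
    return eps * pe_multiple


eps = 10.0  # hypothetical earnings per share, held constant

as_cyclical = implied_price(eps, pe_multiple=10.0)  # valued as a cyclical stock
as_growth = implied_price(eps, pe_multiple=20.0)    # hypothetical growth-stock multiple

# Ratio of the two prices with identical earnings
print(as_growth / as_cyclical)  # 2.0
```

The same logic runs in reverse: if the market decides the industry is cyclical after all, the multiple can compress just as quickly without any change in profits.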

However, demand in the storage industry also carries uncertainty. Demand-side variables stem not only from macroeconomic factors; technology itself may also reshape the supply-demand relationship.

For example, at the end of March, Google released a new algorithm called TurboQuant, claiming it to be an efficient AI memory compression algorithm. Its release immediately caused a sensation in Silicon Valley’s tech community and triggered a sharp decline across the entire storage sector.

But soon, voices within the industry countered that this crash was a misunderstanding. First, the paper was published a year ago and has itself been subject to academic controversy. Moreover, this algorithm has only been validated on smaller models such as Gemma and Mistral, with no testing on models larger than 70B, MoE architectures, or contexts with millions of tokens—scenarios where AI memory demands truly surge. Additionally, technical experts pointed out that TurboQuant only compresses the KV Cache in GPU memory during inference—one of the three major sources of AI memory demand—while having absolutely no impact on the training process.
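
To see why compressing only the KV cache leaves the rest of the memory footprint untouched, a rough sizing sketch helps. It uses the standard estimate that a transformer's KV cache holds one key and one value vector per layer, per KV head, per token. The model dimensions below are hypothetical (loosely in the range of a 70B-class model), and the 16-bit and 4-bit widths are illustrative settings, not TurboQuant's published figures.

```python
GIB = 1024 ** 3


def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bits_per_value):
    """Approximate KV cache size for one sequence, in bytes."""
    # Factor of 2: each layer stores both a key and a value tensor.
    values = 2 * layers * kv_heads * head_dim * tokens
    return values * bits_per_value / 8


def weight_bytes(params, bits_per_weight=16):
    """Approximate size of the model weights, in bytes."""
    return params * bits_per_weight / 8


# Hypothetical model shape, for illustration only.
cfg = dict(layers=80, kv_heads=8, head_dim=128)
weights = weight_bytes(70e9)  # unaffected by KV cache compression

for tokens in (8_192, 1_000_000):
    full = kv_cache_bytes(tokens=tokens, bits_per_value=16, **cfg)
    small = kv_cache_bytes(tokens=tokens, bits_per_value=4, **cfg)
    print(f"{tokens:>9} tokens: KV cache {full / GIB:.1f} GiB at 16-bit, "
          f"{small / GIB:.1f} GiB at 4-bit; weights stay at {weights / GIB:.1f} GiB")
```

In this sketch the cache grows linearly with context length, so the savings matter most at very long contexts, which is exactly the regime the critics say the algorithm has not been tested in. The weights, and everything needed for training, are the same size either way.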

In short, the paper and its algorithm have drawn heavy criticism. But here’s the interesting part: a controversial, year-old paper was enough to trigger such a severe sell-off. Doesn’t that itself suggest something? Does it mean that market confidence in the storage sector has become so extreme that it’s now incredibly fragile? Keep in mind that, prior to this plunge, SanDisk had already surged 200% since the start of 2026, and Micron had risen over 80%.

Some short-sellers have directly pointed out that SanDisk’s valuation is hard to justify, given its $92 billion market capitalization versus an expected net profit of only $6 billion in 2026. Micron faces similar scrutiny: despite posting its best-ever financial results, its capital expenditures for fiscal year 2026 have surged 68% year-over-year to $20 billion—essentially a bold bet that memory demand will continue to grow.

Ultimately, the TurboQuant paper was merely a spark; the real powder keg was the extreme valuations accumulated over the past two years, where any signal suggesting lower demand could trigger a panic sell-off.

These algorithm-level advances are precisely the risks that are hardest to price in advance within the "super cycle" narrative, and Rob spelled out the ultimate risk plainly.

Rob Li

Managing Partner at Amont Partners, New York

Concerns about the storage industry will persist until it is ultimately proven to become a stable, upward-trending, Apple-like business. The first concern is that AI could fail, leading to widespread collapse, since current growth primarily stems from AI. If one day AI proves ineffective and people realize it has little value, then all the future projections you’ve made would amount to nothing—reducing to zero.

Therefore, current optimism about a "super cycle" rests on one key assumption: that demand for AI is real and sustainable. If AI were to experience a bubble burst one day, the storage industry would find it difficult to remain unaffected. This sword of Damocles will remain hanging until the industry truly proves itself to be a stable, Apple-like growth business.

SemiAnalysis defines this cycle as a "once-in-forty-years shortage." But a more valuable perspective might be: the memory chip industry stands at a crossroads—it could either follow its four-decade pattern, slipping into another downturn after price peaks, or, driven by the structural demand from AI, truly break free from its cyclical fate and become a consistently growing industry.

At least through 2026, the answer seems to be leaning toward the latter. Production capacity from the three major storage manufacturers has been fully sold out, orders for upstream equipment suppliers have been booked through 2027, customers are paying advance deposits and signing legally binding long-term contracts, and even a Japanese toilet manufacturer has had its fate transformed as a result.

But history has never lacked mockery of the claim that “this time is different.” The only certainty is that, regardless of whether this cycle can be broken, it has irrevocably reshaped the global power dynamics of the technology industry. In this hunger game for memory chips, whoever controls the supply holds the power to shape the discourse of the AI era.