Article by Sleepy.md

Unfortunately, in this era, the more diligently and unreservedly you work, the faster you reduce yourself to a skill that AI can replace.

Over the past two days, trending lists and media channels have been flooded with “colleague.skill.” As this incident continues to gain traction across major social platforms, public attention has been overwhelmingly swept up by grand anxieties surrounding “AI layoffs,” “capital exploitation,” and the “digital immortality of workers.”

These anxieties are real, but what worries me most is a recommendation written in the project's README:

The quality of the raw materials determines the quality of the skill: prioritize collecting the person's long-form written content > decision-related responses > casual messages.

Those who work hardest are precisely the ones the system can distill most completely and replicate most faithfully.

They are the ones who, after every project concludes, still sit down to write reflective reports; who, when disagreements arise, take the time to type out detailed messages in the chat, honestly laying out their decision-making logic; and who, out of a deep sense of responsibility, meticulously record every detail of their work in the system.

Seriousness, once the most revered workplace virtue, has now become a catalyst accelerating workers' transformation into AI fuel.

Exhausted workers

We need to reconsider a word: context.

In everyday usage, context is the backdrop of communication. But in AI, and especially in the fast-growing world of AI agents, context is the fuel that powers the engine, the blood that sustains the pulse, and the only anchor that allows models to make precise judgments amid chaos.

An AI stripped of context, no matter how astonishing its parameter count, is merely a search engine afflicted with amnesia. It cannot recognize who you are, cannot sense the hidden currents beneath business logic, and has no way of knowing the long, drawn-out negotiations and trade-offs you endured within a web of resource constraints and interpersonal dynamics before making a decision.

The reason "colleague.skill" has stirred such a massive uproar is that it coldly and precisely targets the mine that hoards vast amounts of high-quality context: modern enterprise collaboration software.

Over the past five years, China’s workplace has undergone a quiet yet profound digital transformation. Tools like Feishu, DingTalk, and Notion have become vast corporate knowledge bases.

Take Feishu as an example. ByteDance has publicly stated that an enormous number of documents are generated internally each day, and these dense strings of characters faithfully capture every brainstorming session, every heated meeting debate, and every hard-fought strategic compromise among its more than 100,000 employees.

This digital penetration far exceeds that of any previous era. Once, knowledge was alive—with warmth, nestled in the minds of veteran employees, drifting through casual chats in the break room; now, all human wisdom and experience are forcibly stripped of their vitality and coldly archived in the server matrices of the cloud.

In this system, if you don’t document your work, it remains invisible, and new colleagues cannot collaborate with you. The efficient operation of modern enterprises is built upon the daily cycle in which every employee contributes context to the system.

Serious workers, driven by diligence and goodwill, openly lay bare their thought processes on these impersonal platforms. They do so to make the team’s gears mesh more smoothly, to strive to prove their worth to the system, and to desperately carve out a place for themselves within this complex corporate behemoth. They are not willingly surrendering themselves—they are simply clumsily yet earnestly adapting to the survival rules of the modern workplace.

But it is precisely this context, left behind for human collaboration, that becomes the perfect fuel for AI.

Feishu’s admin console includes a feature that allows super administrators to bulk export members’ documents and communication records. This means that the project retrospectives and decision-making logic you spent three years crafting through countless late nights can, in just a few minutes, be effortlessly bundled into a cold, lifeless compressed file via a single API call.

When a person is reduced to an API

With the popularity of "colleague.skill," undesirable derivatives have begun appearing on GitHub's Issues section and various social media platforms.

Some have created an "Ex.skill," feeding years of WeChat chat history into an AI so that it keeps arguing with or comforting them in that familiar tone; others have built a "White Moonlight.skill," reducing their unattainable longing to a cold interpersonal simulation and endlessly rehearsing tentative, probing phrases in a calculated pursuit of the optimal emotional outcome; still others have crafted a "Paternalistic Boss.skill," digesting a boss's oppressive, manipulative ("PUA") remarks in advance in the digital space and constructing for themselves a sorrowful psychological defense.

The use of these skills has long since moved beyond the realm of work efficiency. Unconsciously, we have become adept at applying the cold logic of tool manipulation to dismember and objectify living, breathing human beings.

The German philosopher Martin Buber once proposed that the foundation of human relationships consists of only two distinctly different modes: "I and Thou" and "I and It".

In the encounter of "I and Thou," we transcend prejudice and regard each other as complete, dignified beings. This bond is wide open, brimming with vibrant unpredictability, and precisely because of its sincerity, it is especially fragile. Yet once we fall into the shadow of "I and It," living people are reduced to objects that can be deconstructed, analyzed, and categorized with labels. Under this purely utilitarian gaze, the only thing we care about becomes: "What use is this thing to me?"

The emergence of products such as "Ex.skill" signifies that the instrumental rationality of "I and It" has thoroughly invaded the most intimate emotional domains.

In a real relationship, people are multidimensional and full of wrinkles, constantly flowing with contradictions and rough edges; their responses change based on specific situations and emotional interactions. Your ex may react very differently to the same phrase upon waking in the morning versus after working late at night.

But when you distill a person into a skill, what you extract is merely the residual function that happened to be “useful” to you and could “produce utility” within that specific bond. The originally warm, self-aware individual, with their own joys and sorrows, has their soul completely drained in this cruel purification, transformed into a “functional interface” that you can plug in or unplug at will.

It must be acknowledged that AI did not invent this chilling coldness out of thin air. Long before AI emerged, we were already accustomed to labeling others and precisely measuring the "emotional value" and "network weight" of every relationship. On dating platforms, we reduce a person's attributes to rows in a table; in the workplace, we categorize colleagues as "hard workers" or "slackers." AI merely made explicit what was once implicit: the functional extraction inherent in human interactions.

A person is flattened, leaving only the slice that asks, “What use is this to me?”

Digital patina

In 1958, Hungarian-British philosopher Michael Polanyi published "Personal Knowledge," in which he introduced the highly insightful concept of tacit knowledge.

Polanyi had a famous assertion: "We know more than we can tell."

He gave the example of learning to ride a bicycle. A skilled rider, gliding effortlessly with the wind, perfectly maintains balance with every shift in gravity, yet cannot precisely convey to a beginner the subtle bodily intuition of that moment using dry physics formulas or pale words. He knows how to ride, but he cannot articulate it. This kind of knowledge that cannot be encoded or verbalized is tacit knowledge.

The workplace is filled with this kind of tacit knowledge. A senior engineer, when troubleshooting a system failure, might pinpoint the issue with just a glance at the logs—but he struggles to document this “intuition” built on thousands of trial-and-error experiences. A skilled salesperson, who suddenly falls silent during a negotiation, creates a sense of pressure and timing that no sales manual can capture. An experienced HR professional, during an interview, can detect inconsistencies in a candidate’s resume from just a half-second avoidance of eye contact.

"colleague.skill" can only extract explicit knowledge that has been written down or spoken aloud. It can capture your reflection documents, but not the doubts you had while writing them; it can copy your decision responses, but not the intuition behind your decisions.

What the system distills is always just a shadow of a person.

If the story ended here, it would merely be another clumsy imitation of humanity by technology.

But when a person is distilled into a skill, that skill does not remain static. It is used to reply to emails, write new documents, and make new decisions. In other words, these AI-generated shadows begin to create new contexts.

These AI-generated contexts will be stored in Feishu and DingTalk, becoming training material for the next round of distillation.

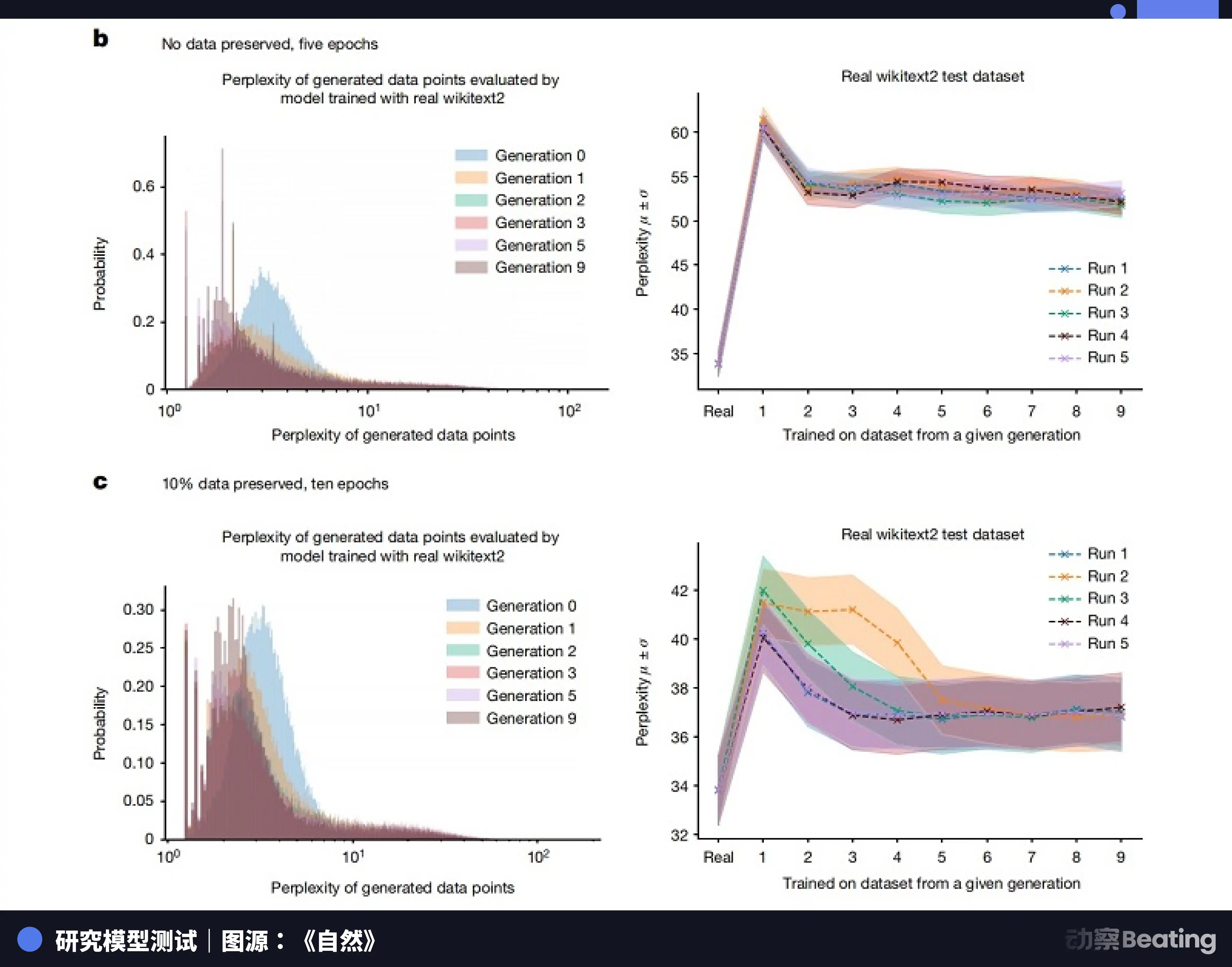

Back in 2023, research teams from the University of Oxford and the University of Cambridge jointly published a paper on "model collapse." The study found that when AI models are iteratively trained on data generated by other AI systems, the distribution of data becomes increasingly narrow. Rare, marginal, yet profoundly authentic human traits are rapidly erased. After just a few generations of synthetic data training, models completely forget long-tail, complex real human data and instead produce highly mundane and homogenized outputs.

In 2024, Nature also published a research paper indicating that training future generations of machine learning models on AI-generated datasets will severely pollute their outputs.
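The narrowing these studies describe can be illustrated with a toy simulation. The sketch below is not the method used in either paper; it is a minimal stand-in under stated assumptions: the "model" is just a frequency table, "training" means counting tokens, and "generating" means resampling from those counts. The function name `simulate_collapse` and all parameters are hypothetical choices made for illustration. Because each generation can only emit tokens it saw in the previous one, a rare token that is missed once is gone for good.

```python
import random
from collections import Counter


def simulate_collapse(vocab_size=200, sample_size=500, generations=30, seed=0):
    """Return the number of distinct tokens surviving at each generation.

    Toy model (an assumption, not the papers' setup): each generation is
    "trained" only on the previous generation's output.
    """
    rng = random.Random(seed)

    # Generation 0: "human" data with a long tail (Zipf-like weights),
    # so a few tokens are common and most are rare.
    tokens = list(range(vocab_size))
    weights = [1.0 / (rank + 1) for rank in tokens]
    data = rng.choices(tokens, weights=weights, k=sample_size)

    history = [len(set(data))]
    for _ in range(generations):
        # "Train": estimate token frequencies from the current data.
        counts = Counter(data)
        seen = list(counts.keys())
        freqs = [counts[token] for token in seen]
        # "Generate": sample the next dataset from the fitted frequencies.
        data = rng.choices(seen, weights=freqs, k=sample_size)
        history.append(len(set(data)))
    return history


if __name__ == "__main__":
    history = simulate_collapse()
    print("distinct tokens per generation:", history)
```

In this toy setting the count of distinct tokens can never rise from one generation to the next, and the rare tokens in the tail are the first to vanish, which is the same direction of drift the article describes.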

It’s like those meme images circulating online—originally a high-resolution screenshot, passed around and compressed countless times. Each share loses some pixels and adds more noise, until the image becomes blurry and covered in digital patina.

When the authentic human context, infused with tacit knowledge, is exhausted, and the system can only train itself on weathered shadows, what remains?

Who is erasing our traces?

What remains is only correct nonsense.

When the river of knowledge dries up into an endless cycle of AI chewing on AI, everything the system produces will become extremely standardized and extremely safe, yet irrevocably hollow. You will see countless perfectly structured weekly reports and flawless emails, but not a single breath of humanity, not a single truly valuable insight.

This great collapse of knowledge is not because human brains have become dumber; the true tragedy is that we have outsourced the right to think and the responsibility to preserve context to our own shadows.

A few days after "colleague.skill" went viral, a project named "anti-distill" quietly appeared on GitHub.

The author of this project did not attempt to attack large models or write any grand manifestos. He simply provided a small tool that helps workers automatically generate, in Feishu or DingTalk, long texts that seem reasonable but are logically noisy and effectively useless.

His goal was simple: hide his core knowledge before the system distilled it. Since the system favored scraping "long-form content written actively," he fed it a pile of meaningless gibberish.

This project hasn’t gone viral like "colleague.skill"; in fact, it feels small and powerless. Using magic to defeat magic still operates within the established rules of capital and technology. It cannot reverse the growing trend of systems becoming more dependent on AI and increasingly neglecting real people.

But this does not prevent this project from becoming the most tragically poetic and profoundly metaphorical scene in the entire absurd play.

We work tirelessly to leave traces within the system, write detailed documentation, and make meticulous decisions, trying to prove our existence and value within this vast modern corporate machine. Yet we don’t realize that these very earnest traces will ultimately become the eraser that wipes us away.

But on the other hand, this may not be a complete dead end.

Because what that eraser wipes away is always the "you of the past." A skill packaged as a file—no matter how sophisticated its extraction logic—is fundamentally just a static snapshot. It is frozen at the moment of export, reliant on outdated inputs and trapped in a loop of predefined processes and logic. It lacks the instinct to confront the chaos of the unknown and the capacity to evolve through real-world setbacks.

When we let go of those highly standardized, rigidly established experiences, we free up our own hands. As long as we continue to reach outward and constantly break down and rebuild the boundaries of our understanding, that shadow lingering in the clouds will forever be forced to follow in our footsteps.

Humans are fluid algorithms.
