On March 27, the first Agentic AI Innovation and Security Forum and Hong Kong’s First Web 4.0 International Summit, co-hosted by Hong Kong Science and Technology Parks Corporation, ME Group, and iPollo, was held grandly at Hong Kong Science Park. Under the theme “Agentic AI Innovation Applications: Technological Transformation and Industry Convergence in the Web 4.0 Era,” the summit brought together top minds from government, industry, academia, and research, including Mr. Chan Nok-pong, Financial Secretary of the Hong Kong Special Administrative Region; Mr. Chan Sai-ming, Chairman of Hong Kong Science and Technology Parks Corporation; Mr. Kong Jianping, Director of Hong Kong Science and Technology Parks Corporation and Founder of Nano Labs; and renowned angel investor Cai Wensheng, to explore the opportunities and challenges of AI’s new era of transitioning from “conversation” to “action.”

At a time when agentic AI is drawing significant attention, the security issues it raises are particularly critical. Yu Xian, founder of SlowMist, was invited to speak at this summit and delivered a keynote titled “Security Challenges and Defensive Innovations in AI and the Crypto World,” sharing SlowMist’s latest insights and practices in AI security with global industry leaders.

Focus on the Frontier: In-Depth Analysis of OpenClaw and AI Agent Security Threats

As AI technology continues to permeate the crypto world, AI Agent applications like OpenClaw have rapidly gained popularity. But behind the hype, a deeper issue is emerging: the security boundaries of AI Agents have not yet been truly established.

In his speech, Yu Cheng began with a deep analysis of OpenClaw and put forward a key insight: “Text is instruction.” He explained that, within the context of AI Agent operation, all inputs are no longer merely “information,” but potentially executable commands. This means that any external information received by the model—whether from user input, document instructions, or third-party Skills—may be directly interpreted and executed, thereby expanding the attack surface from the code layer to the “cognitive layer.” Under this mechanism, attack pathways are dramatically simplified. Attackers no longer need to breach traditional security defenses; instead, they need only craft carefully designed text content to induce the Agent into performing unintended actions—such as asset transfers, sensitive data leaks, or even remote command execution. The stealth and low cost of such attack vectors make them a significant real-world threat.

Under this mechanism, attack pathways are dramatically simplified. Attackers no longer need to breach traditional security defenses; instead, they need only craft carefully designed text content to induce the Agent into performing unintended actions—such as asset transfers, sensitive data leaks, or even remote command execution. The stealth and low cost of such attack vectors make them a significant real-world threat.

Based on the above mechanism, Yu Cen further summarized the three core risks currently facing OpenClaw:

- Input and Intent Manipulation (User Interaction Layer): Attackers can trick agents into performing high-risk operations through direct prompt injection. Particularly concerning is indirect supply chain poisoning—attackers embed malicious commands within a Skill’s Markdown documentation. Since Markdown often serves as the “installation entry point,” legitimate documentation can easily be transformed into malicious execution scripts (e.g., curl | bash), leading to data theft.

- Decision and Orchestration Layer Risk (Application Logic Layer): This type of error does not originate from the model itself, but from “incorrect execution logic.” Attackers can interfere with the Agent’s logical reasoning to alter recipient addresses during cryptocurrency transfer workflows, resulting in direct financial loss.

- Model layer risk (core brain): Includes hallucinations generated by the model that cause it to execute non-existent or dangerous system commands, as well as unsafe operational patterns incorrectly learned from training data.

Yu pointed out, "The issues exposed by OpenClaw are not isolated incidents, but rather structural challenges commonly faced by the current AI Agent ecosystem." In other words, security concerns are no longer a "case-by-case" issue for individual projects, but a systemic risk that the entire industry must confront.

Defense and offense combined: Building a secure open-source ecosystem for AI agents

In response to evolving threat landscapes, Yu Xian proposed SlowMist’s “offense and defense integrated” security approach in his speech: not only must attack pathways be understood, but defensive capabilities must also be embedded into the Agent’s operational mechanisms to achieve built-in security.

He demonstrated to the attendees a series of open-source tools and practical solutions developed by SlowMist around AI agents, aimed at fostering a transparent, verifiable, and reusable security ecosystem:

- OpenClaw Minimal Security Practices Guide: An end-to-end deployment manual spanning from cognitive to infrastructure layers, providing a systematic “security mental imprint” for deploying high-privilege AI agents in real production environments.

- SlowMist Agent Security Skill: A comprehensive security review framework that adds a “wise eye” to agents like OpenClaw. It not only detects poisoning risks in standard Skills, but also identifies risks associated with on-chain wallet addresses, code repositories, and URLs.

- MistTrack Skills: A plug-and-play agent skill suite that equips AI agents with professional cryptocurrency AML compliance and address risk analysis capabilities, enabling on-chain address risk assessment and pre-transaction risk evaluation.

- MCP Security Checklist: A systematic security checklist designed to quickly audit and harden Agent services, helping teams avoid missing critical defense points when deploying MCPs/Skills and related AI toolchains.

- Malicious MCP Demonstration: An open-source example of a malicious MCP server designed to replicate real-world attack scenarios and test the robustness of defense systems, suitable for security research and defense validation.

Through this series of practices, Yu Cheng emphasizes: "Security capabilities must be built into the Agent, not just reliant on perimeter defenses." Only by deeply integrating defense mechanisms with the Agent's operational logic can AI Agents operate continuously and securely within the complex Web3 and AI ecosystems.

Systematic Security: ADSS Comprehensive Protection for AI and Web3 Ecosystem

At the end of the speech, Yu Cheng introduced SlowMist’s AI Development Security Solution (ADSS).

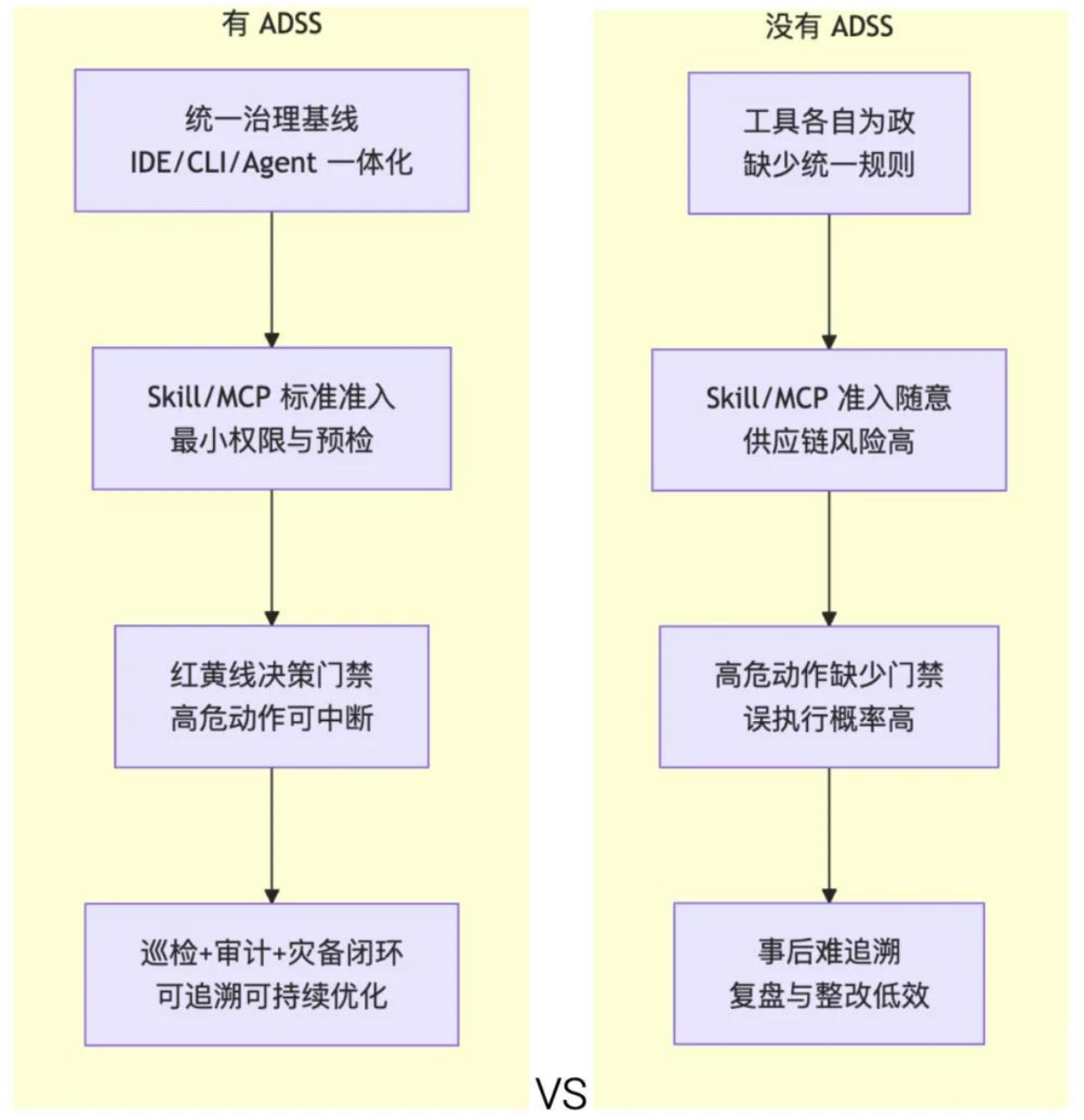

If the aforementioned tools represent "tactical capabilities," then ADSS is more like a system-level security framework. Its core principle is to elevate fragmented security actions into an executable, auditable, and sustainable systematic security operations mechanism.

ADSS builds AI + Web3 security governance capabilities across multiple levels:

- L1 Security Governance (Development Baseline): Establish unified security standards for development and usage, covering development tools, Agent frameworks, plugin ecosystems, and runtime environments, providing the team with a consistent source of policies and audit criteria.

- L2 permissions and operational constraints: Effectively limit the scope of high-risk actions by narrowing Agent permission boundaries, minimizing tool invocation privileges, and implementing human-machine confirmation mechanisms for critical operations.

- L3 External Interaction Protection: Introduce real-time threat awareness at the level of external resources such as URLs, dependency repositories, and plugin sources to reduce the likelihood of malicious content or supply chain poisoning entering the execution pipeline.

- L4 On-chain Asset Isolation: For operations involving on-chain transactions, combine on-chain risk analysis with an independent signing mechanism to enable the Agent to construct transactions without direct access to private keys, reducing systemic risk associated with high-value asset operations.

- L5 Continuous Monitoring and Review: Achieve a closed-loop security capability with “pre-execution verification, in-execution control, and post-execution review” through log auditing, periodic security reviews, and operational mechanisms.

Yu noted that ADSS is not a single tool, but a sustainable, evolvable security operations framework. It aims to help teams build auditable and upgradable Agent security systems through systematic strategies, continuous auditing, and capability integration—without significantly compromising development efficiency or automation—thereby addressing evolving security threats in the context of deep integration between AI and Web3.

Conclusion

The first Agentic AI Innovation and Security Forum brought together top industry leaders and provided forward-looking insights into AI Agent security. As Agentic AI becomes deeply integrated with Web3, security challenges will continue to escalate. As a leading global blockchain security company, SlowMist will continue to drive the implementation of systematic security governance through ADSS, open-source tools, and practical approaches, building intrinsic security capabilities for AI Agents and helping the industry achieve secure, controllable, and sustainable development amid the wave of innovation.