Written by: Vaidik Mandloi, TheTokenDispatch

Compiled by: Blockchain for Beginners

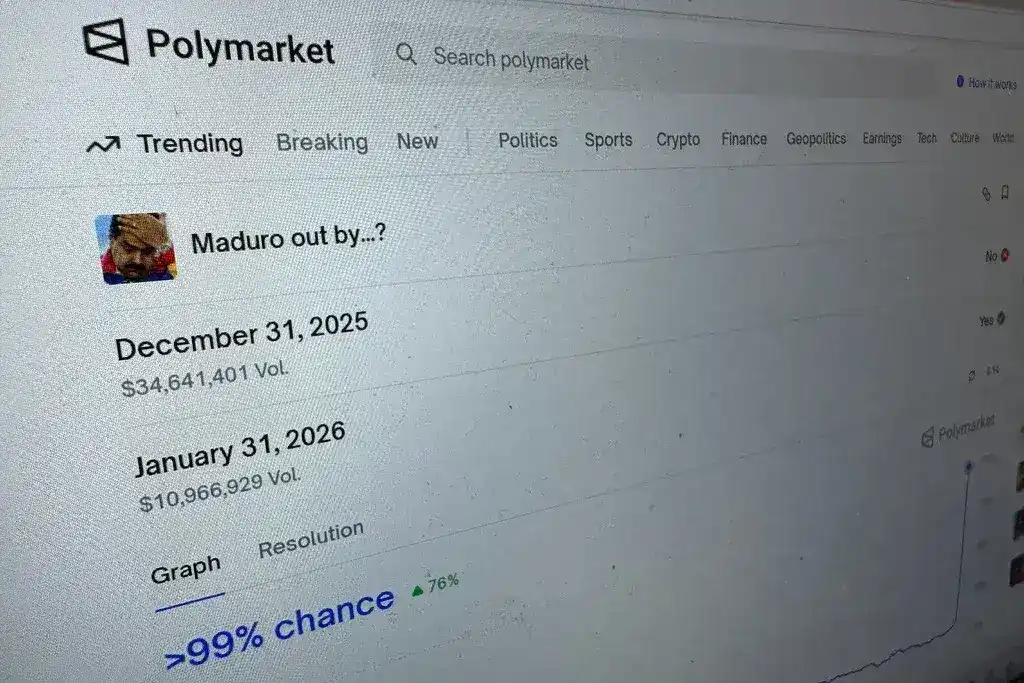

In January 2026, an anonymous trader placed a series of bets on the cryptocurrency trading platform Polymarket, wagering that Venezuelan President Nicolás Maduro would be captured. The total stake amounted to approximately $34,000. Days later, U.S. special forces carried out the capture operation, and the trader cashed in over $400,000 in winnings. The Secretary of State later confirmed that the operation was so sensitive that it did not require notification to Congress. Consider this: the U.S. Congress, responsible for authorizing military actions, was completely unaware. The American public was also in the dark. Yet, someone sitting behind a screen on a cryptocurrency betting platform had access to enough information to risk real money—and their prediction came true.

Stories like this have become the standard pitch of today’s prediction market industry. Shayne Coplan, CEO of Polymarket, calls it the “truth machine.” The argument is that because traders have their own money at stake, their collective bets reflect where the world is heading more accurately than any poll, expert, or commentator, none of whom face consequences for being wrong. On this view, Polymarket’s odds are the closest thing to truth you can find.

The pitch seems to be working. Prediction markets are no longer a niche corner of the internet where a small group of gamblers bet for thrills. A recent analysis of 364 TikTok videos mentioning prediction markets found that 68% of them had nothing to do with gambling. People are not betting; they are citing odds from these platforms in political debates, just as they once cited polls. Polymarket appears in about 70% of these videos. A 22-year-old TikTok user posts a political video, cites odds from a crypto betting platform to predict a real-world outcome, and a significant number of viewers agree.

This is remarkable. Two years ago, none of it would have seemed believable. But one question that no one has seriously considered is: are these probabilities really worthy of such trust?

So I want to ask: how accurate are these markets really? What happens when odds begin to influence the very events they are meant to predict? What would the future look like if the entire world treated betting odds as truth?

How do you score a prediction market?

Before analyzing the data, we first need to understand how to measure whether a prediction market is any good. Most people have never considered this question, and without an answer to it, all the marketing claims about Polymarket and Kalshi amount to nothing more than hype.

One scoring method is called the Brier score. Developed by meteorologist Glenn Brier in 1950, it was designed to evaluate the quality of weather forecasts, as meteorologists were (and still are) among the first professionals to rely on probabilistic predictions for their livelihood. The method is very simple: take the gap between the probability you stated and what actually happened (1 if it happened, 0 if it did not), square it, and average over all your forecasts. Suppose you predict a 90% chance of rain tomorrow, and it does rain. That is a good prediction, and your Brier score will be low. Now suppose you predict a 90% chance of rain tomorrow, but it turns out to be completely sunny. That is a poor prediction, and your Brier score will be high. A Brier score of 0 means your prediction was perfectly accurate. A score of 0.25 is what you get by answering 50% to everything, which is no better than flipping a coin. Any score above 0.25 means you’re worse than random guessing.
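
To make the scoring rule concrete, here is a minimal Python sketch of the Brier score for yes/no questions. The function name `brier_score` and the sample forecasts are illustrative assumptions, not anything published by Polymarket or Brier.fyi.

```python
def brier_score(forecasts, outcomes):
    """Mean squared gap between stated probabilities and 0/1 outcomes."""
    if not forecasts or len(forecasts) != len(outcomes):
        raise ValueError("need two non-empty lists of equal length")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# The rain example from the text: a 90% forecast, scored against each outcome.
rain_hit = brier_score([0.9], [1])   # it rained
rain_miss = brier_score([0.9], [0])  # it stayed sunny

# Answering 50% to every question, whatever actually happens.
coin_flip = brier_score([0.5, 0.5, 0.5, 0.5], [1, 0, 0, 1])

print(f"90% forecast, rain:    {rain_hit:.2f}")
print(f"90% forecast, no rain: {rain_miss:.2f}")
print(f"always 50%:            {coin_flip:.2f}")
```

The three printed values are 0.01, 0.81, and 0.25, which is where the coin-flip benchmark of 0.25 comes from.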

Why does this matter? Because when Polymarket tells you its market gives Trump a 60% chance of winning, and he ultimately wins, it sounds impressive in headlines, but statistically, one correct prediction means almost nothing. You need to evaluate the market’s complete historical record across thousands of questions. That’s what the Brier score does. It’s the only honest way to assess whether these markets are truly skilled at predicting outcomes.

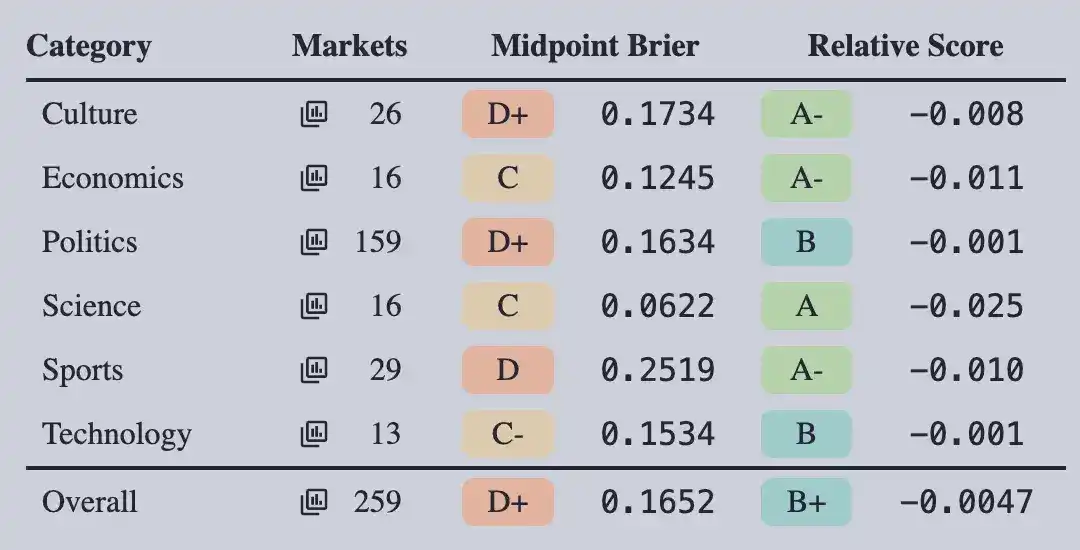

A website called Brier.fyi does exactly that. It has analyzed over 84,000 questions across Polymarket, Kalshi, Manifold, and Metaculus. Polymarket’s Brier score is 0.047, which is a very good score. Put simply, picture a forecaster who says, “I’m 90% confident this will happen,” and who delivers that level of accuracy every time.

Source: Brier.fyi

But this is where the notion of the “truth machine” begins to unravel.

That headline number is an average across every market listed on Polymarket, and the averaging matters a great deal here. Once the scores are broken down by the categories users actually bet on, the ratings swing widely.

Science and economics? Polymarket receives an A rating. These markets are based on CPI data, Federal Reserve interest rate decisions, and GDP figures. They perform well because traders tend to be financially literate, the data is verifiable, and institutional investors with genuine expertise in the field are putting real money behind their positions.

Politics? B+. Decent enough, mainly propped up by the massive presidential election markets, where billions of dollars flow in. Culture and technology? Worse. Much worse.

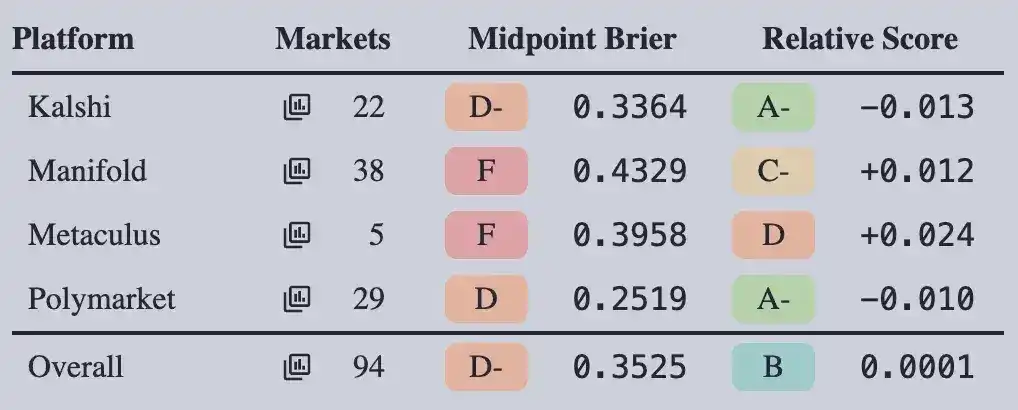

Next is sports. The overall Brier score for sports prediction markets across all platforms is 0.325, which is a D-. Keep in mind that a coin flip scores 0.25. Taken as a whole, sports prediction markets perform worse than flipping a coin to guess each outcome. Think about that.

The category that attracts the most casual bettors—and one that Kalshi has actively expanded into (at one point, about 90% of Kalshi’s trading volume was concentrated in sports betting)—is a category where markets have proven to be unreliable.

Now let’s look at individual markets, where the story becomes even more compelling.

Polymarket launched a market on whether Bitcoin would reach $100,000 before January 2025. Bitcoin ultimately did reach $100,000. The market landed on the correct outcome, but it mispriced the probability for most of its life, sitting at low confidence for months before surging to near-certainty in the final stages. Its Brier score was 0.4909, which is an F. Remember, 0.25 is the coin-flip line; anything above it means you would have been better off guessing randomly. This market’s Brier score is nearly double that threshold.
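
A short Python sketch shows how a market can end on the right answer and still score badly. It assumes the score is the squared error of the market price averaged over every day the market was open; the article does not spell out Brier.fyi’s exact method, so treat that as an assumption. The price path below is invented for illustration and is not real Polymarket data.

```python
def time_averaged_brier(daily_prices, outcome):
    """Squared error of the market price, averaged over the market's life.

    Assumed scoring method for illustration; a real scorer may weight days differently.
    """
    if not daily_prices:
        raise ValueError("need at least one price")
    return sum((p - outcome) ** 2 for p in daily_prices) / len(daily_prices)


# Hypothetical path: 90 days priced at 30%, then 10 days at 97%.
prices = [0.30] * 90 + [0.97] * 10
outcome = 1  # the event did happen

final_only = (prices[-1] - outcome) ** 2          # judged on the last price alone
whole_life = time_averaged_brier(prices, outcome)  # judged on every day

print(f"final price only: {final_only:.4f}")
print(f"whole lifetime:   {whole_life:.4f}")
```

Judged on its last price, this hypothetical market looks almost perfect. Judged over its whole life, it scores about 0.44, well past the 0.25 coin-flip line, because it spent most of its time leaning the wrong way.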

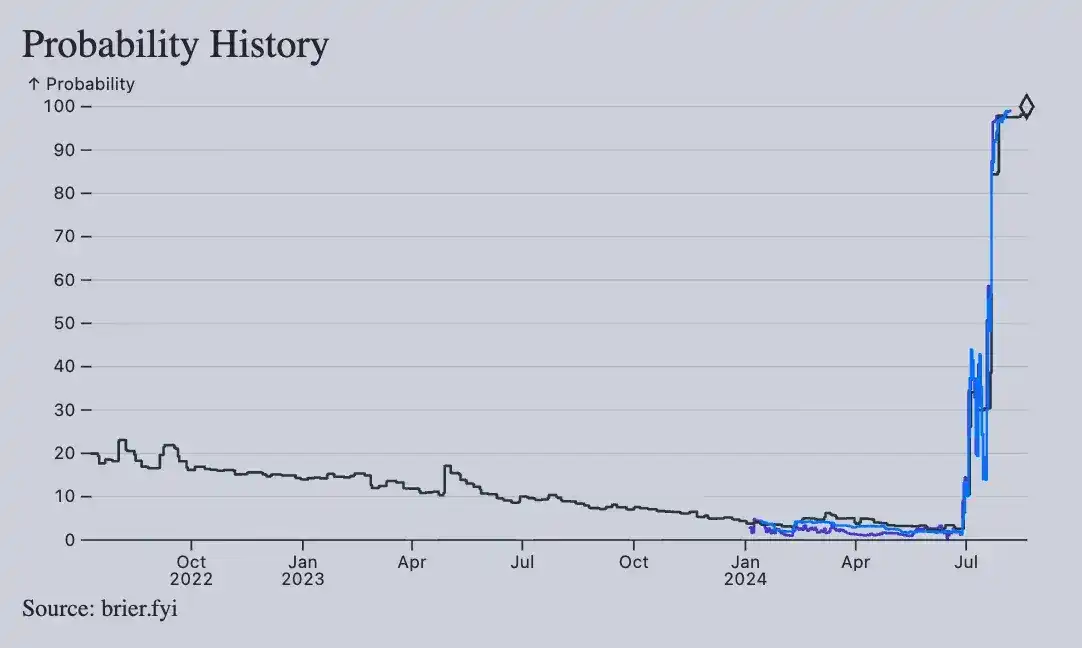

The market on Kamala Harris winning the 2024 Democratic presidential nomination was even more erratic. She ultimately secured the nomination, so the market once again landed on the correct outcome. Ironically, its Brier score is 0.9098. That number is abysmal, and it is hard to overstate how bad it is. The market was confidently wrong for so long that even getting the final result right cannot redeem it. If you had relied on this market as a basis for decision-making, you would have been misled throughout the entire campaign cycle, right up until the very moment the outcome was finalized.

Now let’s turn to the other side, because this isn’t a simple story. The 2024 U.S. presidential election was a major win for prediction markets. At the time, all major polls suggested a tight race, yet Polymarket put Trump’s chances of winning at around 60%. A study from Vanderbilt University used a Bayesian time series model to compare Polymarket’s prices with polling data nationally and in seven swing states. The results showed that Polymarket was more accurate across the board.

So what does this indicate? Prediction markets can be exceptionally accurate at forecasting elections, especially the largest, most liquid ones, where billions of dollars in capital, tens of thousands of traders, and widespread public attention converge on a single question. There, their predictions often outperform opinion polls.

But the issue is that markets like these may account for only about 2% of the questions listed on these platforms. Polymarket’s 2024 presidential election markets alone generated over $3.6 billion in trading volume and attracted 63,000 unique traders per month. Look instead at congressional elections, state referendums, or almost any question in culture, technology, or sports, and bid-ask spreads widen to between 20% and 100% of the mid-price. Spreads on legislative and crisis-related markets can approach 100%. Spreads that wide indicate that the market knows almost nothing: it is merely two individuals making wildly opposing guesses on the same question, with little capital backing either side.
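
For readers unfamiliar with the measure, this Python sketch shows what a spread expressed as a percentage of the mid-price looks like. The helper name `spread_pct_of_mid` and both sets of quotes are hypothetical, chosen only to contrast a liquid market with a thin one.

```python
def spread_pct_of_mid(best_bid, best_ask):
    """Bid-ask spread as a percentage of the mid-price, for prices between 0 and 1."""
    if not 0 <= best_bid < best_ask <= 1:
        raise ValueError("expected 0 <= bid < ask <= 1")
    mid = (best_bid + best_ask) / 2
    return (best_ask - best_bid) / mid * 100


# Hypothetical liquid market: buyers at 59 cents, sellers at 60 cents.
liquid = spread_pct_of_mid(0.59, 0.60)

# Hypothetical thin market: buyers at 20 cents, sellers at 60 cents.
thin = spread_pct_of_mid(0.20, 0.60)

print(f"liquid market spread: {liquid:.1f}% of mid")
print(f"thin market spread:   {thin:.1f}% of mid")
```

In the thin example the two sides disagree by as much as the price itself, so the quoted “probability” could plausibly be anywhere from 20% to 60%.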

When the odds begin to write the story

If accuracy problems stayed confined within the prediction market ecosystem, they would be manageable. Traders who bet on poor markets lose money, learn from their mistakes, and the system improves over time. This is how all financial markets have operated for the past century. But the odds are no longer just signals among traders; they have become public information accessible to everyone.

Over the past 18 months, major U.S. news outlets have integrated prediction market data into their political reporting. The Wall Street Journal signed a formal agreement with Polymarket to display Polymarket’s betting data alongside its news coverage. CNN began showing Kalshi odds on screen during its election night coverage. CNBC adopted the same approach. In December 2024, Substack even announced a direct partnership with Polymarket to enable newsletter writers to embed real-time market data directly into their articles.

This is why the odds ended up on TikTok. These numbers traveled from Polymarket to The Wall Street Journal, then to cable news, then to Twitter, and finally to TikTok. When ordinary users see these odds, they’ve already been disseminated through enough authoritative channels that people perceive them as facts. What people accept are numbers that have been pre-“sanitized” by mainstream media.

This is the root of the problem with prediction markets: once odds are reported as news, they begin to influence the very events they are meant to predict. This phenomenon has a specific name—economists call it endogeneity. In simple terms, the act of measuring changes the thing being measured.

Let me give a specific example. Brian Armstrong, CEO of Coinbase, is on an earnings call. He learns that Polymarket is running a contract on whether he will mention certain specific phrases during the call. As a result, he changes the wording he originally planned to use. The market was supposed to predict what he would say, but his awareness of the market changed what he actually said.

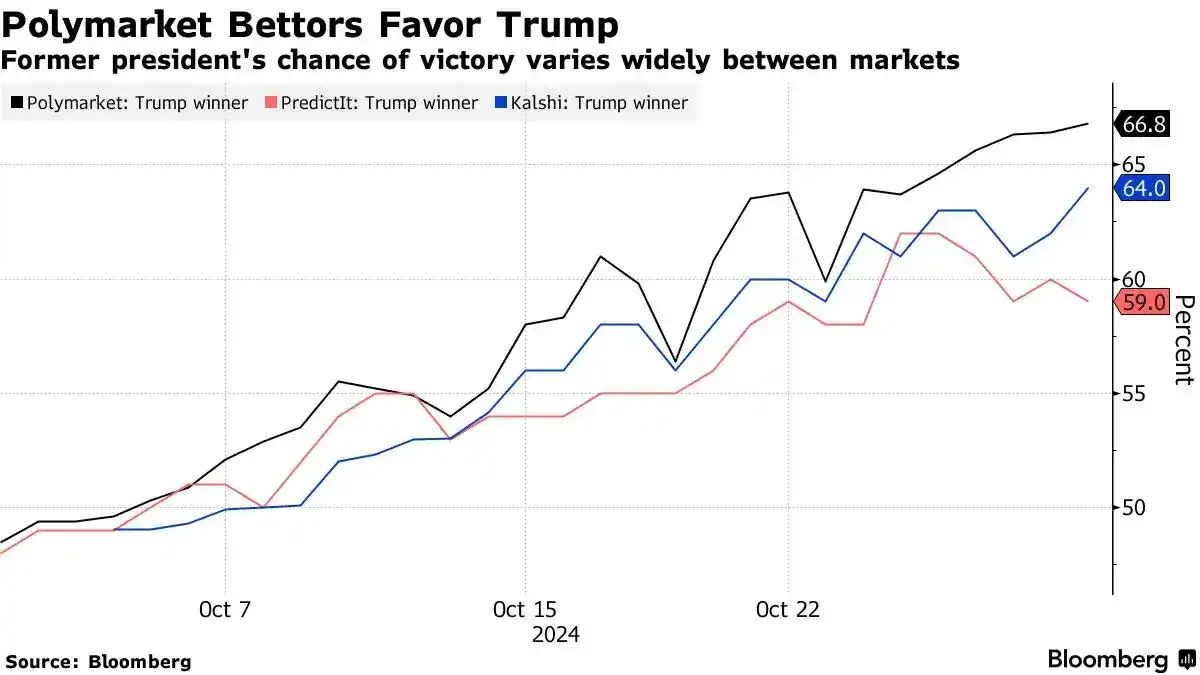

Now, let’s scale this dynamic up to the electoral level. In the 2024 U.S. presidential election, a French trader operating under the pseudonym “Theo” (known in the media as the “Polymarket whale”) bet on a Trump victory and ultimately earned over $85 million. This was no lucky gambler. He commissioned his own private polling, separate from all the public polls, and it indicated that Trump’s actual support was far stronger than the public polls suggested.

As a result, his bets drove up prices across multiple platforms, and those prices were then covered by the media outlets I mentioned, including The Wall Street Journal and CNN, and by political commentators across various platforms. Despite polls showing a tight race, the markets favored Trump. This single narrative shaped how millions viewed the election in its final weeks. Commentators debated whether the “smart money” had access to information the polls could not see. Voters absorbed this claim, and ultimately, Trump won the election.

I am not claiming that Theo changed the election outcome. Such a claim would be far-fetched, and I cannot substantiate it. What I am saying is something that should concern anyone paying attention: a trader with access to private polling data unavailable to others was able to move Polymarket’s prices, and The Wall Street Journal and CNN then presented that price as if it represented the collective wisdom of the market. A well-functioning prediction market should aggregate vast amounts of information from many participants into a clear signal. What happened in 2024 was that one person’s exclusive polling data was laundered through Polymarket and then repackaged as if it reflected the consensus of thousands of traders.

If a trader can do this with $85 million, imagine what those who truly possess wealth and power could accomplish.

In February 2026, Israeli authorities charged at least two individuals with using classified military intelligence to place bets on Polymarket. They wagered on contracts related to military operations before those operations were publicly disclosed, potentially earning around $100,000. These individuals, who held security clearances, placed war-related bets using information that remained unavailable to the public for several days. This is the first such case globally, confirming that prediction markets are fast enough, liquid enough, and anonymous enough to be used for real-time monetization of classified information.

The Maduro trade mentioned at the beginning follows the same pattern: someone placed a bet just before a secret military operation and won over $400,000. Whoever it was either had insider information or is one of the luckiest political gamblers in history. We’ll never know.

What happens when everyone believes the odds?

The median time to resolution for questions on Polymarket is four days. The average is 19 days, but only because a small number of long-running markets pull it up. Most questions on the platform concern events happening this week.

This indicates that these markets are not making any meaningful long-term forecasts about the future—they are simply pricing in near-term developments. Will the vote pass on Friday? What will this person say tomorrow? Will the numbers released on Wednesday be higher or lower than expected? These insights are certainly useful. But they are vastly different from what is meant when people call prediction markets a “truth machine.” The term “truth machine” typically implies that markets can tell you what the world will look like six months, a year, or even five years from now. But the data shows they simply cannot. Not even close.

99% of trading volume in prediction markets occurs in the final few hours before the event is resolved, with capital flooding in as the outcome becomes nearly certain. These markets also suffer from a larger liquidity problem. By the end of 2025, the combined weekly trading volume on the two major platforms, Polymarket and Kalshi, was projected to reach approximately $2.5 billion. Sounds impressive, right? But the daily clearing volume of the U.S. options market alone is around $760 billion.

Spread over seven days, that $2.5 billion a week is roughly $360 million a day, which is only about 0.05% of the options figure. The entire prediction market industry, across every platform, contract, and category, is negligible compared with the markets institutions actually use for decision-making.

Here’s the situation: prediction markets are only suitable for a very specific type of question—binary, high-profile, short-term events involving millions of dollars. But this represents only a small fraction of the wide range of questions these platforms actually offer. For the remaining 98%, prices are unreliable, liquidity is nearly nonexistent, and outcomes resemble Twitter polls more than financial instruments.

Meanwhile, the platforms are building the default source of probability for everything. Just as you would open a Bloomberg Terminal to check stock prices, their vision is that you will open Polymarket to check probabilities. The strategy is that once enough media outlets, newsrooms, financial analysts, and government researchers rely on this data source, the product becomes indispensable, regardless of whether the data is accurate.

I think this will work. And I think this should concern everyone.

The issue is not whether prediction markets are useful. The answer is yes. For elections, major economic data, and a few high-profile events, they consistently outperform the alternatives. This is a fact, and it’s crucial. The question is what happens when the entire information ecosystem begins to treat the outputs of these markets as truth, even for the 98% of questions that are completely beyond the markets’ ability to predict.

Economist Robin Hanson has advocated for prediction markets for decades, describing them as systems that force people to put money behind their beliefs. In his model, the final price represents the best current estimate of the truth. But this model assumes sufficient market liquidity, diverse participants, and resistance to manipulation. The markets we actually have are dominated by a few “whales” and concentrated in just two areas, elections and sports, which account for about 80% of total trading volume. The remaining 20% is spread across thousands of contracts, where a few thousand dollars can move prices by double digits.

These are tools designed to generate attention. They work when the world is paying attention; they fail when no one is watching. The more people believe these tools create truth, the more power those who can manipulate prices hold. And those who can manipulate prices are not a broad group of informed ordinary people, but a small group of well-capitalized traders who control private polling data and, in at least two verified cases, possess classified intelligence.

The most dangerous aspect of prediction markets is not that they get things wrong. It is that they get the crucial predictions right just often enough to earn a trust they don’t truly deserve, and this trust is gradually becoming embedded in the world’s information-processing mechanisms. The Wall Street Journal publishes prediction data, CNN airs segments on it, and TikTok shares it. In the end, it will be some trader with sufficient capital who decides what this number means.

This is the reality of the truth machine: a system that produces numbers, which the world then decides to call truth.