A few days ago, OpenAI officially released its new large model, GPT-5.4-Cyber. Like many internet users, we felt an extremely strong sense of déjà vu.

This new model closely mirrors Anthropic’s recently released Claude Mythos in terms of target audience, use cases, and even marketing strategy. The direct competition has become unmistakable—so much so that The New York Times recently highlighted it bluntly in its headline: “Like Anthropic, OpenAI…”

This homogenization extends far beyond the base models. If you look at the recent product lines released by these two companies, you’ll see they are becoming mirror images of each other!

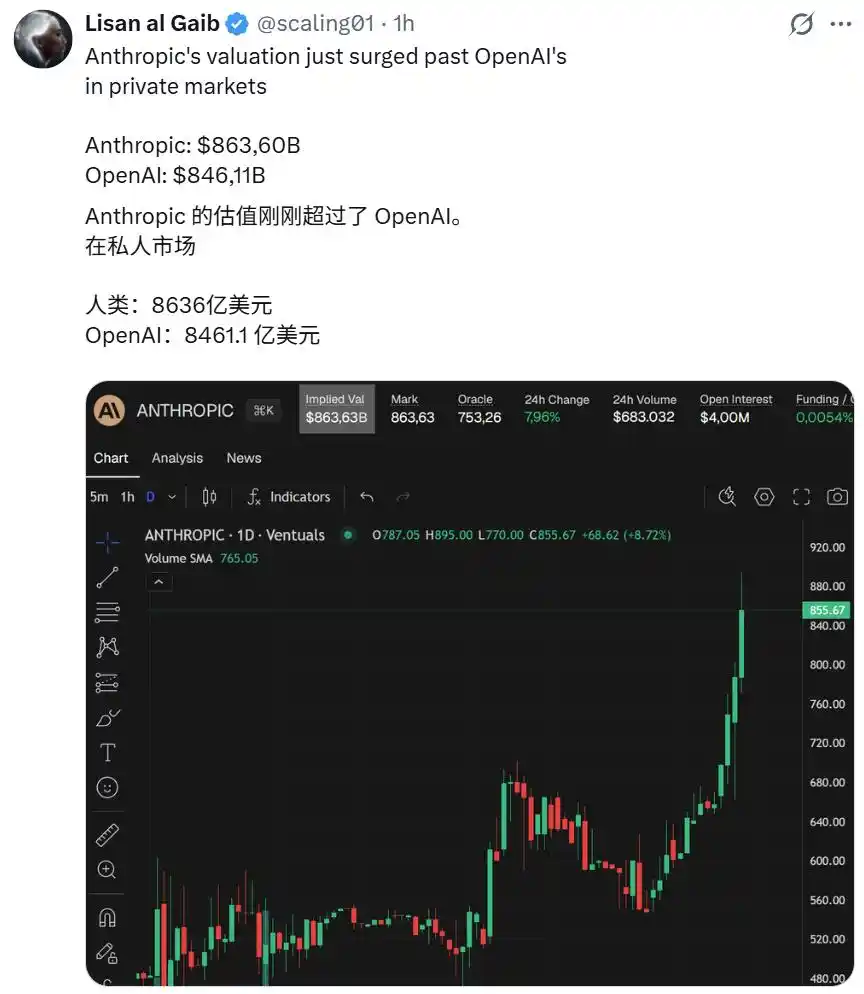

Under the bright lights of the capital market, this convergence is even more apparent. The two companies' valuations on the secondary market are currently extremely close, and Anthropic has even edged slightly ahead of OpenAI recently thanks to its rapid expansion in the enterprise market. Investors have the keenest noses, and in their view these two unicorns are growing identical horns.

It appears that the homogenization of underlying large models inevitably leads to convergence in upper-layer applications.

Today, I want to explore the two leading tools representing the pinnacle of AI-assisted programming: OpenAI’s Codex and Anthropic’s Claude Code. From their once-divergent paths, how did they gradually evolve into such similar forms?

From Parting Ways to Converging Paths: The Evolution of the Two Giants

Go back a few years, and Codex and Claude Code were products of entirely different technological philosophies.

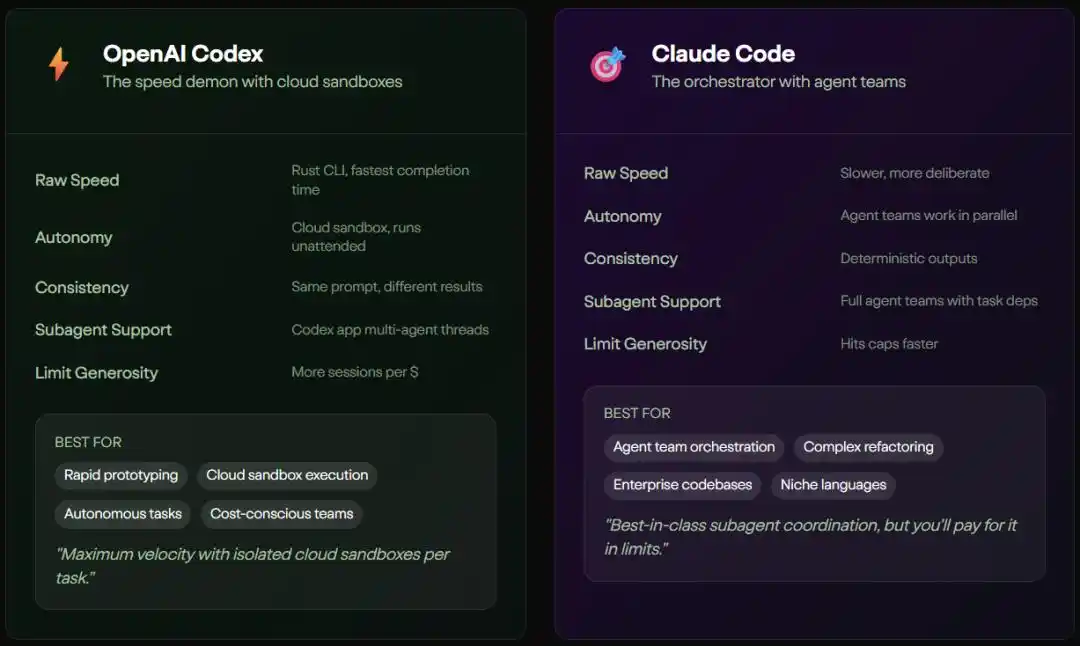

The underlying logic of Codex is “In all martial arts, speed is invincible.” It’s like an experienced senior developer with five years of expertise, always right behind you, ready to complete your code.

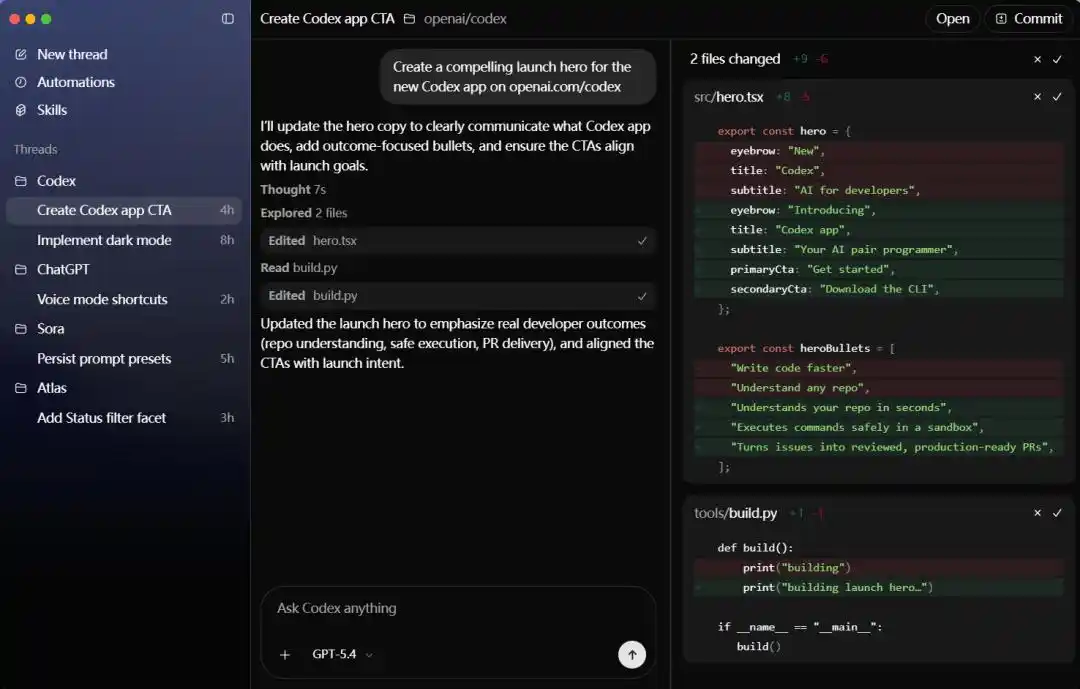

In OpenAI’s vision, Codex is a lightweight, highly interactive terminal agent designed for rapid iteration and interactive programming. With the support of Cerebras WSE-3 hardware, it achieves a throughput of up to 1,000 tokens per second. In its workflow, Codex offers three distinct approval modes (suggestion, automatic editing, and full automation), keeping developers in the loop at all times. This design philosophy suits power developers who need to prototype quickly and handle high-frequency interactions.

In contrast, Claude Code has possessed a cool, restrained "architect" persona from its inception.

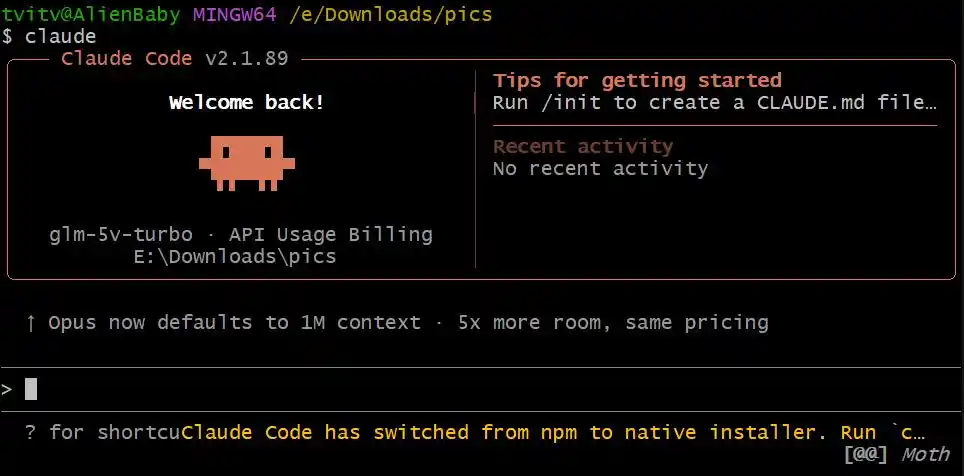

Anthropic has built it to handle extremely complex tasks. It relies on a massive context window of up to 1 million tokens and a "compaction" technique that condenses earlier context so conversations can run effectively without limit. Claude Code’s guiding principle is “Master the big picture, then act with strategy.” Before performing any action, it first uses agentic search to understand the structure of the entire codebase, then coordinates modifications across multiple files so they stay consistent. For enterprise-level refactoring tasks involving tens of thousands of lines of code, Claude Code demonstrates remarkable mastery.

However, over time and as their applications have expanded, these two originally very different tools have begun to emulate each other.

Image source: MorphLLM

When handling complex projects, the biggest bottleneck for monolithic AI models is context pollution. When you ask the AI to refactor an authentication module, it has often forgotten the design pattern of the first file by the time it has read 40 files. To address this pain point, the two companies arrived at nearly identical solutions: assign an independent context window to each subtask.

OpenAI soon launched a brand-new macOS desktop app that isolates tasks into separate threads and runs them independently in a cloud sandbox. Anthropic introduced an agent team architecture that allows developers to spawn multiple sub-agents, which share task lists and dependencies and work in parallel within their own independent windows. You’ll find that, whether called a “cloud sandbox” or an “agent team,” their core engineering principles are now identical.
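The shared idea here, one isolated context per subtask plus a shared task list with dependencies, can be illustrated with a minimal Python sketch. This is not either vendor's implementation: `Subtask`, `run_subtask`, and `run_all` are hypothetical names chosen for illustration, and a simple stub stands in for the actual model call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Subtask:
    name: str
    depends_on: list[str] = field(default_factory=list)
    # Each subtask owns its context; nothing is shared between subtasks.
    context: list[str] = field(default_factory=list)


def run_subtask(task: Subtask) -> str:
    # Hypothetical stand-in for a model call: it only touches its own context.
    task.context.append(f"notes for {task.name}")
    return f"{task.name}: done with {len(task.context)} context item(s)"


def run_all(tasks: list[Subtask]) -> list[str]:
    """Run subtasks in dependency order, in parallel within each wave."""
    pending = {t.name: t for t in tasks}
    finished: set[str] = set()
    results: list[str] = []
    with ThreadPoolExecutor() as pool:
        while pending:
            # A subtask is ready once everything it depends on has finished.
            ready = [t for t in pending.values()
                     if all(dep in finished for dep in t.depends_on)]
            if not ready:
                raise ValueError("circular or missing dependency")
            results.extend(pool.map(run_subtask, ready))
            for t in ready:
                finished.add(t.name)
                del pending[t.name]
    return results


if __name__ == "__main__":
    demo = [
        Subtask("map auth module"),
        Subtask("update tests", depends_on=["map auth module"]),
        Subtask("update docs", depends_on=["map auth module"]),
    ]
    for line in run_all(demo):
        print(line)
```

In this sketch the two dependent subtasks run side by side once the first one finishes, and neither ever sees the other's context, which is the property both products are described as relying on.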

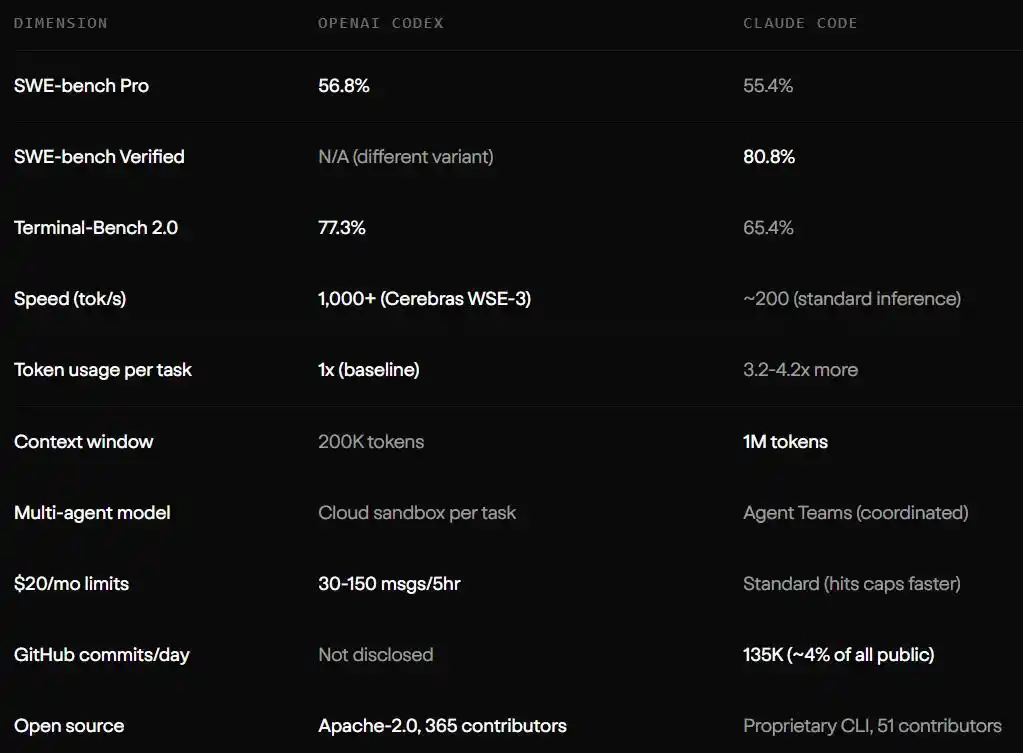

On the benchmark scorecards, the two are also delicately balanced. GPT-5.3-Codex leads with a score of 77.3% on Terminal-Bench 2.0, which measures terminal tasks. Claude Code achieves 80.8% on the more complex SWE-bench Verified leaderboard. Each excels in its own area of strength while working to shore up its weaknesses.

The OpenClaw Effect: The Invisible Hand That Topples High Walls

If the internal strategies of the two companies set them on the path toward homogenization, then the pressure exerted by the entire open-source ecosystem is an external force that cannot be ignored. Here, we must highlight OpenClaw’s profound impact on the AI programming tools landscape.

As a workflow framework launched by the open-source community, OpenClaw has effectively broken down the ecosystem walls painstakingly built by industry giants. It standardizes the interaction between large models and local toolchains. Previously, the techniques for letting large models gracefully make local Git commits, securely run test scripts in sandboxed environments, and perform multi-step reasoning verification were proprietary secret sauce, proudly guarded by Codex and Claude Code.

But OpenClaw has abstracted these processes into a universal protocol. This means developers are no longer locked into specific platforms for particular collaboration models. The open-source community’s momentum has turned standardization into an irreversible tide. Faced with this reality, both OpenAI and Anthropic have no choice but to lower their barriers and comply with this open standard.

When the underlying technological barriers are leveled by open-source forces like OpenClaw, and all advanced features become industry-standard configurations, Codex and Claude Code have no choice but to engage in endless competition over increasingly subtle aspects of user experience. This is why they seem to be growing more alike—under standardized frameworks, the optimal solution is often just one, much like convergent evolution in biology.

Codex Is Catching Up to Claude Code

Although Claude Code and Codex are evolving toward convergence, differences still exist, and Codex is already preferred by some developers in certain areas.

A few days ago, u/Canamerican726, a senior engineer with 14 years of experience at a tech giant, shared an extremely rigorous review in the r/ClaudeCode community.

Specifically, in a complex project containing 80,000 lines of code, he spent 100 hours using Claude Code and 20 hours using Codex.

From his perspective, using Claude Code is like guiding an engineer racing against a deadline—it moves at lightning speed but often ignores the specifications written in CLAUDE.md and prefers to keep adding code to existing files to complete tasks, lacking any sense of refactoring.

In contrast, Codex gave him the feeling of a seasoned professional with 5 to 6 years of experience. Although it was three to four times slower in processing, it would proactively pause to reflect and refactor code along the way, strictly adhering to instruction boundaries. This high level of autonomy allowed the engineer to confidently delegate tasks to it and focus on other matters.

The same sentiment appears on social networks like X. Researcher Aran Komatsuzaki, drawing from his own experience, noted that while Claude Code still leads in front-end tasks, Codex, which frequently calls upon web searches, is clearly more robust in backend planning and keeping information up to date.

The comment section is filled with hard-won lessons from real-world use cases. Developers sharply point out that while Opus-based models run quickly, they often accumulate massive amounts of “technical debt”; Codex may be slower, but it cleans up as it goes. I even saw a user distill a survival rule: start a new session as soon as your context window usage hits 70%, or you’re very likely to receive hidden bugs courtesy of the system.
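The 70% rule of thumb quoted above is easy to express as a check. The sketch below is only an illustration of that user's heuristic: `should_start_new_session` is a hypothetical helper, not part of either tool, and the token counts are assumed to come from whatever usage readout your tool provides.

```python
def should_start_new_session(tokens_used: int,
                             context_window: int,
                             threshold_percent: int = 70) -> bool:
    """Return True once context usage reaches the threshold.

    The 70% default is the rule of thumb quoted in the article,
    not a documented limit of Codex or Claude Code.
    """
    if context_window <= 0:
        raise ValueError("context_window must be positive")
    # Integer comparison avoids floating-point edge cases at exactly 70%.
    return tokens_used * 100 >= threshold_percent * context_window


if __name__ == "__main__":
    window = 1_000_000  # the 1-million-token window mentioned earlier
    for used in (500_000, 700_000, 900_000):
        print(used, should_start_new_session(used, window))
```

With a 1-million-token window, the check flips to true at 700,000 tokens used.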

These real-world complaints from the front lines clearly show that when the feature sets of these two essential tools become increasingly overlapping, what ultimately determines a developer’s preferred camp is often these small experiential differences related to “filling the gaps” and “mental maintenance.” Of course, Chinese users also face some unique challenges, such as:

Cold Reflection: The Hidden Ecosystem Battle Behind Homogenization

Of course, the advantages and disadvantages of Codex and Claude Code ultimately depend on the developers themselves and their individual skills, as summarized in the review by u/Canamerican726 above: if you don't understand software engineering, both tools will produce poor results—tools are not a substitute for skill.

This statement shatters a long-standing illusion created by AI programming tools. We once believed that with a powerful enough AI assistant, even a complete beginner could single-handedly build enterprise-grade applications. But the reality is that Claude Code requires an extremely focused and highly skilled “driver”—otherwise, it easily loses its way in large codebases. While Codex is more independent, it still needs developers to provide precise system context to achieve its full potential.

So, in today’s environment where tool capabilities are highly homogenized, where have the moats of these two companies shifted to?

The answer lies in those dry financial statements and pricing strategies. For the same task, Claude Code often consumes three to four times as many tokens as Codex, resulting in higher usage costs. For enterprise teams, using Claude Code requires paying $100 to $200 per developer per month, while Codex bundles its capabilities into more affordable subscription plans and has accumulated a large base of users through its extensive GitHub community.

Image source: MorphLLM

Anthropic’s ambition is to deeply integrate Claude Code into the workflows of tech giants with abundant resources. For example, Stripe enabled 1,370 engineers to use Claude Code, completing a cross-language code migration in just four days—a task that would have taken 10 people weeks to accomplish. Ramp further relied on it to reduce incident response times by 80%. Meanwhile, OpenAI leveraged its pervasive ecosystem penetration to make Codex the default choice for many everyday developers.

This is no longer just a technological competition, but a war of attrition centered on ecosystem lock-in, pricing strategies, and reshaping user habits.

The Developer's Crossroads

Looking back on this year’s technological evolution, the release of GPT-5.4-Cyber is merely a minor footnote in this long-term battle. Codex and Claude Code are converging toward the same face, signaling that AI programming tools have officially moved beyond their early, uncertain, and novelty-driven testing phase into a mature and unremarkable industrial production stage.

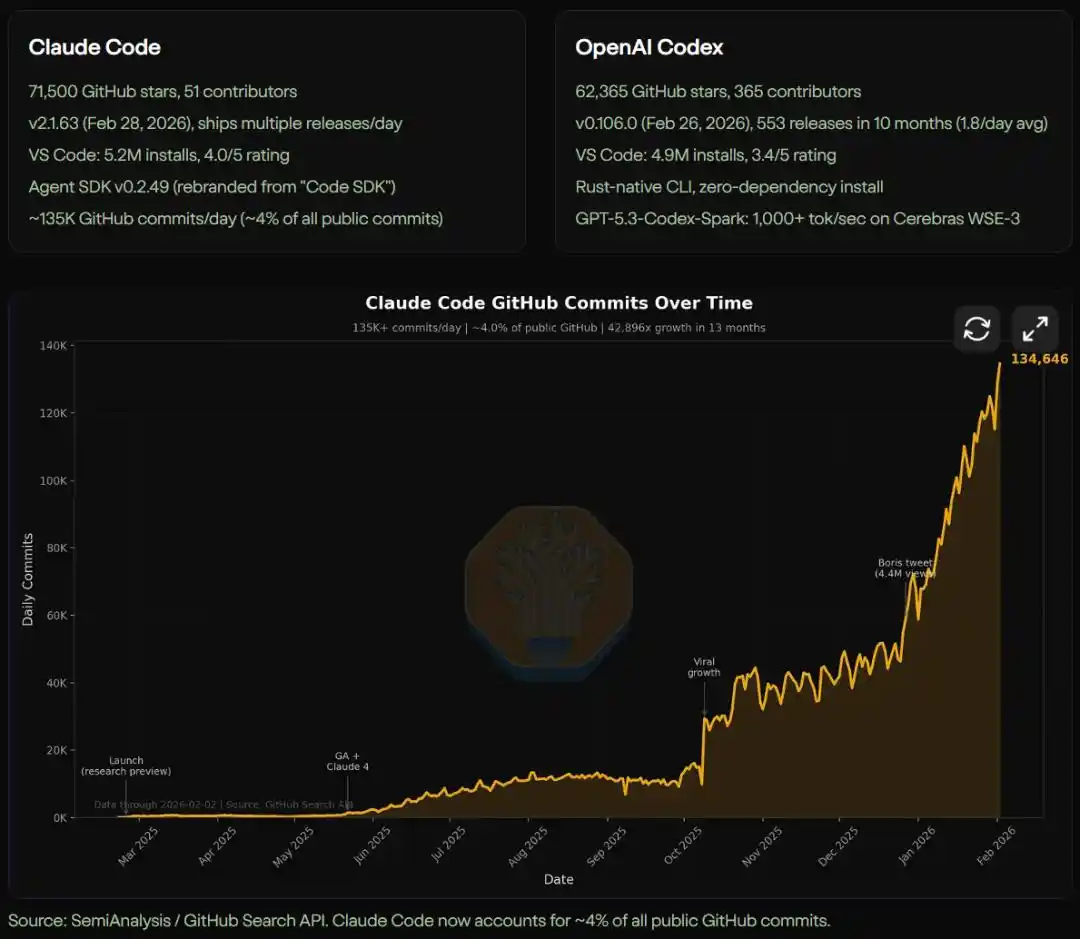

Today, Claude Code generates 135,000 GitHub commits daily, accounting for 4% of all public commits. We can anticipate that, in the near future, most boilerplate code, basic test cases, and routine refactoring will be quietly handled in the background by AI agents that increasingly resemble one another.

Image source: MorphLLM & SemiAnalysis / GitHub Search API
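As a quick sanity check on the commit figures quoted above, the two numbers together imply a total volume of public commits. The short calculation below uses only the article's figures (135,000 daily commits, a 4% share); `implied_total` is a hypothetical helper, and because the 4% is presumably rounded, the result should be read as a rough estimate.

```python
def implied_total(part: int, share_percent: int) -> int:
    """Total implied when `part` makes up `share_percent` percent of it."""
    if share_percent <= 0:
        raise ValueError("share_percent must be positive")
    # Integer arithmetic avoids floating-point rounding in the result.
    return part * 100 // share_percent


claude_code_daily = 135_000  # daily commits attributed to Claude Code (article figure)
share_percent = 4            # stated share of all public commits (article figure)

total_daily = implied_total(claude_code_daily, share_percent)
other_daily = total_daily - claude_code_daily

print(f"Implied public commits per day: {total_daily:,}")          # 3,375,000
print(f"Commits not attributed to Claude Code: {other_daily:,}")   # 3,240,000
```

In other words, if both figures hold, roughly 3.4 million public commits are made per day, of which about 3.2 million are not attributed to Claude Code.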

When faced with two super tools that are infinitely similar in capability and mutually imitating in experience, what remains of our core value as human developers? Perhaps the era of tool-based advantages is coming to an end. When everyone holds equally sharp weapons, what truly determines victory will no longer be who has faster code completion, but who can better define problems, who possesses a broader systemic architecture perspective, and who can find that uniquely human, irreplaceable quality in a code world saturated with AI.

By the way, which one do you choose?

Reference links

https://www.morphllm.com/comparisons/codex-vs-claude-code

https://www.reddit.com/r/ClaudeCode/comments/1sk7e2k/claude_code_100_hours_vs_codex_20_hours/

https://x.com/arankomatsuzaki/status/2044270102003196007

https://www.nytimes.com/2026/04/14/technology/openai-cybersecurity-gpt54-cyber.html

This article is from the WeChat public account "Machine Heart" (ID: almosthuman2014), authored by Machine Heart.