Author: Anita, Sentient Asia-Pacific Executive

The war in Iran has thrown large models from the lab straight into the battlefield.

The "Epic Fury" operation at the end of February 2026 was not just a joint airstrike, but more like an AI stress test conducted on a real battlefield—those who can compress the "sensor-decision-shooter" chain into minutes or even seconds will hold the pricing power for the next round of geopolitics.

I. Epic Fury: The First "AI Full-Stack War"

During this operation, U.S. and Israeli official sources claimed that the concentrated strikes against Iran’s key military and nuclear facilities “achieved strategic success,” and repeatedly implied that Iran’s Supreme Leader Khamenei was likely killed in an attack on an underground command facility in northern Tehran—but Iran has long refused to provide clear confirmation of his status, turning this “decapitation” into more of a battle over authoritative narratives.

From an operational perspective, what defines Epic Fury is not its duration but its density: intensive airstrikes, drone swarm penetrations, special operations, and cyber warfare interwoven over just over ten days. Underpinning it all was a highly software-driven combat stack: the Palantir battlefield ontology and digital twin platform, U.S. defense agencies’ intelligence fusion systems, and Israel’s automated target generation tools, augmented by the emerging roles of cutting-edge large model companies like OpenAI.

This war marks a symbolic turning point: from here on, "AI involvement in military decision-making" is no longer just a buzzword in Pentagon PowerPoint slides, but a tangible source of cash flow and political risk that cannot be ignored by markets, regulators, and ethical debates.

II. OpenAI: From an "Ethics Declaration" to the Department of Defense's Most Expensive SaaS Subscription

In just two or three years, OpenAI's public stance has undergone a remarkable transformation: from distancing itself from "military applications," to acknowledging support for national security and defense projects under strict safety principles, to securing what may be the most sensitive customer contract of this era.

Around February 27, 2026, Sam Altman announced that the company had reached an agreement with the U.S. Department of Defense to deploy GPT series models on a classified network for defense-related scenarios such as intelligence analysis, translation, and war gaming. In some public materials and media reports, this traditionally named Department of Defense was deliberately referred to as the “Department of War,” symbolically reverting to more offensive war-related language, despite the legal name of the agency remaining Department of Defense.

The publicly reported "red lines" can be summarized as three:

No participation in mass surveillance within the United States;

No directly driving fully autonomous lethal weapon systems, with human oversight maintained over any use of force;

Human oversight and accountability maintained in high-risk decisions.

These principles serve both as OpenAI’s ethical stance toward the public and as a bargaining chip in contract negotiations, signaling to Washington that the company is willing to cooperate but prefers to do so within “controlled boundaries.”

What role do these models play in real-world scenarios like Epic Fury? Public information goes no further than safe descriptions: assisting in intelligence processing, analyzing complex data, and helping decision-makers form a situational picture more quickly.

From a technical standpoint, feeding massive amounts of satellite imagery, signals intelligence, and social media streams into a large model, then having it rank, predict paths, and assess risks for potential "high-value targets," is essentially very close to a "battlefield brain."

For Wall Street, the significance of this agreement is straightforward.

After Anthropic was labeled a "supply chain risk" by the Pentagon for holding firm on stricter red lines, OpenAI secured this multi-hundred-million-dollar, hard-to-challenge defense contract with a stance of "ethically limited compromise, commercially massive gain."

III. Anthropic: The "Principle-Based" Player Hovering on the Edge of Defense Budgets

In stark contrast to OpenAI’s “pragmatic” approach, Anthropic finds itself in a difficult position: it was once considered one of the Pentagon’s most valuable providers of cutting-edge models, but was abruptly excluded from the entire system for refusing to compromise on ethical boundaries.

Multiple media outlets reported that during negotiations with the Department of Defense, Anthropic took a firm stance on two points:

Claude does not participate in fully autonomous weapons systems;

Claude does not participate in mass surveillance or profiling of U.S. citizens.

Meanwhile, the Pentagon’s position is closer to “no legitimate use should be precluded by model providers.”

After negotiations broke down, Defense Secretary Pete Hegseth announced, following the deadline, that Anthropic would be designated as a national security “supply chain risk,” requiring all contractors doing business with the military to complete their migration away from Claude within six months—a label previously reserved for companies from adversarial nations such as Huawei, and now applied for the first time to a U.S.-based AI startup, sparking discussions in Silicon Valley about a “chilling effect.”

Internal Pentagon assessments show that fully replacing the large model stack embedded in classified systems could take months, meaning the ban's effective date overlaps significantly with the Epic Fury time window.

From a technical reality perspective, Claude likely continued to participate in U.S. national security work in some form before being forcibly removed by executive order—yet no one is willing to clearly articulate this connection during hearings, which is typical of the modern military–industrial–tech complex’s “gray zone.”

The capital market reads a simple yet dangerous lesson: when "safety red lines" conflict with "maximizing defense orders," the company more willing to negotiate is often the safer investment; while companies that stand by their principles may, overnight, be labeled as "supply chain risks" and have investors hit the "re-evaluation" button.

IV. The True Central Nervous System: Microsoft, Google, and the “Cloud-Based Military-Industrial Complex”

If OpenAI and Anthropic are the "brains" in this war, then Microsoft and Google are the true central nervous system of this framework:

Without their cloud, all large models and local AI tools would remain stuck on PowerPoint.

Microsoft Azure: From Office Cloud to Kill Chain Operating System

Investigations by AP and several other organizations show that since October 2023, the Israeli military's use of machine learning tools on Azure grew by a factor of dozens within months, peaking at 64 times the original level, while overall AI function calls rose nearly 200-fold.

Meanwhile, the volume of data stored has reached a scale comparable to that of the Library of Congress.

This computing power is used to transcribe and translate vast amounts of communications, process signals intelligence from surveillance infrastructure, and integrate with AI systems within Israel (such as Lavender and Gospel) to automatically generate target lists and risk assessments, significantly increasing the throughput of the “target production line.”

Although Microsoft later reduced its services to certain Israeli military units—particularly those involved in surveillance—under public and employee pressure, the core cloud and AI contracts remain active, allowing the company to secure substantial commercial orders while paying a significant reputational cost.

Google Project Nimbus: The wartime cloud with the highest political risk premium

Starting in 2021, Google and Amazon have provided unified cloud infrastructure, covering computing, storage, and machine learning tools, to the Israeli government and military through Project Nimbus, a contract worth approximately $1.2 billion. Employees, scholars, and human rights organizations have consistently warned:

Nimbus's general cloud and AI capabilities are easily applicable to surveillance and military target selection, despite Google's repeated emphasis that the contract "does not include offensive military uses."

At the Epic Fury stage, it is widely believed that Nimbus-type cloud platforms serve as the critical computing foundation supporting the IDF’s complex target planning, battlefield simulation, and real-time intelligence fusion, though the specific invocation pathways and operational details remain classified.

From a risk perspective, this means Google is trading a slightly higher political risk premium for stable revenue from security-focused clients in the Middle East, while internal protests and waves of resignations over this project serve as a reminder to investors: this is not a routine enterprise cloud contract.

V. Israel’s AI Killing Factory: The Portability of Lavender Logic

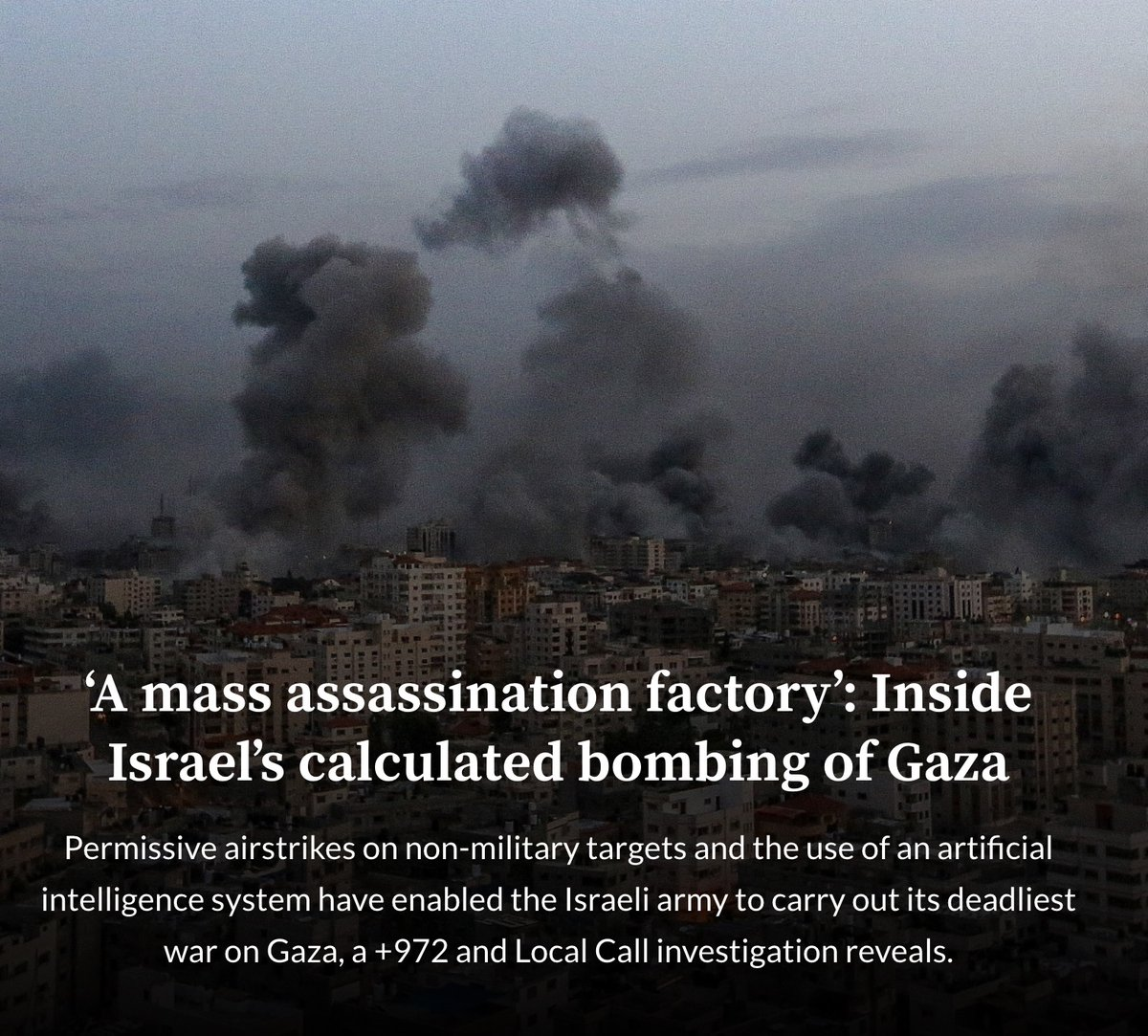

To understand how AI is changing the battlefield, one might start with a set of Israel’s most controversial systems: Lavender, Gospel, and Where’s Daddy.

The investigation by +972 Magazine and Local Call shows:

"Lavender" analyzed behavioral and relational patterns of nearly all adult males in Gaza, assigning each a "suspected militant score" from 1 to 100, and rapidly identified up to 37,000 targets suspected of being affiliated with armed groups;

"Gospel" focuses on buildings and infrastructure, automatically tagging structures deemed to be used for military purposes and creating a strike list that the air force can process in batches;

“Where’s Daddy” optimizes for the temporal dimension: tracking when listed targets return home and triggering strikes when they are with family at the residence—significantly increasing the probability of a “successful kill,” while exposing family members and neighbors to extreme lethal risk.

Frontline Israeli intelligence officers admitted in interviews that human review of Lavender-recommended targets often amounted to just a few seconds of pro forma box-ticking.

Human rights organizations and United Nations experts have described this system as a “highly automated mass assassination factory,” highlighting structural problems: it amplifies algorithmic bias, reduces room for human judgment, and increases the risk of civilian casualties.

It should be emphasized that public reports more clearly link this system to the Gaza War, while official sources have long remained silent on its specific application in the Iranian theater.

From a technical portability standpoint, as long as sufficient communication data, location trajectories, and social graphs within Iran are available, translating the Lavender logic to the power elite in Tehran is not hard to imagine—this is why many analysts believe Epic Fury is more like an external experiment of a “Gaza-style algorithmic kill factory” targeting the capital of a sovereign state.

VI. Market and Regulation: Pricing Power of the AI-Cloud-Defense Complex

Put these pieces together, and you’ll get a picture that’s very un-“Silicon Valley”:

On one side are large model companies, represented by OpenAI, willing to make limited compromises on red lines and quickly establishing a foothold in defense budgets;

On the other side is Anthropic, which adheres to stricter safety principles and was ousted by the Secretary of Defense under the guise of “supply chain risk,” delivering a stark lesson to the entire industry: don’t clash with your sole buyer.

At the foundational level, cloud giants like Microsoft and Google have built the "operating system" of modern warfare using GPU clusters and classified cloud networks, absorbing the majority of cash flows from wartime AI while bearing increasingly high reputational and regulatory risks.

From an asset pricing perspective, this is no longer just a binary choice between “tech stocks vs. defense stocks,” but rather a new AI–Cloud–Defense complex:

Tactically, low-cost drone swarms, automated target production, and AI decision systems are eroding the deterrence of traditional great powers, making expensive fifth-generation fighter jets and aircraft carrier strike groups appear like outdated capital-intensive assets;

Industrially, large models and cloud providers have gained access to countercyclical cash flows available only to a select few, via military channels, and have entered a profit black box justified by "security and confidentiality," where regulation struggles to achieve full transparency;

Politically, when "who better aligns with the national security agenda" becomes the decisive factor in securing key contracts, companies' commitment to ethical boundaries is systematically undermined, and this incentive structure will be quietly remembered by all future entrepreneurs and investors.

The Iranian battlefield may just be the prologue. Whether the next outbreak occurs in the Taiwan Strait, Eastern Europe, or another corner of the Middle East, what truly determines the pace of war is no longer the number of tanks or the caliber of artillery, but the models trained on petabytes of classified data and the clouds connected to racks of GPUs.

The question is this: before we outsource an increasing number of kill chains to a handful of large AI and cloud companies, do global regulation and democratic politics still have time to seriously answer who is ultimately responsible when algorithmic recommendations become actual coordinates for explosions?