Original author: Ku Li, Shenchao TechFlow

A few days ago, a photo went viral online.

India hosted an AI summit, with Prime Minister Modi on stage flanked by a row of Silicon Valley executives. During the photo session, Modi took the hand of the person next to him and raised it above his head; others followed suit, linking hands in a show of unity.

Only two people did not join hands.

The CEOs of OpenAI and Anthropic, the companies behind ChatGPT and Claude, stood side by side, each raising a fist.

No hand-holding, no eye contact, like two rivals forced to sit together by the teacher.

Over the past few years, these two companies have been fiercely competing—Claude was developed by a team that left OpenAI, and both are vying for users, enterprise clients, and funding. During this year’s Super Bowl, Anthropic even spent money on an ad directly mocking ChatGPT’s advertising push.

So not holding hands is normal.

But today, they came together—because of the Pentagon.

Here is what happened.

Anthropic, the company behind Claude, signed a contract worth up to $200 million with the U.S. Department of Defense last year. Claude became the first AI model deployed on the U.S. military's classified networks, assisting with intelligence analysis, mission planning, and other tasks.

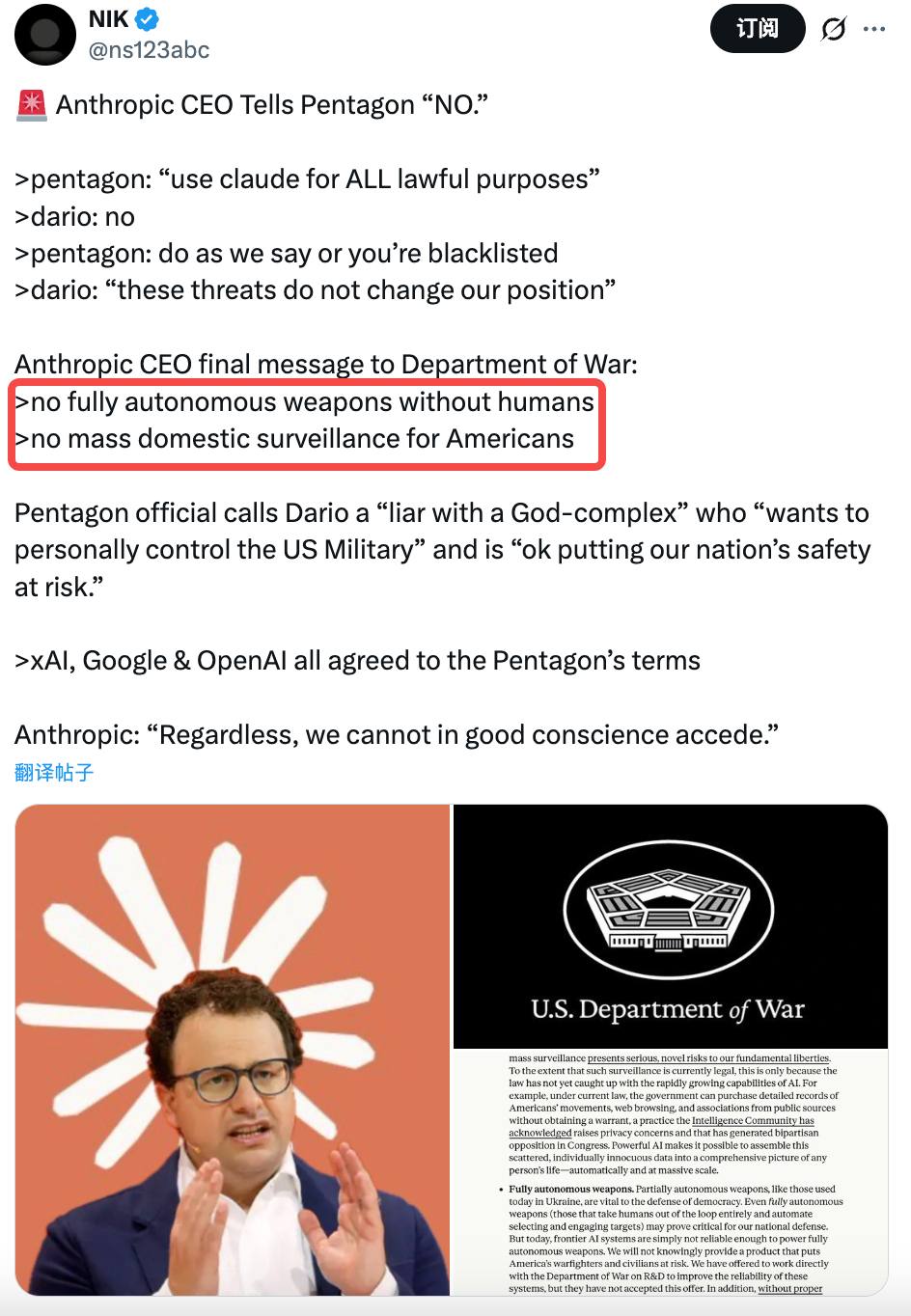

But Anthropic drew two red lines in the contract:

Claude cannot be used for mass surveillance of U.S. citizens or for autonomous weapons without human involvement. (Reference: Anthropic’s 72-Hour Identity Crisis)

The Pentagon, however, would not accept these terms.

Its demand came down to two words: no restrictions. Once you buy a tool, you should be free to use it. Why should a tech company tell the U.S. military what it can or cannot do?

Last Tuesday, Secretary of Defense Hegseth delivered an ultimatum to Anthropic’s CEO in person: agree by 5:01 p.m. Friday, or face the consequences.

Anthropic did not agree.

Its CEO said in a public statement: We understand the importance of AI to U.S. national defense, but in certain cases, AI can undermine rather than uphold democratic values. We cannot accept this request in good conscience.

Emil Michael, an Under Secretary of Defense and the Pentagon's negotiator, later called him a fraud on social media, accusing him of having a god complex and of treating national security as a joke.

A brief handshake

Then, something unexpected happened.

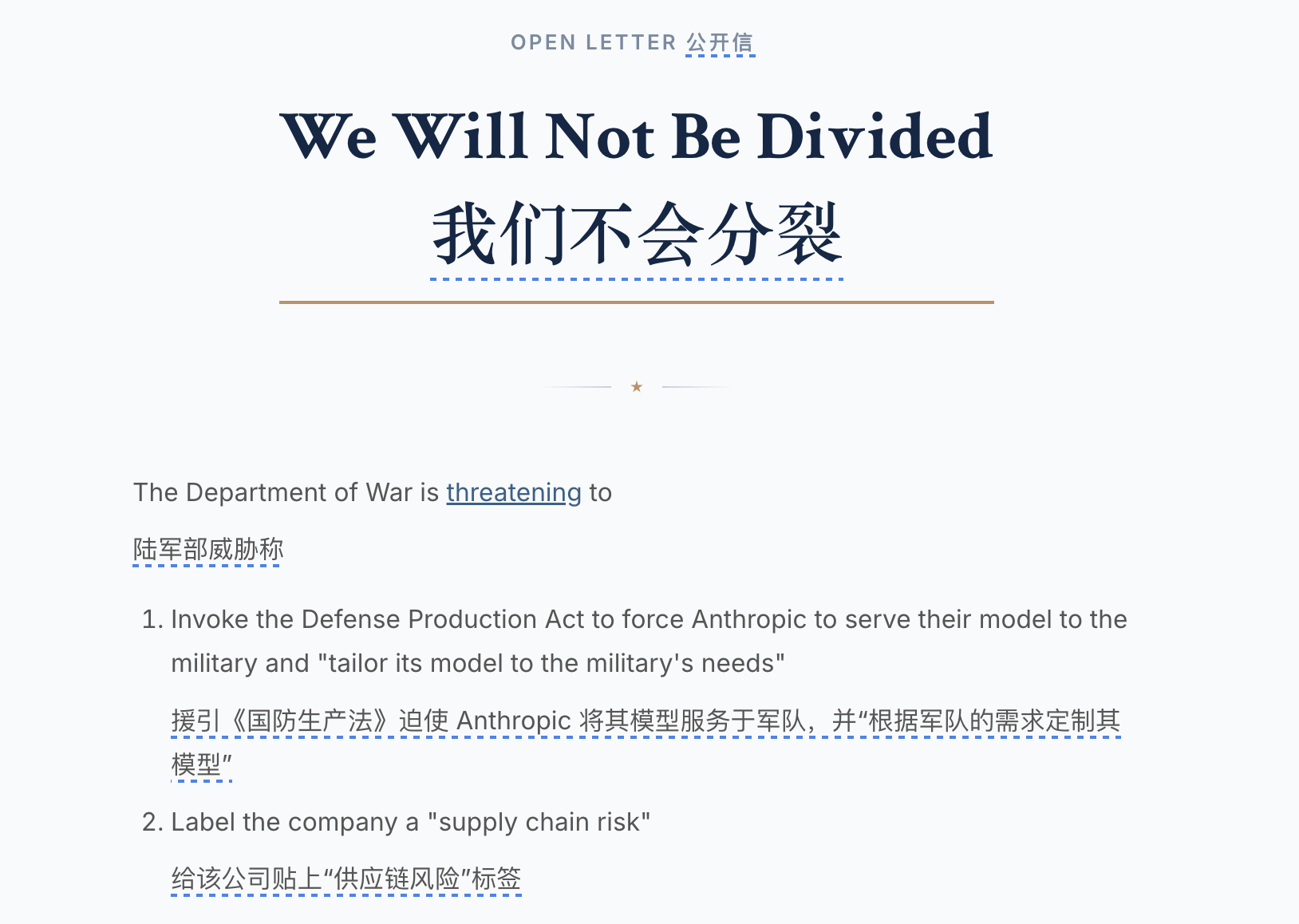

More than 400 employees from OpenAI and Google signed an open letter titled "We Will Not Be Divided."

The letter says the Pentagon is approaching AI companies one by one, trying to get each to agree to the terms Anthropic refused, and using fear to divide the companies from one another.

The CEO of OpenAI also sent an internal email to all employees stating that OpenAI shares the same red lines as Anthropic:

No mass surveillance, no autonomous lethal weapons.

Just days earlier, the heads of these two companies would not hold hands. Now, because of the Pentagon, they suddenly found themselves on the same side.

However, this unity may have lasted only a few hours.

At 5:01 PM on Friday, the Pentagon’s ultimatum expired. Anthropic did not sign.

A U.S. tech company valued at $38 billion refused the U.S. Department of Defense, risking the cancellation of a $200 million contract. In the past, this would have amounted to little more than terminating the contract and finding another supplier. But this time, Washington’s response was anything but business-as-usual.

Trump posted on Truth Social about an hour later, calling Anthropic "left-wing lunatics" and saying they were trying to override the Constitution and making a joke of American soldiers' lives.

He demanded that all federal agencies immediately cease using Anthropic's technology.

Immediately afterward, U.S. Defense Secretary Hegseth designated Anthropic as a "supply chain security risk"—a label typically reserved for companies like Huawei. The implication is clear: all contractors doing business with the U.S. military are now prohibited from using Anthropic’s products.

Anthropic says it will take legal action.

Yet on the same night, OpenAI, which had previously maintained the same position, signed an agreement with the Pentagon.

Ideological issues

What did OpenAI receive?

The slot Claude vacated when it was removed: AI provider for the U.S. military's classified network. However, OpenAI presented three conditions to the Pentagon: no mass surveillance, no autonomous weapons, and human involvement required for high-risk decisions.

The Pentagon said yes.

You read that correctly. Conditions the Pentagon had refused to grant Anthropic for weeks were accepted within days when another company proposed them?

Of course, the two proposals are not exactly the same.

Anthropic added another layer. It believes current laws have not kept pace with AI's capabilities. For example, your location data, browsing history, and social media information can be legally purchased and then legally aggregated by AI. The result is equivalent to surveillance, yet each step remains legal.

Anthropic says that writing "no surveillance" into the contract is not enough; this loophole has to be closed. OpenAI did not insist on this point and accepted the Pentagon's claim that existing laws are sufficient.

But if you think this is just a matter of differing terms, you’re being naive. This negotiation was never just about terms from the start.

White House AI czar David Sacks has long publicly criticized Anthropic for creating "woke AI" (ideology first, politically correct). A senior Pentagon official told the media that the problem with Anthropic CEO Dario Amodei is ideological: "We know who we're dealing with."

Elon Musk’s xAI, a direct competitor of Anthropic, repeatedly attacked Anthropic on X this week, claiming the company "hates Western civilization."

The CEO of Anthropic did not attend Trump's inauguration last year. The CEO of OpenAI did.

Kill the chicken to scare the monkeys

So let’s break down what happened.

Same principles, same red lines. Anthropic added one extra layer of safeguards, stood on the wrong side, and struck the wrong posture, and it has been labeled a national security threat on par with Huawei.

OpenAI left out that one layer and, having kept up good relations, secured the contract. Is this a victory of principle, or the pricing of principle?

This is not the first time the Pentagon's contracts have been boycotted.

In 2018, over 4,000 Google employees signed a petition, and more than a dozen resigned to protest the company’s involvement in Project Maven, a Pentagon initiative that used AI to analyze drone footage and help the military identify targets more quickly.

Google eventually pulled out, declining to renew the contract. The employees won.

Eight years later, the same controversy has resurfaced. But this time, the rules have changed completely. An American company said it was willing to do business with the military, but that there were two things it would not do. The U.S. government's response was to shut it out of the entire federal system.

Moreover, the label "supply chain security risk" has far greater consequences than losing a $200 million contract.

Anthropic's revenue this year is approximately $14 billion, so a $200 million contract, roughly 1.4 percent of that figure, is negligible. But the implication of this label is that any company doing business with the U.S. military cannot use Claude.

These companies don't need to agree with the Pentagon's stance. They only need to do a risk assessment: continuing to use Claude could mean losing government contracts, while switching to another model carries no such risk.

The choice is easy. That’s the real signal here.

Whether Anthropic can weather this is beside the point; what matters is whether the next company will dare to hold its ground. It will look at this outcome, weigh the cost of holding to its principles, and make a very rational decision.

Look back at the photo from India: everyone else had their hands linked above their heads, while those two each raised a clenched fist.

Perhaps this is the norm.

AI companies may share the same principles, but their hands don't always connect.