Author: Claude, DeepChain TechFlow

Shenchao Summary: On April 14, World Quantum Day, NVIDIA released Ising, which it calls the world's first open-source family of AI models for quantum computing. Its error-correction decoding models deliver up to 2.5 times the speed and three times the accuracy of the open-source industry standard.

Quantum computing stocks surged across the board today, with IonQ up 18% and D-Wave up 15%. Separately, NVIDIA Chief Scientist William Dally revealed at GTC 2026 that AI has cut the work of migrating a chip standard cell library from eight engineers over ten months to a single GPU running overnight, with results that match or exceed human designs.

NVIDIA is using AI to accelerate two of the most challenging engineering problems: making quantum computers truly viable and making GPU design faster and better.

On April 14, World Quantum Day, NVIDIA launched NVIDIA Ising, the world's first open-source AI model family for quantum computing, and quantum-related stocks rallied broadly. Around the same time, NVIDIA Chief Scientist William Dally described at GTC 2026 the latest advances in applying AI to the company's internal chip design process, including one task that now runs several hundred times faster.

Two lines of evidence point to the same conclusion: AI is transitioning from an "application-layer tool" to the "infrastructure of infrastructure," accelerating both downstream industries (such as quantum computing) and the hardware evolution of AI itself.

The world's first open-source quantum AI model targets the two major bottlenecks in quantum computing.

According to NVIDIA's April 14 press release, the initial Ising family spans two model domains, Ising Calibration and Ising Decoding, which target the two key bottlenecks to practical quantum computing.

Qubits in quantum processors are inherently noisy; even the best current quantum processors make approximately one error per thousand operations. For quantum computers to be practically useful, the error rate must be reduced to below one in a trillion.
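
The gap between those two error rates is nine orders of magnitude, and error correction closes it by encoding each logical qubit in many physical qubits. As a rough illustration only, the sketch below uses the common surface-code scaling heuristic p_L ≈ A·(p/p_th)^((d+1)/2), where d is the code distance. The threshold p_th = 1% and prefactor A = 0.03 are assumed textbook-style values, not figures from NVIDIA or the article. Under those assumptions, a distance of 21 (about 881 physical qubits per logical qubit) is needed, and a decoder must interpret the measurements from all of those qubits in real time.

```python
import math

P_PHYSICAL = 1e-3   # about one error per thousand operations (from the article)
P_TARGET = 1e-12    # below one in a trillion (from the article)

# Assumed surface-code parameters (illustrative, not from the article or NVIDIA)
P_THRESHOLD = 1e-2
PREFACTOR = 0.03

def logical_error_rate(p, d, p_th=P_THRESHOLD, a=PREFACTOR):
    """Heuristic logical error rate p_L ~ A * (p / p_th) ** ((d + 1) / 2)."""
    return a * (p / p_th) ** ((d + 1) / 2)

def min_distance(p, target, p_th=P_THRESHOLD):
    """Smallest odd code distance whose heuristic p_L falls below target."""
    if p >= p_th:
        raise ValueError("above threshold: adding distance does not help")
    d = 3
    while logical_error_rate(p, d, p_th) >= target:
        d += 2  # surface-code distances are odd
    return d

gap = math.log10(P_PHYSICAL / P_TARGET)
d = min_distance(P_PHYSICAL, P_TARGET)
qubits = 2 * d * d - 1  # rotated surface code: d^2 data + (d^2 - 1) measurement qubits
print(f"gap: {gap:.0f} orders of magnitude; distance {d}; ~{qubits} physical qubits per logical qubit")
```

Changing either assumed parameter moves the answer, which is the point: the closer hardware gets to threshold, the larger the codes, and the more syndrome data the decoder must keep up with.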

Ising Calibration is a 35-billion-parameter vision-language model that automatically interprets measurement data from quantum processors and makes calibration decisions, cutting calibration time from days to hours. Ising Decoding consists of a pair of 3D convolutional neural networks, one optimized for speed and one for accuracy, that perform real-time decoding for quantum error correction; NVIDIA reports 2.5 times the speed and three times the accuracy of PyMatching, the current open-source industry standard.
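
Why a 3D network? A common way to present syndrome data to a neural decoder (assumed here; the source does not describe Ising Decoding's input format) is to stack successive rounds of stabilizer measurements into a volume with two spatial axes and one time axis, so a physical error shows up as a local cluster of detection events in space-time. The sketch below shows only the basic operation that one filter of a 3D convolutional layer applies to such a volume, written in plain Python for readability; a real decoder would use a deep-learning framework and learned weights. PyMatching, the baseline in NVIDIA's comparison, instead decodes by finding a minimum-weight perfect matching between detection events.

```python
def conv3d_same(volume, kernel, bias=0.0):
    """One filter of a 3D conv layer: zero-padded cross-correlation followed by ReLU.

    volume is indexed [round][row][col]; the output has the same shape.
    """
    T, H, W = len(volume), len(volume[0]), len(volume[0][0])
    kt, kh, kw = len(kernel), len(kernel[0]), len(kernel[0][0])
    ct, ch, cw = kt // 2, kh // 2, kw // 2  # kernel centre offsets
    out = [[[0.0] * W for _ in range(H)] for _ in range(T)]
    for t in range(T):
        for y in range(H):
            for x in range(W):
                acc = bias
                for dt in range(kt):
                    for dy in range(kh):
                        for dx in range(kw):
                            tt, yy, xx = t + dt - ct, y + dy - ch, x + dx - cw
                            if 0 <= tt < T and 0 <= yy < H and 0 <= xx < W:
                                acc += kernel[dt][dy][dx] * volume[tt][yy][xx]
                out[t][y][x] = max(acc, 0.0)  # ReLU activation
    return out

# Hypothetical input: 3 measurement rounds of a 5 x 5 grid of detectors,
# with a single detection event in the middle round.
rounds, size = 3, 5
syndromes = [[[0.0] * size for _ in range(size)] for _ in range(rounds)]
syndromes[1][2][2] = 1.0
box = [[[1.0] * 3 for _ in range(3)] for _ in range(3)]  # 3x3x3 all-ones filter
features = conv3d_same(syndromes, box)
```

The single event spreads into a 3x3x3 neighbourhood that spans adjacent rounds as well as adjacent detectors, which is how stacked 3D layers can relate events separated in both space and time.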

NVIDIA's Quantum Product Director, Sam Stanwyck, explained the rationale behind the open-source strategy at the launch event: each quantum hardware vendor has unique noise characteristics, and open-source models let vendors fine-tune locally on their own data, improving performance without exposing proprietary information.

NVIDIA CEO Jensen Huang was more direct, stating in his announcement that AI is becoming the control plane for quantum machines, transforming fragile qubits into scalable, reliable quantum GPU systems.

According to NVIDIA, several institutions have already adopted Ising models, including the Harvard John A. Paulson School of Engineering and Applied Sciences, Fermilab, IQM Quantum Computers, Lawrence Berkeley National Laboratory, and the UK National Physical Laboratory.

Quantum computing stocks surged collectively, with IonQ rising 18% in a single day.

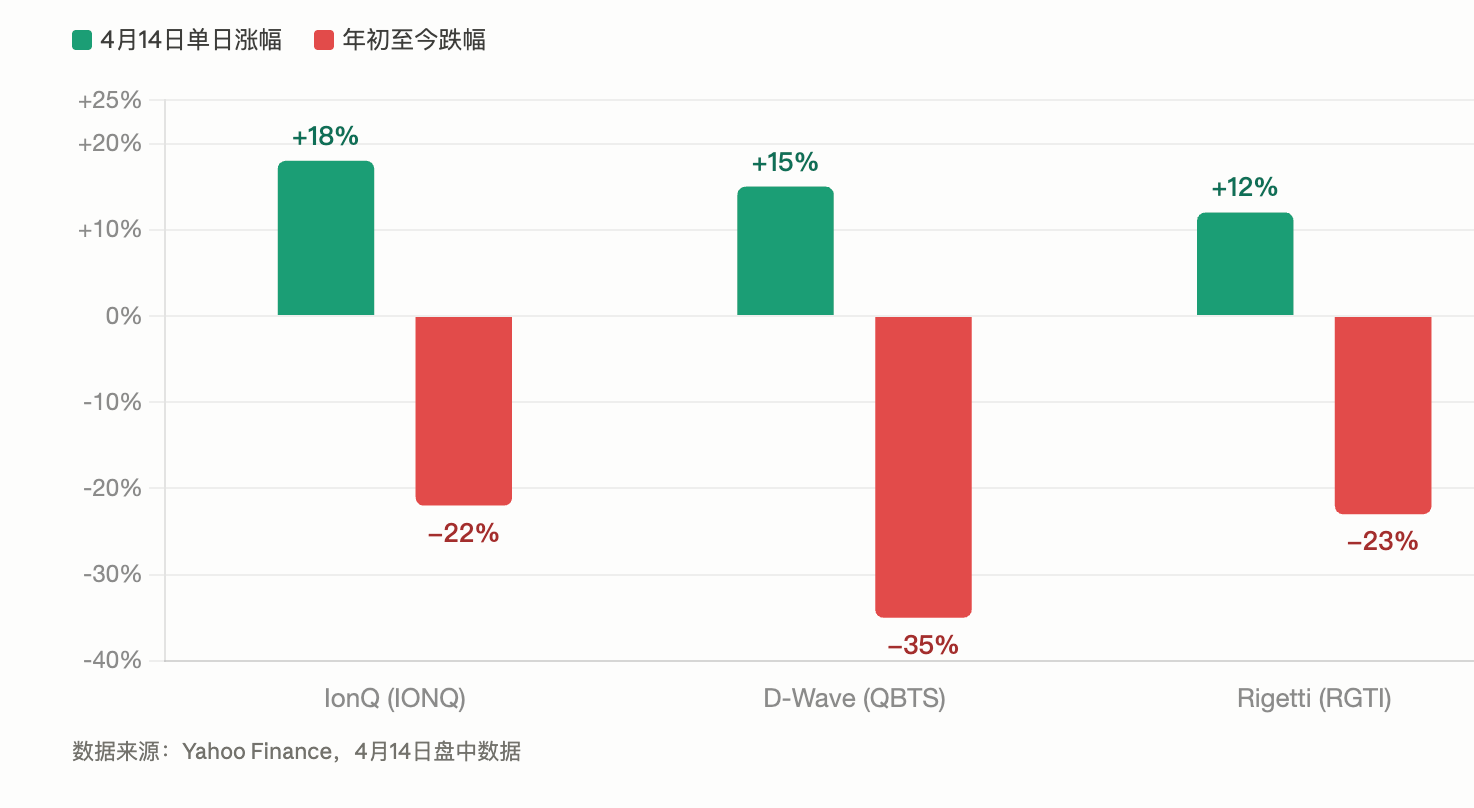

On the day Ising was released, U.S. quantum stocks experienced a collective surge. According to Yahoo Finance data, IonQ rose approximately 18%, D-Wave Quantum increased about 15%, and Rigetti Computing climbed around 12%.

This rally occurred against the backdrop of widespread deep corrections among quantum computing stocks year-to-date. As of April 14, IonQ had declined approximately 22% for the year, D-Wave by about 35%, and Rigetti by around 23%. While the double-digit rebound that day did not alter the overall downward trend for the year, the synchronized magnitude of the move remained notable.
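
A quick way to see why one double-digit jump barely dents a large year-to-date loss: undoing a fractional decline x takes a gain of 1/(1 - x) - 1. The sketch applies that to the year-to-date figures quoted above. The article does not say whether those figures already include the April 14 move, so treat the output as illustrative.

```python
def gain_to_recover(decline):
    """Fractional gain needed to undo a fractional decline (0.22 means down 22%)."""
    return 1.0 / (1.0 - decline) - 1.0

ytd_declines = {"IonQ": 0.22, "D-Wave Quantum": 0.35, "Rigetti Computing": 0.23}
for name, drop in ytd_declines.items():
    print(f"{name}: down {drop:.0%} YTD, needs +{gain_to_recover(drop):.0%} to break even")
```

Each required gain (roughly 28%, 54%, and 30%) is larger than the one-day moves of 18%, 15%, and 12%.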

It should be noted that Ising's announcement was not the only driver of the move. On the same day, IonQ announced quantum networking milestones and a DARPA contract, while Rigetti announced an $8.4 million order from India's Centre for Development of Advanced Computing (C-DAC). The combination of multiple catalysts amplified the sector's momentum.

Research firm Resonance projects that the global quantum computing market will exceed $11 billion by 2030. On the same day, the Quantum Economic Development Consortium (QED-C) reported that the global quantum market reached $1.9 billion in 2025 and that headcount at pure-play quantum companies grew 14%.
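
Read together, the two figures imply steep growth, but they come from different organizations that may not define the market the same way, so the number below is only a rough illustration. Compound annual growth rate is (end / start)^(1 / years) - 1.

```python
def cagr(start, end, years):
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0

QEDC_2025 = 1.9        # $ billions, QED-C figure for 2025 (from the article)
RESONANCE_2030 = 11.0  # $ billions, Resonance projection for 2030 (from the article)

rate = cagr(QEDC_2025, RESONANCE_2030, 2030 - 2025)
print(f"implied growth: about {rate:.0%} per year")
```

Taken at face value, reaching $11 billion by 2030 from $1.9 billion in 2025 would require roughly 42% growth every year.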

From 80 person-months to one night: AI reshapes NVIDIA's chip design process

While Ising accelerates industries outside NVIDIA, the company is also using AI to transform its own chip design process.

At GTC 2026, NVIDIA Chief Scientist William Dally revealed several concrete examples in a conversation with Google Chief Scientist Jeff Dean. The most striking data came from standard cell library migration: whenever NVIDIA transitions to a new semiconductor process node—such as from 7nm to 5nm—approximately 2,500 to 3,000 standard cells must be redesigned and adapted to the new process. Previously, this task required eight engineers working for about ten months. NVIDIA developed a reinforcement learning tool called NVCell, which now completes the entire process overnight on a single GPU, producing cells that match or even outperform human-designed cells in metrics such as area, power consumption, and latency.
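
The headline numbers can be turned into rough rates. The source does not define "one night," so the sketch assumes 12 hours. Under that assumption, ten calendar months versus one night is a wall-clock speedup of roughly 600x, within the "several hundredfold" range mentioned earlier, and the GPU spends about 14 to 17 seconds per cell. The wall-clock figure ignores that eight engineers worked in parallel; measured in labor, the manual effort was 80 person-months.

```python
ENGINEERS, MONTHS = 8, 10     # manual effort (from the article)
CELLS = (2_500, 3_000)        # standard cells per node migration (from the article)
NIGHT_HOURS = 12              # assumption: "one night" taken as 12 hours
HOURS_PER_MONTH = 730         # approximate calendar month (365 * 24 / 12)

person_months = ENGINEERS * MONTHS
calendar_speedup = MONTHS * HOURS_PER_MONTH / NIGHT_HOURS
gpu_seconds_per_cell = [NIGHT_HOURS * 3600 / n for n in CELLS]

print(f"{person_months} person-months of manual work")
print(f"wall-clock speedup: ~{calendar_speedup:.0f}x")
print(f"GPU time per cell: {gpu_seconds_per_cell[1]:.1f}-{gpu_seconds_per_cell[0]:.1f} s")
```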

According to Tom's Hardware, Dally described the process as resembling "a video game where you fix design rule violations," which is precisely the type of trial-and-error optimization that reinforcement learning excels at.
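
Dally's "video game" framing maps naturally onto a reinforcement learning loop: observe the layout, make an edit, receive a reward, repeat. The toy below is purely hypothetical, since NVCell's actual states, actions, and rewards are not described in the source: a row of four sites, each either violating a rule or not, where editing one site also disturbs its neighbours. Tabular Q-learning with a reward of -1 per edit learns to clear every layout, and a breadth-first search is included only to check that the learned policy uses the fewest possible edits.

```python
import random
from collections import deque

N_SITES = 4               # hypothetical: four sites in a row
N_STATES = 1 << N_SITES   # bit i set means site i currently violates a rule
CLEAN = 0                 # terminal state: no violations left

def edit(state, i):
    """Toy rule: editing site i flips site i and its immediate neighbours."""
    mask = 0
    for j in (i - 1, i, i + 1):
        if 0 <= j < N_SITES:
            mask |= 1 << j
    return state ^ mask

def greedy(q, s):
    return max(range(N_SITES), key=lambda a: q[s][a])

def train(episodes=5000, alpha=0.5, gamma=0.9, epsilon=0.3, seed=0):
    rng = random.Random(seed)
    q = [[0.0] * N_SITES for _ in range(N_STATES)]
    for _ in range(episodes):
        s = rng.randrange(1, N_STATES)           # random layout with violations
        for _ in range(20):                      # cap episode length
            a = rng.randrange(N_SITES) if rng.random() < epsilon else greedy(q, s)
            s2 = edit(s, a)
            # every edit costs -1; bootstrap from the next state unless it is clean
            target = -1.0 if s2 == CLEAN else -1.0 + gamma * max(q[s2])
            q[s][a] += alpha * (target - q[s][a])  # Q-learning update
            s = s2
            if s == CLEAN:
                break
    return q

def edits_needed(q, s, limit=20):
    """Follow the learned greedy policy; return the edit count, or None if stuck."""
    for n in range(limit + 1):
        if s == CLEAN:
            return n
        s = edit(s, greedy(q, s))
    return None

def fewest_edits(s):
    """Breadth-first search for the true minimum number of edits."""
    dist, frontier = {s: 0}, deque([s])
    while frontier:
        u = frontier.popleft()
        if u == CLEAN:
            return dist[u]
        for a in range(N_SITES):
            v = edit(u, a)
            if v not in dist:
                dist[v] = dist[u] + 1
                frontier.append(v)
    return None

q = train()
print([edits_needed(q, s) for s in range(1, N_STATES)])
```

A real cell layout has vastly more states than a lookup table can hold, which is why production RL tools replace the table with a neural network; the trial-and-error loop is the same idea.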

At a higher level of abstraction, NVIDIA developed proprietary large language models called Chip Nemo and Bug Nemo. These models are fine-tuned on NVIDIA's three decades of proprietary data, including the RTL code, hardware design documents, and architectural specifications for every GPU in the company's history. According to Dally, junior engineers can ask Chip Nemo questions directly instead of repeatedly interrupting senior designers. He called Chip Nemo "a very patient mentor."

At the circuit optimization level, NVIDIA has also applied reinforcement learning to classic circuit design problems such as carry-lookahead chains. Dally stated that the AI-generated designs are “completely bizarre schemes humans would never think of, yet they perform 20% to 30% better than human-designed circuits.”
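
For background, the carry logic of an adder is a classic place where structure choices trade area against delay. The sketch below is textbook material, not NVIDIA's RL method (which the source does not detail): it contrasts a ripple-carry chain, which needs n sequential steps for n bits, with a Kogge-Stone parallel-prefix network, one well-known human-designed structure that computes the same carries in about log2(n) levels at the cost of more logic and wiring. The space of such prefix structures is large, which leaves room for the unusual designs Dally described.

```python
def to_bits(x, n):
    """Little-endian bit list: index 0 is the least significant bit."""
    return [(x >> i) & 1 for i in range(n)]

def ripple_carries(a, b, cin=0):
    """Carry chain one bit at a time: n sequential steps for n bits."""
    carries = [cin]
    for ai, bi in zip(a, b):
        c = carries[-1]
        carries.append((ai & bi) | (c & (ai ^ bi)))
    return carries

def combine(hi, lo):
    """Associative (generate, propagate) operator; `hi` covers the more significant span."""
    g_hi, p_hi = hi
    g_lo, p_lo = lo
    return g_hi | (p_hi & g_lo), p_hi & p_lo

def kogge_stone_carries(a, b, cin=0):
    """Parallel-prefix carries; returns (carries, number of combine levels)."""
    gp = [(ai & bi, ai ^ bi) for ai, bi in zip(a, b)]
    n, dist, levels = len(gp), 1, 0
    while dist < n:
        # each position combines with the one `dist` bits below it, all in parallel
        gp = [combine(gp[i], gp[i - dist]) if i >= dist else gp[i] for i in range(n)]
        dist *= 2
        levels += 1
    # gp[i] now summarizes bits 0..i, so the carry out of bit i is G | (P & cin)
    return [cin] + [g | (p & cin) for g, p in gp], levels

def add(x, y, n, cin=0):
    """n-bit addition using the prefix carries; the final carry becomes bit n."""
    a, b = to_bits(x, n), to_bits(y, n)
    carries, _ = kogge_stone_carries(a, b, cin)
    s = [ai ^ bi ^ c for ai, bi, c in zip(a, b, carries)]
    return sum(bit << i for i, bit in enumerate(s)) + (carries[n] << n)

print(add(0b1011, 0b0110, 4))  # 11 + 6, prints 17
```

For 8 bits the prefix network needs 3 combining levels where the ripple chain needs 8 steps; the RL approach searches for structures between and beyond such hand-designed extremes.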

There is still a long way to go before AI can independently design chips.

Dally was also clear about expectations: while he is eager to reach fully end-to-end AI chip design, NVIDIA is still far from that goal.

For now, AI complements NVIDIA's chip designers rather than replacing them. AI is making progress in areas such as standard cell placement, bug classification and summarization, routing prediction, and architecture space exploration, but a fully automated end-to-end workflow has not yet been achieved. In the long term, Dally envisions multi-agent systems in which different AI models each handle a distinct stage of the design process, mirroring the division of labor in human engineering teams.

According to Computer Weekly, Dally and Dean also discussed how AI agents are affecting traditional software: when agents operate far faster than humans, tools designed for human users become performance bottlenecks, so everything from programming tools to business applications will need to be redesigned.