Original author: David, Shenchao TechFlow

On March 1, missiles and drones from Iran struck the Gulf region, with one landing on an Amazon data center in the UAE.

The resulting fire at the data center caused a power outage that disrupted approximately 60 cloud services.

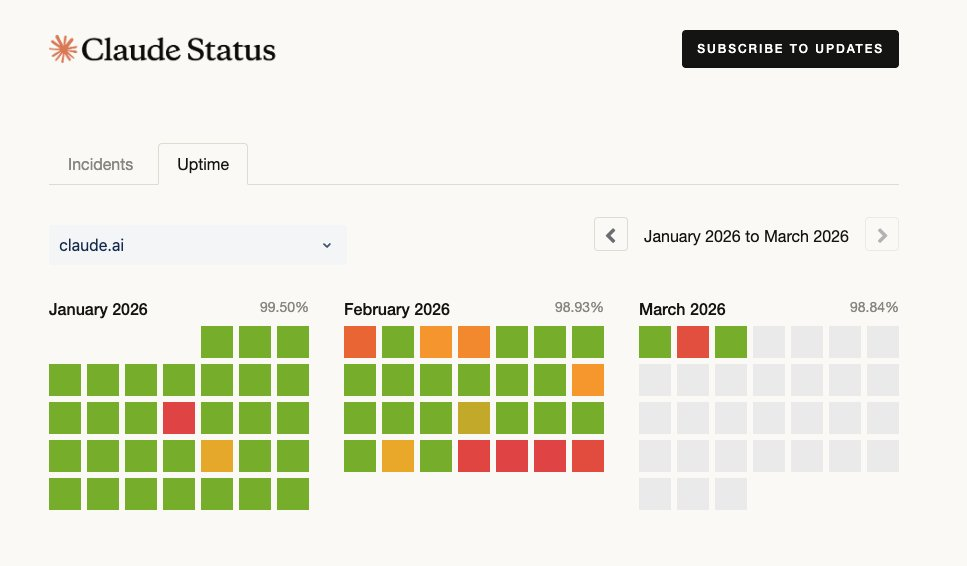

One of the world's largest AI models, Claude, runs on Amazon's cloud. On the same day, Claude experienced a global outage.

Anthropic's official explanation was that a surge in user traffic overwhelmed its servers.

As of press time, complaints about Claude being unavailable are still circulating on social media, and the prominent prediction market Polymarket already lists a market titled "How many more outages will Claude have in March?"

If it is ultimately confirmed that Iran was responsible, this will be a first in human history:

A commercial data center physically destroyed in war.

But why would a civilian data center be bombed?

Go back two days. On February 28, the United States and Israel conducted a joint airstrike on Iran, killing Supreme Leader Khamenei and a number of senior officials.

A significant portion of the intelligence analysis, target identification, and battlefield simulation for this airstrike was assisted by Claude. Through collaboration between the military and the data analytics company Palantir, Claude had long been integrated into the U.S. military’s intelligence system.

Ironically, hours before the airstrike, Trump ordered a complete ban on Anthropic because the company refused to hand its AI over to the Pentagon without restrictions. But even with the ban in place, the war still had to be fought.

By public accounts, extracting Claude from the military's systems would take at least six months.

So the ink on the ban was barely dry when the U.S. military used Claude to help bomb Iran. Then Iran retaliated, and missiles landed on a data center running Claude.

Source: Bloomberg

The data center was most likely not targeted for destruction, but rather caught in the crossfire. However, regardless of whether the missile was aimed at the data center, one thing is certain:

Truth lies within the range of artillery, and so does AI. Both sides—the one firing the artillery and the one being hit—are within its range.

AI mega-infrastructure built on the Middle East's powder keg

Over the past three years, Silicon Valley has moved half of the AI industry to the Gulf.

The reason is simple: the UAE and Saudi Arabia have the world's wealthiest sovereign wealth funds, access to cheap electricity, and one key regulation:

If you want to serve my customers, your data must be stored on my territory.

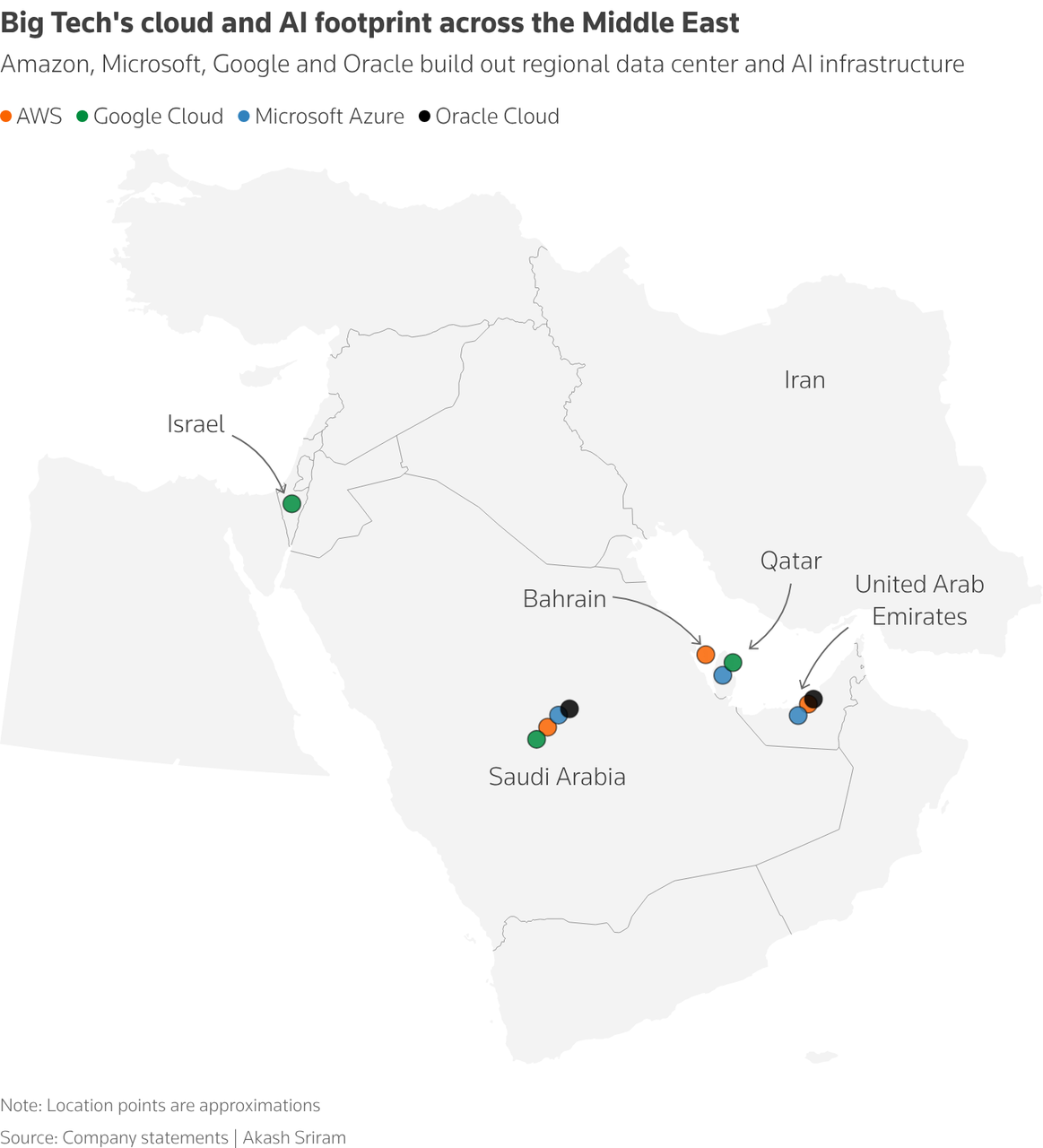

Amazon has opened data centers in the UAE and Bahrain, and is investing $5.3 billion to build another one in Saudi Arabia; Microsoft has nodes in the UAE and Qatar, and its facility in Saudi Arabia is also complete.

OpenAI, in collaboration with NVIDIA and SoftBank, is building an AI campus in the UAE worth over $30 billion, touted as the largest computing facility outside the United States.

In January this year, the United States, the UAE, and Qatar signed an agreement called "Pax Silica," which translates to "Peace of Silicon." It sounds quite beautiful.

The core of the agreement is to control the flow of chips and ensure that advanced chips do not fall into Chinese hands.

In exchange, the UAE received approval to import hundreds of thousands of NVIDIA's most advanced processors annually. G42 in Abu Dhabi has severed ties with Huawei, and Saudi Arabia’s AI companies have pledged not to purchase Huawei equipment...

The entire Gulf's AI infrastructure—from chips to data centers to models—is fully aligned with the United States.

These agreements account for everything: chip export controls, data sovereignty, investment reciprocity, and the risk of technology leakage.

But none of them considered that someone might use a missile to blow up the server room.

After the Amazon data center fire, an international security scholar at Qatar University made a remark that I found quite apt:

These security frameworks were designed for supply chain control and political alignment; physical security has never been on the agenda.

Cloud computing has been telling the same story for ten years: elasticity, redundancy, decentralization. But data centers are physical buildings with addresses, walls, roofs, and coordinates. No matter how advanced your chips are, if the data center is destroyed, it’s destroyed.

"Cloud" is a metaphor; the data center is not.

AI seems intangible, running in code and floating in the cloud. But code runs on chips, chips are installed in data centers, and data centers are built on Earth.

Who protects AI?

This time, the Amazon data center can be called collateral damage or, put optimistically, a facility merely caught in the crossfire.

But what about next time?

As geopolitical conflicts escalate worldwide, if your data center runs AI models that help identify targets on the other side, the other side has no reason not to treat your data center as a military target.

International law has no answer to this question either.

Current laws of war address "dual-use facilities," but those provisions refer to factories and bridges—no one considered data centers.

A data center that runs banking transactions during the day and military intelligence analysis at night—is it civilian or military?

In peacetime, data center locations are chosen based on latency, electricity costs, and policy incentives... But when war breaks out, none of that matters—what matters is how far your server room is from the nearest military base.

So, this bombardment has started to shift everyone's attention.

Until now, everyone shared the same worry: will AI take my job? Almost no one raised another question:

How vulnerable is AI itself before it replaces you?

A regional conflict took down the Middle East node of the world’s largest cloud provider for an entire day—and that was just one data center.

There are currently nearly 1,300 hyperscale data centers worldwide, with another 770 under construction. These facilities consume increasing amounts of electricity, water, and money, while also storing an ever-growing volume of data—your savings, your medical records, your food delivery orders, and even a nation’s military intelligence...

Yet the protection for these server rooms may still amount to little more than fire suppression systems and backup generators.

When AI becomes a nation's infrastructure, its security is no longer just one company's concern. Who protects AI? The cloud providers? The Pentagon? Or the United Arab Emirates' air defense system?

This question was theoretical three days ago. It isn't anymore.

AI is within range of the artillery. In fact, it's not just AI. In this era, what isn't within range?