Original author: Yi Haotian

Amid the current wave of AI, how can lawyers actually use it without breaching their duty of confidentiality? Pasting client contracts directly into ChatGPT could lead to disciplinary action. This article walks through my setup from three angles: lawyers' confidentiality duties, the key considerations, and choosing AI service providers.

Lawyers' duty of confidentiality

1. China: Article 33 of the Lawyers Law

First, Article 33 of the Lawyers Law of the People's Republic of China provides:

Lawyers shall keep confidential any state secrets and commercial secrets learned during professional activities and shall not disclose clients’ privacy. Lawyers shall maintain confidentiality regarding any circumstances or information learned during professional activities that clients or other parties do not wish to be disclosed.

In serious cases, breaches can also carry criminal liability: Article 308-1 of the Criminal Law defines the offense of disclosing information from cases that should not be made public. In addition, Article 38 of the Measures for the Administration of Lawyers' Practice explicitly prohibits lawyers from disclosing trade secrets and personal privacy learned in the course of their practice.

Neither the local bar associations nor the Ministry of Justice has yet issued detailed guidance on lawyers' use of generative AI, so we can look to the requirements that apply to our counterparts in the United States.

2. United States: ABA Model Rule 1.6 and NY RPC Rule 1.6

For a lawyer licensed in New York (or any other U.S. state), the duty to maintain client confidentiality is not merely an ethical obligation but an enforceable disciplinary rule.

New York State Rule of Professional Conduct 1.6 (NY RPC Rule 1.6) provides:

A lawyer shall not knowingly reveal confidential information... unless the client gives informed consent.

The term "confidential information" here is extremely broad. It is not limited to privileged communications; it covers information the lawyer acquires during the representation, whatever its source, including the client's name, address, financial data, transaction terms, and business strategies.

More importantly, ABA Model Rule 1.6(c) provides (New York's Rule 1.6(c) imposes a parallel duty):

A lawyer shall make reasonable efforts to prevent the inadvertent or unauthorized disclosure of, or unauthorized access to, information relating to the representation of a client.

In other words, we must not only refrain from actively disclosing client information but also take reasonable measures to prevent leaks.

In July 2024, the ABA issued Formal Opinion 512, its first comprehensive ethics guidance on lawyers' use of generative AI. The opinion states:

Before inputting information relating to the representation of a client into a generative AI tool, lawyers must evaluate the risk that the information will be disclosed to or accessed by others, whether inside or outside the firm.

Opinion 512 compares AI tools to cloud computing services and requires lawyers to:

- Investigate the reliability, security measures, and data processing policies of the AI tools used.

- Ensure the tool's configuration protects confidentiality and security.

- Confirm that the confidentiality obligation is enforceable (e.g., via contractual agreement).

- Monitor for breaches or changes in the provider's policies.
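The four steps above can be tracked as a simple due-diligence record for each provider. The following is a minimal Python sketch. The class and field names are hypothetical labels for the four bullets and are not terms defined by Opinion 512. A checked item only records that the lawyer did the work; it does not certify that the provider is safe.

```python
from dataclasses import dataclass, fields


@dataclass
class ProviderAssessment:
    """Hypothetical due-diligence record for one AI provider.

    Each boolean mirrors one of the four Opinion 512 steps listed above;
    the field names are illustrative, not official terminology.
    """

    provider: str
    reliability_and_security_investigated: bool = False
    configuration_protects_confidentiality: bool = False
    confidentiality_contractually_enforceable: bool = False
    policy_changes_monitored: bool = False

    def open_items(self) -> list[str]:
        """Return the checklist items that have not been satisfied yet."""
        return [
            f.name
            for f in fields(self)
            if isinstance(getattr(self, f.name), bool) and not getattr(self, f.name)
        ]

    def ready_for_client_data(self) -> bool:
        """True only when every checklist item has been satisfied."""
        return not self.open_items()


if __name__ == "__main__":
    review = ProviderAssessment("ExampleAI", reliability_and_security_investigated=True)
    print(review.open_items())
    print(review.ready_for_client_data())
```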

In short: we cannot paste client contracts directly into ChatGPT without first conducting a thorough compliance assessment.

This means that, whatever jurisdiction we practice in, the duty of confidentiality is an absolute bottom line.

3. Why does AI make confidentiality obligations more complex?

When we paste client contracts into consumer-grade AI applications (such as ChatGPT, Claude, or Kimi), the text is transmitted to third-party servers. Even if a provider says it does not use the data to train models, the following risks remain:

- Data transmission: client PII (personally identifiable information) leaves our control and enters third-party infrastructure.

- Training risk: consumer-grade products may use input data for model training (read the terms of service carefully).

- Breach exposure: we end up relying on the provider's security measures to discharge our own ethical obligations.

- Audit gap: we cannot verify what happens to the data after it is transferred.

- Informed consent: obtaining client consent for every AI interaction is impractical.

Most lawyers respond either by avoiding AI entirely (and losing a competitive edge) or by using it carelessly (and risking discipline). Neither is a good answer. I discuss the key considerations in detail in the third section.

OpenClaw: How do I get started?

1. What is OpenClaw?

OpenClaw is an open-source multi-agent AI assistant platform. In simple terms, it’s an “AI gateway” running on our own hardware that can simultaneously manage multiple AI assistants (agents), each with its own role, memory, and tools.

2. Core Features

3. How does it work?

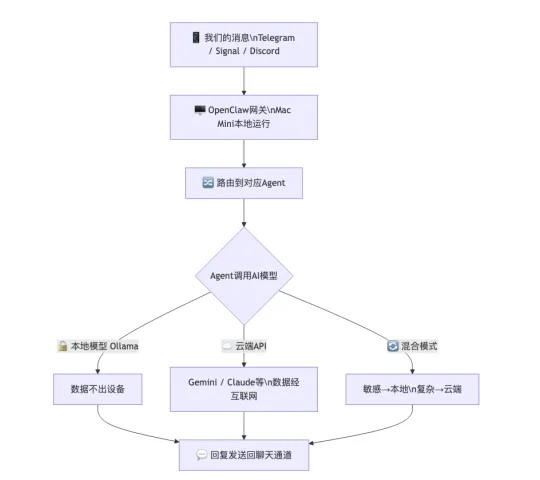

OpenClaw runs as a "gateway" on our local device:

OpenClaw itself is free and open-source, but we need:

- A device to run it on.

You can use any spare computer or a Mac Mini. I recommend a Mac, because the OpenClaw ecosystem currently centers on macOS and Linux. Many developers are working on Windows support, but a Mac is more stable at this stage.

Alternatively, rent a VPS from a provider such as Alibaba Cloud or Tencent Cloud. The official Kimi team has also recently launched a one-click OpenClaw deployment. If you want to try OpenClaw at low cost, start there.

- An API key for the AI models (if you use cloud-based models).

You can buy API access directly from model providers such as Google Gemini, Alibaba Cloud, and Moonshot AI. Besides pricing, buying directly from the developers has another advantage: some offer Batch APIs. For large-volume, non-urgent tasks, these charge 50% less in exchange for returning results within a 24-hour window rather than immediately.

The second option is an LLM aggregator such as OpenRouter or SiliconFlow. These platforms offer a unified interface to many LLMs and include routing features that can switch between models automatically.

Alternatively, install Ollama and run open-source models locally if you prefer not to rely on cloud services. You can choose models that fit your machine's hardware, which makes this option workable on any budget.
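For the local option, Ollama exposes an HTTP API on the machine itself (by default at `http://localhost:11434`), so prompts never leave the device. Below is a minimal sketch that uses only Python's standard library. It assumes Ollama is installed and running and that a model has already been pulled. The helper names are hypothetical, and the model tag is a placeholder you replace with whatever model you installed.

```python
import json
import urllib.request

# Ollama's default local endpoint: traffic stays on this machine.
OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"


def build_local_request(prompt: str, model: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_GENERATE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str, timeout: float = 300.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_local_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `ask_local_model("Summarize this clause: ...", model="<your-model-tag>")` returns the model's answer as a string. The request is built separately from the network call so that it can be inspected without a server.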

4. Why do I use a Mac Mini?

- Isolation from my working environment: a dedicated computer ensures that if OpenClaw malfunctions, it cannot delete my important work files. Renting a VPS also provides this isolation, but VPSes are typically Linux-based and cloud-hosted, which makes them less convenient to use than a local machine. A VPS is still a good option for tasks that need a stable network connection. Rented VPSes also tend to have modest specifications, and upgrading to high-end configurations can be expensive.

- Apple Silicon unified memory: on the M4 chip, the CPU and GPU share a single memory pool, so large AI models can be loaded into memory and run without an expensive discrete GPU. A conventional PC keeps video memory and system memory separate. Unified memory merges them into one pool, so memory can be allocated flexibly to a large model at a lower cost than buying a dedicated graphics card.

- 32 GB RAM: enough to run a 35B-parameter MoE model (such as Qwen 3.5 35B) at roughly 18 tokens per second.

- Very low power consumption, compact size, and minimal noise: the Mac Mini draws about 5 W at idle and 15–30 W when running AI models at full load, so a month of 24/7 operation at typical loads costs less than 10 yuan in electricity. The new Mac Mini is about the size of a palm and fits easily on a bookshelf or the corner of a desk. Even at full load running AI models, it is remarkably quiet.
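The electricity figure is easy to check. The sketch below is a minimal estimate under two assumptions that do not come from the text: a tariff of about 0.6 yuan/kWh, and a machine that spends a given fraction of the month at full load. Under those assumptions, running at a constant 30 W would come to about 13 yuan. The "under 10 yuan" figure therefore depends on how much of the time the machine sits idle.

```python
HOURS_PER_MONTH = 24 * 30  # 720 hours of 24/7 operation


def monthly_cost_yuan(idle_w: float, load_w: float, load_fraction: float,
                      price_per_kwh: float) -> float:
    """Estimate a month's electricity cost for an always-on machine."""
    # Time-weighted average power draw in watts.
    avg_watts = idle_w * (1 - load_fraction) + load_w * load_fraction
    kwh = avg_watts * HOURS_PER_MONTH / 1000
    return kwh * price_per_kwh


# Idle at 5 W, 30 W under load for a quarter of the time, 0.6 yuan/kWh (assumed):
print(round(monthly_cost_yuan(5, 30, 0.25, 0.6), 2))
# Worst case: 30 W around the clock at the same assumed tariff.
print(round(monthly_cost_yuan(5, 30, 1.0, 0.6), 2))
```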

What should we focus on for confidentiality?

When using OpenClaw or any other AI tool for legal work, we need to pay attention to confidentiality at three levels.

1. Confidentiality of communication channels

The communication channel between us and the AI assistant is the first line of defense.

For highly confidential legal matters, I recommend an end-to-end encrypted messaging app as the communication channel. Some may object: "But my clients usually contact me via WeChat." That's true, and when the client initiates contact via WeChat, there is implied consent to use WeChat to transmit information. However, if we proactively send a client's confidential information over a channel that is not end-to-end encrypted, we should at least obtain the client's written consent first.
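The rule of thumb in the paragraph above can be written as an explicit check. This sketch encodes the author's stated approach only. It is not a rule issued by any bar association, and the function name is hypothetical.

```python
def may_send_confidential(channel_is_e2ee: bool,
                          client_initiated_on_channel: bool,
                          has_written_consent: bool) -> bool:
    """Decide whether confidential client information may go over a channel."""
    if channel_is_e2ee:
        return True  # an end-to-end encrypted messenger is acceptable by default
    if client_initiated_on_channel:
        return True  # the client chose this channel: treated as implied consent
    return has_written_consent  # otherwise, obtain written consent first


# Proactively sending over an unencrypted channel without consent is not allowed:
print(may_send_confidential(False, False, False))
```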

2. Choosing an API Provider: Saving Money vs. Maintaining Privacy

This is the most critical yet often overlooked issue.

- Coding Plan

Recently, domestic cloud providers have launched highly attractive "Coding Plans" that offer API access to top-tier models at very low prices.

Taking Alibaba Cloud's Bailian as an example:

- Lite Plan: First month ¥7.9, second month ¥20, then ¥40/month thereafter

- Pro Plan: First month ¥39.9, second month ¥100, then ¥200/month thereafter

- Models included: Qwen3.5-Plus, Kimi K2.5, GLM-5, MiniMax M2.5
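Because the price steps up after the first two months, the first-year total is the useful figure for budgeting. The sketch below computes it from the Bailian prices listed above. Current prices may differ, and the function name is hypothetical.

```python
def first_year_cost(first_month: float, second_month: float, regular: float) -> float:
    """Total for 12 months: two promotional months, then ten at the regular price."""
    return round(first_month + second_month + 10 * regular, 2)


plans = {"Lite": (7.9, 20, 40), "Pro": (39.9, 100, 200)}
for name, prices in plans.items():
    print(f"{name}: ¥{first_year_cost(*prices)}")
```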

The price is certainly attractive, and with a flat subscription you don't have to worry about usage-based charges running up. However, note this statement in the Bailian Coding Plan's data policy:

During the use of Coding Plan, model inputs and generated content will be used to improve services and optimize the model.

In other words, everything we input, including legal documents that may contain client information, will be used to improve services and optimize the model. For lawyers, sending client information through such a plan directly violates the duty of confidentiality.

- Key information to consider when selecting an API

Because the Coding Plan is unsuitable for confidential information (and cloud providers never designed it for that purpose), buying API tokens directly or subscribing to a paid service is the better option. When choosing an AI model API, lawyers should review the following points in the service agreement:

- Comparison of Major API Providers

It is important to stress that even when API providers promise ZDR (zero data retention) and state that data is not used for training, lawyers cannot fully verify that these commitments are honored. I do not expect cloud providers to grant individual users audit rights. Returning to ABA Opinion 512, lawyers should investigate an AI tool's security measures and confirm that confidentiality is properly enforced. If we cannot audit how confidentiality measures are implemented, I believe the API does not meet the requirements of Opinion 512. LLMs are black boxes: we cannot confirm what happens to our data after it is transmitted.

3. The Safest Option: Local Model

If our confidentiality requirements are the strictest, running the model locally is the only way to guarantee that data never leaves our control.

Benefits:

• Data never leaves your device, 100% private

• No API fees, no usage restrictions

• No internet dependency, available anytime

• Unaffected by changes in the provider's policies

Disadvantages:

• Slower inference speed (18 tok/s vs. over 100 tok/s in the cloud)

• The model's capabilities are weaker than those of leading cloud-based models (such as GPT-4o and Claude Opus)

• Hardware costs are required

• The context window is limited by memory

Recommended local model:

Note: MoE (Mixture of Experts) is a model architecture in which only a subset of the parameters is used for each token. A 35B MoE model activates only about 3B parameters per token. This sharply reduces the computation per token, although all 35B parameters must still be held in memory. With the weights quantized, the full model fits in a Mac Mini's 32 GB of memory, and the small active set is what keeps inference fast enough to run smoothly.
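Here is a back-of-the-envelope check of the note above. The sketch assumes the weights are quantized to about 4 bits per parameter, a common setting for local inference that the text does not state. It also ignores the extra memory needed for the context cache and the operating system. It shows why the 35B weights fit in 32 GB, while the roughly 3B active parameters determine how much computation each token needs.

```python
def weight_memory_gb(params_billion: float, bits_per_param: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


TOTAL_B, ACTIVE_B = 35, 3  # total vs. per-token active parameters (billions)

print(f"16-bit weights: {weight_memory_gb(TOTAL_B, 16):.1f} GB")  # exceeds 32 GB
print(f"4-bit weights:  {weight_memory_gb(TOTAL_B, 4):.1f} GB")   # fits, with headroom
print(f"Active share per token: {ACTIVE_B / TOTAL_B:.0%}")
```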

My settings

As a lawyer licensed to practice in New York, here is how I actually configured OpenClaw based on Opinion 512.

1. Communication channel

Signal (end-to-end encrypted) is the primary channel for legal matters. All communication with my legal AI assistant runs over Signal, so it is fully encrypted at the communication level. Non-sensitive day-to-day operations are handled via Telegram.

2. Model Configuration

I use a hybrid model strategy:

3. Core Security Process: Anonymization Pipeline

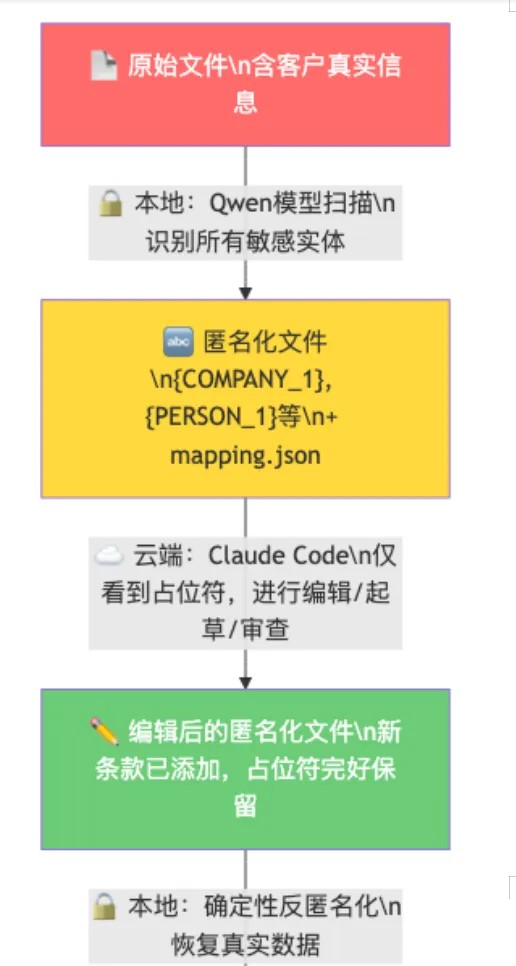

This is the most important part of the entire configuration. When I need to use powerful cloud-based AI to draft or review sensitive documents:

Key point: The mapping.json file (which maps real data to placeholders) never leaves our devices. The cloud AI only sees "{COMPANY_1} acquires 30% equity in {COMPANY_2}"—it does not and cannot know the actual parties involved.
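The core idea of the pipeline can be sketched in a few lines of Python. This is a minimal illustration, not the LDA tool from the author's repository. It assumes the sensitive names are supplied by hand as a dict; a real tool would also need to detect them, for example with entity recognition. All function names here are hypothetical.

```python
import json
import re
from pathlib import Path

# Matches placeholders such as {COMPANY_1} or {PERSON_12}.
PLACEHOLDER = re.compile(r"\{[A-Z_]+_\d+\}")


def anonymize(text: str, entities: dict[str, str]) -> tuple[str, dict[str, str]]:
    """Replace known sensitive strings with numbered placeholders.

    `entities` maps each real value to a category, e.g. {"Acme Ltd": "COMPANY"}.
    Returns the masked text and a placeholder -> real value mapping.
    """
    counters: dict[str, int] = {}
    placeholder_for: dict[str, str] = {}
    for real, category in entities.items():
        counters[category] = counters.get(category, 0) + 1
        placeholder_for[real] = f"{{{category}_{counters[category]}}}"
    # Replace longer names first so "Acme Holdings Ltd" is not split by "Acme".
    for real in sorted(placeholder_for, key=len, reverse=True):
        text = text.replace(real, placeholder_for[real])
    mapping = {ph: real for real, ph in placeholder_for.items()}
    return text, mapping


def deanonymize(text: str, mapping: dict[str, str]) -> str:
    """Restore real values; unknown placeholders are left untouched."""
    return PLACEHOLDER.sub(lambda m: mapping.get(m.group(0), m.group(0)), text)


def save_mapping(mapping: dict[str, str], path: Path) -> None:
    """Write the mapping to local disk only; it is never sent to the cloud."""
    path.write_text(json.dumps(mapping, ensure_ascii=False, indent=2), encoding="utf-8")


def load_mapping(path: Path) -> dict[str, str]:
    """Read a mapping previously written by save_mapping."""
    return json.loads(path.read_text(encoding="utf-8"))


if __name__ == "__main__":
    masked, mapping = anonymize(
        "Alpha Capital Ltd acquires 30% equity in Beta Tech Co.",
        {"Alpha Capital Ltd": "COMPANY", "Beta Tech Co": "COMPANY"},
    )
    print(masked)  # only this masked text would go to the cloud model
    print(deanonymize(masked, mapping))
```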

4. Why choose Claude Code, a consumer-grade AI, as a cloud-based editing tool?

- Subscription plan: the Max plans at $100/month or $200/month are more cost-effective than pay-as-you-go API pricing for heavy use.

- Latest and most powerful models: subscribers get direct access to newly released models (such as Claude Opus 4).

- API pricing comparison: the Claude API charges $3 per million input tokens and $15 per million output tokens (Sonnet-tier pricing; Opus costs more). Reviewing a complex contract can consume millions of tokens, so pay-per-use costs quickly exceed the subscription fee. If price is no concern, calling the Opus API directly on anonymized text offers a smoother experience, though at a higher cost. At my current token usage, switching entirely to the Claude API would cost over $500 per month.
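The pay-per-use arithmetic can be made explicit. The sketch below uses the per-million-token prices quoted above. The token counts in the example are hypothetical. The optional batch flag assumes a 50% batch discount like the one described for Batch APIs earlier, which only suits non-urgent work.

```python
def api_cost_usd(input_tokens: int, output_tokens: int,
                 input_price: float = 3.0, output_price: float = 15.0,
                 batch: bool = False) -> float:
    """Cost of one job at per-million-token prices, optionally batch-discounted."""
    cost = input_tokens / 1e6 * input_price + output_tokens / 1e6 * output_price
    return cost * 0.5 if batch else cost


# Hypothetical contract review: 2M input tokens (the document re-read across a
# multi-turn session) and 100k output tokens.
per_review = api_cost_usd(2_000_000, 100_000)
print(f"Per review: ${per_review:.2f}")
print(f"Reviews costing as much as a $200/month plan: {200 / per_review:.1f}")
```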

This solution fully satisfies all requirements of ABA Formal Opinion 512, as the cloud-based AI never receives confidential information.

5. Hardware Requirements

6. Cost Calculation

In contrast, enterprise-level legal AI platforms like Harvey AI cost $1,000–$1,200 per user per month (approximately ¥7,200–8,600) and typically require a minimum of 20 seats.

7. Open-source project

I have open-sourced this configuration and workflow on GitHub:

VibeCodingLegalTools (https://github.com/Reytian/VibeCodingLegalTools): an AI workflow for legal practice compliant with Rule 1.6

The project includes:

- Complete anonymization/de-anonymization tool (LDA)

- OpenClaw Configuration Template

- Agent workspace template

- Customer Memory System Template

- Detailed ethical compliance analysis

My thoughts on legal AI

1. Full localization is ideal but not yet feasible

In an ideal world, lawyers would run AI entirely locally, with all data staying on their own devices and zero risk of leakage. In reality, however:

- Model capability gap: There is a significant capability gap between locally runnable models (at the 35B parameter level) and state-of-the-art cloud-based models (at the trillion-parameter level). For simple legal consultations and information retrieval, local models are sufficient. However, for complex contract drafting, multi-turn legal reasoning, and high-quality text generation, local models still fall short.

- Hardware cost: Running a truly powerful local model (such as one with 70B+ parameters) requires 64GB or more memory, causing hardware costs to rise rapidly. This is economically unfeasible for solo practitioners and small law firms.

- Model update lag: open-source models generally trail commercial state-of-the-art models.

2. Relying entirely on the cloud also has issues

On the other hand, relying entirely on cloud APIs is not the answer either:

Even if API providers promise ZDR (zero data retention) and pledge that data will not be used for training, in practice lawyers have no way to investigate a suspected leak.

The LLM is a black box. We cannot open it to check whether our data has been used for training. We can only rely on the provider's assurances.

As lawyers, we cannot treat "trust" as a compliance strategy. Rule 1.6 requires "reasonable efforts," not reasonable trust.

3. The hybrid model is the optimal solution today

That's why I chose a hybrid model strategy:

1. Daily inquiries → Local model: simple legal questions, information retrieval, and preliminary analysis are all handled locally.

2. Complex tasks → Cloud API: Use trusted APIs when stronger reasoning is required, but avoid transmitting sensitive information.

3. Sensitive Files → Anonymization Pipeline: When cloud-based AI processing of confidential files is required, anonymize them locally first, then send them to the cloud for processing, and finally restore them locally.
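The three rules above amount to a small routing decision. The sketch below assumes the lawyer has already labeled each task: whether it needs stronger reasoning, and whether it contains client information. The enum and function names are hypothetical and are not part of OpenClaw.

```python
from enum import Enum


class Route(Enum):
    LOCAL = "local model"
    CLOUD = "cloud API"
    ANONYMIZE_THEN_CLOUD = "anonymize locally, process in cloud, restore locally"


def route_task(needs_strong_reasoning: bool, contains_client_data: bool) -> Route:
    """Pick where a task runs under the hybrid strategy."""
    if not needs_strong_reasoning:
        return Route.LOCAL  # daily inquiries stay on the device
    if contains_client_data:
        return Route.ANONYMIZE_THEN_CLOUD  # the cloud sees placeholders only
    return Route.CLOUD  # complex but non-sensitive work


for task in [(False, True), (True, False), (True, True)]:
    print(task, "->", route_task(*task).value)
```

Simple tasks stay local even when they involve client data. Client data reaches the cloud only after it has been masked.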

The core idea of this solution is to use technology (anonymization) to bridge the trust gap. We do not need to trust any AI provider to handle our client data securely, because the provider never receives the client data in the first place.

The cloud AI only ever sees "{COMPANY_1}" and "{PERSON_1}", never our clients' real names.

Conclusion

AI won't replace lawyers, but lawyers who use AI will eventually replace those who don't.

The key is not whether to use AI, but how to use it. The duty of confidentiality is the foundation of legal practice; it should not be an obstacle to embracing AI, but rather a standard for selecting AI solutions.

What does Legal AI sell? I think there are two types:

1. Knowledge;

2. Tools.

I believe you lawyers already have sufficient knowledge; what you need is a more suitable tool. When a Mac Mini costs less than one month of a Harvey AI subscription, building your own compliant system may be the more practical choice for independent lawyers.

A Mac Mini, an OpenClaw installation, and an encrypted communication channel: that's all it takes to build a compliant AI legal workstation.

This document does not constitute legal advice. Attorneys should evaluate the workflow described herein in accordance with the specific ethical rules of their jurisdiction and seek professional ethical guidance when necessary.

Reference materials:

- ABA Model Rules of Professional Conduct, Rule 1.6

- ABA Formal Opinion 512 — Generative Artificial Intelligence Tools (2024)

- Law of the People's Republic of China on Lawyers (2017 Revision)

- OpenClaw (https://openclaw.ai/)

- VibeCodingLegalTools, GitHub (https://github.com/Reytian/VibeCodingLegalTools)