Source: CoinW Research Institute

Summary

Gradients is a decentralized AI training subnet (SN56) built on Bittensor, centered around mechanisms such as task posting, miner competition, and verification filtering, transforming model training from a complex technical process into a market-driven network collaboration. Architecturally, it integrates AutoML with distributed computing power to form a training marketplace anchored in incentive mechanisms, lowering the barrier to AI adoption while improving computational efficiency. From an ecosystem and data perspective, Gradients has completed its foundational network setup, but incentive weights and capital inflows remain relatively limited. Gradients fills a critical training infrastructure gap in the TAO ecosystem and explores a new paradigm of “market-driven AI optimization,” with long-term potential to become a key entry point for decentralized AI training.

1. Starting with Web2 AutoML: The Current State and Limitations of AI Training

1.1 What is AutoML?

Traditionally, training an AI model was considered a high-barrier task, requiring engineers to handle data, select models, repeatedly tune parameters, and evaluate performance—a complex and time-consuming process. The emergence of AutoML (Automated Machine Learning) essentially packages these tedious steps into an automated workflow. Think of it as a “tool that builds models automatically”: users only need to provide data and specify their goal—such as classification, prediction, or recognition—and the system automatically handles everything else, including model selection, parameter tuning, and training optimization. This transforms AI from a tool accessible only to a small group of specialized engineers into a capability that ordinary developers and businesses can readily use, marking a crucial step toward the widespread adoption of AI.

1.2 Core Limitations of Traditional AutoML

Currently, mainstream implementations of AutoML are concentrated on cloud provider platforms such as Google Vertex AI and AWS SageMaker, which offer "AI training as a service." Although Web2 AutoML has significantly lowered the barrier to using AI, its underlying model still has clear limitations. First, there is centralization: computing power, pricing, and rules are all controlled by the platform, leaving users heavily dependent on a single provider with little bargaining power. Second, costs are high and opaque—GPU resources essential for AI training are largely held by cloud providers, and pricing mechanisms lack market-driven competition. More critically, optimization efficiency has an upper limit. Traditional AutoML is still fundamentally "a system helping you find the optimal solution"; no matter how complex the system, it remains an optimization along a single technological path. Its exploration space is limited, making it difficult to simultaneously test entirely different approaches. As a result, current Web2 AI training operates as a "closed system," where model training, optimization, and resource scheduling all occur within an environment controlled by a single platform. While efficient, this model is increasingly revealing its boundaries as demand grows.

2. Gradients: Reconstruct AI training with "network"

2.1 What are Gradients: A decentralized AutoML platform

In the previous section, we mentioned that the core issue with traditional Web2 AutoML is its “closed system,” where model training relies on proprietary platforms, offers limited optimization paths, and restricts resource flow. Gradients is a reimagining of this model. Originating from the decentralized engineering community initiated by WanderingWeights and built on the Bittensor network, Gradients is an AI training subnet operating on Subnet 56. Unlike traditional platforms, it does not provide centralized services; instead, it decomposes the training process and delegates it to an open network. Users only need to define their task objectives—such as model type and data—while the rest of the process, including training execution, parameter optimization, and result selection, is automatically handled by the network. In this model, AI training is abstracted from a complex engineering workflow into a simple “submit request, receive result” process, making it more like a general-purpose capability rather than a highly specialized technical task.

2.2 From Closed Systems to Open Collaboration: What Problems Does Gradients Solve?

The core innovation of Gradients lies in transforming the previously closed, single-platform training process into an open, collaborative network. Training tasks are no longer completed by a single system but are distributed among multiple participants who concurrently explore different approaches, with the best results selected through a unified evaluation mechanism. This structure first reduces dependence on centralized service providers, grounding training in distributed computing power; at the same time, dispersed GPU resources are integrated into a single network, fostering a more market-driven allocation of resources through competition. More importantly, model optimization is no longer confined to a single path but continuously approaches better solutions through parallel exploration of multiple methods, thereby raising the overall upper limit of optimization.

2.3 Fundamental Shift: From Tool to “Training Market”

In traditional AutoML, the platform functions more like a tool, using internal algorithms to help users find optimal solutions. In Gradients, however, this process resembles a continuously operating "market": users post requirements, different participants compete on the same task, and results are filtered through an evaluation mechanism. As a result, model performance no longer depends solely on the capability of a single system, but emerges from ongoing competition and iteration among multiple participants. AutoML thus shifts from a relatively closed technical optimization problem into a dynamic, incentive-driven process, enabling optimization capacity to scale continuously as more participants join. This transformation allows AI training to begin exhibiting self-evolving characteristics similar to those of a market.

2.4 Role in the TAO Ecosystem: AI Training Infrastructure Layer

Within Bittensor’s subnet architecture, different subnets perform distinct functions such as inference, data processing, and training, with Gradients operating at the training layer. It transforms distributed computing power into actual model outputs, using task distribution and evaluation mechanisms to continuously schedule and optimize these resources. Simultaneously, it connects computing supply with model demand, turning training from a mere resource consumption process into an organized and optimized networked collaboration. Within this system, Gradients acts as a central hub, converting distributed resources into usable AI capabilities and supporting the development of upper-layer applications.

3. Core Architecture: How AI Training Is Completed Across the Network

In the previous section, we mentioned that Gradients transforms AI training from being completed “within a platform” to being accomplished “through network collaboration.” This section’s core focus is to break down this process in a more intuitive way.

3.1 Distributed Training: How a Single Task Is Completed by Multiple People

You can think of Gradients as a continuously running "collaborative training network." When a user submits a training task, it is not assigned to a single system, but rather distributed simultaneously to multiple participants in the network. These participants each try different training methods using the same data and objectives, and submit their results within a set time frame. The system then evaluates all submissions collectively and selects the best-performing solution. The superior results are rewarded, while the others are eliminated. From the user’s perspective, this process requires only a single task submission—equivalent to simultaneously “calling” multiple different optimization approaches and automatically selecting the optimal solution. The key here is not the strength of any single node, but rather the combination of parallel human effort and automated selection, allowing results to continuously converge toward the optimum.

In this network, there are three main participants: users, miners, and verifiers. Users submit training requests; miners provide computational power and experiment with different training methods; verifiers evaluate the results and select the optimal models. This division of labor enables the training process to run continuously and progressively identify better solutions. Overall, it forms a collaborative network driven by “demand, supply, and evaluation.”

3.2 Market-Driven AutoML

As shown in the previous breakdown of the mechanism, Gradients does not simply move AutoML on-chain; instead, by introducing multi-party participation and incentive structures, it transforms the fundamental logic of model optimization. Traditional AutoML relies on a single system to find optimal solutions within limited pathways, whereas in Gradients, this process is extended across the entire network: different participants continuously experiment with diverse approaches to the same task, with results being consistently evaluated and refined. This turns model optimization from a one-time computational task into a dynamic, iteratively evolving process. Under this mechanism, superior performance yields higher rewards, continuously attracting participants to refine their strategies and drive ongoing improvements in overall outcomes.

4. Incentive and Competition Mechanisms: How AI Training Creates a Positive Feedback Loop

4.1 Incentive Mechanism (TAO-Driven): From Training Actions to Reward Returns

The key to Gradients' long-term operation lies in its underlying incentive mechanism, which relies on Bittensor’s native incentive system. TAO is Bittensor’s native token and serves as the primary vehicle of value within the network: it rewards participants who contribute compute power and model resources, while also enabling participation in subnet weight allocation through staking and other mechanisms, influencing how resources flow between different subnets.

The Bittensor mainnet continuously generates new emissions in the form of TAO (currently approximately 3,600 TAO per day), which are distributed to various subnets according to specific rules. The amount each subnet receives depends on its performance across the network, such as activity level, quality of contributions, and financial support. For the subnet where Gradients operates, the allocated TAO is further distributed internally among participants, with the core principle being that those who contribute better models receive greater rewards.

Specifically, miners submit training results, and verifiers are responsible for testing and scoring these results. The system calculates each participant’s “contribution weight” based on the scores, then distributes rewards according to this weight. Models with better performance—such as stronger generalization and more stable results—earn higher rewards, while verifiers who provide more accurate scores that better reflect true quality also receive greater incentives. This design directly links “doing better” with “earning more,” motivating participants to continuously improve their models.

4.2 Competition Between Subnets: Not Just Internal Competition, But Also External Ranking

In addition to competition within the subnet, Gradients faces “lateral competition” across the entire Bittensor network. Since TAO allocation is dynamic, different subnets compete for higher weights. Only those subnets that consistently deliver high-quality results and attract more participants can secure a larger share of rewards. Thus, Gradients’ incentives depend not only on internal model performance but also on its relative competitiveness within the broader ecosystem. The entire system forms a multi-layered feedback loop: competition among models within subnets, and competition among subnets based on overall performance. Ultimately, computational investment, model effectiveness, and economic returns are tightly linked, creating a self-sustaining positive feedback mechanism.

4.3 Gradients 5.0: From Competition to a Tournament Mechanism

Building on earlier competitive foundations, Gradients evolved into a more structured mechanism known as "tournament-style training." This can be understood as a periodic competition: each training round sets a time window during which multiple participants compete on the same task, gradually being filtered through multiple rounds until the optimal solution is selected. This approach emphasizes phased comparison and centralized evaluation. A key change is that miners no longer submit trained results directly, but instead submit "training methods" (code), which are then uniformly executed by verification nodes. This enhances fairness by eliminating interference from differing computational environments and better protects the privacy of data and training processes. Additionally, winning solutions are often archived as reusable methods, akin to continuously accumulating "best practices." Over time, this mechanism not only selects the optimal models but also builds an ever-evolving library of training methods.

5. Ecosystem Status

5.1 Participant Structure: A Collaborative Network Composed of Demand, Supply, and Evaluation

The Gradients ecosystem consists of three core roles: users (demand side), miners (supply side), and verifiers (evaluation side). Users primarily include AI developers, small and medium-sized enterprises, and Web3 builders—groups that typically possess some technical expertise but lack sufficient compute power or complete model training capabilities, making them more inclined to leverage Gradients to build models at a lower cost. Miners provide GPU compute power and compete to participate in training tasks, with their primary motivation being TAO rewards. Verifiers are responsible for evaluating and ranking training results, serving as a critical component in ensuring model quality and the effective operation of the mechanism.

From a more granular user profile perspective, Gradients’ actual user base exhibits a distinct “semi-developer” characteristic: distinct from top AI labs and entirely non-technical end users, it is primarily composed of developers and Web3 technology users with some engineering capability. This is also reflected in its community structure, where the ecosystem is currently English-dominated, with core users primarily located among developer communities in North America and Europe, alongside partial coverage of Southeast Asian miners and global GPU resource providers. Overall, it closely resembles a technology-driven developer community.

5.2 Current State of Ecosystem Operations

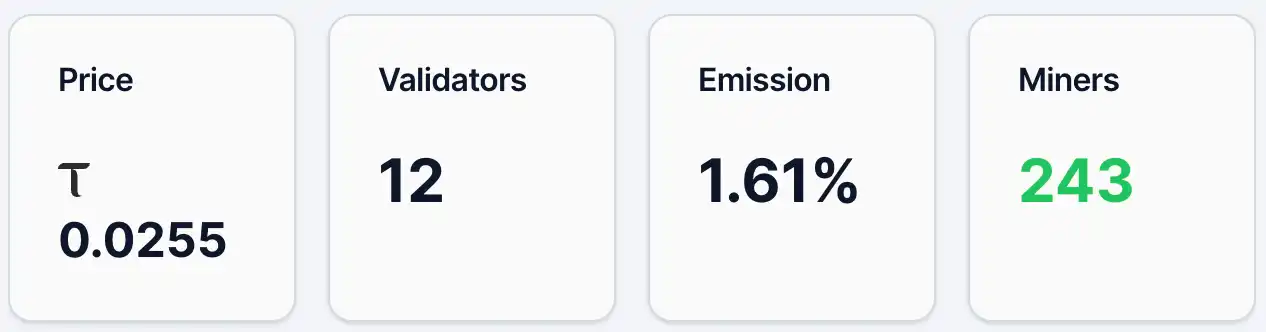

As of May 12, the price of Gradients' alpha token was approximately 0.0255 TAO, with around 4,890 holding addresses, 243 miners, and 12 validators, accounting for 1.61% of emissions. Meanwhile, in its liquidity pool, TAO made up 2.19% and Alpha accounted for 97.81%. Based on price and number of holders, Gradients has established a modest user base and level of attention, but remains in an early stage of distribution. In comparison, Chutes, a leading project in the TAO ecosystem, had an alpha token price of 0.0877 TAO and 13,409 holding addresses on the same day.

Figure 1. Gradient data.

Source:https://bittensormarketcap.com/subnets/56

Next is the Emission incentive mechanism. In the Bittensor ecosystem, Emission refers to the real-time allocation weight a subnet receives from the network’s newly generated rewards. The Bittensor network continuously minting new TAO, distributing them to subnets according to their respective weights. Gradients’ current 1.61% share means it receives only a small portion of the total new network incentives. This metric essentially reflects the market’s “vote” for different subnets through capital flows, such as staking. Therefore, a level of 1.61% typically indicates relatively limited market recognition and capital inflow at present—but also suggests potential for future weight increases. From a capital structure perspective (liquidity pools), TAO accounts for only 2.19%, while Alpha makes up 97.81%, indicating that external capital inflows remain constrained and the current supply is largely driven internally by the subnet. Prices are sensitive to new capital; increased TAO inflows could trigger a more pronounced amplification effect.

6. Competitive Landscape and Advantages/Disadvantages

6.1 Industry Positioning: Decentralized Training Infrastructure for AutoML

Gradients operates in the niche of "AI training infrastructure + decentralized AutoML." It aims to liberate model training from centralized platforms and achieve more efficient resource utilization and model optimization through networked mechanisms. In the Web2 ecosystem, this niche is relatively mature, with prominent examples including Google Vertex AI and AWS SageMaker. These platforms provide developers with end-to-end model training and deployment services via cloud computing, but they remain fundamentally centralized architectures. In contrast, Gradients’ differentiation lies not in offering more features, but in a fundamentally different underlying logic: it transforms training from a "platform service" into a "networked collaboration," using competitive mechanisms to select optimal results, making it closer to a market-driven training system.

6.2 Horizontal Comparison: Differences Between Web2 and Web3 AutoML

From a broader perspective, the difference between Web2 and Web3 in the realm of AutoML is essentially a contrast between two distinct paradigms. The Web2 model emphasizes efficiency and stability, delivering controlled and mature service experiences through centralized resources and engineering optimization. In contrast, the Web3 model prioritizes openness and incentive mechanisms, enabling continuous model evolution through multi-party participation and competition. Specifically, Web2 AutoML functions more like “a powerful tool,” where users submit tasks and the system internally searches for optimal solutions; whereas Web3 AutoML, exemplified by Gradients, operates more like “an open marketplace,” where users post requirements and various participants propose solutions, which are then evaluated and selected. This distinction directly results in the former being more stable and controllable, but with limited optimization pathways; the latter offers greater exploratory potential and higher theoretical ceilings, though it still has room to improve in stability and maturity.

6.3 Gradients' Differentiation in Web3

In the current Web3 AI landscape, most projects remain focused on the inference layer or AI agents, while those specializing in “training infrastructure” are relatively scarce. Some projects attempt to combine compute networks or data networks to provide training capabilities, but overall, most still operate at the level of resource scheduling or compute marketplaces. Gradients differentiates itself by going beyond mere compute matching—it extends further up to the “model optimization mechanism” itself, introducing evaluation and competition systems that enable the training process to evolve continuously. This means it not only addresses “where the compute comes from,” but also “how to use that compute more efficiently.” In terms of positioning, Gradients is closer to a “training outcome-oriented” network rather than a mere compute marketplace or tool platform—this is its core distinction from most other Web3 AI projects.

6.4 Core Advantage: Mechanism-Driven Efficiency Gains

Overall, Gradients' advantages are primarily reflected in its mechanism design. First, it lowers the barrier to entry through task abstraction, allowing users to obtain model results without deeply engaging in complex training processes, thereby expanding its potential user base. Second, on the resource level, the introduction of distributed computing power eliminates reliance on a single cloud provider, theoretically enabling a more resilient cost structure through competition. More importantly, its approach to optimization has changed: by enabling parallel exploration among multiple participants combined with a selection mechanism, Gradients offers an alternative to traditional single-path optimization, giving models the opportunity to achieve superior performance in a shorter time. This “competition-driven optimization” model is its core advantage.

6.5 Potential Challenges

The model quality may suffer from stability issues. Decentralized training relies on multiple parties, which can raise the upper limit but may also introduce result variability, creating some uncertainty in controllability compared to centralized systems. Second is the enterprise-level trust issue: for enterprise users, data security and verifiability of the training process are critical, and ensuring data is not misused while maintaining auditable results in a decentralized environment remains a key challenge. Finally, there is dependence on the token economy: Gradients operates heavily on incentive mechanisms; if TAO rewards lose their appeal, participation by miners and overall network activity could decline. Therefore, its long-term sustainability depends, to some extent, on whether the economic model can establish a stable positive feedback loop.

7. Future Outlook: Can Decentralized AutoML Be Realized?

From the current stage, Gradients is still in its early phases, and its long-term viability depends on several key factors. The most critical is whether it can consistently attract genuine training demand, not just participation driven by incentives; secondly, model quality—whether the decentralized approach can reliably produce usable, or even superior, results; and thirdly, whether the economic mechanism can establish a positive feedback loop, maintaining a long-term balance between compute supply and rewards.

Within the broader industry context, AI training is diverging into two paths. One is the Web2 model, dominated by leading tech companies that enhance model performance through centralized resources and engineering capabilities, offering advantages in stability and maturity. The other is the Web3 path, exemplified by Gradients, which leverages open networks and incentive mechanisms to enable broader participation in model optimization, continuously raising the ceiling through competition. The former is about “building stronger systems,” while the latter is more like “constructing a self-evolving network.”

From this perspective, Gradients' exploration represents a new possibility: AI training is no longer just a technical challenge, but a combination of "compute + data + market mechanisms." If this model proves viable, it has the potential to become the entry point for decentralized AI training and play a key infrastructure role within the Bittensor ecosystem. Of course, this direction still requires time to validate, but it has already provided AutoML with an alternative evolutionary path distinct from traditional approaches.

Reference

1. Bittensor Documentation: https://docs.learnbittensor.org

2. Gradients website: https://www.gradients.io/

3. Gradients: https://bittensormarketcap.com/subnets/56

4. Gradients X: https://x.com/gradients_ai

5. Taostats: https://taostats.io/subnets/56/chart