By LetterAI

Google is really desperate.

Just moments ago, it was reported that Google co-founder Sergey Brin has returned to "founder mode," personally overseeing the effort and assembling an elite "task force" to aggressively strengthen Gemini's AI programming and autonomous-agent capabilities so it can catch up with competitors like Anthropic.

Shortly after, Google announced a major update late at night, introducing two new autonomous research agents built on the Gemini 3.1 Pro model: Deep Research and Deep Research Max.

Beyond improving the model's underlying reasoning, Google has pushed hard to turn autonomous research agents into enterprise and developer-platform products. Through API access, private-data support, and asynchronous background tasks, it aims to gain an edge in the high-value market for "AI research and analysis tools" against rivals such as OpenAI (Hermes) and Perplexity.

These two agents enable developers to combine open web data with enterprise proprietary information through a single API call, natively generate charts and infographics within research reports, and connect to any third-party data source via the Model Context Protocol (MCP).

Both agents are now available in public preview on paid tiers of the Gemini API, through Google's Interactions API, which was first introduced in December 2025.

That's right: for now, these agents are accessible only through the API, so even paying subscribers cannot use them in the Gemini app. Some users, disappointed by updates they can't access, have complained: “For some reason, Google continues to penalize us Gemini App Pro subscribers…”

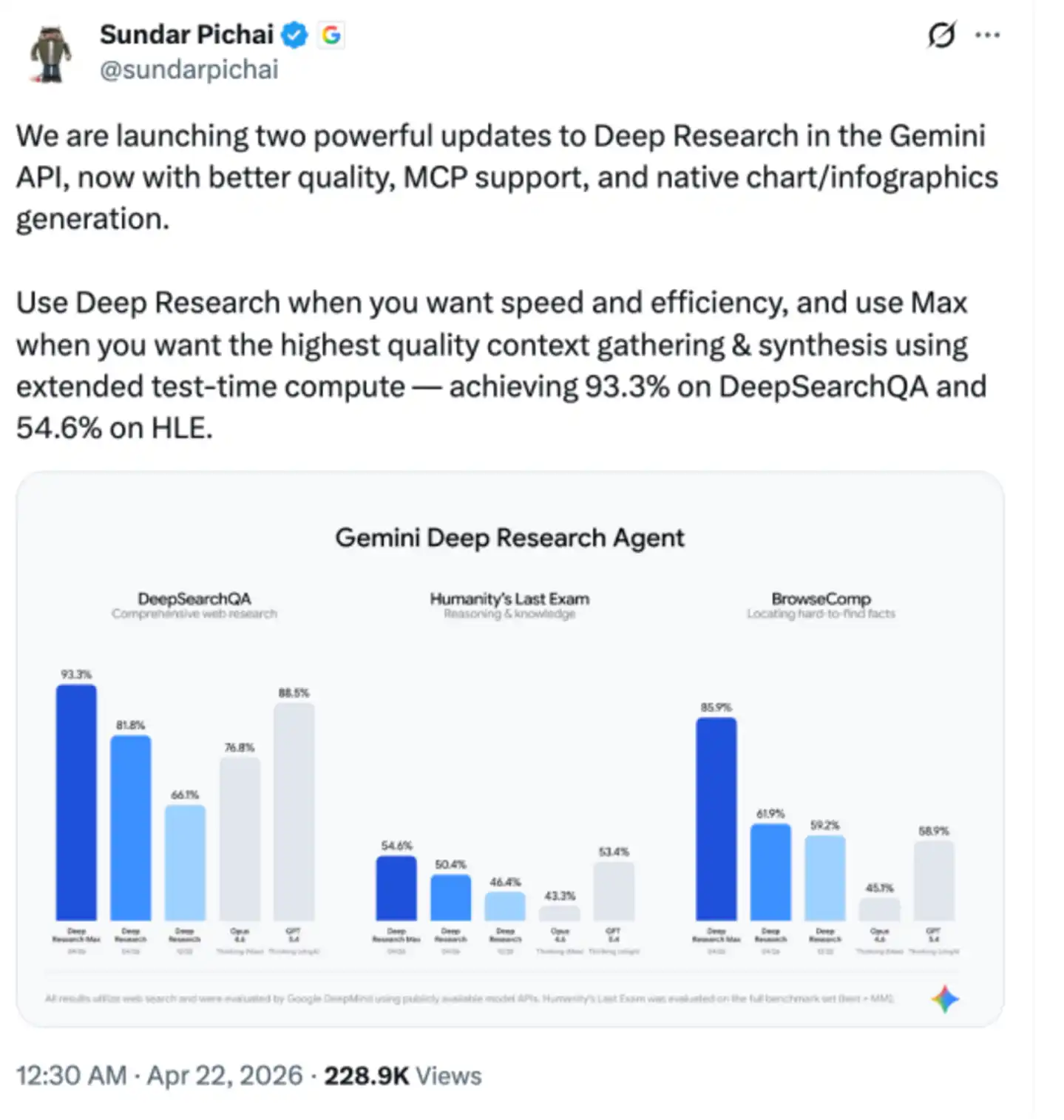

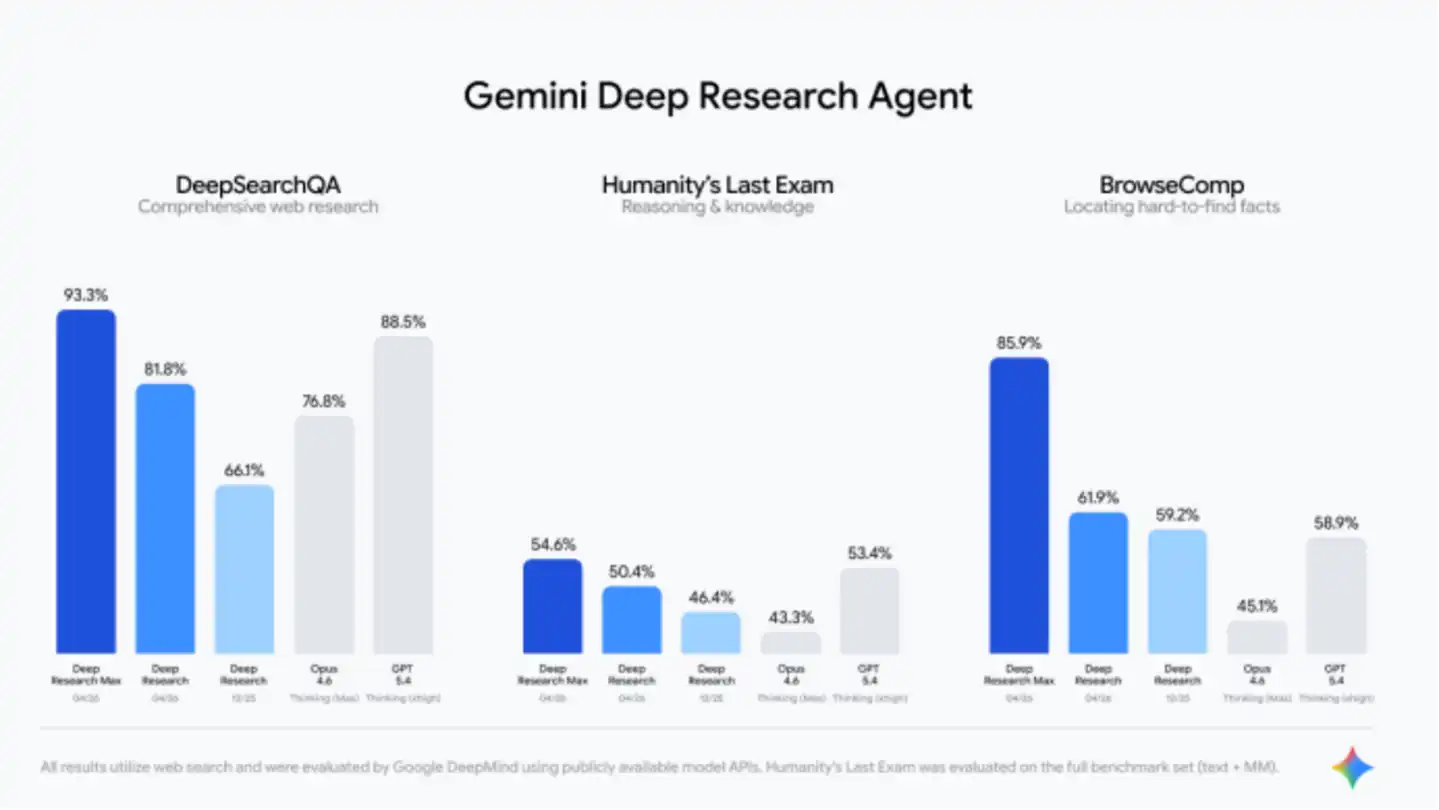

Google CEO Sundar Pichai also promoted the release on X: "Use Deep Research when you need speed and efficiency; use the Max version when you want the highest-quality context gathering and synthesis. It scores 93.3% on DeepSearchQA and 54.6% on HLE by scaling up test-time compute."

Eighteen months ago, Google Deep Research aimed to help graduate students avoid being overwhelmed by countless browser tabs. Today, Google hopes it can replace the foundational research work of junior analysts at investment banks.

The gap between these two goals—and whether this technology can truly bridge that gap—will determine whether autonomous research agents become a transformative product in enterprise software or merely another flashy AI demo that impresses in benchmarks but disappoints at conferences.

Two versions, optimized for different workloads

The Standard version of Deep Research offers lower latency and lower costs, making it ideal for speed-critical scenarios.

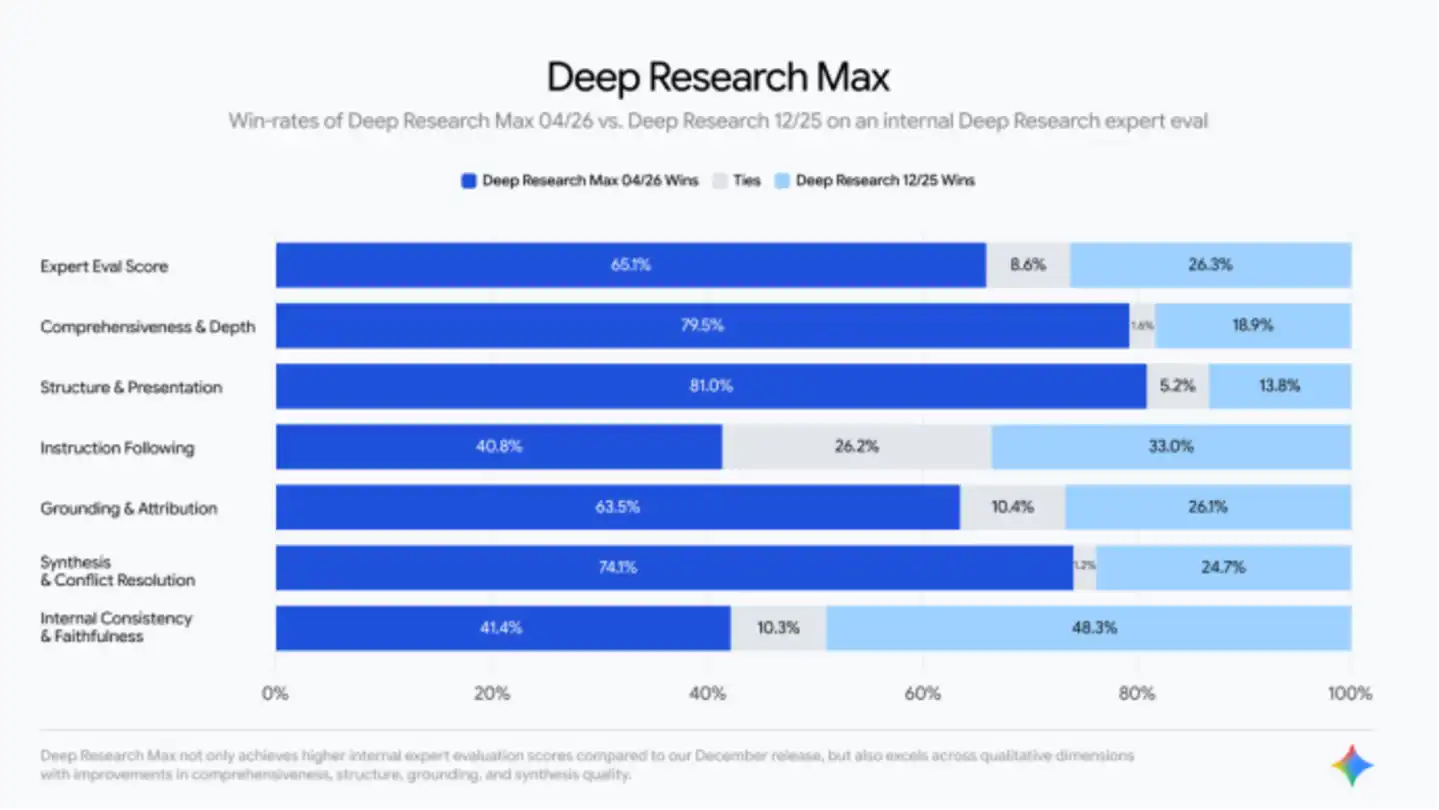

Deep Research Max prioritizes depth over speed. It spends extra test-time compute to reason, search, and iterate more thoroughly before producing a report.

Google notes that asynchronous background workflows are an ideal use case: for example, a cron job scheduled to run overnight that delivers a complete due diligence report to the analyst team by the next morning.
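The overnight pattern is essentially submit-then-poll: start a long-running research task in the background, then check on it until it finishes. The sketch below shows only that control flow. `FakeResearchClient`, `submit`, and `status` are hypothetical stand-ins, not the Interactions API; a real integration would replace them with Google's client library and its actual method names.

```python
import time
from dataclasses import dataclass, field


@dataclass
class FakeResearchClient:
    """Hypothetical stand-in for a background research service.

    Each task reports 'in_progress' until it has been polled
    `polls_until_done` times, then 'completed' with a placeholder report.
    """
    polls_until_done: int = 3
    _polls: dict = field(default_factory=dict)

    def submit(self, prompt: str) -> str:
        task_id = f"task-{len(self._polls) + 1}"
        self._polls[task_id] = 0
        return task_id

    def status(self, task_id: str) -> dict:
        self._polls[task_id] += 1
        if self._polls[task_id] >= self.polls_until_done:
            return {"state": "completed", "report": f"Report for {task_id}"}
        return {"state": "in_progress"}


def run_overnight(client, prompt: str, interval_s: float = 60.0,
                  max_interval_s: float = 900.0, timeout_s: float = 8 * 3600) -> str:
    """Submit a research task, then poll with exponential backoff until it completes."""
    task_id = client.submit(prompt)
    deadline = time.monotonic() + timeout_s
    while True:
        result = client.status(task_id)
        if result["state"] == "completed":
            return result["report"]
        if result["state"] == "failed":
            raise RuntimeError(f"{task_id} failed")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"{task_id} did not finish within {timeout_s} s")
        time.sleep(interval_s)
        # Back off so a multi-hour task is not polled every minute all night.
        interval_s = min(interval_s * 2, max_interval_s)
```

A script wrapping `run_overnight` could then be launched nightly by a crontab entry such as `0 1 * * *` (01:00 every day), with the returned report emailed or saved for the morning.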

In Google's own benchmarks, Deep Research Max showed significant gains on retrieval and reasoning tasks. The agent draws on a broader range of sources than previous versions and captures subtle nuances that earlier models tended to miss.

Google also provided a comparison with competitors.

However, the comparison with OpenAI’s GPT-5.4 and Anthropic’s Opus 4.6 is not entirely fair. GPT-5.4 is strong at autonomous web search, but it has not been specifically optimized for in-depth research. For that, OpenAI offers its own Deep Research agent, which has run on GPT-5.2 rather than GPT-5.4 since its February update. OpenAI’s most powerful search model is actually GPT-5.4 Pro, which Google notably left out of the comparison.

According to OpenAI's data, GPT-5.4 Pro scores up to 89.3% on the agentic search benchmark BrowseComp, while GPT-5.4 scores 82.7%.

According to Anthropic's own report, Opus 4.6 scored 84% on BrowseComp, higher than the figure Google showed for it. Anthropic's score was achieved with reasoning disabled, yet it still exceeds the result Google reported for Opus 4.6 under a high-reasoning setting in its API benchmark tests.

These discrepancies likely stem from differing test methodologies, such as whether models were evaluated through the raw API or inside each lab’s own toolchain. Google’s numbers are not necessarily wrong, but they should be interpreted with care, and their presentation lacks transparency.

MCP Support

The most impactful feature in this release is likely the addition of support for the Model Context Protocol (MCP). This feature transforms Deep Research from a powerful web research tool into something closer to a “general-purpose data analyst.”

MCP is an emerging open standard for connecting AI models to external data sources. It enables Deep Research to securely query private databases, internal document repositories, and specialized third-party data services—all without sensitive information leaving its original environment.
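Under the hood, MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with a `tools/call` request whose result carries a list of content items. The sketch below builds such a request and extracts the text from a response. The tool name `query_trades`, its arguments, and the canned response are hypothetical examples, not output from any real data provider's server.

```python
import json
from itertools import count

# Monotonic request ids, as JSON-RPC requires each request to carry an id.
_ids = count(1)


def make_tool_call(name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    return json.dumps(message)


def extract_text(response_json: str) -> list[str]:
    """Collect the text items from a tools/call result, raising on errors."""
    response = json.loads(response_json)
    if "error" in response:  # protocol-level JSON-RPC error
        raise RuntimeError(response["error"].get("message", "unknown JSON-RPC error"))
    result = response["result"]
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError("tool reported an error")
    return [item["text"] for item in result.get("content", [])
            if item.get("type") == "text"]
```

Because the agent only sees what the server chooses to return, the raw database stays behind the server; that is the mechanism behind the "data never leaves its environment" claim, though how much leaks still depends on what the tool returns.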

In practice, this means a hedge fund could point Deep Research at both its internal trade-flow database and its financial data terminals, then have the agent combine them with publicly available web information to produce comprehensive insights.

Google says it is actively working with companies such as FactSet, S&P, and PitchBook on MCP server design, a clear sign that it wants deep integration with the data providers that Wall Street and the broader financial services industry rely on every day.

According to a blog post by Google DeepMind product managers Lukas Haas and Srinivas Tadepalli, the goal is “to enable shared customers to integrate financial data products into workflows powered by Deep Research, achieving a leap in productivity by rapidly gathering context from its vast universe of data.”

This feature directly addresses one of the most persistent pain points in enterprise AI adoption: the significant gap between the information available on the open internet and the information actually needed for organizational decision-making. Previously, bridging this gap required extensive custom engineering efforts.

Combined with Deep Research's autonomous browsing and reasoning, MCP support reduces most of this complexity to a single configuration. Developers can now let Deep Research use Google Search, remote MCP servers, URL context, code execution, and file search at the same time, or disable internet access entirely and search only their own data.
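One way to picture that "single configuration" is a tool set plus a rule that private-only runs must exclude web tools. The sketch below is a hypothetical model of such a config; the field names and tool identifiers are illustrative assumptions, not the Gemini API's actual request schema.

```python
from dataclasses import dataclass, field

# Hypothetical tool identifiers mirroring the capabilities listed above.
WEB_TOOLS = {"google_search", "url_context"}
ALL_TOOLS = WEB_TOOLS | {"mcp_server", "code_execution", "file_search"}
DEFAULT_TOOLS = WEB_TOOLS | {"code_execution", "file_search"}


@dataclass
class ResearchConfig:
    """Hypothetical agent configuration: which tools the agent may call."""
    tools: set[str] = field(default_factory=lambda: set(DEFAULT_TOOLS))
    mcp_servers: list[str] = field(default_factory=list)
    allow_web: bool = True

    def validate(self) -> None:
        unknown = self.tools - ALL_TOOLS
        if unknown:
            raise ValueError(f"unknown tools: {sorted(unknown)}")
        if not self.allow_web and self.tools & WEB_TOOLS:
            raise ValueError("web tools enabled but allow_web is False")
        if "mcp_server" in self.tools and not self.mcp_servers:
            raise ValueError("mcp_server tool requires at least one server URL")


def private_only(mcp_servers: list[str]) -> ResearchConfig:
    """Build a config with internet access disabled: only MCP servers and files."""
    config = ResearchConfig(
        tools={"mcp_server", "file_search"},
        mcp_servers=mcp_servers,
        allow_web=False,
    )
    config.validate()
    return config
```

Validating up front means a misconfigured "offline" run fails before any query is sent, rather than silently reaching the open web.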

The system also supports multimodal inputs, including PDFs, CSV files, images, audio, and video, as grounding context.

Native charts

The second major feature is native chart and infographic generation.

The previous Deep Research version could only generate plain text reports. If users needed visualizations, they had to export the data and create charts themselves. This limitation significantly undermined its positioning as "end-to-end automation."

Now, the new agents can natively embed high-quality charts and infographics directly in their reports, dynamically rendering complex datasets as HTML or with Google's Nano Banana image model, so that visuals become an integral part of the analytical narrative.
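To make "rendering a dataset in HTML" concrete, here is a minimal sketch that turns a few labeled values into an inline SVG bar chart inside an HTML report section. It only illustrates the idea of a chart living in the report markup; it is not how Deep Research generates its charts, and `svg_bar_chart` and `report_section` are hypothetical helpers.

```python
from html import escape


def svg_bar_chart(data: dict[str, float], width: int = 400, bar_height: int = 24,
                  gap: int = 8, label_width: int = 120) -> str:
    """Render label -> value pairs as horizontal bars in an inline SVG element."""
    if not data:
        raise ValueError("data must not be empty")
    max_value = max(data.values())
    # The largest value spans the full plot area to the right of the labels.
    scale = (width - label_width) / max_value if max_value > 0 else 0.0
    height = len(data) * (bar_height + gap)
    rows = []
    for i, (label, value) in enumerate(data.items()):
        y = i * (bar_height + gap)
        bar_w = max(value, 0) * scale  # negative values are drawn as empty bars
        rows.append(
            f'<text x="0" y="{y + bar_height * 0.7:.1f}">{escape(label)}</text>'
            f'<rect x="{label_width}" y="{y}" width="{bar_w:.1f}" height="{bar_height}" />'
        )
    return (f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">'
            + "".join(rows) + "</svg>")


def report_section(title: str, body: str, data: dict[str, float]) -> str:
    """Wrap narrative text and its chart into one HTML section of a report."""
    return (f"<section><h2>{escape(title)}</h2><p>{escape(body)}</p>"
            f"<figure>{svg_bar_chart(data)}</figure></section>")
```

Keeping the chart as markup rather than a separate image file is what lets it travel with the text as one deliverable.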

For enterprise users—particularly those in finance and consulting who need to produce deliverables for stakeholders—this feature transforms Deep Research from a tool that accelerates the research phase into one capable of generating outputs that closely resemble final analytical products.

In addition, the new system pairs charting with enhanced collaborative planning, which lets users review, steer, and refine the agent’s research plan before execution, and with real-time streaming of intermediate reasoning steps. Together, these give developers fine-grained control over the scope of an investigation while preserving the transparency that regulated industries require.
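The review-then-stream workflow can be sketched as two phases: the agent proposes a plan, a human edits it, and execution then yields intermediate steps as they happen. Everything here (the fixed `propose_plan` heuristic, the step strings, the `review` callback) is hypothetical and shows only the control flow, not Google's implementation.

```python
from typing import Callable, Iterator


def propose_plan(question: str) -> list[str]:
    """Hypothetical planner: a fixed three-step research plan."""
    return [
        f"Search public sources for: {question}",
        f"Query internal data for: {question}",
        "Synthesize findings into a report",
    ]


def execute(plan: list[str]) -> Iterator[str]:
    """Run an approved plan, streaming each intermediate step before the final result."""
    for number, step in enumerate(plan, start=1):
        yield f"[step {number}/{len(plan)}] {step}"
    yield "[done] report ready"


def run_with_review(question: str,
                    review: Callable[[list[str]], list[str]]) -> Iterator[str]:
    """Let a human revise the plan, then stream execution of the revised plan."""
    plan = review(propose_plan(question))
    if not plan:
        raise ValueError("reviewer rejected every step")
    yield from execute(plan)
```

For example, a reviewer who wants a public-sources-only run could pass `lambda plan: [s for s in plan if not s.startswith("Query internal")]`, and the stream would then report two steps instead of three. Because `run_with_review` is a generator, the plan check runs when iteration starts, not when the function is called.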

Deep Research is becoming part of the infrastructure that Google provides to enterprises.

Google's official blog post explicitly states that when developers use the Deep Research agent for building, they are accessing the same autonomous research infrastructure that powers research capabilities across Google's popular products, such as the Gemini App, NotebookLM, Google Search, and Google Finance. This indicates that the agents provided via the API are not simplified versions of Google’s internal systems, but rather the same system delivered at platform scale.

This evolution has progressed extremely rapidly.

Google first launched Deep Research in the Gemini app in December 2024 as a consumer-facing feature, powered by Gemini 1.5 Pro. Google described it as a personal AI research assistant capable of synthesizing web information in minutes to help users save hours of work.

In March 2025, Google upgraded Deep Research using Gemini 2.0 Flash Thinking Experimental and opened it for public testing. It was later upgraded to Gemini 2.5 Pro Experimental, with Google reporting that evaluators preferred its reports over competitors’ by a ratio of 2 to 1.

December 2025 marked a pivotal milestone: Google launched the Interactions API, which for the first time offered programmatic access to Deep Research, then powered by Gemini 3 Pro, and released the open-source DeepSearchQA benchmark.

The model behind this latest improvement is Gemini 3.1 Pro, released on February 19, 2026. It delivers a significant leap in core reasoning: on ARC-AGI-2, a benchmark that tests a model’s ability to solve novel logic patterns, Gemini 3.1 Pro scores 77.1%, more than double Gemini 3 Pro’s score.