This crazy month, with eleven large models, has been like a noisy fireworks show.

Article author and source: 0x9999in1, ME News

TL;DR

- Thirty days of extreme competition: From March 26 to April 24, 11 major large models were launched globally, averaging one every 2.7 days. The market is facing severe "parameter fatigue."

- The “weight-loss surgery” for the parameter glutton: V4-Pro has a total of 1.6T parameters, but only 49B are activated. Through the CSA+HCA architecture redesign, FLOPs are reduced by 73% under 1M context, and KV cache drops to an astonishing 10%.

- The generational gap in alchemy: Pioneering a post-training paradigm of "independent reinforcement learning first, followed by online distillation and merging," V4-Pro-Max approaches the ceiling of proprietary models in reasoning and agent tasks.

- Real votes with real money: GPT-5.5 only drove NVIDIA’s single-day gain of 4.2% before peaking, while V4, fully open-sourced by MIT, has completely ignited sustained surges in local compute chains across Hong Kong and mainland China.

- Deep strategic logic: Closed-source models sell "taxes"; open-source large models sell "iron." The emergence of V4 has finally balanced the global enterprise-level private deployment compute ledger.

The whirlwind of April among the gods, amid market fatigue

Crazy. Everyone's crazy.

If you're an observer closely watching the AI sector, the past thirty days have likely left you feeling physically unsettled. Between March 26 and April 24, 2026, at least eleven significantly influential large models were launched into the market in less than a month.

The list reads like a menu: Anthropic Opus 4.6, Google Gemini 3.1 Pro, OpenAI GPT-5.5, Mistral Large 3, Meta Llama 4, Moonshot Kimi K2.6, Alibaba Qwen3-Next, ByteDance Doubao 2.5 Pro, Tencent Hunyuan 3.0, Kimi K2.6 Plus.

Also, on the morning of April 23, DeepSeek V4 quietly launched like a depth charge.

On average, a new model emerges every 2.7 days—a pace even fund managers can’t keep up with. Just as investors finish hearing about Company A’s “parameter breakthrough,” Company B’s “benchmark domination” is already on their desk. The market has essentially become numb. What’s called “benchmark ranking” has, in today’s hyper-competitive environment, increasingly become a self-referential numbers game.

But money is smart. Or rather, the candlestick chart never lies.

Review the 30-day K-lines of AI assets in the U.S., China, and Hong Kong, and you'll confront a brutally cold reality: in this "war of the gods," only two nodes have left lasting marks on the price chart.

First, on April 8, OpenAI across the ocean released GPT-5.5. This undisputed king directly propelled NVIDIA's single-day surge of 4.2%. Then what? That was it—the rally peaked in one day, and the good news was fully priced in. Everyone realized that even the greatest closed-source giant finds it much harder now than two years ago to easily move the massive mountain of global capital.

The second node is April 23 to 24. The preview version of DeepSeek V4 was released. There was no flashy launch event, no stunning promotional video. The weights were directly uploaded to Hugging Face and ModelScope under the MIT license.

Result? It propelled the China-Hong Kong computing power chain into a series of gap-up rallies.

Why? How did an open-source model accomplish what all these closed-source giants couldn't?

To answer this question, we need to become storytellers—set aside the dry press releases and pop open the hood of DeepSeek V4 to see what kind of monster is inside.

Dissecting V4: Moving Beyond the Blind Faith in Parameter Brutality

Large models. Very expensive. Everyone knows this.

Over the past year, large model manufacturers have fallen into a “insufficient firepower anxiety.” You build a trillion-parameter model, so I’ll build two trillion. Everyone believed that as long as they threw enough power at the problem, intelligence would naturally emerge and solve everything. But this has led to an extremely alarming cost in computational resources—even the richest landowner can’t afford to burn through this much grain.

DeepSeek V4 has unveiled two MoE (Mixture of Experts) models: V4-Pro and V4-Flash. Let’s first look at several key metrics.

V4-Pro: Total parameters of 1.6T (1.6 trillion), but only 49B (49 billion) activated parameters per token.

V4-Flash: Total parameters 284B (284 billion), activated parameters only 13B (13 billion).

Understood? This is an extremely restrained form of “using a small force to redirect a great one.” The essence of the MoE architecture is not to sound the alarm fully every time. For tasks that require killing a chicken, summon a few chicken-killing experts; for tasks that require slaying a dragon, bring out the dragon-slaying blade. The 1.6 trillion parameter base ensures it is “worldly and knowledgeable”; the 490 billion activated parameters ensure it is “quick to respond and agile.”

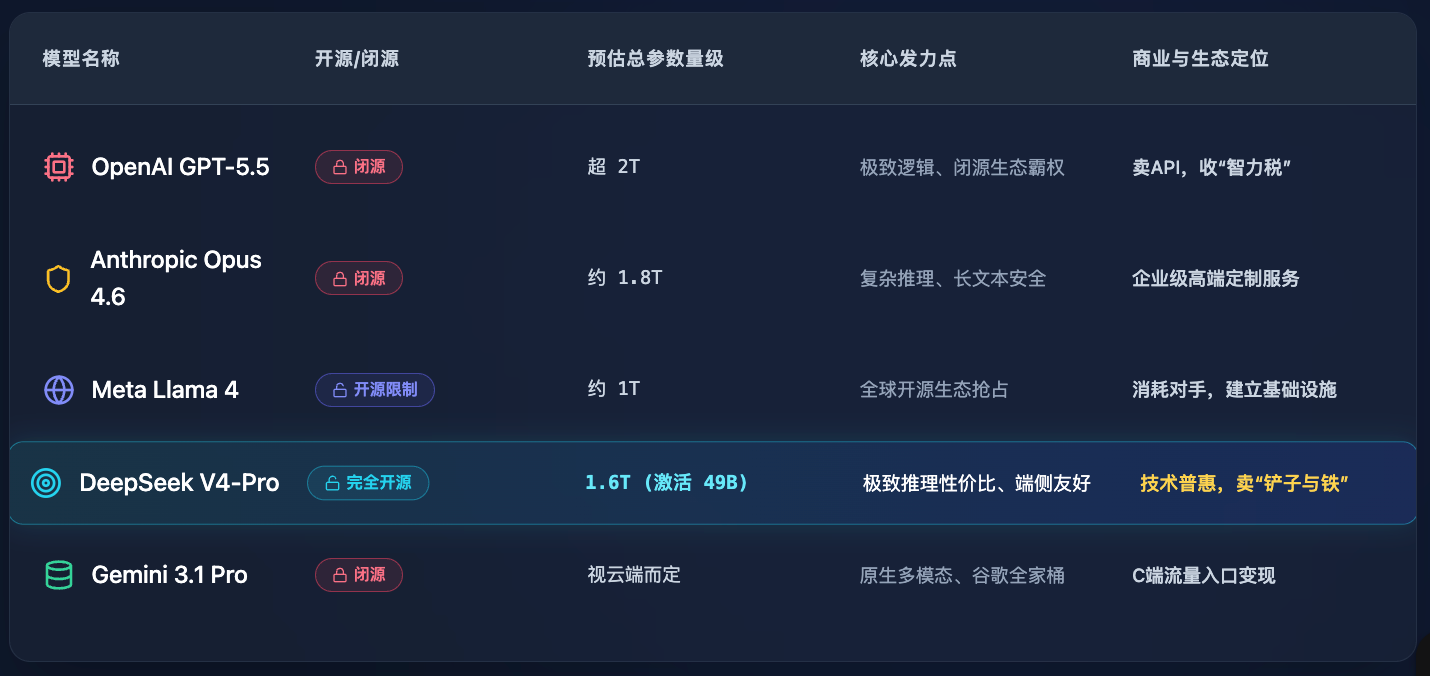

To better understand this gap, let’s create a table comparing the leading approaches currently in the market (data based on public market estimates and calculations):

Looking at the table, you’ll see that V4-Pro doesn’t blindly chase surpassing GPT-5.5 in total parameters—it focuses all its efforts on “how to make this giant eat less and run faster.”

But that’s not enough. What truly leaves seasoned insiders breathless is its ruthless elimination of the “VRAM assassins.”

The End of the VRAM Assassin: Three Scalpels of Architecture

What is a "VRAM assassin"? It's "long context."

Everyone is now boasting about supporting 1M (one million) tokens of context. It sounds great—you can dump an entire copy of "Romance of the Three Kingdoms" in, and it reads through in seconds. But what’s the cost? Long texts generate massive KV caches during inference—the VRAM used to store historical context. It’s like having to copy the content of every previous page onto a giant blackboard just to read the next one. By the time you reach the one-millionth word, you’d need so many blackboards that not even all the data centers in Zhongguancun could hold them.

Memory is more expensive than computing power. This is an unwritten rule in the AI industry.

How does DeepSeek V4 solve this? They performed “surgery” directly on the underlying attention mechanism. This is their first and most aggressive move in the architecture upgrade: the Hybrid Attention Mechanism (CSA + HCA).

CSA (Compressed Sparse Attention) plus HCA (Heavy Compressed Attention) may sound like Martian, but in plain terms: it no longer memorizes everything—it learns to highlight key points and take ultra-concise notes.

The effect is extremely dramatic: under a 1M context, V4-Pro requires only 27% of the FLOPs (floating-point operations) per token compared to the previous generation, V3.2! Even more astonishing, the KV cache is just 10% of V3.2's!

What does this mean? It’s like a million-word text task that previously required ten top-tier servers to handle is now easily accomplished by just one server. Computing costs have been slashed by 90%. This is a game-changing optimization.

There are two more knives.

The second innovation is called "Manifold-Constrained Hyperconnection (mHC)." In previous large models, information transfer between layers relied on "traditional residual connections," akin to using old, rusty pipes to transport water—under high pressure, leaks are inevitable. Faced with massive pre-training data of 32T tokens, these old pipes simply couldn't hold up. mHC is like upgrading to a fiber-optic network, significantly enhancing the stability of signal propagation across layers—no packet loss, no drift.

The third blade: change the engine oil. Ditch the traditional approach and switch to the Muon optimizer. This tool accelerates convergence. While others may take forty-nine days to refine a single batch of elixir, it could be ready in just twenty. Time is money; compute time is dollars.

These three cuts completely cured the large model's "affluenza."

The Secret in the Alchemical Furnace: From Isolation to Unification of All Methods

Everyone in the industry knows that pre-training merely turns a "illiterate" person into a "knowledgeable but speechless fool." What truly transforms it into a master is post-training.

DeepSeek V4 employed an extremely rigorous two-phase strategy in post-training.

In the past, training MoE was like a group of teachers all trying to teach one student at once, leading to a lot of conflict. How does V4 handle it?

Phase One: “Fighting Alone.” It uses SFT (Supervised Fine-Tuning) and GRPO (Group Relative Policy Optimization) reinforcement learning to separate and individually train each “expert network” within the model. The coding expert practices coding every day, while the math expert tackles math problems daily—completely independent and unaffected by each other. This approach maximizes individual expertise.

Phase Two: “All Methods Return to One.” Using online distillation technology, unify these expert models—each honed to mastery—into a single, seamless model. No internal friction. No lag.

Let’s take a look at the two “big moves” they forced out.

First is the V4-Pro-Max mode. This is the highest reasoning intensity mode, akin to unlocking a genetic lock. According to their claims (which were quickly validated by the community), on coding benchmarks, V4-Pro has already reached top-tier levels, and the gap with leading proprietary models (such as GPT-5.5 and Opus 4.6) has significantly narrowed in complex reasoning and Agent tasks.

Second is V4-Flash-Max. This one is even more interesting. It’s a tiny model with only 284B parameters, yet when given sufficient reasoning budget, its inference performance can approach that of Pro. What does this mean? It means that “algorithmic quality” is beginning to outperform “parameter scale.” As long as you give it enough time to think, even a small brain can solve big problems. Of course, it’s still limited by its parameter size when it comes to pure knowledge retention and extremely complex multi-step agent tasks (after all, its capacity is limited), but for the vast majority of enterprise-level everyday applications, its performance is more than sufficient.

Finally, the weight storage brilliantly employs FP4+FP8 mixed-precision storage, preserving accuracy while saving VRAM—every detail exudes the meticulous charm of a STEM student.

To more clearly compare the engineering efficiency gains from this post-training process, here’s a hard metrics comparison table:

The instinct of capital: Why did V4 ignite the China-Hong Kong computing power chain?

So far, we’ve broken down the technical aspects. But we haven’t yet answered the original burning question:

Why didn't GPT-5.5 sustain the boom in the computing power sector, while DeepSeek V4 did?

This requires us to step beyond the code and view this博弈 from the perspective of capital and business.

GPT-5.5 is incredibly powerful, overwhelmingly so. But it is proprietary. What does proprietary mean? It means OpenAI is a massive "black hole." If you want to use its capabilities, you must purchase its API. This is a "taxation" model—profits flow to Silicon Valley, and computing demand is concentrated in Microsoft’s cloud data centers. For global hardware manufacturers, local computing centers, and server providers in various countries, there’s little to gain beyond looking on in awe. No matter how powerful GPT-5.5 is, it’s someone else’s celebration. NVIDIA’s stock rises because everyone assumes OpenAI will buy even more chips.

But DeepSeek V4 is different.

It is open source and licensed under the extremely permissive MIT license. The MIT license is the most generous gift in the open-source community, meaning businesses can use, modify, and sell it for free without worrying about legal risks.

Even more critically, we previously spent considerable effort demonstrating that V4 has slashed the model’s inference cost and memory usage to ankle level.

Combining these two points, you arrive at a conclusion that has Wall Street buzzing: the tipping point for private deployment has truly arrived.

In the past, when companies wanted to deploy a large model over 1T, they would glance at the hardware price list, quietly close it, and turn to buy APIs instead. Now, V4 tells everyone: you only need very few machines to run a super brain locally that is nearly indistinguishable from GPT-4 and even challenges GPT-5.5 levels. Your data doesn’t need to leave your province or country—it’s absolutely secure.

Since everyone can now run it locally, what happens next?

Buy machines! Buy servers! Buy optical modules! Build an intelligent computing center!

Closed-source giants charge an intellectual tax, while open-source giants are essentially driving sales for hardware manufacturers across the industry. DeepSeek V4 is the one who dropped the spark. The more usable and open-source it becomes, the more explosive the demand for localized computing power in regions like mainland China, Hong Kong, and Taiwan. Companies involved in server assembly, liquid cooling, and data center operations are finally seeing real money in large-scale deployment.

This is why the China-Hong Kong computing power chain surged continuously the moment V4 was released on April 23. Capital is not paying for sentiment—it’s positioning itself in advance for the impending wave of private deployments across thousands of industries.

This is the fundamental business strategy.

Conclusion: The Receding Tide and the Rocks

This crazy month, with eleven large models, has been like a noisy fireworks show.

Titans swing their ocean of capital on the parameter arena, trying to knock down opponents with the heavy punch of computational power. But after the noise subsides, it’s often not the loudest who remain to reshape the industry’s landscape.

The arrival of DeepSeek V4 is like a calm assassin. It doesn’t compete with you on who spends more money; instead, it strikes at the most vulnerable point: eliminating unnecessary VRAM, lowering deployment barriers, and turning a high-end game into a level playing field for everyone.

In this AI battle known as "Ragnarok," the era of blindly stacking parameters is rapidly coming to an end. The future battlefield will belong to those who can find the perfect balance between "extreme performance" and "engineering efficiency."

Winds of hype always recede; only after the tide goes out do you see who was swimming naked and who was an unshakable reef.

V4 has distributed the weapons to everyone. Now, it’s up to each faction to establish their foothold on this new land.

Once you see through this layer, you might listen to the hype of “earth-shattering launches” and “redefining the game” with more calmness and less anxiety.

After all, no matter how dazzling the magic, it ultimately comes down to the ledger, balancing out those few coins.

Source:

- DeepSeek V4 Series Preview Official Release, DeepSeek Team, GitHub/ModelScope/HuggingFace. (2026).

- The April AI Rally: Analyzing the 30-Day Large Model Cycle, ME News Market Observer. (2026).

- Scaling Laws and the Post-Training Paradigm Shift, Journal of Artificial Intelligence Economics. (2026).

- Global Compute Supply Chain Market Pulse Report (April 2026), Pan-Asia Financial Data Analytics. (2026).