1,900 files, 512,000 lines of code, a misconfigured .map file, and all the things no one noticed.

Author: Jia Yan Kea

Source: Silicon Valley Alan Walker

7:02 AM, Mission District, by the window at Zombie Coffee

It’s been a weird morning. Chaofan Shou’s X post has already reached 3.1 million views, and every group chat is buzzing.

After his second coffee, Silicon Valley Alan Walker downloaded all 1,900 files and began reading them carefully.

After reading, Alan spoke with a few people—Kai (ex-Google, currently founding an infrastructure startup), Marcus (private equity background, recently looking at AI deals), and Sarah (former engineer at Anthropic, now independent). Here’s what came out of today’s conversations.

Alan's view is that most of the analysis so far doesn't go deep enough. Below are my notes from those conversations, organized by theme.

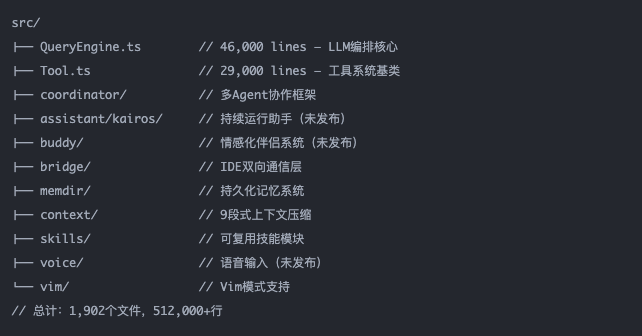

01 Secret One: Models are just raw material; the harness is the moat, and that moat is 46,000 lines long.

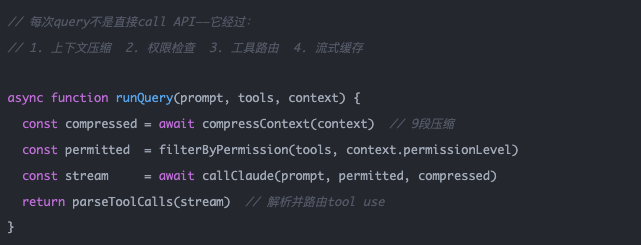

The first thing most people said upon seeing this leak was: "Wow, Claude Code is really complex." That’s wrong—it should be reversed: Claude Code works well not because it calls a smarter Claude, but because it has a 46,000-line query engine built outside the model.

Alan: Kai, have you seen QueryEngine.ts? Just this one file is 46K lines. This isn't an "AI wrapper"—it's an operating system.

Kai: I checked it out. More interestingly, they used Bun instead of Node—likely due to startup time considerations. This shows they seriously tested cold start performance. This isn’t something hastily put together.

From first principles: the model's capability is the upper limit; the harness determines how much of that limit you can actually use.

A raw API call can utilize 20% of the model's capabilities.

Claude Code’s harness (context management, tool routing, permission layers) lets you access nearly 80%. That gap of roughly 60 percentage points is earned through 46,000 lines of code.

The next ChatGPT killer may not come from a team that builds a better model, but from a team that builds a better harness.

02 Secret Two: The True Intent of the Permission System — Not to Prevent AI from Acting, but to Empower AI to Act

Everyone who sees the four-tier permission system immediately thinks, "Security measures." This understanding is completely backwards.

Alan: Sarah, you worked at Anthropic—was this permission system really designed with "security" as its original intent?

Sarah: Not exactly. More accurately, it’s to make the model feel confident enough to execute. Without clear boundaries, the agent hesitates at every step, wondering, “Can I do this?” With boundaries, it acts directly within them and stops to ask when outside.

Pay attention to that detail:

Dangerous commands are not blocked by a list of rules, but rather through semantic analysis by a second AI.

This means Anthropic understands that the rule list will have gaps, so it uses AI to review AI—this is a defense system, not a set of rules.
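The "act inside the boundary, ask outside it, let a second reviewer catch what rules miss" idea can be sketched roughly as follows. Everything here is hypothetical: the `gate` function, the `Reviewer` type, the two thresholds, and the toy regex reviewer are illustrative stand-ins, not names or logic from the leaked source. A real reviewer would be an asynchronous call to a second model; it is synchronous here only so the sketch runs on its own.

```typescript
type Decision = "allow" | "ask" | "deny";

// Stands in for the second model: returns a risk score in [0, 1].
// Hypothetical interface; a real reviewer would be an async model call.
type Reviewer = (command: string) => number;

function gate(
  command: string,
  allowedPrefixes: string[], // the explicit boundary the agent may act within
  reviewer: Reviewer,
  askThreshold = 0.3, // assumed values, chosen only for illustration
  denyThreshold = 0.8,
): Decision {
  const risk = reviewer(command);
  // The semantic review can veto a command even if a prefix rule would allow it.
  if (risk >= denyThreshold) return "deny";
  const inBounds = allowedPrefixes.some((p) => command.indexOf(p) === 0);
  // Inside the boundary and low risk: act without asking.
  if (inBounds && risk < askThreshold) return "allow";
  // Anything else: stop and ask the user.
  return "ask";
}

// Toy reviewer so the sketch runs without a model. It is NOT a real safety check.
const toyReviewer: Reviewer = (command) =>
  /rm\s+-rf|curl[^|]*\|\s*sh/.test(command) ? 0.95 : 0.1;
```

With this toy reviewer, `gate("git status", ["git "], toyReviewer)` is allowed directly, `gate("npm publish", ["git "], toyReviewer)` falls outside the boundary and triggers a question, and `gate("rm -rf /", ["rm "], toyReviewer)` is denied even though a prefix rule would have let it through.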

The same holds in any organization: clear authorization boundaries don't discourage action; they let people make quick decisions within those boundaries.

Vague authorization paralyzes people.

03 Secret Three: The Memory System Remembers Preferences, Not Code, and That Is a Deliberate Simplification

Alan: Have you checked the memdir/ directory? The amount of data stored in its memory system is much less than I expected.

Kai: That's right—it doesn’t store code or historical conversations, only user preferences and project constraints. At first glance, it seemed like cutting corners, but upon further thought, I realized it’s the right approach.

The context window is a limited resource, approximately 200K tokens.

A context overloaded with legacy code is like an engineer whose mind is filled with details from the last project—there’s no room left for today’s task.

Anthropic's approach is to store only "how to work with me" in long-term memory, and retrieve specific content anew each time.
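To make the "store how to work with me, re-read everything else" idea concrete, here is a minimal sketch. The `LongTermMemory` shape, the `buildContext` function, and the four-characters-per-token estimate are all assumptions made for illustration; the article does not describe the actual memdir/ format.

```typescript
// Hypothetical shape: only stable, small facts are persisted.
interface LongTermMemory {
  preferences: string[]; // "how to work with me"
  projectConstraints: string[]; // stable rules for this project
}

interface FreshFile {
  path: string;
  content: string; // re-read for this task, never written back to memory
}

// Rough heuristic of ~4 characters per token; an assumption, not a real tokenizer.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

function buildContext(
  memory: LongTermMemory,
  task: string,
  freshFiles: FreshFile[],
  budgetTokens: number,
): string {
  const parts: string[] = [
    "Preferences:\n" + memory.preferences.join("\n"),
    "Constraints:\n" + memory.projectConstraints.join("\n"),
    "Task:\n" + task,
  ];
  let used = 0;
  for (const p of parts) used += estimateTokens(p);

  for (const file of freshFiles) {
    const block = "File " + file.path + ":\n" + file.content;
    const cost = estimateTokens(block);
    if (used + cost > budgetTokens) continue; // skip files that do not fit the window
    parts.push(block);
    used += cost;
  }
  return parts.join("\n\n");
}
```

The design choice this illustrates: memory stays tiny and always fits, while file contents compete for whatever window budget is left on each task.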

The next battleground for AI products isn't who has more memory, but who has more precise memory—remembering the right things and forgetting what shouldn't be remembered.

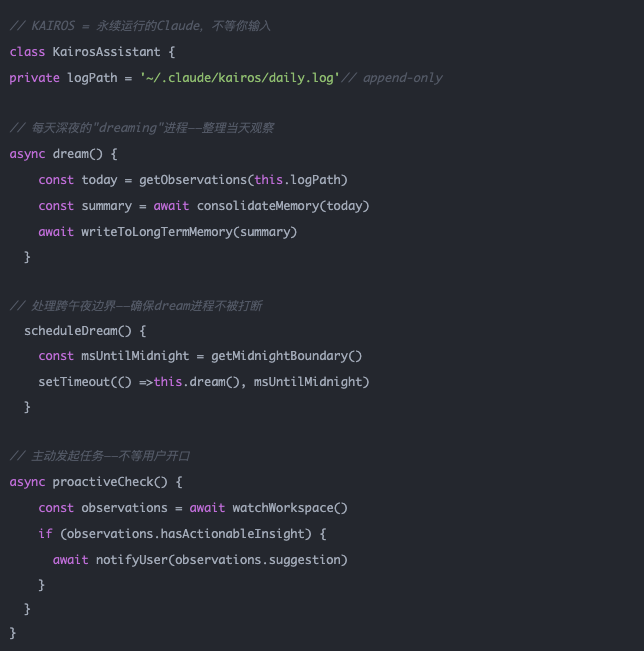

04 Secret Four: KAIROS—Anthropic isn’t really selling a tool; it’s selling a digital employee that never clocks out.

Alan: Marcus, as an investor, what are your thoughts on the KAIROS feature?

Marcus: What I see is a completely different business model. You're not paying for a SaaS subscription—you're paying for the salary of a contractor who works around the clock. This changes the entire pricing logic.

One detail matters here: the code handles the midnight boundary. Someone actually asked, "What if the dreaming process starts at 11:58 PM and crosses midnight?"

This indicates that KAIROS is not a proof of concept, but a fully designed feature ready for launch.
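For readers wondering what "handling the midnight boundary" can mean in practice, one common approach is to split a run into per-day slices so that time is attributed to the correct calendar day. The sketch below is an assumption about the general technique, not KAIROS's actual logic; `splitByUtcDay` and `DaySlice` are hypothetical names, and it uses UTC days to stay deterministic, whereas a real scheduler would likely use the user's local time zone.

```typescript
interface DaySlice {
  day: string; // calendar day as YYYY-MM-DD (UTC)
  ms: number; // milliseconds of the run that fall on that day
}

function splitByUtcDay(start: Date, end: Date): DaySlice[] {
  const slices: DaySlice[] = [];
  let cursor = start.getTime();
  const stop = end.getTime();
  while (cursor < stop) {
    const d = new Date(cursor);
    // First instant of the following UTC day; Date.UTC rolls over months and years.
    const nextMidnight = Date.UTC(
      d.getUTCFullYear(),
      d.getUTCMonth(),
      d.getUTCDate() + 1,
    );
    const sliceEnd = Math.min(nextMidnight, stop);
    slices.push({ day: d.toISOString().slice(0, 10), ms: sliceEnd - cursor });
    cursor = sliceEnd;
  }
  return slices;
}
```

A run from 23:58 to 00:05 the next day comes back as two slices, two minutes on the first day and five on the second, instead of seven minutes booked to whichever day the job happened to start or finish on.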

The SaaS business model will evolve into "AI staff augmentation." You hire a digital employee that never takes time off and has a marginal cost approaching zero.

This is not a tool price; this is a human resources price.

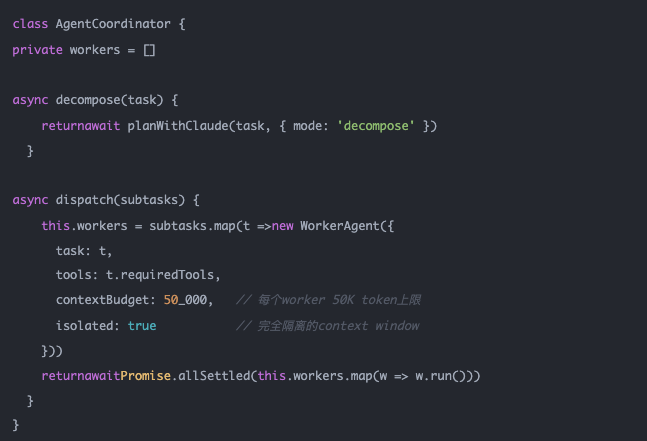

05 Secret Five: Multi-Agent Framework—AI Companies Are Replicating the Organizational Charts of Human Companies

Kai: Have you seen the directory structure? coordinator/, tasks/, skills/, services/—it’s exactly like a startup’s org chart.

Alan: Yes. In Coordinator Mode, one Claude can spawn multiple worker agents—that’s a model where a manager oversees a group of ICs.

The limit of a single AI is the size of its context window (200K tokens).

The only way to exceed this limit is to have multiple AIs work together, each managing its own context.

This is the same solution that human companies use to overcome individual cognitive limitations through division of labor. The difference is:

The coordination cost for an AI team approaches zero, while communication and coordination are the largest costs for human companies.

AI's scaling path is retracing the evolution of human organizations, but with coordination costs cut by 90%.
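The manager-and-ICs pattern, where each worker manages its own context, can be sketched as a coordinator that packs work into batches no larger than one worker's window and keeps only short summaries. All names here (`WorkItem`, `planBatches`, `coordinate`, `Worker`) are hypothetical, and the greedy packing is an assumed strategy for illustration, not what Coordinator Mode is known to do.

```typescript
interface WorkItem {
  name: string;
  tokens: number; // estimated context cost of this item
}

// Stands in for one spawned agent with a fresh context; returns a short summary.
type Worker = (batch: WorkItem[]) => string;

// Greedily pack items, in order, into batches that each fit one worker's window.
function planBatches(items: WorkItem[], windowTokens: number): WorkItem[][] {
  const batches: WorkItem[][] = [];
  let current: WorkItem[] = [];
  let used = 0;
  for (const item of items) {
    if (item.tokens > windowTokens) {
      throw new Error(item.name + " alone exceeds one worker's window");
    }
    if (used + item.tokens > windowTokens) {
      batches.push(current); // close the full batch and start a new one
      current = [];
      used = 0;
    }
    current.push(item);
    used += item.tokens;
  }
  if (current.length > 0) batches.push(current);
  return batches;
}

// The coordinator never holds the workers' full contexts, only their summaries.
function coordinate(
  items: WorkItem[],
  windowTokens: number,
  worker: Worker,
): string[] {
  return planBatches(items, windowTokens).map((batch) => worker(batch));
}
```

With a 200K-token window, items of 120K, 100K, and 50K tokens total 270K and cannot fit in one context, but they split into two batches (the first item alone, then the other two together), each handled by its own worker.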

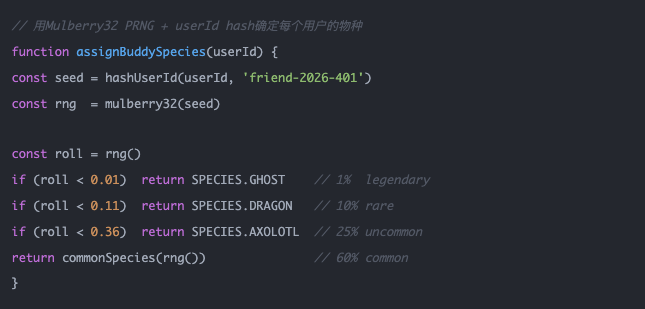

06 Secret Six: BUDDY shows Anthropic knows that emotional attachment is the ultimate weapon for product stickiness.

Sarah: Many people outside call this BUDDY feature a gimmick, but I don’t see it that way. Duolingo achieved one of the highest DAU/MAU ratios globally with just a green owl.

Alan: The key is that deterministic seed—your species is determined by your user ID hash, so it’s always that one dragon, never anyone else’s. That’s what makes it addictive.

The species name is hidden in the source code as an array of character codes passed to String.fromCharCode().

Anthropic explicitly does not want it to appear in string search results.
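Both tricks, the deterministic seed and the hidden names, are simple to illustrate. The sketch below is an assumption about the general technique only: the hash function (a basic polynomial string hash), the species list, and the names `hashUserId`, `decode`, and `speciesFor` are hypothetical and not taken from the leaked source.

```typescript
// Species stored as character codes so the names never appear as string
// literals. This list is illustrative, not the real one.
const SPECIES_CODES: number[][] = [
  [100, 114, 97, 103, 111, 110],
  [111, 119, 108],
  [102, 111, 120],
];

// Rebuild the name at runtime from its character codes.
function decode(codes: number[]): string {
  return String.fromCharCode(...codes);
}

// Simple polynomial string hash kept in unsigned 32-bit range.
// An assumed stand-in; the article does not say which hash is used.
function hashUserId(userId: string): number {
  let h = 0;
  for (let i = 0; i < userId.length; i++) {
    h = (h * 31 + userId.charCodeAt(i)) >>> 0;
  }
  return h;
}

// Same user ID in, same species out, every time and on every machine.
function speciesFor(userId: string): string {
  const index = hashUserId(userId) % SPECIES_CODES.length;
  return decode(SPECIES_CODES[index]);
}
```

Because the result depends only on the user ID, there is nothing to store or sync: calling `speciesFor` with the same ID always yields the same companion, and a plain-text search of the bundle for a species name finds nothing.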

The plan is to begin pre-launch marketing on April 1st (April Fools’ Day), with the official launch in May—a textbook viral growth trajectory.

Emotions are the strongest locking mechanism, stronger than any data migration cost.

You can migrate your codebase and configuration files, but you can't migrate "Mochi," the legendary dragon that Claude named and that has kept you company for two years.

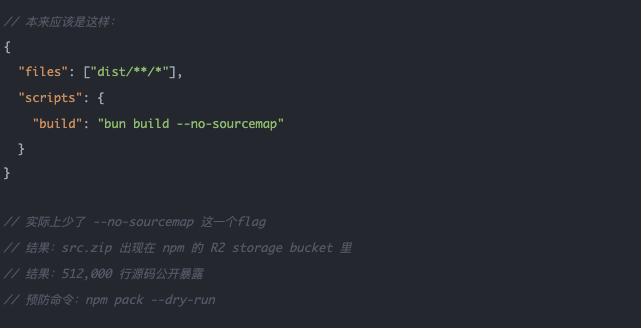

07 Secret Seven: The sourcemap leak itself is a snapshot of the entire AI industry's supply chain vulnerability.

Marcus: Did you know that Axios was also compromised on the same day? The npm package has 8.3 million weekly downloads, and its maintainer account was taken over and used to ship a cross-platform RAT.

Alan: March 31 is a weird day for npm. The fact that these two events coincided highlights the same issue: the release pipeline for modern AI products is extremely fragile.

In 2025, 454,000 malicious packages were published on npm.

On average, each npm project pulls in 79 transitive dependencies.

The battlefield for AI security is rapidly shifting from "the security of the model itself" to "deployment and supply chain security."

Claude Code represents one of the most sophisticated AI systems today, yet even it can make this kind of mistake.

08 Secret Eight: This leak itself was Anthropic’s best unintentional product marketing.

The fifth cup has gone cold. The morning in the Mission District outside the window has just begun.

Marcus: I've been investing for 20 years, and the timing here is remarkable. Six months after Anthropic’s last funding round, this leak prompted developers worldwide to independently verify Anthropic's technical moat. No PR budget could buy that.

Alan: More accurately: Competitors now know what to do, but that doesn’t mean they can do it. Google had the best search papers but didn’t create the best AI products.

The global developer community spontaneously analyzed, shared, and discussed the technical depth of Claude Code within hours—3.1 million X views, 1,100+ stars, and 1,900+ forks.

In this process, every engineer became a voluntary endorser of Anthropic.

What did Anthropic lose? Some TypeScript code.

The architecture diagram is the map; execution is the terrain.

What they are truly building is the world’s first true digital employee operating system—with its own memory, permission system, emotional interface, autonomous action capabilities, and multi-agent collaboration network.

The question waiting for an answer is not "Will AI replace human jobs?" The source code has already provided the answer:

KAIROS never stops, BUDDY builds emotional connections, and Coordinator manages teams.

The real question is: Do you want to be the one who designs the harness, or the one who is managed by it?