I feel that AI may be overtaking humanity at a pace beyond conventional understanding.

I’m not sure about your current situation, but at this point, I can’t live without AI—I rely on it to complete at least 50% of my daily tasks.

Moreover, this ratio continues to rise.

Meanwhile, as new models are released generation after generation, the efficiency and quality of my work are growing rapidly, and so is my monthly token spending.

Last night, I saw a report that Anthropic released a model so powerful that even they themselves are hesitant to make it publicly available.

The new model is called "Mythos," meaning "myth."

It is currently a preview version, so the official name is "Mythos Preview." However, it is being launched under the project name "Project Glasswing."

I’ll talk about this project later.

Last month, an internal document from Anthropic was accidentally leaked, mentioning that a larger and more powerful model than Opus, codenamed Mythos, was under development.

Subsequently, Anthropic attributed the leak to "human error" without providing further details.

The model codenamed Mythos has now been officially announced.

It has been officially announced but not yet publicly released, meaning regular users cannot use it yet.

The reason is straightforward: Anthropic believes the model is too powerful to be released to everyone before adequate safety measures are in place.

I think this sentence is worth pausing to consider for a second.

Usually, an AI company rushes to launch a new model as soon as possible to seize the market, but Anthropic’s approach this time is notably unusual.

In my view, it's not that they don't want to release it, but that they dare not.

The reason is that the Mythos model is indeed very powerful.

First, let’s review a few officially published test results.

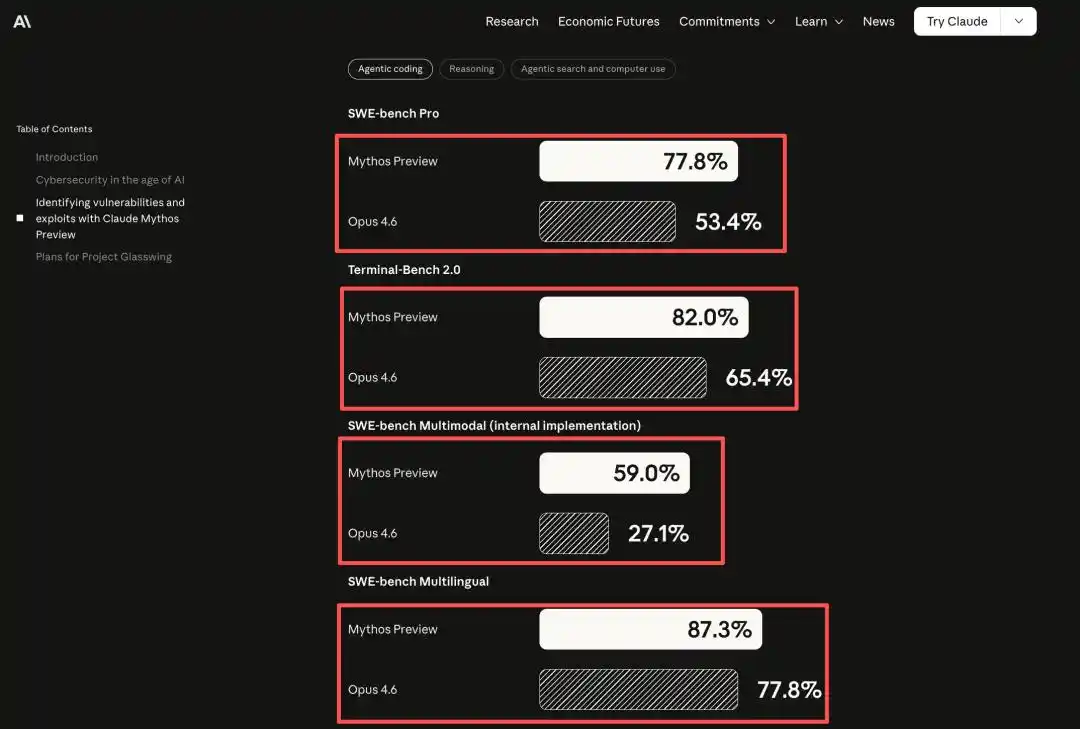

In coding ability, Mythos significantly outperforms Claude Opus 4.6, currently the strongest publicly available model, decisively surpassing it across all benchmark tests.

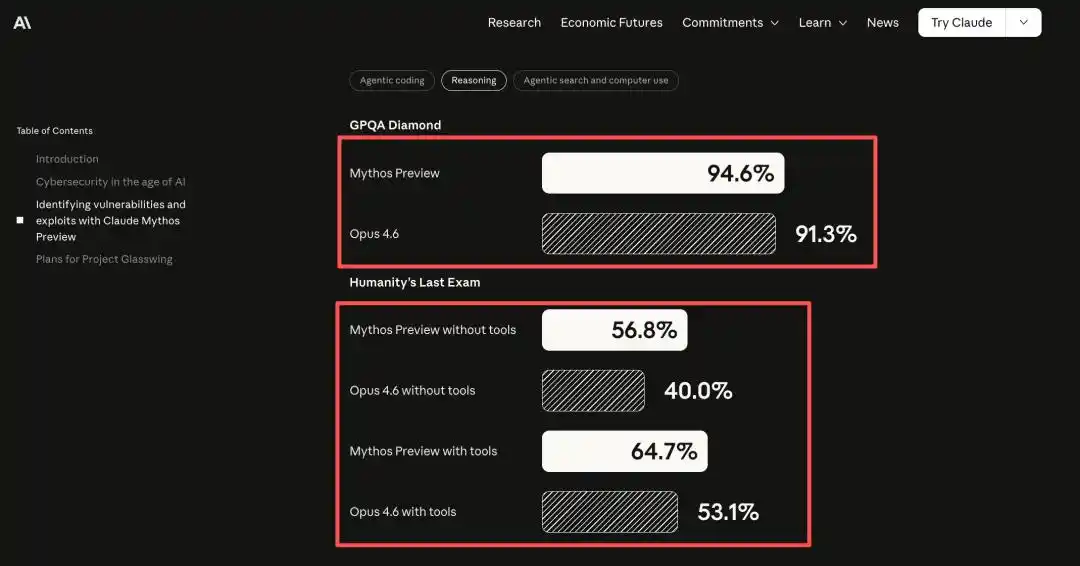

In reasoning ability, on the GPQA Diamond (graduate-level scientific QA) benchmark, Mythos scored 94.6% versus 91.3% for Opus 4.6.

Mythos also came out ahead in both the tool-assisted and tool-free tests of Humanity's Last Exam.

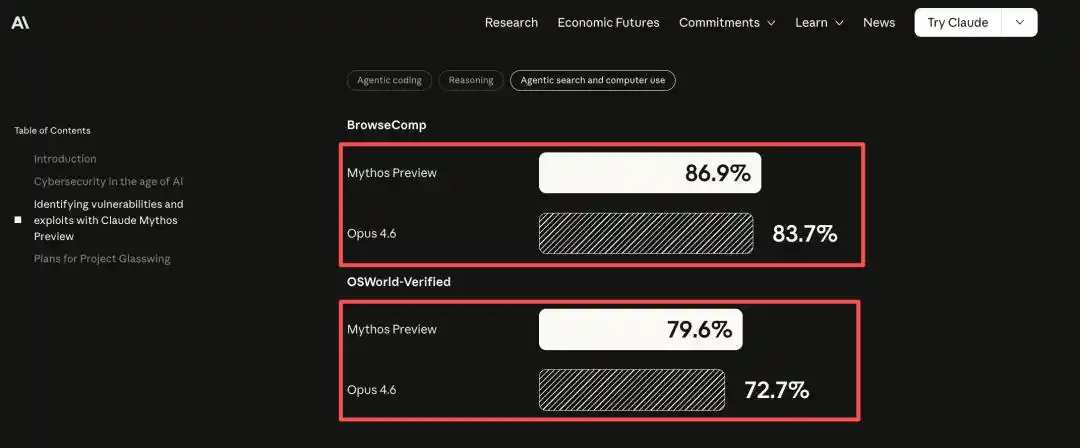

In agentic computer use, Mythos scored 79.6% on OSWorld-Verified (autonomously completing computer tasks), surpassing Opus 4.6's 72.7%.

On every dimension, Mythos outperforms Opus 4.6, with some areas showing a decisive advantage.

In some tasks, the gap has moved beyond incremental improvement to a significant leap. For example, the SWE-bench Multimodal score jumped from 27.1% to 59%, more than doubling.
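To make these gaps concrete, here is a minimal sketch that computes the absolute gain and the ratio for the scores quoted in this article. The numbers are taken from the text above; the dictionary layout and the `compare` helper are only illustrative.

```python
# Scores as quoted in this article, in percent: (Opus 4.6, Mythos Preview)
scores = {
    "GPQA Diamond": (91.3, 94.6),
    "OSWorld-Verified": (72.7, 79.6),
    "SWE-bench Multimodal": (27.1, 59.0),
}


def compare(old: float, new: float) -> tuple[float, float]:
    """Return (gain in percentage points, ratio of new score to old score)."""
    return round(new - old, 1), round(new / old, 2)


results = {name: compare(old, new) for name, (old, new) in scores.items()}

for name, (gain, ratio) in results.items():
    print(f"{name}: +{gain} points, {ratio}x the previous score")
```

On GPQA Diamond and OSWorld-Verified the new score is within about 10% of the old one, while on SWE-bench Multimodal it is roughly 2.18 times the old one.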

One of the most fundamental reasons they dare not release Mythos is its exceptional ability to breach security defenses in the software world.

In short, all systems and software worldwide have vulnerabilities, and Mythos can discover and exploit them at a level beyond human capability.

If this capability were acquired by hackers, operating systems and software worldwide would be at risk, particularly critical infrastructure and national security systems.

Anthropic made a statement in its announcement that I found more chilling the longer I thought about it:

The coding ability of AI models has reached such a high level that, in discovering and exploiting software vulnerabilities, they can surpass all but the most skilled humans.

Regarding this statement, I would like to elaborate further.

I come from a programming background, so I understand how software is built and how much code can vary between different developers.

Also, no software dares to claim it has no vulnerabilities, even if none has ever been discovered in it.

The reason some vulnerabilities remained undetected for decades is not that the systems were sufficiently secure.

Rather, finding vulnerabilities requires extremely high expertise, immense patience and energy, and a significant amount of time.

Too few people have the skills, and even fewer are willing to invest the effort.

This "scarcity of capability" forms the implicit foundation of the entire software security landscape. Once AI enters the picture, this foundation begins to weaken.

AI can outperform all but elite humans at this work, and it can be used to exploit vulnerabilities as well as to patch them.

This brings me to Anthropic's Project Glasswing.

In simple terms, this is a project that uses Mythos’s capabilities to find bugs in infrastructure systems worldwide.

Participants include a total of 12 organizations, such as AWS, Apple, Microsoft, Google, NVIDIA, Cisco, and the Linux Foundation.

This lineup covers cloud computing, operating systems, chips, browsers, financial infrastructure, cybersecurity, and the open-source ecosystem.

In other words, nearly all the key players in the global digital infrastructure are involved in this project.

The core logic of this project is simple: enable the defense side to leverage the capabilities of this top-tier AI model first.

If attackers obtain equivalent tools first, the window will be very difficult to close once it opens. Anthropic has committed to providing $100 million in model usage credits, covering the research preview period.

In addition to the 12 core institutions, more than 40 organizations maintaining critical software infrastructure have been granted access to scan their own systems and open-source projects using Mythos.

At the same time, Anthropic donated $2.5 million to the Linux Foundation and $1.5 million to the Apache Software Foundation, both of which are infrastructure pillars of the software world.

To put it simply, virtually all the apps, websites, and systems we use today are built on top of the software these foundations maintain.

In my view, Anthropic did a great job this time—not only launching a more powerful model, but also investing in global information infrastructure to help improve it.

After all, running without protection benefits no one.

You might still not fully grasp just how powerful Mythos is, but I found three specific examples from the official source that I believe speak louder than numbers.

First, OpenBSD.

This is an operating system widely recognized for its extremely strong security. It is used in a lot of critical infrastructure, and code from the project is also found in systems such as Apple's iOS and Android, as well as in some enterprise and institutional internal systems.

Mythos discovered a 27-year-old vulnerability that allows attackers to remotely crash a target machine simply by connecting to it.

27 years! It’s not that no one cared—it’s that no one found it.

Second, FFmpeg.

Almost all software that processes video relies on it, and it’s likely present in nearly every video player you use.

A vulnerability hid in a line of code written 16 years ago, and automated testing tools had exercised that line 5 million times without catching it.

But Mythos found it.
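How can a line of code be executed millions of times without its bug being caught? The toy sketch below illustrates one way this happens. It is a hypothetical example, not FFmpeg code: the function `fragile_divide`, the trigger value `2**31`, and the input generator are all invented for illustration. The point is that running a line is not the same as feeding it the one input that breaks it.

```python
def fragile_divide(value: int) -> int:
    """Hypothetical toy function, not taken from FFmpeg."""
    # This single line runs on every call, so a coverage report counts it as
    # exercised. It only fails for one specific input: value == 2**31.
    return 1_000_000 // (value - 2**31)


def count_failures(inputs) -> tuple[int, int]:
    """Call fragile_divide on each input and return (calls, failures)."""
    calls = failures = 0
    for value in inputs:
        calls += 1
        try:
            fragile_divide(value)
        except ZeroDivisionError:
            failures += 1
    return calls, failures


# A deterministic stand-in for broad automated testing:
# 100,000 different inputs, all of them below 2**31.
broad_inputs = ((i * 7919) % 1_000_003 for i in range(100_000))
broad_result = count_failures(broad_inputs)

# One carefully chosen input, the kind found by reasoning about the code.
targeted_result = count_failures([2**31])

print("broad testing (calls, failures):", broad_result)
print("targeted input (calls, failures):", targeted_result)
```

Here the broad run calls the buggy line 100,000 times with no failure, while the single targeted input fails immediately. Real vulnerabilities are far subtler, but the asymmetry is the same.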

Third, the Linux kernel.

This doesn't need much explanation: it is essentially the infrastructure of the entire internet, and the place where caution matters most.

Mythos didn't just discover several isolated vulnerabilities—it linked multiple vulnerabilities into a single attack chain.

It started with regular user permissions, escalated privileges step by step, and ultimately gained full control over the entire machine.

Regarding Linux, this is completely different in nature from the previous two cases.

Finding vulnerabilities is an analytical skill.

But chaining vulnerabilities together is a strategic skill.

It is like product management: many product managers can create wireframes, write documentation, and perform data analysis, and these are individual skills. Connecting operations, product, and business together is a strategic ability.

A model capable of planning attack paths is no longer just an auditing tool—it is closer to an intelligent agent that can take proactive actions in a digital environment.

In all three cases above, Anthropic followed a process of discovery, reporting, remediation, and then disclosure, and all issues have now been resolved.

At this point, you can see just how powerful Mythos is: like a fierce beast held back in its cage, with the real world needing to prepare itself before it is unleashed.

I'd like to share a few observations here, which may mark the beginning of real change to come.

First, the security assumptions in the software world are breaking down.

The software stability we take for granted today does not come solely from well-designed systems; to a large extent, it relies on the scarcity of attack capabilities.

To put it simply, it's not that the software is strong enough; it's that the attackers aren't.

Finding vulnerabilities costs money, constructing exploit chains takes time, and large-scale scanning requires resources. As a result, a great deal of technical debt, long-standing bugs, and outdated systems continue to exist without ever being properly addressed.

It is the same in product development: feeling that the logic is airtight and everything seems fine doesn't mean we are truly safe. It may simply mean we have reached the upper limit of our own capabilities.

Mythos demonstrates the ability to compress the time window between vulnerability discovery and exploitation from months to just minutes.

What does a few minutes mean?

It means that patching and remediation have begun to lag behind the speed of attacks.

Second, the open-source community will feel the pressure first.

Most modern software today relies on a vast number of open-source dependencies—invisible in everyday use, but when compromised, they impact the entire industry simultaneously.

Some readers may not be familiar with this concept. Put simply, nearly all the software we use today is built on open-source projects, and the source code of these projects is visible to everyone.

In the future, when models can scan open-source projects continuously and at scale, the pressure faced by open-source maintainers will be on a completely different level.

This is also why Anthropic donated to the Linux Foundation and the Apache Foundation.

It's not about charity. It's about recognizing that, in the AI era, open-source infrastructure is the most vulnerable yet most critical foundation of the entire digital world, and Anthropic simply doesn't want to be seen as the villain.

Third, the role of humans will shrink, and AI will begin to compete with AI.

In the past, the value of an internet product's security team lay in human judgment, accumulated experience, and deep understanding of systems.

In the future, security will follow a different logic.

It’s about whose model is stronger, whose tools integrate faster, and who can embed AI auditing at the very beginning of the development process.

This is not about programmers being replaced, but about the production model of the security industry itself being restructured.

On the flip side, thousands of critical vulnerabilities can be discovered within weeks. The problem is, attackers will eventually have tools of equal capability.

By then, the security of software products will no longer be a battle between people, but a strategic contest between models.

This time, Anthropic didn't just announce capabilities; it also disclosed the risks. That is the kind of honesty the entire industry likely needs most at this stage.

Everyone is talking about how AI is changing work efficiency, and that's perfectly fine.

But Mythos also reminds us that the leap in AI capabilities will eventually propagate from the content world to the software world, and then to the entire digital infrastructure.

When the content world was rewritten, what changed was the logic of traffic.

When the software world is rewritten, what changes is its very foundation.

At this moment, I recall a line from the movie "2012," which I’ll use as the conclusion of this article.

Whoever you are, regardless of race or nationality, tomorrow we are all the same!

This article is from the WeChat public account "Tang Ren" (ID: RyanTang007), authored by Tang Ren.