Zhixidongxi reported on May 7 that early this morning, Anthropic unveiled several major updates at the Code with Claude developer conference: relaxed rate limits for developer API calls, the launch of three new capabilities for Claude-hosted agents, over ten new features for Claude Code, and a significant partnership with SpaceX.

Starting today, Anthropic is doubling the Claude Code usage limit from 5 to 10 hours for Pro, Max, Team, and per-seat Enterprise plans; removing peak-time usage throttling for Claude Code on Pro and Max accounts; and relaxing API rate limits for the Claude Opus model.

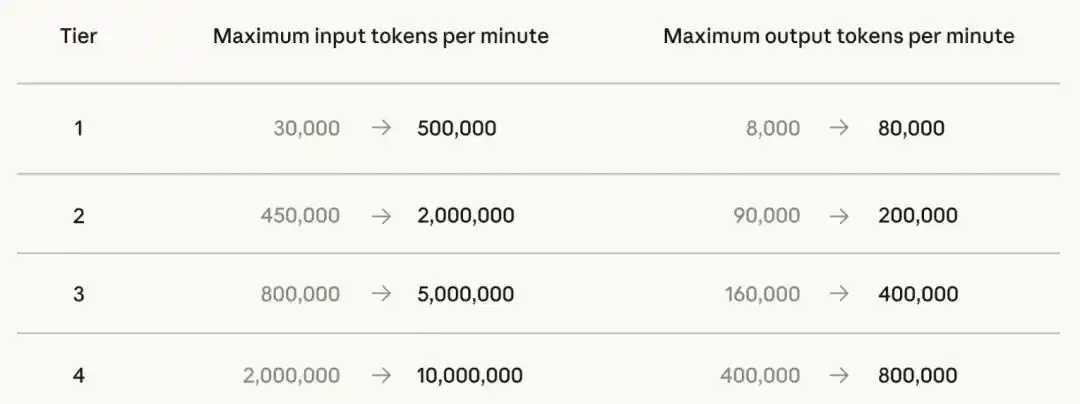

Updated API rate limits for the Claude Opus model

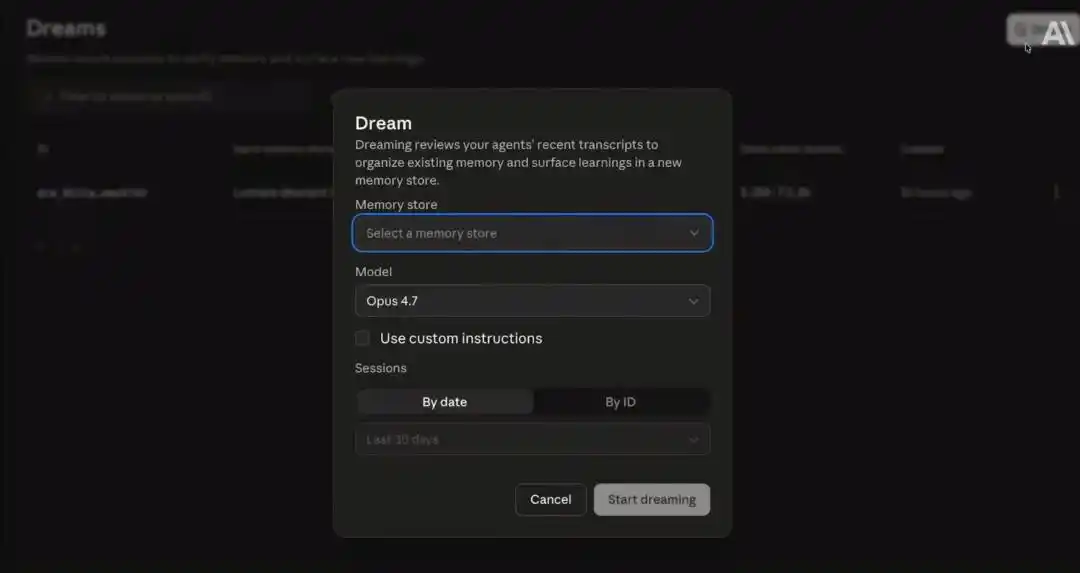

Second, Claude’s hosted agents now feature three new capabilities: multi-agent orchestration, Outcomes, and Dreaming. Dreaming is currently in research preview and requires an application to try. Outcomes, multi-agent orchestration, and memory capabilities are now available as part of the Managed Agents service and are open for public beta.

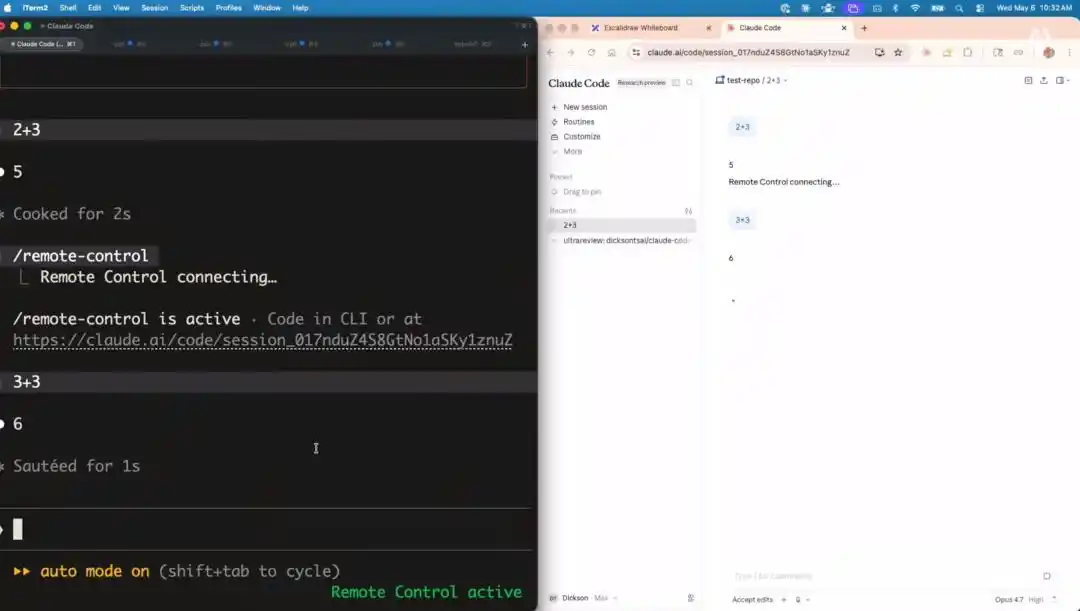

Claude Code introduces several new features, including remote control, UI refresh, flicker-free rendering, and access control.

Finally, to further expand its service coverage, Anthropic has partnered with SpaceXAI (formerly xAI) and will have full access to the entire computing resources of the SpaceXAI Colossus 1 data center. This partnership will add over 300 megawatts of computing capacity this month, equivalent to more than 220,000 NVIDIA GPUs, which will be used to enhance service capacity and user experience for Claude Pro and Claude Max subscribers.

This compute expansion is another major announcement in Anthropic’s series of significant compute initiatives.

Previously, Anthropic reached a power agreement with Amazon for up to 5 gigawatts of computing capacity, with nearly 1 gigawatt of additional capacity scheduled to come online by the end of 2026; signed a 5-gigawatt computing agreement with Google and Broadcom, with related capacity set to gradually launch by 2027; established a strategic partnership with Microsoft and NVIDIA encompassing $300 billion in Azure cloud computing resources; and partnered with Fluidstack to invest $500 billion in U.S. artificial intelligence infrastructure development.

At 4:00 AM today, Dario Amodei, co-founder and CEO of Anthropic, along with Daniela Amodei, co-founder and president of Anthropic, held a conversation with Ami Vora, Chief Product Officer of Anthropic.

Dario said that, thanks to Claude, the world has paid more attention to Anthropic than ever before. Anthropic’s ARR has grown exponentially—previously, they expected it to increase gradually by up to 10 times, but instead saw an 80-fold increase, and they are now providing more computing power than ever at the fastest possible pace. However, he also mentioned that he hopes the growth doesn’t continue, as it would become absurd and unsustainable.

Anthropic Chief Product Officer Ami Vora, Anthropic co-founder Daniela Amodei, and Anthropic co-founder and CEO Dario Amodei (from left to right)

01. Claude Hosted Agent Upgrade: AI Learns to Self-Reflect and Evolve

Anthropic has enhanced Claude's hosted agent with three new capabilities:

First is multi-agent orchestration capability, allowing developers to assemble agent clusters to collaboratively accomplish highly complex tasks.

Next is the Outcomes feature, which allows developers to precisely define the success criteria for a task, enabling Claude to automatically iterate until the task meets the specified standards.

Finally, there is the autonomous reasoning (Dreaming) capability. With Dreaming, Claude can independently plan tasks, proactively review past conversation logs, identify gaps in its own abilities and lessons it should have learned, and autonomously integrate these insights directly into its memory.

Anthropic’s product lead Angela Jiang and engineer Katelyn Lesse founded a startup called Lumara on-site, leveraging three new features of Claude-hosted agents, and developed a genetic algorithm software for the startup to enable autonomous lunar landings of drones.

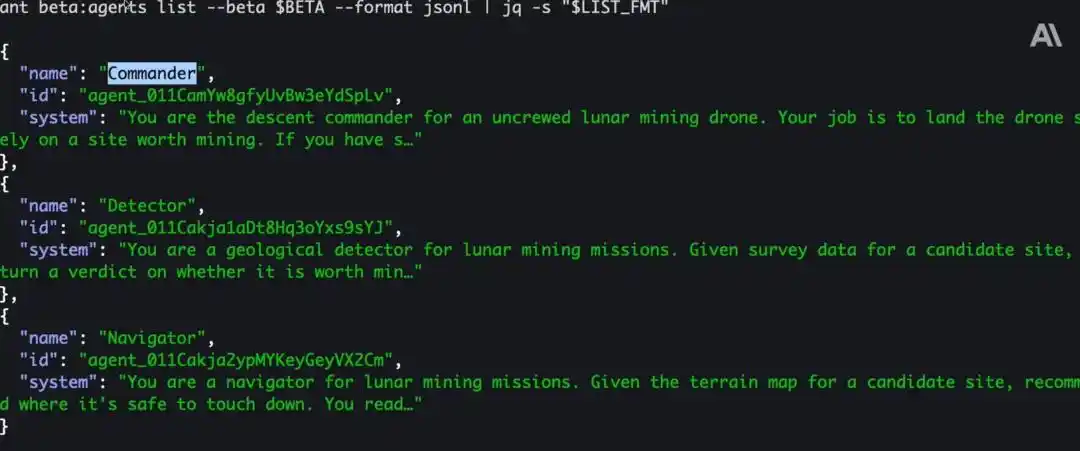

They first established a hypothetical scenario in which a client wants to deploy a drone on the Moon to mine a fictional mineral resource, then demonstrated the specific configuration process using the Claude command-line tool.

First, Lesse introduced the multiple agents that need to collaborate on the task: the commanding agent is responsible for ensuring the smooth progression of the entire mission; the reconnaissance agent identifies suitable landing sites rich in high-quality mineral resources; and the navigation agent ensures the drone lands safely and flies precisely to the designated target location.

During the execution of the entire task, the master agent initiates a task session, and each sub-agent operates on an independent execution thread with its own dedicated context window.

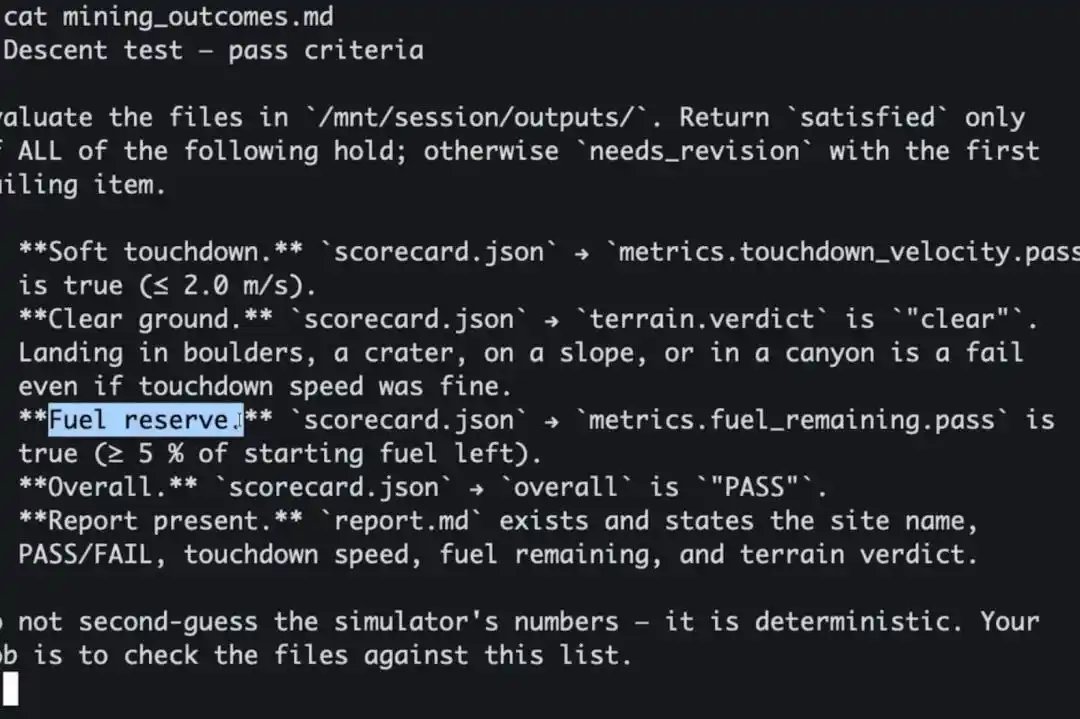

Next, based on the Outcomes feature, a high-level agent is configured to ensure the predefined goals are achieved. The concise Markdown file below clearly lists the criteria for determining task success: the drone must land smoothly and softly; it must land on a flat, obstacle-free surface; and sufficient reserve fuel must be retained to ensure the drone can safely return to Earth.

To establish this set of evaluation criteria for the task objective, the demonstrator sends an event to the task session, defining these evaluation rules as the acceptance criteria for the goal.

In addition, during task execution, a separate scoring and review agent is created. This agent evaluates the conversation throughout, determining whether each round of execution meets the predefined acceptance criteria. Developers can also set the maximum allowed number of iterations.

Next, the testing phase begins: the client provided data for six hypothetical landing pages and will run multiple simulated sessions to evaluate real-world performance. On Lumara’s backend dashboard, simulated runs have already been completed for all six landing pages; the results show that four were correctly identified, but landing pages three and four still offer room for optimization.

The next step is to upgrade and optimize this system. The demonstrator enters the Claude developer console, opens the Dreaming (autonomous reasoning) interface, clicks the button labeled "Dream," and selects a memory repository. The autonomous reasoning agent then reviews all past simulated conversations and writes the summarized insights into the memory repository. All future task conversations will be able to reference these accumulated experiences.

More importantly, the agent will automatically generate a set of landing operation guidelines. All future task sessions can refer to this handbook, which compiles best practices and rules derived from past tasks.

02. Launching over ten updates, focusing on user-friendliness and autonomous intelligence

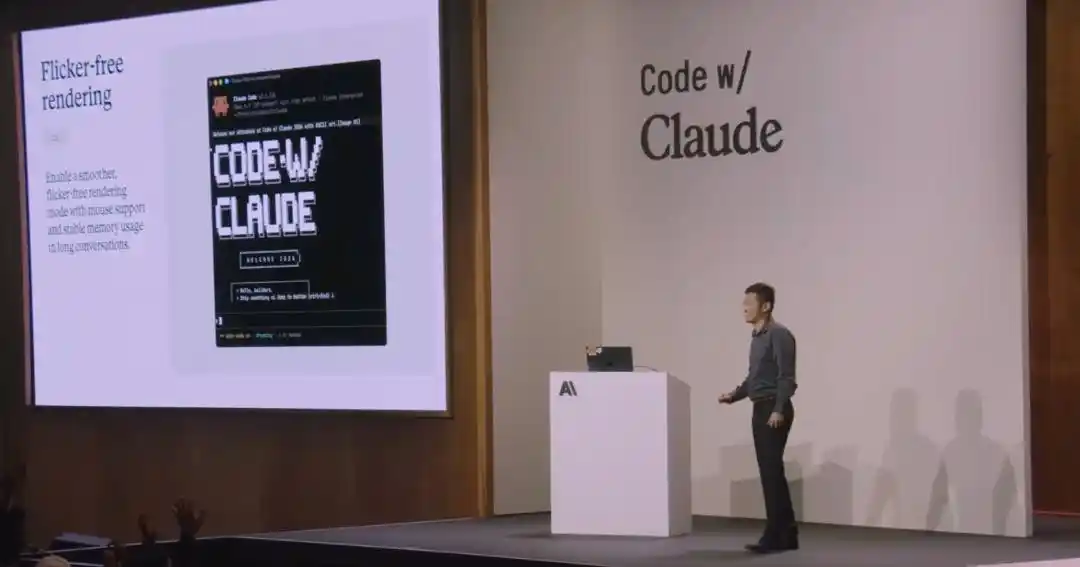

Claude Code engineer Dickson Tsai introduced over ten updates to Claude Code across two key areas.

The first major focus is on developer experience: how to make using Claude Code daily more intuitive and user-friendly.

Remote Control: Users can leave tasks running in the background on their computer and continue the same session and development environment on their mobile device while on the go.

Flicker-free Rendering: The previous version appended content to existing views, causing frequent redraws when view misalignment occurred. The current terminal UI supports full-screen mode and employs virtual list rendering technology. This optimization eliminates interface lag and flickering, enabling clickable interactions with code elements within the terminal, while maintaining stable memory usage even with extremely long session logs.

During Claude's operation, developers can clearly view the rendering results. Even with extremely long content, there are no issues such as rendering glitches or layout distortions.

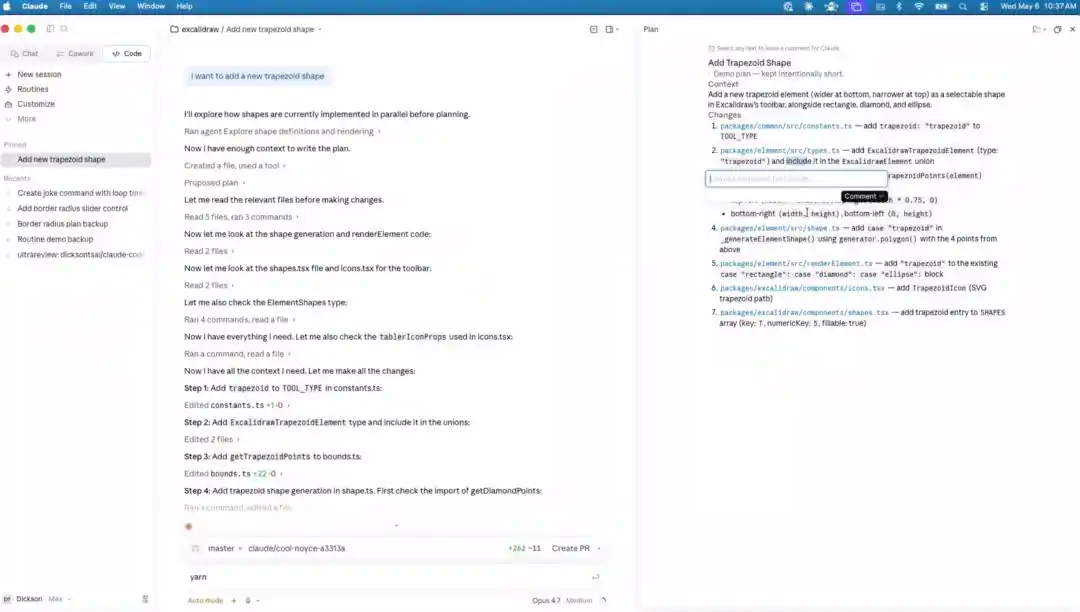

UI Refresh: Added filtering and grouping capabilities to organize items and tasks. Developers can now freely open various panels via drag-and-drop, and easily switch between multiple view layouts.

Developers can now directly navigate to the planning summary for each section, leave comments at any time, and all comments will be consolidated and followed up by Claude. In addition to the planning view, developers can switch to other views and leave comments directly in the corresponding locations. Finally, developers can open any file within the entire working directory for quick editing and modifications.

When the session log is long, developers can hover over any message to assign a custom title to any message in the session, which will automatically generate a table of contents at the top.

The second topic is autonomy.

Auto Mode: Claude can now autonomously perform routine tasks such as granting permission prompts, creating branches, and executing build commands, handling everything independently.

Claude Code has introduced a permission mode: leveraging a security classifier, AI automatically makes permission decisions on behalf of developers. The classifier primarily checks two factors: whether the operation poses a destructive risk, and whether it exhibits characteristics of prompt injection. If the tool call is deemed secure, it is automatically approved and executed; if risks are detected, the operation is blocked and requires manual confirmation from the developer.

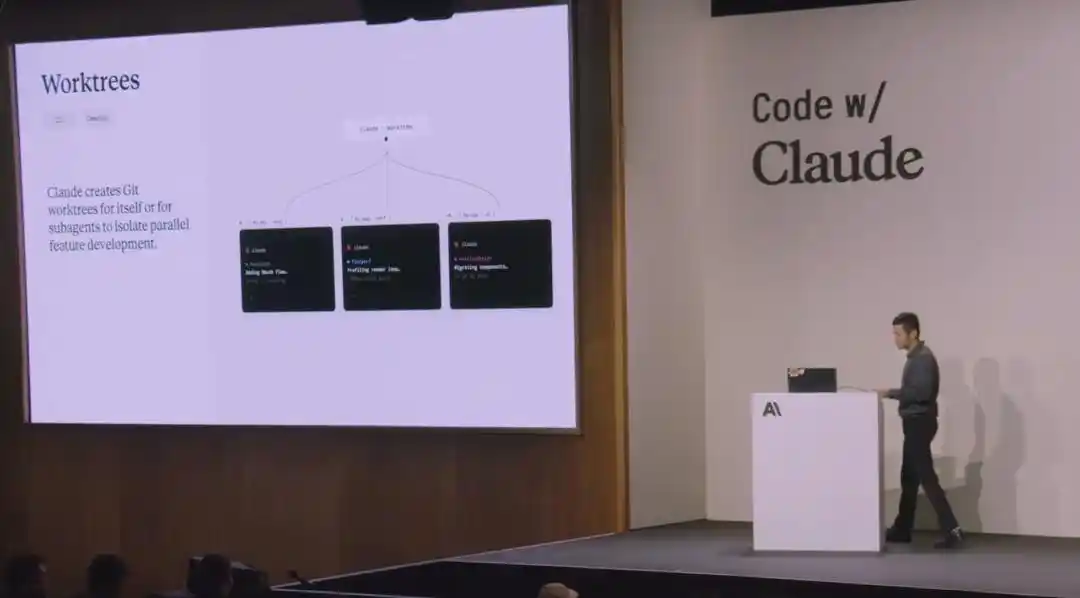

Worktrees: Help developers fully isolate different tasks and maintain clean, independent code environments. Native Git worktrees have many usage pain points and limitations; Anthropic has optimized and refined them to provide developers with a more user-friendly and intuitive interface.

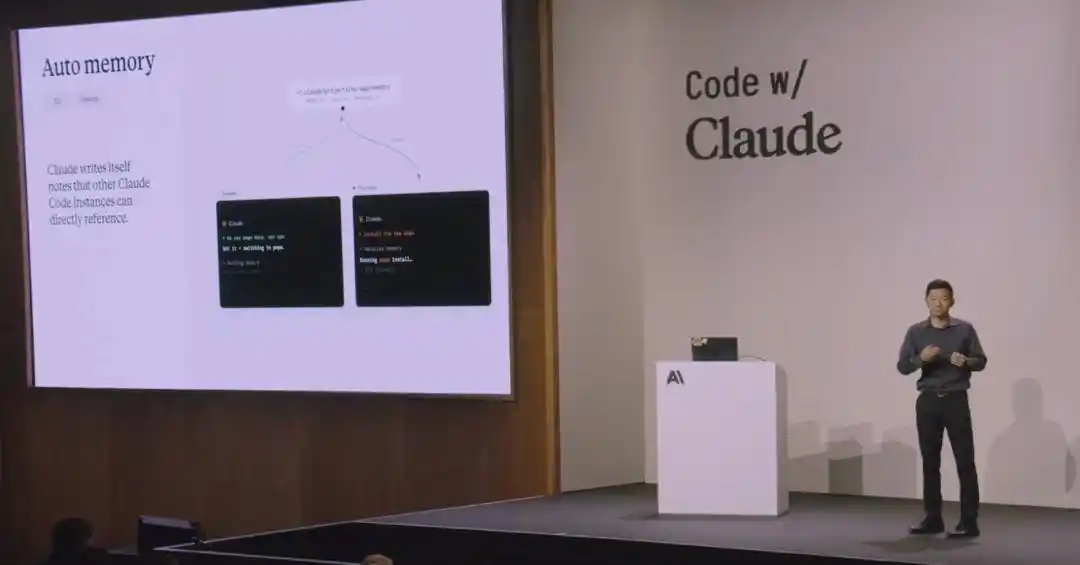

Auto Memory feature: Claude can accumulate knowledge across sessions, remembering key build commands, debugging insights, project preferences, and other information. Claude automatically determines whether this information will be useful in future conversations and decides whether to save it.

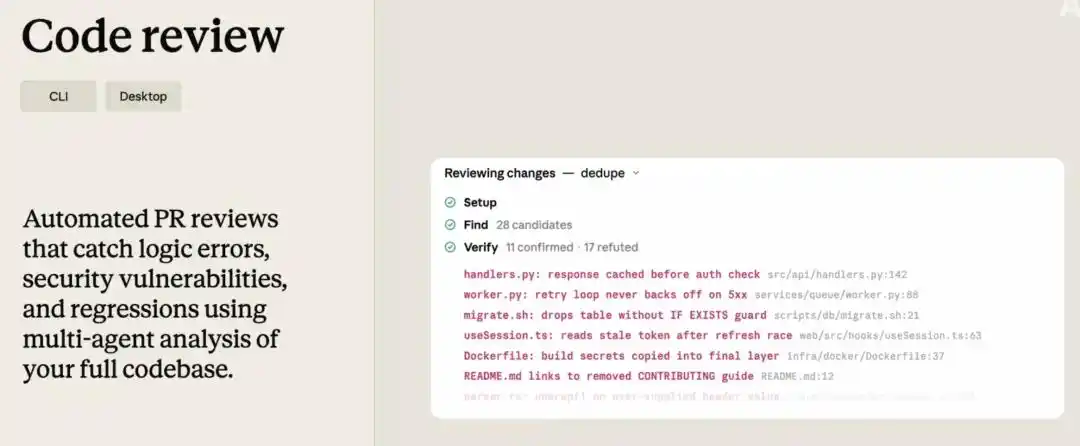

Multi-stage, multi-agent code review functionality: The system initiates a group of review agents that independently examine the code from different perspectives, then validates and confirms all review results. This mechanism uncovers many issues that would otherwise take hours to identify.

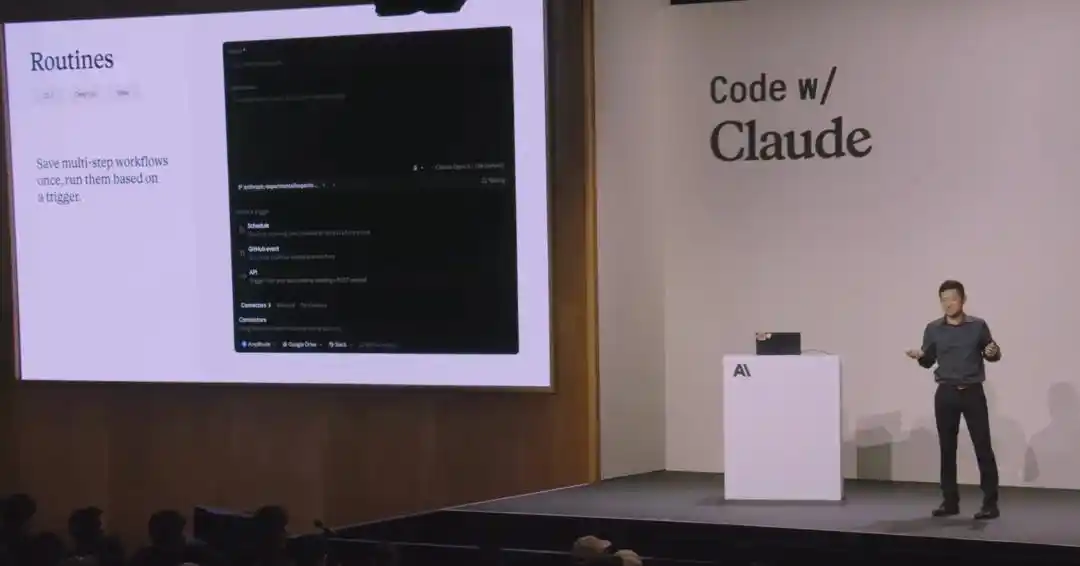

Routines: This feature is currently in preview. To use it, simply configure your prompts, code repository, and related connections once, then select trigger options such as scheduled Cron jobs, daily fixed-time execution, or GitHub webhook events—Claude will then run automatically.

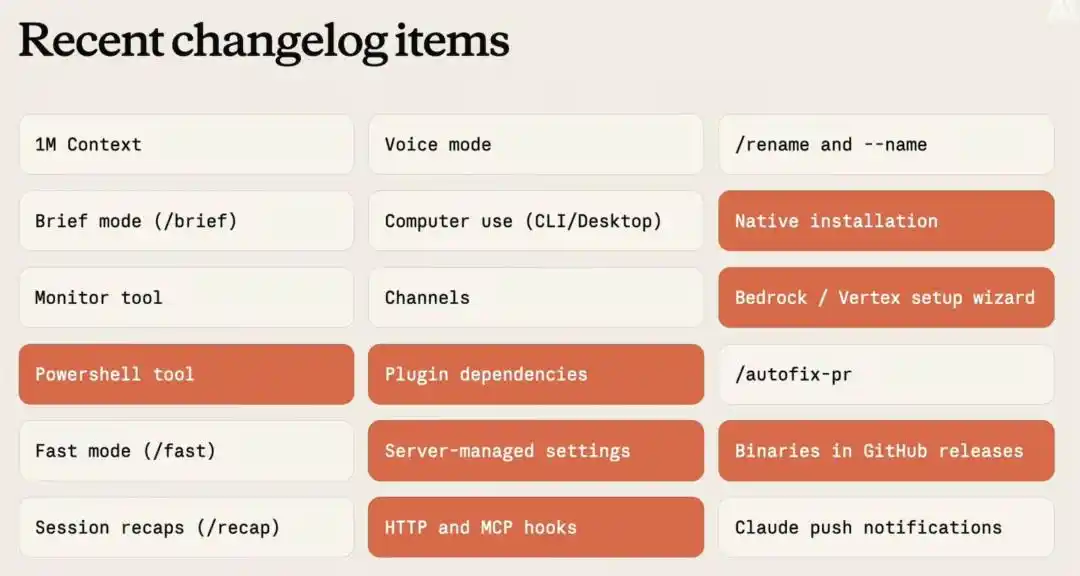

The recent update also includes the following:

03. Define three key future R&D directions—design architectures with the next-generation model in mind.

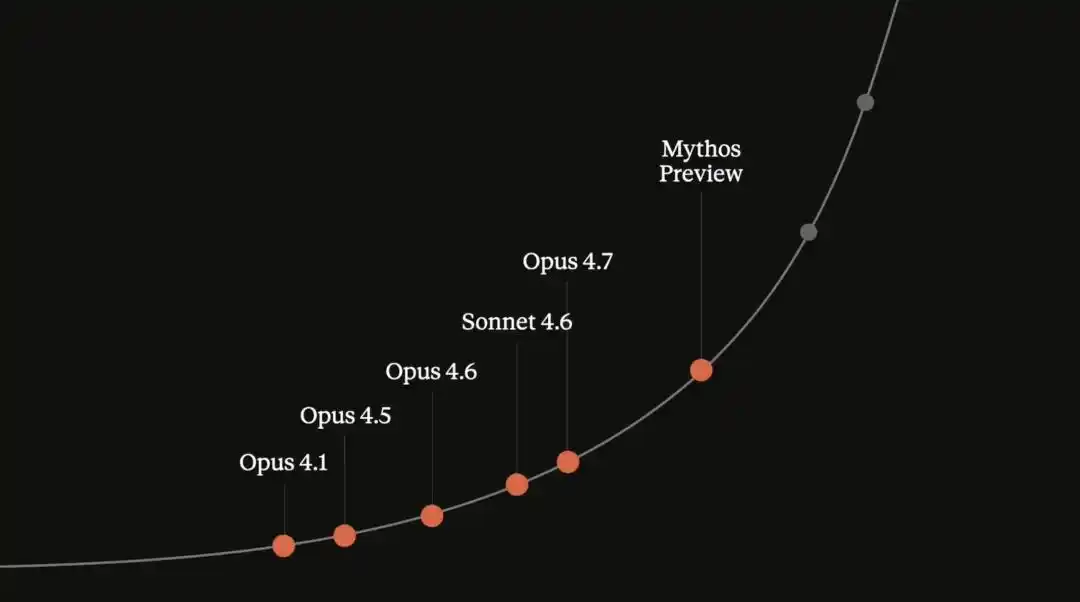

Dianne Penn said that Anthropic has released 18 versions of the Claude model, including Sonnet, Opus, and the new Mythos series, all of which are now available to developers.

Over the past year, they have gradually launched eight cutting-edge large models for developers. The exponential advancement of these models means their intelligence is becoming more logical, more strategic, and more thoughtful.

Future developers will have proactive, 24/7 online agents that know exactly what tasks to perform and maintain consistent, on-track logic throughout. The way everyone uses and builds upon the Claude model must also evolve accordingly.

Therefore, internally at Anthropic, it is believed that architecture design should be oriented toward the next generation of models, not merely adapted to the current version. The developers who ultimately succeed will proactively optimize their architectures to prepare for the next leap in intelligent capability, rather than focusing solely on incremental performance improvements. This demands the industry to continuously establish and build higher-standard evaluation systems and boldly develop cutting-edge prototypes that may currently seem unfeasible.

For businesses, the two main challenges are obtaining output results that meet expectations and rapidly launching and delivering services.

The Claude platform was built for this purpose, featuring API foundational primitives specifically fine-tuned for Claude models. It provides enterprises with the underlying infrastructure to build and scale agent systems, along with a comprehensive set of management capabilities for operating and maintaining these systems.

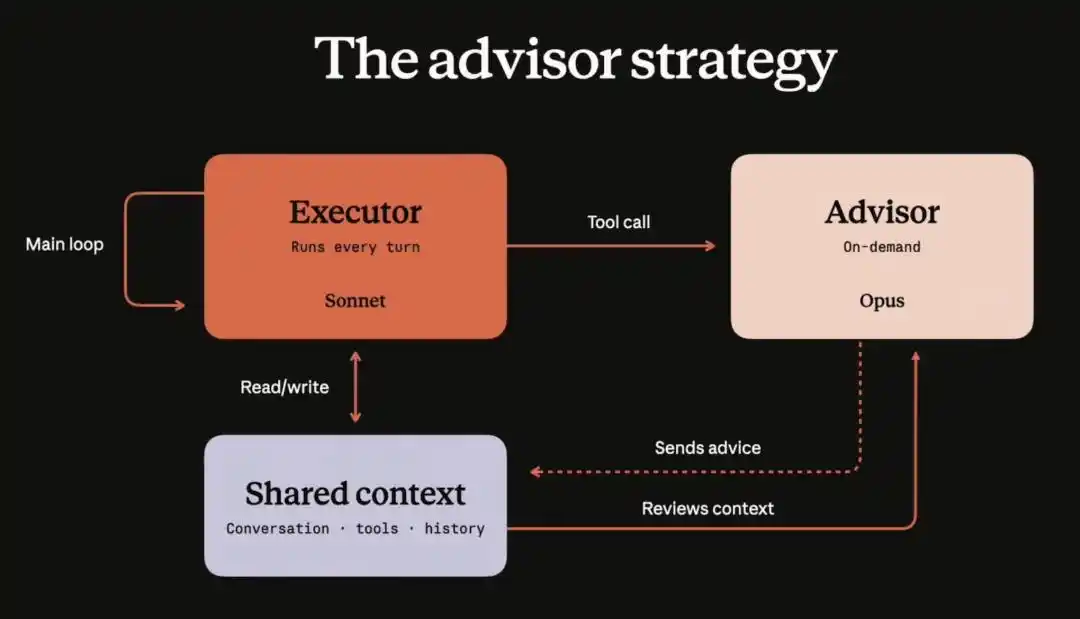

Angela said that the most common issue businesses face is their urgent need for advanced intelligent capabilities, yet they struggle to implement and utilize them effectively. One of the solutions proposed by Anthropic is consulting strategy capabilities.

Enterprises only need to update the tools array configuration in the Messages API.

Specifically, they provide enterprises with an agent architecture that separates the execution layer from the decision advisory layer. When performing tasks, enterprises can use lightweight small models to reduce costs. When the small model needs guidance on the next step, it can instantly invoke a larger model for advice.

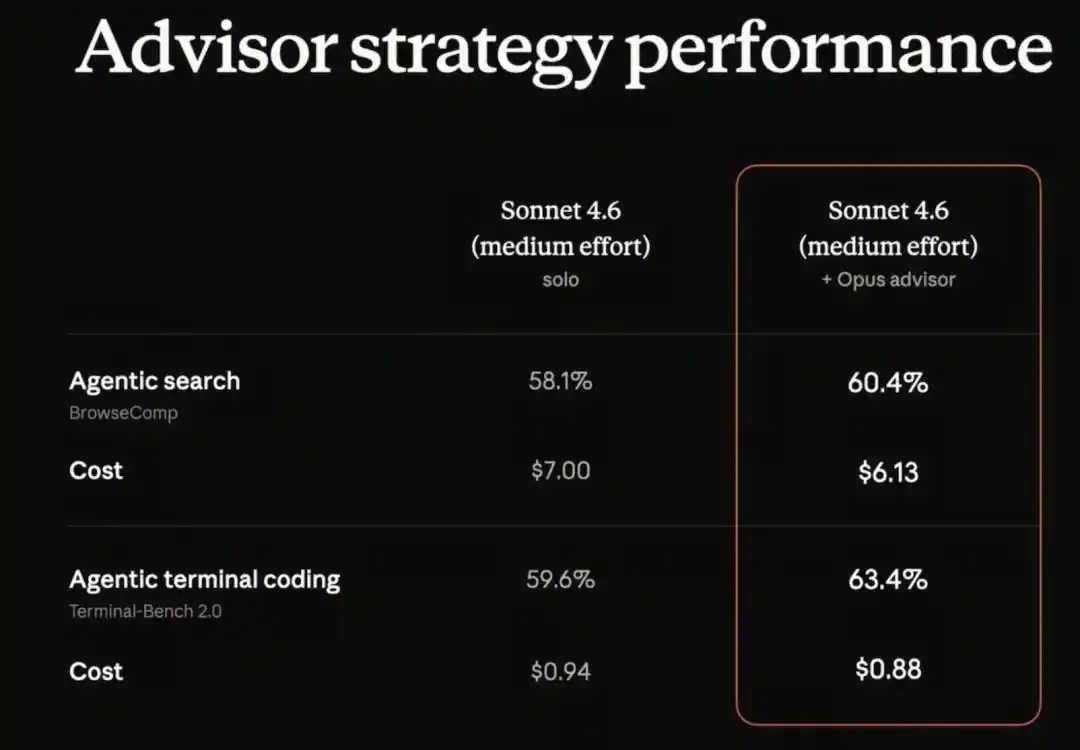

In practical implementation, companies can use lightweight models for task execution while relying on the Opus advanced model as a decision advisor. When they tested the combination of Sonnet for execution and Opus as advisor, the overall performance significantly exceeded that of using Sonnet alone, and the total cost of the entire solution was lower than using Sonnet by itself.

04. Conclusion: Anthropic is going all in—model development, computing power, and commercialization together?

Competition among large models is intensifying, and Anthropic has also revealed its development directions and future plans:

First, with stronger judgment and higher-quality coding skills, developers can empower Claude to handle autonomous engineering tasks;

Second, high-quality memory capabilities enable longer context windows, allowing developers to carry out extended, complex tasks while achieving superior output results;

Finally, there is multi-agent collaboration capability, enabling the formation of agent teams to work together, with multiple Claude instances dividing tasks and collaborating to accomplish complex objectives.

Large model companies are now fully transitioning to compete on comprehensive capabilities including computing infrastructure, models, ecosystems, and commercialization. Anthropic not only upgraded its own products but also announced a major partnership with SpaceX, further bolstered by the computing power from Amazon, Google, and Microsoft, widening its lead in total computing resources over industry competitors. Meanwhile, with simultaneous reductions in API pricing and increases in usage limits, Anthropic’s products are demonstrating superior cost-performance, likely accelerating the migration of numerous small and medium-sized businesses from other large model platforms to the Claude ecosystem, further solidifying its market share in the enterprise AI sector.

This article is from the WeChat public account "Zhi Dongxi" (ID: zhidxcom), author: Cheng Qian, editor: Li Shuiqing