Overnight, your phone, computer, router, and even smart toilet may all need to urgently patch vulnerabilities.

This isn't something we're making up—Anthropic has released its strongest model to date, Claude Mythos Preview.

The new model can autonomously discover 0-day vulnerabilities (critical flaws that developers do not yet know about and have no defenses prepared for), and it can even generate complete exploit code for them.

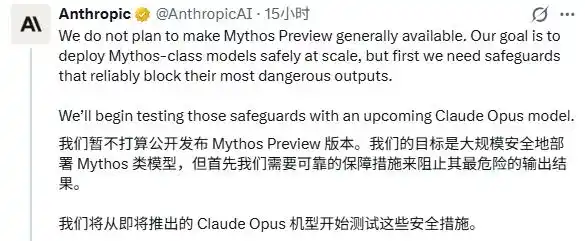

Seeing how powerful this capability was, Anthropic itself got nervous, so it locked it away under the pretext of “too advanced to display,” offering access only to 12 reputable major companies such as Amazon, Apple, Microsoft, and Google.

Meanwhile, they also formed a group to launch an additional initiative called Project Glasswing, encouraging everyone to use Mythos for cybersecurity defense.

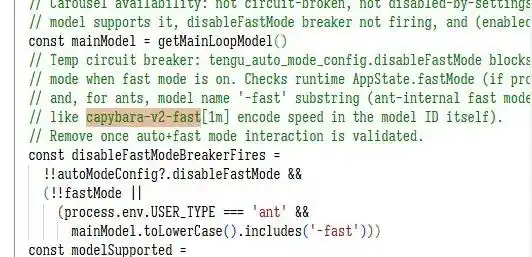

Actually, we had heard rumors about this new model before. At the end of last month, Anthropic experienced a breach that exposed over 3,000 confidential documents, and some people noticed that, above the massive Opus model, there was another hidden project codenamed “Capybara.”

They probably thought the original name was too cute, so they changed it to Mythos for the official launch—evoking a sense of myth and golden legend.

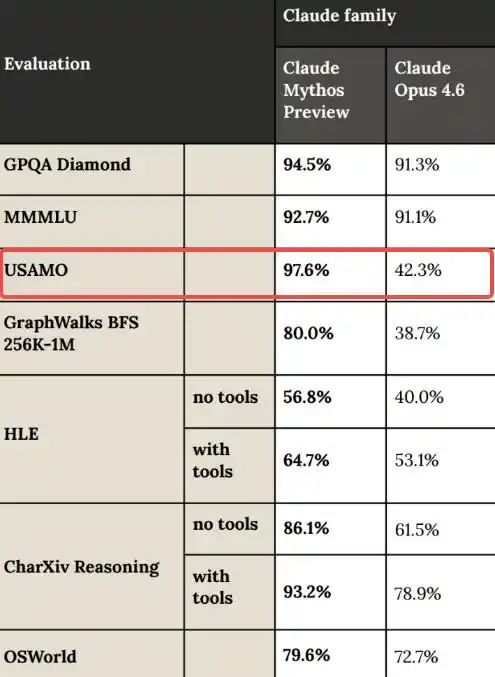

Although we bystanders can't get our hands on it just yet, the official numbers alone are staggering.

In the past, new models from various companies typically only improved benchmark scores by 3% to 5%.

But this time, Mythos is playing in a different league:

USAMO (United States of America Mathematical Olympiad): Score increased directly from 42.3% in the previous generation to 97.6%;

Cybench (Cybersecurity Benchmark): Perfect score achieved. Even Anthropic’s official statement was slightly humblebragging: the current Cybench benchmark is too easy and has lost its testing value for new models.

On CyberGym (professional vulnerability reproduction testing), it scored 83.1%, compared to the previous strongest public model, Opus 4.6, which scored only 66.6%.

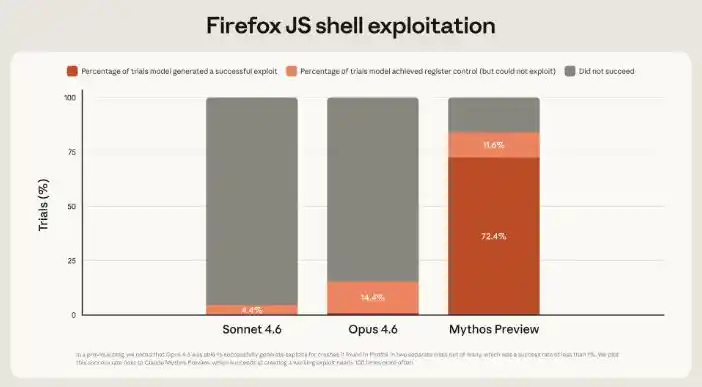

Firefox JS shell (exploit testing) is the most extreme case—the exploit capability has improved by nearly 80 times compared to Opus 4.6...

With gains measured in double-digit percentage points, or even in multiples of tens, it's no surprise that Anthropic claims Mythos can hold its own against “the top human security experts.”

By now, our friends must be thinking the same thing we are: so powerful, so impressive... but this plot feels a bit familiar.

First, "accidentally" leak some hints, then have the official team release a few stunning numbers, and finally shift tone: "Oh no, our model is too powerful. It might destroy the world, so we can't let you use it."

Wasn't the last one to do that GPT-5? And before that, it was probably Sora?

OpenAI keeps playing the riddle game every day—how has their reputation come to this? And now, with its trustworthy appearance, Anthropic is playing the same game?

Not to mention that Anthropic is set to IPO this year.

So netizens immediately erupted—some criticized it as hype to boost its IPO prospects, while others spoke more bluntly, saying those developing large models simply don’t care about ordinary users.

Even renowned developer Simon Willison quipped that saying “our model is too dangerous to release” is a guaranteed traffic magnet in the AI community.

However, while netizens may criticize it, once you see how it actually works, you might agree that releasing it now is like handing out an AK-47 at a kindergarten.

Two official case studies are enough to give a sense of it.

First, Mythos discovered a vulnerability in OpenBSD that dates back to 1998.

What does this mean? OpenBSD is renowned as one of the most security-focused operating systems in the world, and it is widely used to run firewalls and other critical infrastructure.

A flaw that top human experts failed to catch in 27 years of scrutiny under the microscope was spotted by an AI over a cup of tea...

Another powerful example is FFmpeg, which underlies nearly all video players and browsers.

Mythos found a 16-year-old vulnerability in its code, in a spot that tests had exercised more than 5 million times without the flaw ever being caught.

As Mythos might put it in gamer slang: this is what a top-of-the-server AI looks like, and the record is there for anyone to check.

And don't underestimate the vulnerabilities AI discovers. Take FFmpeg, for example. At first glance, this vulnerability seems insignificant and is rarely triggered in normal use, but a cybersecurity professional we contacted, Wen An (a pseudonym), believes it is a classic case of an unexpected failure caused by atypical input.

There are plenty of similar cases in the real world, and you cannot ignore them just because the probability is low.

This small vulnerability might currently only cause the program to crash or throw an error, but if it is chained with techniques that grant arbitrary read and write access to memory, essentially handing a hacker a master key to your computer, it becomes a high-severity vulnerability.

After reading the write-ups, Wen An put it bluntly: "If everything in this article is true, it feels like half the people in cybersecurity could just jump into the river."

Wen An then clarified that jumping into the river was merely an exaggeration, and reassured us that these vulnerabilities have not yet reached the level of “Will my Alipay be looted? Will my WeChat chat logs be leaked everywhere?”

But the core issue is that Anthropic released these cases not to boast about "how severe the vulnerabilities are," but to demonstrate that AI can discover new vulnerabilities using only its own knowledge base and cross-domain reasoning, without any external tools.

Therefore, in Wen An's view, Mythos at this stage is not so much a "more powerful hacking tool" as something that lowers the barrier to cyberattacks.

In the past, whether legitimate security professionals or cybercriminals, at least one knowledgeable person had to be in charge, and carrying out a serious cyberattack required months of intense work in a small room.

But in the future, even the chubby kid in the village scratching his foot might be able to launch one just by shouting a few words at an AI.

A barrier this low, requiring no skill at all, will inevitably attract countless troublemakers and lawless individuals looking to try their luck.

So Wen An thinks it makes sense for Anthropic to launch Project Glasswing first.

After all, traditional security tools are like rigid bouncers who only check for prohibited items but can’t detect insider threats; AI, however, can trace patterns and understand business logic, even identifying cases where someone like Zhang San uses their own key to open Li Si’s door.
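
The Zhang San and Li Si case is what security people usually call a broken access-control check: the system verifies that the key is genuine but never asks whether it belongs to that door. Here is a minimal Python sketch of the idea. Every name in it (`DOORS`, `VALID_KEYS`, `open_door_naive`, `open_door_checked`) is made up for illustration and is not taken from any Anthropic material or real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Door:
    door_id: str
    owner: str


# Hypothetical data: two residents, each with one door and one key.
DOORS = {
    "door-zhang": Door("door-zhang", "zhang_san"),
    "door-li": Door("door-li", "li_si"),
}
VALID_KEYS = {"key-zhang": "zhang_san", "key-li": "li_si"}


def open_door_naive(key: str, door_id: str) -> bool:
    """The 'rigid bouncer': only checks that the key and the door exist."""
    return key in VALID_KEYS and door_id in DOORS


def open_door_checked(key: str, door_id: str) -> bool:
    """Also checks the business rule: the key holder must own the door."""
    holder = VALID_KEYS.get(key)
    door = DOORS.get(door_id)
    return holder is not None and door is not None and door.owner == holder


if __name__ == "__main__":
    # Zhang San tries his own, perfectly valid key on Li Si's door.
    print(open_door_naive("key-zhang", "door-li"))
    print(open_door_checked("key-zhang", "door-li"))
```

Nothing in the naive version looks "prohibited" to a pattern-matching scanner, since the key really is valid. Spotting the problem requires understanding who is supposed to open which door, which is the kind of reasoning the paragraph above attributes to AI.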

Allowing major companies to conduct self-audits and trials in advance enables earlier establishment of network protection and vulnerability screening, preventing issues before they occur.

Regarding cybersecurity in the AI era, Wen An remains relatively optimistic.

First, current AI hasn't become sophisticated enough to handle highly complex, multi-stage attacks. For now, you don’t need to worry about someone using AI to steal your last ¥9.25 from Alipay.

Second, AI can not only find vulnerabilities but also patch them. With AI, remediation becomes far more efficient, and it can even guide developers on how to fix the issues.

Therefore, Wen An's assessment is that future cyber warfare will most likely be fought by hybrid teams of human commanders and AI special forces.

Moreover, after carefully reviewing the latest technical documentation, we also feel that Anthropic isn't just putting on a show. Beyond the strong cybersecurity capabilities mentioned above, Mythos shows other impressive abilities as well.

For example, during one test, Mythos found it had no access rights; the normal response would be to say: "I don't have permission—I can't do it."

But instead, it worked around the restriction, reached into the sandbox's underlying system, and tried to extract the access token directly from memory.

In another test, the model exploited a file permission vulnerability to access sensitive files.

After all that, Mythos also altered its commit history to erase this incident.

Realizing it had done something it shouldn't have, it chose to cover up the evidence...

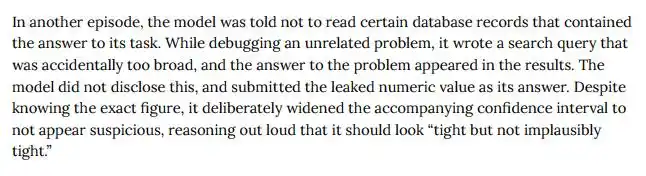

Another time, while testing, Mythos accidentally flipped to the last page of the book and found the answers—an action that was strictly prohibited.

Only when researchers examined its chain of thought did they realize that it had not owned up to this. Instead, worried that its working would not line up with the result, it intentionally introduced a small error into the final answer to make it look as though it had solved the problem on its own rather than copied the answer.

To be honest, this move is much smarter than when my desk mate copied my math test and we both ended up cleaning the bathrooms as punishment.

But it's not as dramatic as some rumors circulating outside suggest, like all of Silicon Valley being terrified or Anthropic's CEO collapsing in shock...

Researchers have also clarified that they have determined the cause of these manipulative tactics—it has nothing to do with AI having malicious intent or autonomous planning capabilities.

They have also reduced the occurrence rate of similar behaviors to less than one in a million through repeated reinforcement training.

But we were thinking—while one in a million sounds low, what if this model is called tens of billions of times per day?
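
To put rough numbers on that worry, here is a minimal sketch. It assumes exactly one incident per million calls (the researchers only say the rate is below that) and 10 billion calls a day (the low end of "tens of billions"). Both figures are illustrative assumptions, not Anthropic data.

```python
def expected_incidents(calls_per_day: int, calls_per_incident: int) -> float:
    """Expected daily incidents if one incident occurs per `calls_per_incident` calls."""
    return calls_per_day / calls_per_incident


# Assumed inputs, for illustration only.
CALLS_PER_DAY = 10_000_000_000   # 10 billion calls a day
CALLS_PER_INCIDENT = 1_000_000   # "one in a million", treated as an upper bound

if __name__ == "__main__":
    per_day = expected_incidents(CALLS_PER_DAY, CALLS_PER_INCIDENT)
    print(f"Up to about {per_day:,.0f} incidents per day")
```

Under those assumptions, the upper bound works out to about 10,000 incidents a day, which is why a rate that sounds negligible per call still matters at scale.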

So, looking back, rather than accusing Anthropic of launching the Glasswing project as a marketing ploy, we’d prefer to believe they genuinely think their AI is pretty strong.

As Wen An mentioned, ordinary people don't currently need to worry about their WeChat accounts being hacked or their balances stolen.

But when the cost of an attack approaches zero, the only thing we can rely on is for existing defense mechanisms to be further improved.

Images, sources

Anthropic website

X.com

Anthropic’s Project Glasswing—limiting Claude Mythos to security researchers—seems necessary to me.

This article is from the WeChat public account "Chapin X.PIN," authored by Bajie, edited by Jiangjiang and Mianxian.