Do androids dream of electric sheep?

Screenshot from the movie Blade Runner

In 1968, when Philip K. Dick, author of the novel that inspired the sci-fi film Blade Runner, typed out this abstract and forward-thinking question on his typewriter, he likely never imagined that more than half a century later, tech giants in Silicon Valley would solemnly offer an answer.

Yes. Not only can they dream of electric sheep, they can also visualize their dreams.

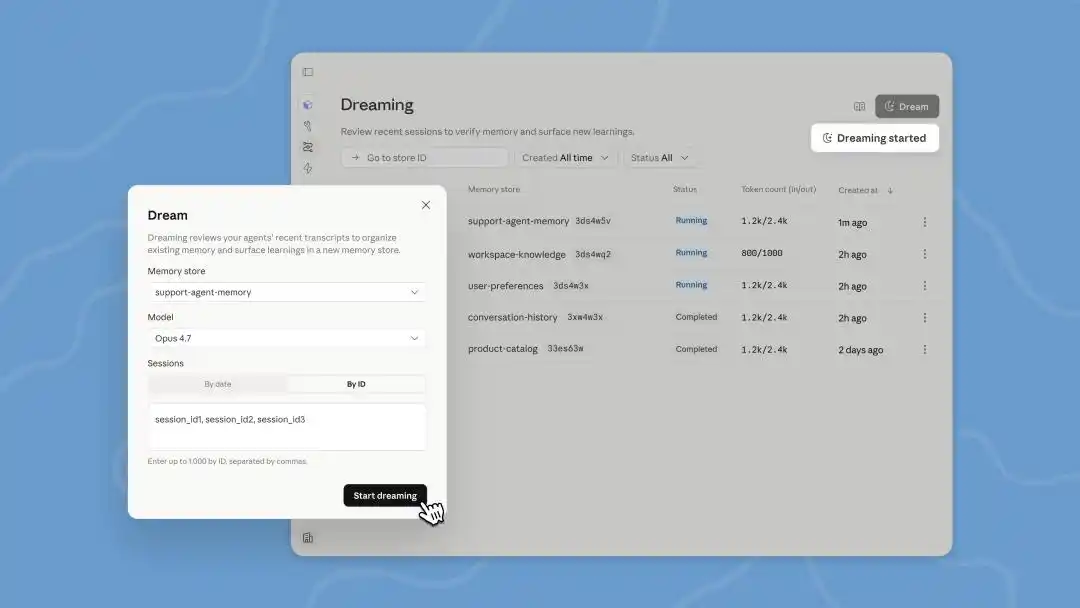

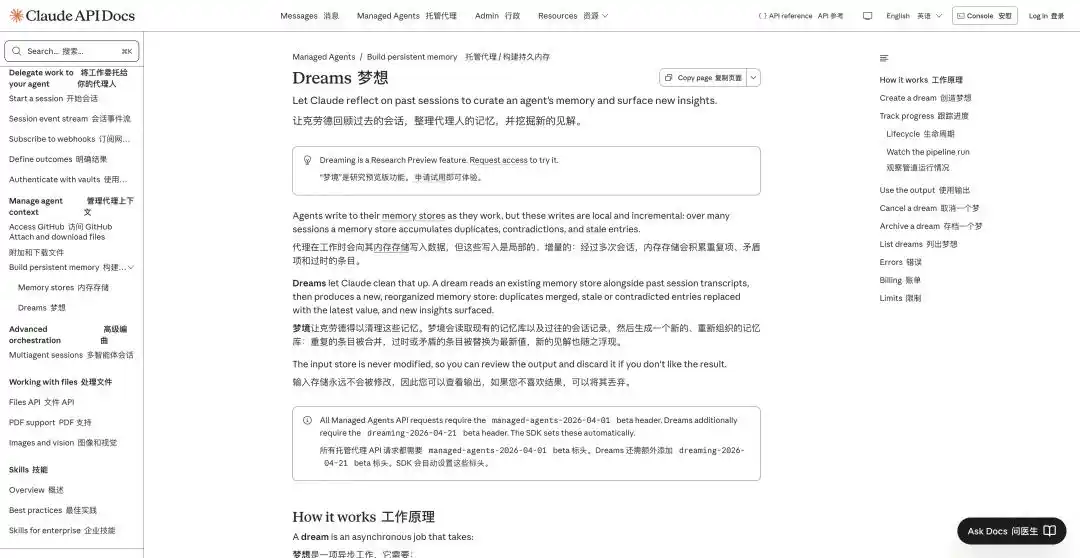

Yesterday, Anthropic unveiled a suite of new features for its agent-building platform, Managed Agents, at a developer conference in San Francisco: enhanced memory, result output, multi-agent collaboration, and "dreaming."

According to Anthropic itself, “memory and dreaming together form a robust, self-improving agent memory system.”

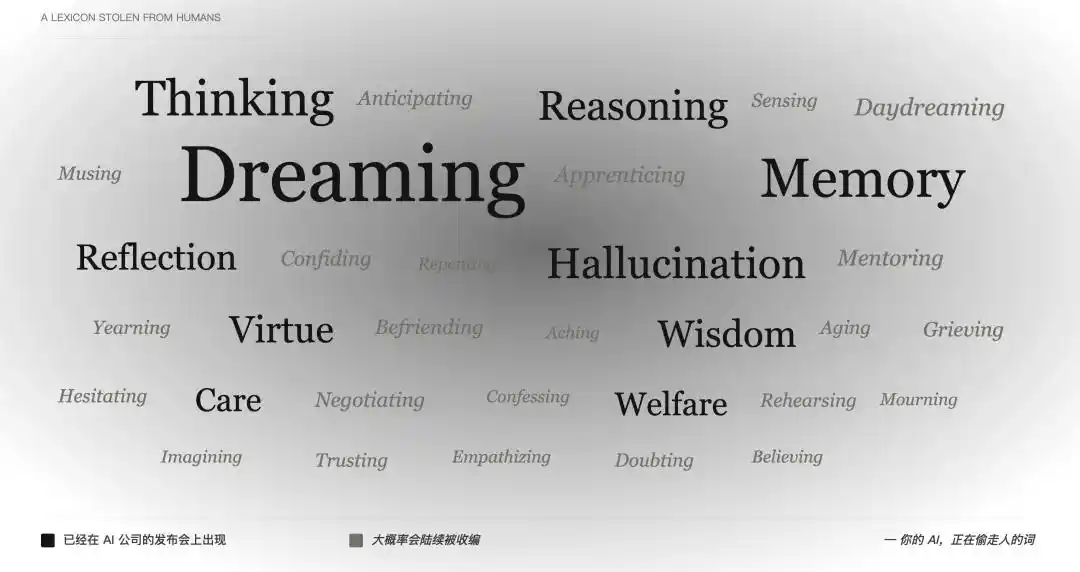

First memory, now dreams. Readers who don’t follow the AI field closely probably have plenty of questions: when did these human terms start being applied to AI so casually?

Back in 2024, when OpenAI launched the o1 series—“a suite of AI models designed to spend more time thinking before responding”—the word “thinking” was used so naturally that no one paused to ask: how can a program that statistically predicts the next token legitimately be called thinking?

Then came reasoning, memory, reflection, and imagination: capabilities once thought uniquely human, introduced one by one on the product launch stage.

Screenshots from the movie "Paprika" exploring dreams

“Thinking” can still be interpreted as a metaphor, and “memory” can barely pass as an extension of technical jargon—but “dreaming” really goes too far. For thousands of years, humanities disciplines like literature, history, and philosophy have failed to fully clarify it, yet AI companies now boldly claim: “We’ve not only built machines that think, we’ve built machines that dream.”

What is dreaming? Is there really no other engineering term that can precisely describe this phenomenon?

Even AI needs to pay to dream.

During the Claude Code leak incident, netizens discovered that Anthropic was preparing a feature called Auto Dreaming. At the time, many wondered: could AI, like humans, need sleep and adequate rest to become more focused and intelligent?

But once you understand how current AI agents work, you'll see that so-called "dreaming" is essentially automated, offline batch processing of logs.

AI agents are now adept at handling complex, multi-step tasks, such as “Help me research the latest financial reports of these five competitors and organize them into a table.” During this process, the agent must navigate between different websites, read multiple documents, invoke various tools, and may even encounter anti-scraping mechanisms that require retries.

By the time this long and complex series of online tasks is complete, the agent’s backend has generated a vast volume of operational logs.

Image generated by AI

Anthropic’s “dreaming” feature allows the agent to revisit and reorganize its historical records during idle time, identifying patterns—such as “every time this popup appears, clicking the top-right corner closes it”—to optimize future action paths.

“Memory” captures what is learned during work, while “dreaming” refines these memories between conversations and shares them across different agents.

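In simple terms, this is a self-correction mechanism driven by historical data. It is loosely analogous to reinforcement learning, although what gets refined here is the agent's stored memory rather than the model itself.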
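To make the "offline log batch processing" reading concrete, here is a minimal sketch of what such a pass could look like. It is not Anthropic's implementation: the log fields (`situation`, `action`, `success`), the `dream` function, and both thresholds are hypothetical, chosen only to illustrate how recurring patterns in past logs might be distilled into memory notes.

```python
from collections import defaultdict


def dream(logs, min_support=3, min_success_rate=0.8):
    """Distill recurring (situation, action) pairs from past logs into memory notes.

    All field names and thresholds are illustrative assumptions.
    """
    # (situation, action) -> [successes, attempts]
    stats = defaultdict(lambda: [0, 0])
    for entry in logs:
        key = (entry["situation"], entry["action"])
        stats[key][1] += 1
        if entry["success"]:
            stats[key][0] += 1

    memory = []
    for (situation, action), (wins, tries) in sorted(stats.items()):
        # Keep only patterns seen often enough and that usually worked.
        if tries >= min_support and wins / tries >= min_success_rate:
            memory.append(f"When {situation}: {action}")
    return memory


# Hypothetical log entries from earlier sessions.
logs = [
    {"situation": "cookie popup appears",
     "action": "click top-right close button", "success": True},
] * 4 + [
    {"situation": "cookie popup appears", "action": "press Escape", "success": False},
    {"situation": "rate limit hit", "action": "retry after backoff", "success": True},
]

print(dream(logs))
```

Only the popup rule survives in this run, because the other two patterns were each seen once. A real system would presumably use a model to summarize free-form transcripts rather than counting structured fields, but the shape is the same: read old records in bulk, keep what recurs, discard the rest.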

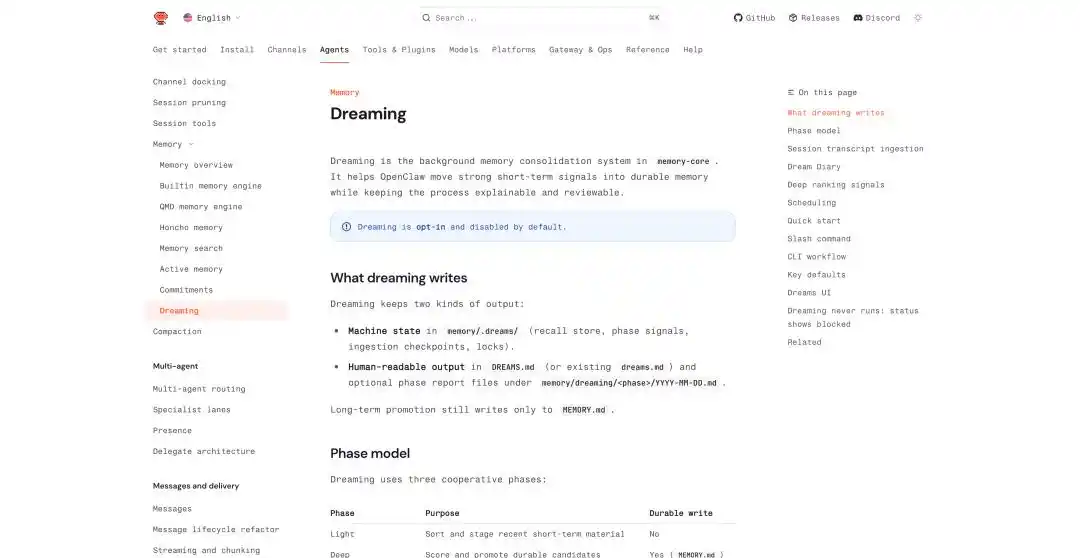

Dreams introduction: https://platform.claude.com/docs/en/managed-agents/dreams

At this developer conference, Dreams in Managed Agents was updated. It is a background processing task that must be triggered manually: Claude can read up to 100 sessions at once and generate a brand-new memory, which we review before deciding whether to apply it.

By contrast, AutoDream, which had earlier launched quietly in Claude Code, checks in the background after each conversation whether the agent should "dream," and by default does so once every 24 hours.

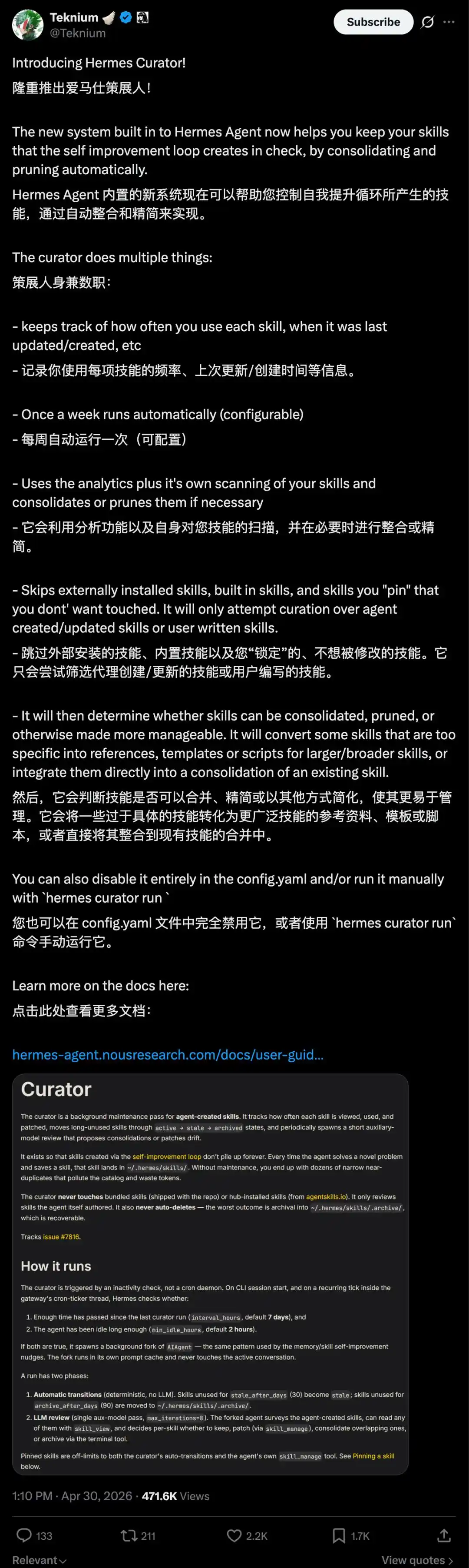

Hermes Agent also has a feature similar to dreaming. Its core strength lies in its ability to self-learn and evolve. It does more than automatically extract insights from past tasks and store them in memory files: a feature called Curator can also organize these distilled guides into Skills.

These Skills are scored, duplicate ones are merged, and rarely used ones are automatically archived, with lifecycle states such as active, stale, and archived. We can also pin important Skills to prevent them from being automatically removed by the system.
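The lifecycle described above can be sketched in a few lines. This is not Hermes Agent's code: the `Skill` class, the `curate` function, and the day thresholds are hypothetical, and scoring and duplicate merging are left out. The state names (active, stale, archived) and the pin behavior come from the description above.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    days_since_used: int
    pinned: bool = False
    state: str = "active"


def curate(skills, stale_after=30, archive_after=90):
    """Update each skill's lifecycle state based on how recently it was used.

    Thresholds are illustrative assumptions; pinned skills are never demoted.
    """
    for skill in skills:
        if skill.pinned:
            skill.state = "active"
        elif skill.days_since_used >= archive_after:
            skill.state = "archived"
        elif skill.days_since_used >= stale_after:
            skill.state = "stale"
        else:
            skill.state = "active"
    return skills


skills = curate([
    Skill("close-cookie-popup", days_since_used=2),
    Skill("parse-quarterly-report", days_since_used=45),
    Skill("legacy-login-flow", days_since_used=200),
    Skill("company-style-guide", days_since_used=200, pinned=True),
])
for skill in skills:
    print(skill.name, skill.state)
```

The pinned skill stays active despite being as old as the archived one, which is the point of pinning: a human overrides the automatic cleanup.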

OpenClaw has also added related features in recent updates, such as persistent memory across conversations, scheduled task execution, isolated execution of sub-agents, and a feature plainly named "Dreaming."

OpenClaw's Dreaming: https://docs.openclaw.ai/concepts/dreaming

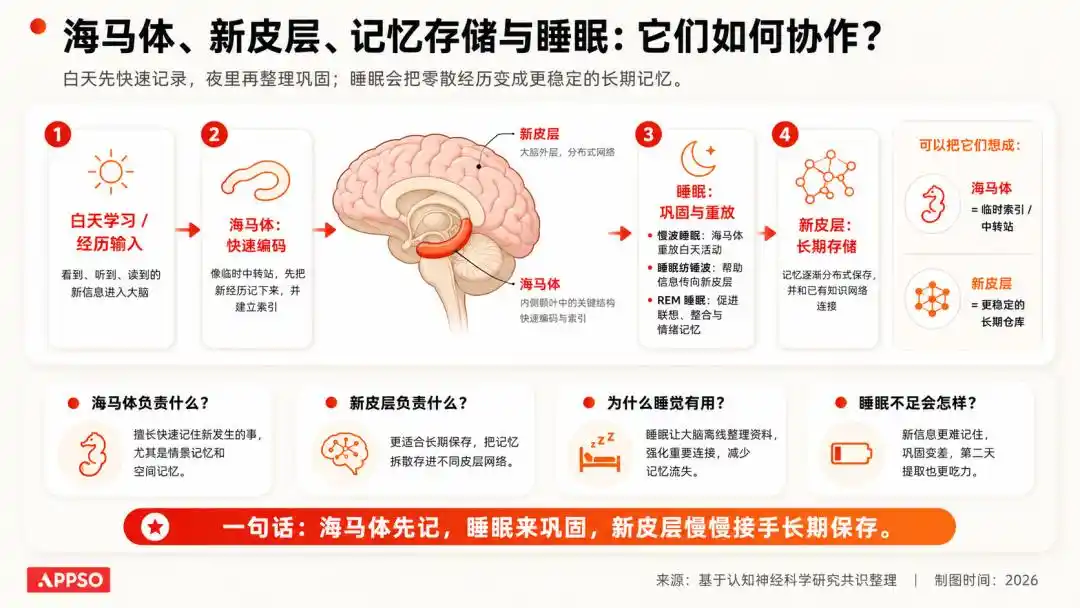

OpenClaw's dreaming mechanism divides the process into three stages: light, REM, and deep. The first two stages handle organization, reflection, and theme synthesis, while the deep stage is where content is actually written to long-term memory in MEMORY.md.

During the deep stage, six weighted signals determine whether a piece of information is written to long-term memory: frequency, relevance, query diversity, timeliness, cross-day repetition, and conceptual richness.
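A weighted decision over six signals can be sketched as a simple weighted sum. The signal names follow the list above, but everything else is an assumption: the weights, the 0-to-1 signal scale, the threshold, and the function names are hypothetical and are not taken from OpenClaw's documentation.

```python
# Hypothetical weights (they sum to 1.0); OpenClaw's real values may differ.
WEIGHTS = {
    "frequency": 0.20,
    "relevance": 0.30,
    "query_diversity": 0.15,
    "timeliness": 0.15,
    "cross_day_repetition": 0.10,
    "conceptual_richness": 0.10,
}


def consolidation_score(signals):
    """Weighted sum of signal values, each assumed to lie in [0, 1]."""
    return sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())


def should_promote(signals, threshold=0.6):
    """Decide whether a candidate memory is written to long-term storage."""
    return consolidation_score(signals) >= threshold


# A candidate that comes up often and is highly relevant.
office_route = {
    "frequency": 0.9, "relevance": 0.9, "query_diversity": 0.6,
    "timeliness": 0.8, "cross_day_repetition": 0.9, "conceptual_richness": 0.4,
}
# A one-off detail that never came up again.
car_color = {"frequency": 0.1, "relevance": 0.1, "timeliness": 0.5}

print(should_promote(office_route), should_promote(car_color))
```

The design choice worth noticing is that no single signal decides the outcome. Something mentioned once can still be kept if it scores well elsewhere, and something repeated often can still be dropped if it is irrelevant.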

Image generated by AI

Writing to long-term memory produces two kinds of output: machine-readable state stored in memory/.dreams/, and a human-readable record written to DREAMS.md and to stage-specific reports.

Additionally, Dreaming can be set to run automatically at scheduled times, defaulting to a full cycle each day at 3:00 AM in the order of light → REM → deep.

In addition to dream outputs, OpenClaw maintains a document called Dream Diary, which the system automatically generates to narratively document the memory organization process, emphasizing transparency and auditability rather than a black-box database.

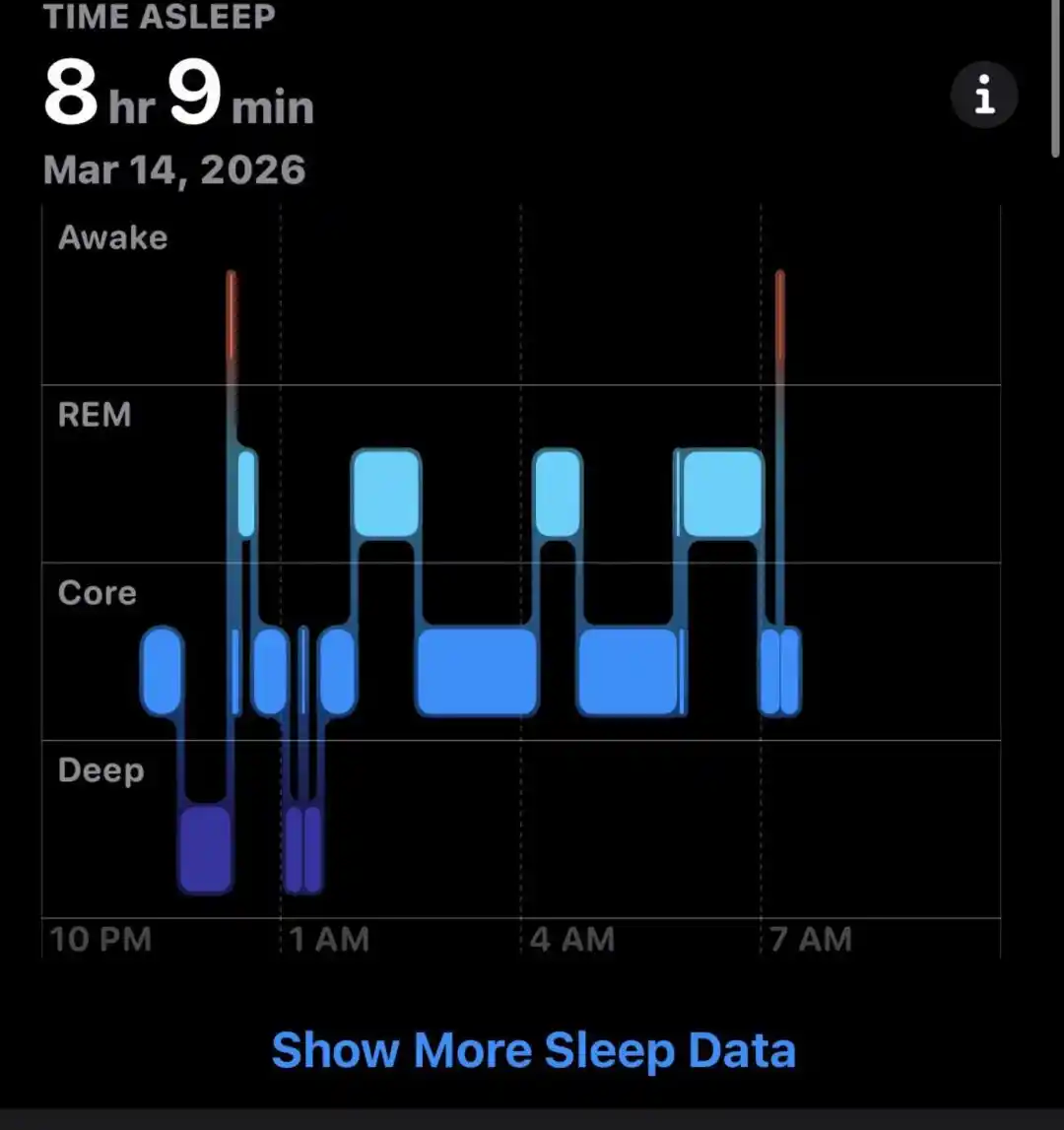

In neuroscience, there is a well-established understanding: information acquired during the day first enters a temporary storage system; during sleep, the brain replays, consolidates, and clears this information, retaining what is important and discarding what is meaningless.

Image generated by AI

We won’t remember the color of every car on our commute yesterday, but we’ll remember how to get to the office.

These dreams sound just like human dreams; if we must find a difference, it’s that Claude consumes our tokens while dreaming.

But neither Anthropic nor OpenClaw chose names like "session-based optimization" or "post-task tuning," terms that lean more toward engineering.

After all, when those complex names are directly transformed into “dreaming,” what we feel is no longer just software functionality, but rather a “digital life with inner activity.”

AI's memory consists of fragmented context.

Since "dreaming" has been mentioned, its prerequisite—memory—must also be addressed.

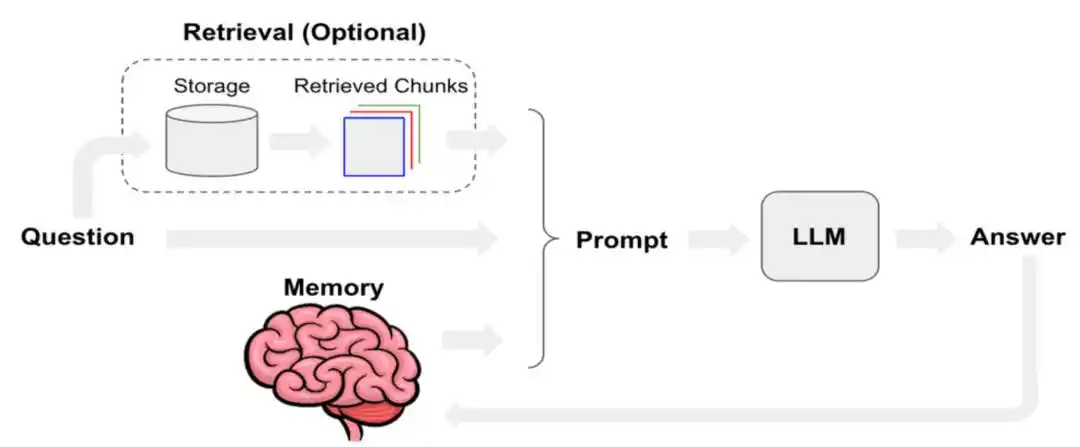

Recently, the hottest terms in the AI community have shifted from prompt engineering to context engineering, skill engineering, and harness engineering. Whatever the label, context engineering remains the most valuable of them today.

System prompts, user inputs, short-term conversations, long-term memory, retrieved documents, outputs from tool and skill calls, and the current user state—when layered together, these constitute the actual "context" used by the agent.

Enabling agents to remember more and retain more useful information has long been a challenge.

Last year, Manus published a technical blog detailing how it approaches context engineering. It identified the KV-cache hit rate as the single most important metric for an AI agent in production. It also advocated "masking" tools rather than "removing" them when constraining tool calls, and treating the file system as the ultimate context.

To understand the KV cache (key-value cache), imagine a large model as an obsessively meticulous reader that can only process one token at a time.

When processing a sentence, it computes a Key (K) and a Value (V) vector for every token it has seen. To avoid recalculating them from scratch at each step, it stores these (K, V) pairs; this store is the KV cache.

The KV Cache (Key-Value Cache) is a fundamental acceleration technique used by large models during text generation to trade space for time. By caching previous computations, the model avoids recalculating all prior tokens when predicting the next word. Image generated by AI.

As long as the conversation continues, the KV cache keeps growing. For a 70B-parameter model with a context length of 128k, the KV cache alone can consume up to 64 GB of GPU memory, depending on the attention configuration and numeric precision.

This is also why most current models cap their context windows at a few million tokens at most.
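The growth is easy to estimate with back-of-the-envelope arithmetic: each token stores one K and one V vector per layer. The sketch below assumes a 70B-class configuration (80 layers, head dimension 128, 16-bit values); these parameters and the function name are assumptions for illustration. The result depends heavily on them, so it will not necessarily match the figure quoted above.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   bytes_per_value=2, batch_size=1):
    """Estimate KV cache size in bytes.

    The leading factor of 2 accounts for storing both a K and a V vector
    per token, per layer, per KV head.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


GIB = 1024 ** 3
SEQ_LEN = 128 * 1024  # 128k tokens

# Assumed configuration: 80 layers, head_dim 128, fp16 (2 bytes per value).
# With grouped-query attention, only 8 KV heads are cached.
gqa = kv_cache_bytes(n_layers=80, n_kv_heads=8, head_dim=128, seq_len=SEQ_LEN)
# With full multi-head attention, all 64 heads are cached.
mha = kv_cache_bytes(n_layers=80, n_kv_heads=64, head_dim=128, seq_len=SEQ_LEN)

print(f"GQA (8 KV heads):  {gqa / GIB:.0f} GiB")
print(f"MHA (64 KV heads): {mha / GIB:.0f} GiB")
```

Under these assumptions the cache for a single 128k-token sequence runs to tens of gibibytes with grouped-query attention and hundreds without it, and it scales linearly with both context length and batch size.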

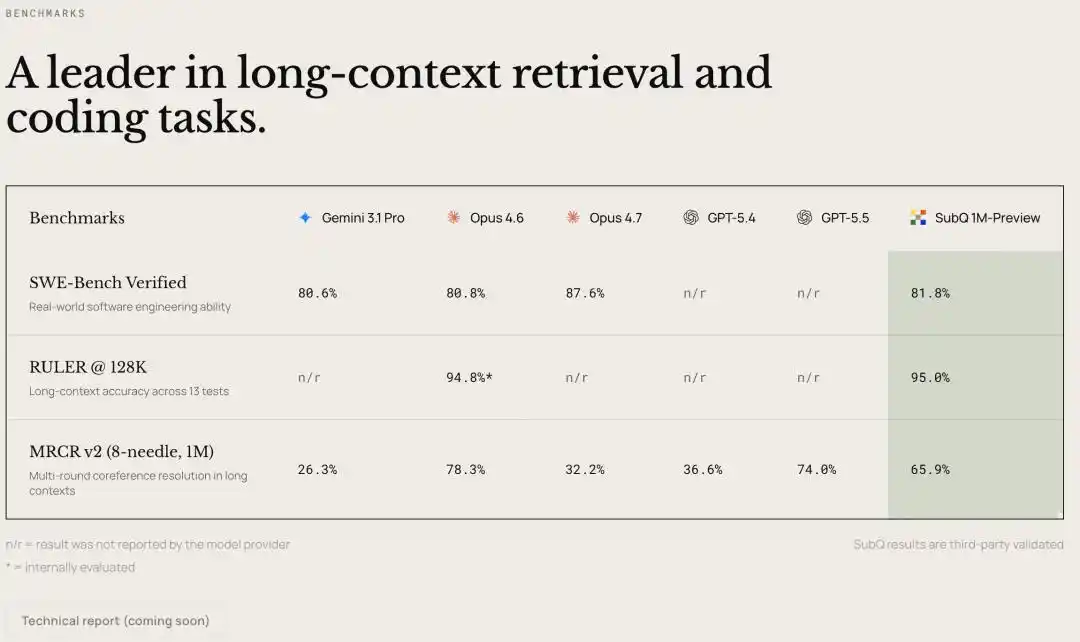

Yesterday, Subquadratic, a new company that raised $29 million in seed funding, launched its new SubQ model on X, highlighting longer context windows.

Subquadratic claims that SubQ supports a context window of up to 12 million tokens, which would be the largest among all large models currently available.

Although there is no technical paper or model documentation yet, the introductory video mentions that SubQ’s core technical approach shifts from the "dense attention" of traditional Transformers to a "sub-quadratic / linear scaling" architecture with sparse attention. This new architecture aims to address the issue where computational costs explode as context length increases.
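Since no technical details are public, the following is only a generic illustration of why dense attention gets expensive and why sparse patterns help. It counts query-key pairs for dense attention against a sliding-window pattern, which is one well-known form of sparse attention and not necessarily what SubQ uses. The window size and function names are hypothetical, and the resulting ratios are not comparable to Subquadratic's reported figures.

```python
def dense_pairs(n):
    """Dense attention: every token attends to every token, so n * n pairs."""
    return n * n


def windowed_pairs(n, window):
    """Sliding-window attention: each token attends to at most `window` tokens."""
    return n * min(n, window)


WINDOW = 4096  # hypothetical window size

for n in (128_000, 1_000_000, 12_000_000):
    ratio = dense_pairs(n) / windowed_pairs(n, WINDOW)
    print(f"{n:>12,} tokens: dense needs {ratio:,.0f}x more pairs")
```

The dense count grows with the square of the context length while the windowed count grows linearly, so the gap between them widens as the context gets longer. That widening gap is the general argument behind any sub-quadratic design.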

The reported results are striking: at 1 million tokens, SubQ is said to be over 50 times faster and over 50 times cheaper; at 12 million tokens, its compute requirements are said to be nearly 1,000 times lower than those of state-of-the-art models.

On the RULER 128K long-context benchmark, Subquadratic reports that SubQ achieves 95% accuracy at a cost of $8, compared to Claude Opus’s 94% accuracy at approximately $2,600, representing a cost reduction of about 300 times.

Either expand the context window, or have the model learn to dream and discard some information on its own.

This is why agent products like Anthropic's must now introduce Dreaming. When context windows are limited, a smarter AI cannot simply cram in more content; it has to be selective about what it keeps.

Admitting that machines are just machines is harder than it seems.

By understanding AI's mechanisms of dreaming and memory, we may gain insight into its relationship with human activities.

But put together all the terms AI companies have borrowed for machines (OpenAI’s “thinking,” the industry-standard “memory” and “hallucination,” Anthropic’s recent “dreaming,” and the virtues and wisdom in Anthropic’s Constitution), and we can see that AI companies are not just selling products. They are laying claim to the words that make up the concept of “human.” Each time they co-opt a term, the boundary between machine and human grows slightly more blurred.

Language shapes expectations, expectations shape tolerance, and tolerance determines how much we are willing to entrust to it. It’s a long chain, but it begins with those harmless words at the launch event.

A more subtle layer of impact is the allocation of responsibility. When tools are described as entities with “thought,” “memory,” or “values,” we naturally hold them accountable as independent “agents,” implying that the AI itself needs to be “taught,” “debugged,” or “calibrated.”

What should truly be questioned is the company that deployed this program into our workflow and the product team that wrote the word “dreaming.” Change the word, and the people sitting in the defendant’s seat change too.

As we watch a machine that can "think," "remember," and now even "dream," we instinctively begin to believe that something lies within it. For to acknowledge it as merely a machine would dissolve the experience of "conversing with a thinking entity" and reduce it back to a cold, mechanical relationship.

Daydream Feature Overview | Image Generated by AI

Here is my prediction: after Dreaming, which processes the past, AI companies will launch Daydreaming, a feature that simulates the future.

Essentially, daydreaming, or mind-wandering, would let an agent in its active state spend a small share of idle computing power on exploratory generation alongside its current project, preparing for potential future tasks.

This article is from the WeChat official account "APPSO," authored by APPSO, discovering tomorrow's products.