While ordinary people are still studying the "strongest prompt formulas," top Silicon Valley labs have already turned AI infrastructure into a production line.

Author and source: AI World

Are you still repeatedly tweaking prompts in ChatGPT’s chat box?

Recently, an X user posted a tweet beginning with an exclamation: “The Claude Code project template, secretly used by major tech giants, has been leaked!”

This is no longer about writing prompts. This is AI engineering infrastructure.

The entire strategy revolves around a single file, "CLAUDE.md," whose core principles consist of only three rules:

Every time Claude makes a mistake → you add a rule; every time you repeat yourself → you add a workflow; every time a bug occurs → you add a safeguard.

This process is intended to transform project experience into long-term context and automated constraints that are read each time the system starts.

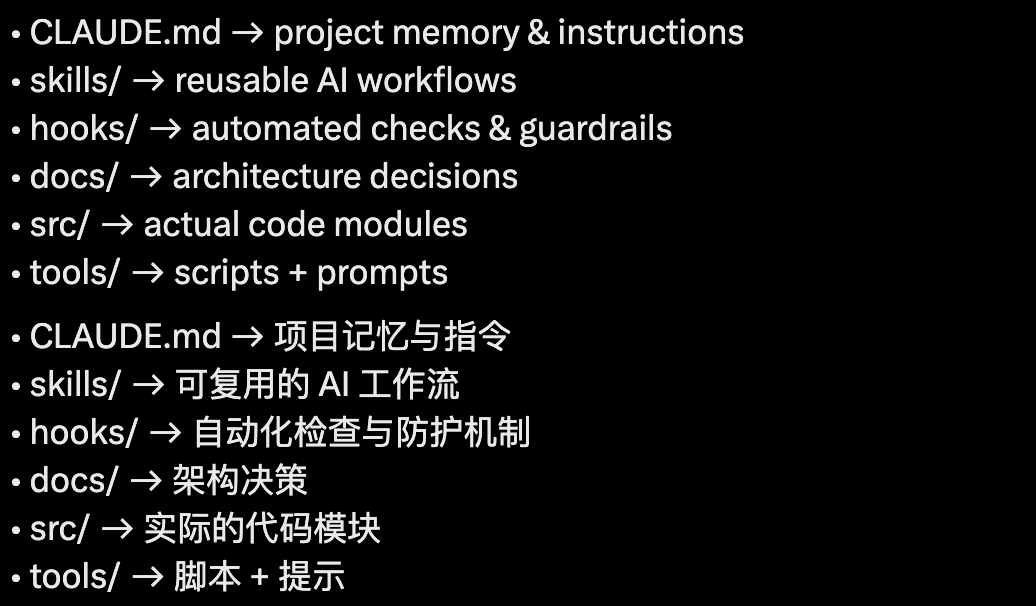

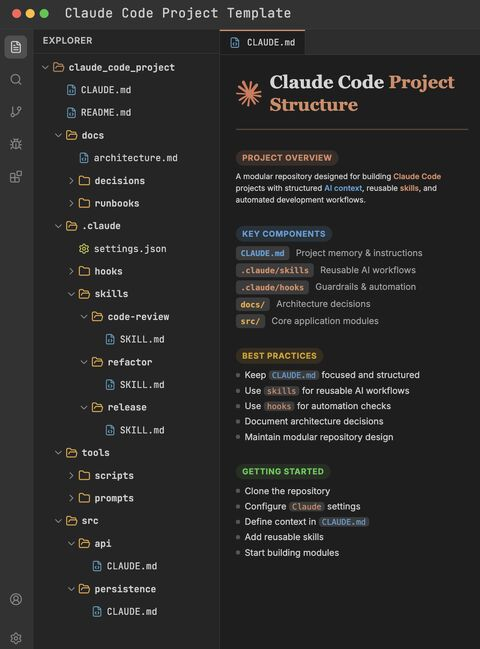

The entire architecture is like the organizational structure of an AI company: CLAUDE.md is the onboarding manual, skills/ is the standard operating procedure, hooks/ is the compliance department, docs/ is the company charter, tools/ is the logistics team, and src/ is the actual business unit doing the work.
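The layout described above can be sketched as a small scaffolding script. This is only a minimal sketch: the directory names come from the article, while the function name `scaffold`, the role notes, and the placeholder text written into CLAUDE.md are hypothetical choices made for illustration.

```python
from pathlib import Path
import tempfile

# Directory names follow the article; the role notes paraphrase its analogy.
LAYOUT = {
    ".claude/skills": "standard operating procedures (reusable workflows)",
    ".claude/hooks": "compliance department (deterministic guardrails)",
    "docs/decisions": "company charter (architecture decision records)",
    "tools": "logistics team (helper scripts)",
    "src": "business unit (the actual code)",
}


def scaffold(root: Path) -> list[str]:
    """Create the layout under root and return the relative paths it ensured."""
    ensured = []
    for rel in LAYOUT:
        (root / rel).mkdir(parents=True, exist_ok=True)
        ensured.append(rel)
    manual = root / "CLAUDE.md"
    if not manual.exists():  # never overwrite an existing onboarding manual
        manual.write_text(
            "# Project rules\n\n(placeholder: add rules here)\n", encoding="utf-8"
        )
    ensured.append("CLAUDE.md")
    return ensured


if __name__ == "__main__":
    # Demonstrate in a throwaway directory so nothing real is touched.
    with tempfile.TemporaryDirectory() as tmp:
        for path in scaffold(Path(tmp)):
            print(path)
```

Running it a second time is harmless: existing directories are left alone and an existing CLAUDE.md is not overwritten.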

You are no longer chatting with an AI; you are building an AI that understands your code repository.

The craziest part is that you only need to set it up once—Claude will automatically review your code, refactor it according to instructions, enforce architecture rules, write release notes, run workflows from skills, and remember past mistakes.

And it gets smarter the more you use it.

Most people open ChatGPT, write a prompt, copy and paste the result, and repeat; with this approach, you simply open the terminal and run a prepackaged skill.

It’s like having a team of AI colleagues working in your own codebase.

Behind this tweet is a subtle signal that an era is quietly coming to an end—most people haven’t realized it yet.

A “leak screenshot” that isn’t actually a leak exposes a truth

The screenshot shared by @ai_rohitt shows the standard Claude Code setup publicly recommended in Anthropic's official documentation.

CLAUDE.md is the project memory file that Claude Code automatically reads at the start of each session.

.claude/skills/ and .claude/hooks/ are officially supported extension mechanisms.

These are publicly established practices that the community has been discussing for months, not some stolen “internal template.”

But the fact that seasoned developers are sharing it of their own accord suggests it has won the approval of people who use Claude every day.

Many people may have realized only in the past couple of days that Claude Code can be used this way.

Meanwhile, top teams in Silicon Valley have turned this into a production line.

The first example is the OpenAI Frontier team.

In OpenAI's officially disclosed Frontier team experiments, an internal beta starting from an empty repository generated approximately 1 million lines of code and around 1,500 pull requests over five months; the team expanded from three to seven members, with no direct human coding involved.

In a subsequent interview, team leader Ryan Lopopolo further noted that this workflow has nearly reached its extreme form: "0 manual code, 0 manual review."

He argues that rather than conserving tokens, it is better to use the model's very high concurrency and low cost to replace humans' limited, expensive synchronous attention.

The second example is Minions, Stripe’s internal automated code agent system.

At Stripe, Minions generate and drive more than 1,300 merged PRs per week; all of the code is AI-generated but still reviewed by humans.

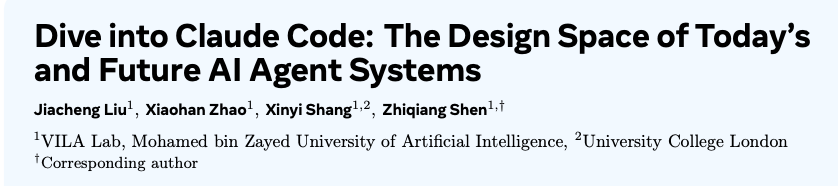

Here is another pair of numbers: 1.6% vs. 98.4%, from a paper published by VILA-Lab at Mohamed bin Zayed University of Artificial Intelligence.

https://arxiv.org/pdf/2604.14228

Researchers systematically analyzed the 512,000 lines of TypeScript source code in Claude Code v2.1.88 and concluded that only 1.6% consists of AI decision logic, while the remaining 98.4% comprises deterministic engineering infrastructure.

Specifically, these include permission gateways, context management, tool routing, and error recovery.

These numbers do not mean the model contributes only 1.6% of the capability. They show that for Claude Code as a product, most of the complexity lies not in the model itself but in the deterministic engineering infrastructure around it.
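To make the split concrete, the percentages can be converted into approximate line counts. This is back-of-the-envelope arithmetic using only the figures quoted above; the resulting counts are derived here for illustration and are not reported by the paper.

```python
TOTAL_LINES = 512_000      # TypeScript lines analyzed, as quoted above
AI_LOGIC_PER_MILLE = 16    # 1.6% expressed in tenths of a percent

# Integer arithmetic avoids floating-point rounding noise.
ai_logic_lines = TOTAL_LINES * AI_LOGIC_PER_MILLE // 1000
infrastructure_lines = TOTAL_LINES - ai_logic_lines

print(ai_logic_lines)        # 8192
print(infrastructure_lines)  # 503808
```

In other words, roughly 8,000 lines decide what the AI does, and about half a million lines exist to make those decisions safe to execute.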

The CLAUDE.md/skills/hooks structure in that image is an "entry-level infrastructure" that any ordinary developer can set up—it follows the same paradigm as OpenAI’s and Stripe’s production-grade architectures, just on a much smaller scale.

The secrets exposed by CLAUDE.md

Over the past three years, everyone has been asking, "When will GPT get smarter?" and "When will Claude release a new version?"

But teams that have successfully implemented AI programming in production are likely more concerned with how to make the AI remember its past mistakes, how to ensure the AI reviews the project’s architectural constraints before taking action, and how to have tools automatically prevent the AI from making errors.

CLAUDE.md is the vessel for all of this.

Anthropic's official definition is just one sentence:

A Markdown file placed in the project root directory that Claude Code automatically reads at the start of each session.

https://code.claude.com/docs/en/memory

It sounds simple, but it's the several layers built around it that make it truly powerful.

CLAUDE.md is the project's brain.

Architectural decisions, naming conventions, testing requirements, and all the pitfalls we've repeatedly encountered are compiled here. It’s the “employee handbook” that AI sees first every time it starts up.

.claude/skills/ are reusable workflows.

Boris Cherny, the creator of Claude Code, repeatedly emphasizes in the community: "If you do something more than once a day, turn it into a skill or command."

A skill is an executable methodology. Code reviews, generating commit messages, and writing release notes shouldn't involve manually typing prompts every day—they should be accomplished with a single skill invocation.
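As an illustration of turning a repeated task into a skill, the sketch below writes a skill file for release notes. It assumes a layout in which each skill lives at `.claude/skills/<name>/SKILL.md` with a short `name`/`description` front-matter block; treat that layout, the helper name `write_skill`, and the example steps as assumptions to verify against the official documentation rather than as the definitive format.

```python
from pathlib import Path
import tempfile


def write_skill(project_root: Path, name: str, description: str, steps: list[str]) -> Path:
    """Write a skill file and return its path (file layout is an assumption)."""
    skill_dir = project_root / ".claude" / "skills" / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    # Number the steps so the workflow reads as an ordered procedure.
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    body = (
        "---\n"
        f"name: {name}\n"
        f"description: {description}\n"
        "---\n\n"
        f"{numbered}\n"
    )
    path = skill_dir / "SKILL.md"
    path.write_text(body, encoding="utf-8")
    return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        skill = write_skill(
            Path(tmp),
            name="release-notes",  # hypothetical skill name
            description="Draft release notes from merged changes.",
            steps=[
                "List the changes merged since the last tag.",
                "Group them into features, fixes, and breaking changes.",
                "Write one plain-language line per change.",
            ],
        )
        print(skill.read_text(encoding="utf-8"))
```

The point of the design is that the procedure is written down once and versioned with the repository, instead of being retyped as a prompt each day.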

.claude/hooks/ is an automatic guardrail.

This is the most critical part. It does not rely on the AI to judge itself; instead, deterministic code blocks it before the AI can make a mistake. This is why we dare to let the AI run “unsupervised”—the boundaries for errors are locked down by hooks.
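The idea of a deterministic guardrail can be sketched as a small check that runs before a proposed shell command is executed. This is a minimal sketch under stated assumptions: the payload field names (`tool_name`, `tool_input`, `command`), the convention that exit code 2 means "block", and the deny-list patterns are all illustrative and should be checked against the hooks documentation before use.

```python
import json
import re
import sys

# Hypothetical deny-list; a real project would tailor these patterns.
BLOCKED_PATTERNS = [
    (re.compile(r"\brm\s+-rf\s+/"), "Refusing recursive delete of an absolute path."),
    (re.compile(r"\bgit\s+push\b.*--force"), "Force-push is not allowed; open a PR instead."),
]


def check_tool_call(payload: dict) -> tuple[bool, str]:
    """Return (allowed, reason) for a proposed tool call."""
    if payload.get("tool_name") != "Bash":  # only shell commands are inspected here
        return True, ""
    command = payload.get("tool_input", {}).get("command", "")
    for pattern, reason in BLOCKED_PATTERNS:
        if pattern.search(command):
            return False, reason
    return True, ""


def run_hook(stdin_text: str) -> int:
    """Return an exit code: 0 to allow, 2 to block (assumed convention)."""
    allowed, reason = check_tool_call(json.loads(stdin_text))
    if not allowed:
        print(reason, file=sys.stderr)  # the reason is what the agent would read
        return 2
    return 0


if __name__ == "__main__":
    sample = json.dumps(
        {"tool_name": "Bash", "tool_input": {"command": "git push origin main --force"}}
    )
    print(run_hook(sample))
```

Wired in as a real hook, the script would instead end with `sys.exit(run_hook(sys.stdin.read()))`. Note that the decision is made by plain pattern matching, not by asking the model whether the command is safe.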

docs/decisions/ contains architecture decision records.

These records help the AI understand not just what the code is, but why it is that way.

This is the most easily overlooked piece, yet it offers the greatest leverage for AI collaboration.

The tools/ and src/ directories are the execution layer.

What’s truly noteworthy about this architecture isn’t that one developer created a neat directory, but that an increasing number of independent teams are converging on the same approach: placing models within a harness composed of context, tools, permissions, evaluation, and feedback loops.

You can already see many similar projects on GitHub:

rohitg00's awesome-claude-code-toolkit, diet103's claude-code-infrastructure-showcase, and affaan-m's everything-claude-code are all building engineered work environments for Claude Code around components such as agents, skills, hooks, rules, and MCP configs.

This shows that a truly mature AI programming workflow is not about relying solely on a more powerful model or a longer prompt, but about embedding the model within a reusable, controllable, recoverable, and auditable engineering system.

The specific directory structure varies slightly from one implementation to another.

OpenAI Lab's extreme experiment

On February 11, 2026, the OpenAI official blog published an article: “Harness Engineering: Leveraging Codex in an Agent-First World.”

https://openai.com/index/harness-engineering/

Anthropic has restructured the architecture of Claude Code around this concept; Martin Fowler’s website distills it into a formula: “Agent = Model + Harness.”

The word "harness" comes from equestrianism; it refers to the complete set of equipment for a horse, including reins, bridle, saddle, and headstall.

A horse can run fast and powerfully, but it doesn't know which way to go—the entire harness determines its direction.

The analogy to AI programming: the model itself is highly capable, but it doesn't know where to go in your codebase. The harness is the steering wheel, brakes, and navigation system you build for it.

The OpenAI Frontier team's experiment with "1 million lines of code, zero human intervention" essentially takes the harness to its extreme.

Their key engineering practices include the following:

Strict hierarchical structure.

Dependencies flow unidirectionally from Types to Config, to Repo, to Service, to Runtime, to UI, and are enforced at the CI level by a linter. If an agent writes code that violates the layering rules, the build fails immediately.
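A layering rule of this kind can be sketched as a check over import edges. The sketch assumes one interpretation of the rule, namely that a module may import only from its own layer or from layers earlier in the chain, and it assumes paths of the form `src/<layer>/...`; both the interpretation and the path convention are illustrative assumptions, not details confirmed by the article.

```python
# Layer order as listed in the article, lowest layer first.
LAYERS = ["types", "config", "repo", "service", "runtime", "ui"]
RANK = {name: index for index, name in enumerate(LAYERS)}


def layer_of(path: str) -> str:
    """Extract the layer from a path like 'src/<layer>/file.ts' (assumed convention)."""
    return path.split("/")[1]


def find_violations(edges: list[tuple[str, str]]) -> list[str]:
    """Return one message per import that reaches into a later layer."""
    violations = []
    for importer, imported in edges:
        src, dst = layer_of(importer), layer_of(imported)
        if RANK[dst] > RANK[src]:
            violations.append(
                f"{importer}: layer '{src}' must not import from layer '{dst}' ({imported})"
            )
    return violations


if __name__ == "__main__":
    edges = [
        ("src/ui/page.ts", "src/service/user.ts"),  # allowed: ui depends on service
        ("src/repo/db.ts", "src/ui/page.ts"),       # violation: repo depends on ui
    ]
    problems = find_violations(edges)
    for message in problems:
        print(message)
    # In CI, a non-empty list would fail the build.
    print("build fails" if problems else "build passes")
```

Because the check is mechanical, it behaves the same whether the offending import was written by a person or by an agent.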

The linter error messages themselves are repair instructions, which is the most counterintuitive detail.

In ordinary projects, lint errors say "violation detected" and are written for humans to read; in the OpenAI Frontier repository, lint errors say "use logger.info({event: 'name', …data}) instead of console.log": direct, machine-readable instructions that agents can understand and act on.
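The difference between a complaint and a repair instruction can be sketched with a toy lint rule. The hint text is the example quoted above, reproduced as-is; the function name `lint_source`, the regular expression, and the `file:line:` prefix are hypothetical choices for illustration, not the team's actual linter.

```python
import re

# Matches calls such as console.log(...); a deliberately simple pattern.
CONSOLE_LOG = re.compile(r"\bconsole\.log\(")

# The message tells the agent what to write, not merely what is wrong.
REPAIR_HINT = "use logger.info({event: 'name', …data}) instead of console.log"


def lint_source(filename: str, source: str) -> list[str]:
    """Return one actionable message per offending line."""
    messages = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        if CONSOLE_LOG.search(line):
            messages.append(f"{filename}:{lineno}: {REPAIR_HINT}")
    return messages


if __name__ == "__main__":
    sample = "const user = load();\nconsole.log(user);\n"
    for message in lint_source("src/service/user.ts", sample):
        print(message)
```

An agent that reads this output knows both where the problem is and what the replacement should look like, so the fix requires no further lookup.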

The documentation serves as the single source of truth.

All architecture diagrams, execution plans, and design specifications live in the repository's docs/ directory. The agent needs no external knowledge base; everything is contained within the repo.

How effective is this approach?

LangChain kept the model unchanged and adjusted only the harness (system prompts, tools, middleware, and reasoning modes), raising its Terminal Bench 2.0 score from 52.8 to 66.5, a gain of 13.7 points.

What you can do today

Build a project brain for AI.

Back to the ordinary developer: if the paradigm has shifted, what can you do today as a regular engineer?

First, create a CLAUDE.md file in the root directory of your most important project.

Don't aim for perfection or length. Write down your team's architecture rules, naming conventions, testing requirements, and past pitfalls; you can have a working version in 10 minutes.

The next time the AI makes a mistake, don't just fix it by hand. Ask yourself: what is missing from CLAUDE.md?
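The habit of turning each mistake into a rule can be sketched as a tiny helper that appends a bullet to CLAUDE.md. This is a minimal sketch: the helper name `add_rule`, the "Lessons learned" heading, and the example rule are hypothetical, and the code assumes that section is the last one in the file.

```python
from pathlib import Path
import tempfile


def add_rule(claude_md: Path, rule: str, heading: str = "## Lessons learned") -> bool:
    """Append rule as a bullet under heading; return False if it is already there."""
    text = claude_md.read_text(encoding="utf-8") if claude_md.exists() else ""
    bullet = f"- {rule}"
    lines = text.splitlines()
    if bullet in lines:
        return False  # rule already recorded, nothing to do
    if text and not text.endswith("\n"):
        text += "\n"
    if heading not in lines:
        # Start the section; separate it from earlier content with a blank line.
        text += f"\n{heading}\n\n" if text else f"{heading}\n\n"
    text += bullet + "\n"  # assumes the lessons section is the last in the file
    claude_md.write_text(text, encoding="utf-8")
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        target = Path(tmp) / "CLAUDE.md"
        add_rule(target, "Run the test suite before proposing a commit.")
        add_rule(target, "Run the test suite before proposing a commit.")  # ignored
        print(target.read_text(encoding="utf-8"))
```

Skipping duplicates keeps the file short, which matters because the whole file is read at the start of every session.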

Second, turn daily repetitive tasks into skills.

Recall Boris Cherny's advice: "If you do something more than once a day, turn it into a skill or command."

Code reviews, generating commit messages, writing release notes, and fixing recurring types of bugs should be skills—not tasks you manually prompt every day.

Third, add hooks wherever mistakes are most likely.

Hooks are the highest-leverage part of the 98.4%. They don't rely on the AI becoming smarter; they rely on deterministic code to enforce checks. Writing them is the process of translating human engineers' judgment into machine-readable constraints.

The core of this matter is not writing code, but writing rules.

Karpathy’s widely shared tweet from January: “I’ve gone from writing 80% of the code manually to handing off 80% to agents.”

Over the next five years, the engineer's skill curve will shift from "How many lines of code can I write?" to "How rigorously can I design the working environment for AI?"

The work of writing code is being taken over by agents.

But designing the world in which agents can write excellent code remains human work, and it is harder, more important, and more fascinating than ever.