What should you do to prove your innocence if AI suddenly declares you guilty, and everyone blindly accepts its judgment?

This sounds like the plot of the movie Minority Report, where crimes are predicted and people are framed. But a sloppier, more absurd version has already played out in real life:

An AI system misidentified a suspect, and an innocent woman spent six months behind bars, her life nearly ruined.

I. What Happens When the Police Arrest "The Flash"?

The unfortunate woman is Angela Lipps of Tennessee, USA. In July 2025, a team of armed police burst into her ordinary life, pointed guns at her, and told her she was under arrest.

She was stunned, with no idea what she had done wrong. The police said she was suspected of involvement in a bank fraud case in North Dakota, and the case appeared to rest on solid evidence, complete with a warrant for her arrest. When she heard the reason, she froze:

But I’ve never been to that state in my life.

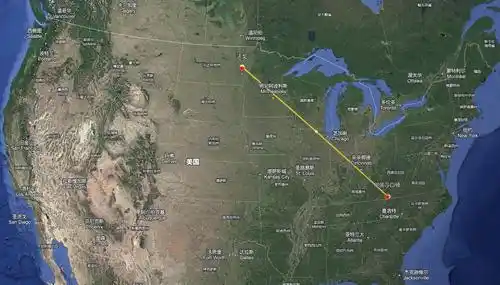

Geographically, Tennessee and North Dakota are indeed far apart, and it was AI that linked her to the suspect.

When the police arrested her, they automatically dismissed her defense; the specific details of the case only became clear after she was imprisoned. Police in Fargo, North Dakota, were investigating a fraud case in which the suspect used a fake military identity to scam tens of thousands of dollars from a bank. Their investigative approach involved reviewing surveillance footage first, then consulting AI, and finally making the arrest.

You might imagine the police had "distilled" Sherlock Holmes into an AI detective that cracks cases through rigorous logical reasoning, but it was nothing so sophisticated. They used an AI system to run facial recognition on screenshots from the surveillance footage, then searched a database for look-alikes.

This time the AI made a major mistake and returned Angela Lipps' photo as a match. The result was passed to the police department handling the case, where staff made an even bigger error: after manually checking her driver’s license and social media, they concluded that her facial features, build, and hairstyle were similar, and identified her as the suspect.

Image from GoFundMe

Hearing this, Lipps was floored: had nobody bothered to check other leads? What if they had the wrong person? But worse was to come: the procedures that followed left her no chance to prove her innocence.

Under local procedure, she was not taken straight to North Dakota for further investigation but held in a local jail in Tennessee. Classified as a "fugitive across state lines," she was denied bail and never given the chance to be questioned by police. She was wrongly locked up for 108 days, from July until the end of October; it is hard to imagine how desperate she must have felt.

On October 30, police finally took Angela Lipps out of jail for transfer to North Dakota, where she faced multiple charges. In transit she was not only handcuffed but also chained around the waist, and walking through the airport felt to her like being paraded in public.

And this was actually her first time ever flying on a plane.

What she didn’t expect was that she would still have to wait after arriving in North Dakota. It wasn’t until December that she finally had a proper opportunity to explain the situation—her lawyer directly pulled her bank records to cross-reference the timeline, proving that at the time of the incident, she was buying pizza in Tennessee, nearly 1,900 kilometers away, meaning she couldn’t have committed the crime unless she were The Flash.

The lawyer clarified the matter for her in just five minutes.

By December 24, the charges against her were finally dropped, but she had little to celebrate: by then she was already on the brink of ruin.

Angela Lipps spent her 50th birthday in jail. While locked up, she was not allowed to wear her dentures, ate only junk food, and endured intense mental stress, all of which led to significant weight gain. She went in wearing summer clothes and came out in winter with no warm coat, so staying warm was hard enough, let alone getting home. The police provided no travel funds, leaving her effectively stranded in North Dakota, and stuck, too, in the day the AI wrongly accused her.

Fortunately, kind-hearted people came to her aid.

Her lawyer arranged a hotel room and some food to get her through the toughest moments; a local nonprofit organization then stepped in and helped her get back home to Tennessee.

But back home, restarting her life was a hellish ordeal. While she was locked up, her finances collapsed: she lost her rented apartment, her dog died, and even her car and everything in it were gone. Her belongings had been put into storage, but because she could not afford the fees, all her household items were eventually discarded. Even her relationships suffered, as neighbors had seen her arrested and then "disappear" for six months, and assumed she really had done something wrong.

Meanwhile, her outrageous story spread quickly online and drew widespread mockery of the system. Many commenters marveled that in the U.S. some people can get away with "free shopping," yet touch a capitalist’s bank account and you are arrested at once, even if you didn’t do it.

Because the story blew up, her desperate situation took a turn for the better and brought her more support. She has now raised $80,000 in crowdfunding donations, more than enough to restart her life and business.

What netizens hope for most now, however, is that she hires a team of top lawyers to sue those responsible relentlessly, win the case, and secure a settlement on top.

This was not an isolated mistake by the police; numerous wrongful cases had occurred before, some of which were even worse than hers.

II. Terrifying Sci-Fi Moments Caused by AI "Mistakes"

Angela Lipps' experience has actually played out more than ten times across different regions, and even in other countries.

For example, Robert Williams was one of the earliest victims of such incidents; in 2020, police arrested him in front of his wife and daughter and detained him for 30 hours, even though he was 20 centimeters taller than the actual suspect.

Chris Gatlin was another victim of extreme bad luck: working from blurry surveillance footage, AI matched him as a potential suspect in a subway assault case, and he was wrongfully detained for 17 months, the longest such incarceration of an innocent person to date. More absurd still, investigators discovered only at the very end that body-camera footage existed as crucial evidence, and the suspect in it bore no resemblance to him whatsoever.

In 2023, Porcha Woodruff experienced the same situation—police accused her of carjacking. She laughed in disbelief upon hearing the accusation, thinking it was a joke, as she was eight months pregnant and clearly not physically capable of committing such an act. Nevertheless, she was taken into custody and held for over ten hours, and later lost her legal case.

This year, a young man in the UK named Alvi Choudhury was arrested under similar circumstances, accused of a "burglary" that, on the evidence, he could only have committed remotely, a feat otherwise seen only in movies. The AI had matched a photo taken when he was detained in 2021; when police saw him in person, they laughed, as he looked at least ten years older than the suspect.

Image from *The Transporter*

In 2022, Indian businessman Praveen Kumar was traveling to Switzerland when, during a layover in Abu Dhabi, AI matched him to a wanted criminal and he was detained. He was interrogated at length there, and once it was confirmed that he was not the suspect, he was sent back to India, only to be detained twice more on arrival at the Indian airport.

Among such incidents, the most outrageous was probably the experience of Russian scientist Alexander Tsvetkov, who was detained for 10 months after an AI claimed he resembled a murderer.

This scientist could be said to have been falsely accused by both AI and humans. In February 2023, after completing a research expedition, he returned to Russia and was arrested immediately upon landing. The reason? AI indicated a 55% similarity between his facial features and the suspect’s composite image from a serial murder case over two decades ago. Additionally, a key witness in the case, seeking a reduced sentence, deliberately gave false testimony identifying him. To make matters worse, the police conducted no thorough investigation and simply made the arrest.

After being detained, he initially thought it was a misunderstanding, but the investigation dragged on indefinitely. Under immense psychological pressure and deteriorating health, he was forced to confess, later retracting his statement. Fortunately, his wife and colleagues from the institute tirelessly worked on his behalf, gathering extensive evidence proving he was on a field investigation elsewhere at the time of the incident. As media coverage grew, the case sparked widespread public discussion. A breakthrough finally emerged in December, and the charges were officially dropped in February 2024.

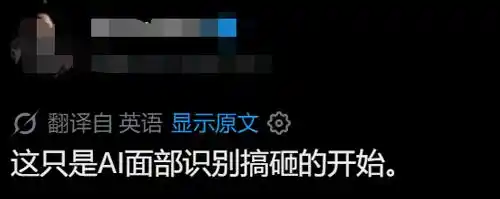

Cases like these are becoming more frequent as AI evolves, and it increasingly looks as if AI is calling the shots while some people merely carry out its orders.

III. The Occupational Hazard of "Let AI Decide"

This absurd sense of "role reversal" arises because both AI and humans can fail: however powerful, AI still makes mistakes, and humans are liable to slack off at any moment.

Take Clearview AI in the United States, the very system that sent the woman at the start of this article to jail. It is no fly-by-night software. Clearview AI claims to hold the world’s largest image database, having aggressively scraped billions of images despite fines from multiple countries. Over 3,000 law enforcement agencies in the U.S. use it, and last year it signed a $9.2 million contract with ICE.

But simply put, Clearview AI is a “facial recognition search engine”—you upload a photo, it matches against its database, and returns a list of similar images; you then determine whether the person in the results is the one you’re looking for.
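To make the "search engine" idea concrete, here is a minimal sketch of similarity search over face embeddings. It is not Clearview AI's actual implementation, which is not public; the function names, the toy three-number "embeddings," and the use of cosine similarity are all assumptions chosen for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def search_faces(probe, database, top_k=3):
    """Return the top_k (name, score) pairs most similar to the probe."""
    scored = [(name, cosine_similarity(probe, vec)) for name, vec in database.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]

# Hypothetical toy data: real systems use long vectors produced by a neural network.
database = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.2, 0.8, 0.5],
    "person_c": [0.88, 0.15, 0.28],
}

# A probe that resembles person_a (and, nearly as much, person_c).
probe = [0.9, 0.12, 0.3]
candidates = search_faces(probe, database, top_k=2)
print(candidates)

# A probe of someone who is NOT in the database still gets a "best match".
stranger = [0.5, 0.5, 0.5]
print(search_faces(stranger, database, top_k=1))
```

Note what the sketch does not do: it never answers "no one." A ranked search of this kind always returns its nearest neighbors, so deciding whether the top result is really the right person is left entirely to the human reading the list.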

Although AI has made rapid progress over the years, its "accuracy" remains controversial, because the data used in benchmark tests often differs from the images used in real-world searches.

For example, the surveillance images police obtain are usually of very low quality, as grainy as if shot on a landline phone, and are further degraded by odd lighting, awkward angles, partially covered faces, and other factors, all of which make identification genuinely difficult. The image database may also contain outdated photos, and any given match could simply be a coincidence of two people looking alike.

When such errors land on real people, it is those people who bear the consequences.
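A bit of back-of-the-envelope arithmetic shows why coincidental look-alikes are hard to avoid at this scale. The sketch below assumes each database photo has the same small, independent chance of falsely matching a probe image; the one-in-a-million rate and the function names are hypothetical, and only the "billions of images" scale comes from the article.

```python
def expected_false_matches(database_size, false_match_rate):
    """Average number of coincidental matches for a single probe image."""
    return database_size * false_match_rate

def prob_at_least_one_false_match(database_size, false_match_rate):
    """Chance that one search returns at least one coincidental match."""
    return 1 - (1 - false_match_rate) ** database_size

# Hypothetical figures: one billion photos, a one-in-a-million false match rate.
database_size = 1_000_000_000
false_match_rate = 1e-6

print(expected_false_matches(database_size, false_match_rate))
print(prob_at_least_one_false_match(database_size, false_match_rate))
```

Under these assumed numbers, a single search would turn up on the order of a thousand strangers who merely resemble the person in the photo, and it would be all but certain to return at least one. A per-comparison error rate that sounds tiny stops being tiny once it is multiplied by a billion.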


Logically, if AI only occasionally makes mistakes, there’s still hope, because these are merely “clues”—the final “conclusions” still require human oversight. But often, humans are even more “outlandish” than AI.

Years ago, critics were already warning that U.S. police were being led by AI into "automation bias": relying on, or outright over-trusting, AI-generated results. The AI says two people match, so the result is accepted without question; the height doesn’t match, but that’s not my problem; there’s an alibi, but my job is just to make the arrest, and everything else can be explained at the jail. By skipping basic investigative steps, they also skip over people’s lives.

Humans are well aware of AI’s shortcomings; after paying substantial compensation, many U.S. jurisdictions have established “firewalls,” such as requiring independent evidence alongside AI-generated leads in investigations, and some have even banned the use of AI technology entirely for criminal investigations.

But these restrictions can't hold back how incredibly useful AI is. Although there's a chance it might fail, successful outcomes clearly occur far more often. Sometimes, the only lead police painstakingly uncover is a surveillance photo of "lock-level" quality—so they inevitably try AI just to see if it works. Back in 2023, reports already emerged that U.S. police had run over a million searches using Clearview AI; even after public use was officially banned, they continued using it covertly, simply denying it when questioned. If one software was blocked, they switched to another; if local use was prohibited, they enlisted other organizations to access it for them—they were utterly hooked.

So, once AI provides an unreliable lead and humans lazily skip the investigation, the combination of these two errors inevitably leads to a miscarriage of justice.

Similar issues have also been faced in recent years by Brazil’s Smart Sampa system. In 2024, São Paulo, Brazil, launched Latin America’s largest AI-powered facial recognition police system, reportedly connected to 40,000 cameras.

The good news is that the system is highly effective: nearly 4,000 criminals have been apprehended on the spot over the past few years, over 3,000 fugitives have been captured, and robbery cases decreased by nearly 15% in 2025—earning it the nickname “crime-fighting assembly line.”

The bad news is that at least 59 people were wrongly identified.

Some of these cases are downright absurd. A psychiatric patient was taken from a hospital as if he were a criminal, only to be released when it turned out that his arrest warrant had expired. Another man was arrested four times within seven months because the AI confused him with a fugitive murderer; each time he was released as soon as he reached the police station, only to be arrested again a few days later, which left him utterly terrified.

When we previously discussed jobs that AI couldn't replace, we always said AI couldn't go to jail for a person—but we never considered the other side: now, it can make people "go to jail."

This used to be a joke, but now it’s almost not even news. In fact, it’s not just facial recognition—AI can sometimes pull off some impressive feats in object recognition too.

Last year, an AI security system at a high school in the U.S. mistook a student’s bag of chips for a “potential firearm,” triggering an alarm. Eight police cars arrived and immediately detained the student; only after a search did officers find the snack wrapper in a trash bin, leaving everyone embarrassed—the student genuinely feared for his life.


Who could have imagined that the more powerful AI becomes, the bigger the mess some people make with it? In the past, AI was clumsy: it misidentified images and became the punchline of the internet for days. Now that AI is powerful, misidentifying one person can land a human in jail for half a year. Let’s not dream up absurdities in the future like AI judges, AI lawyers, or "new-energy" courts. Ultimately, AI is just a tool, and what matters is the user’s ability and intent. Or rather, the more powerful AI becomes, the less room there is for human laziness.

In short, I can't help but miss that clunky old AI a little.

This article is from the WeChat official account "Cool Play Lab," authored by Cool Play Lab.