Over three years, the United States has imposed four rounds of export controls, covering 24 categories of semiconductor equipment and placing more than 140 entities on its blacklist, in an attempt to cut off China’s access to advanced AI chips. Yet according to a report released on March 24 by the U.S.-China Economic and Security Review Commission (USCC), 80% of U.S. AI startups are using Chinese open-source models.

The wall is built at the hardware layer. The door is opened at the software layer.

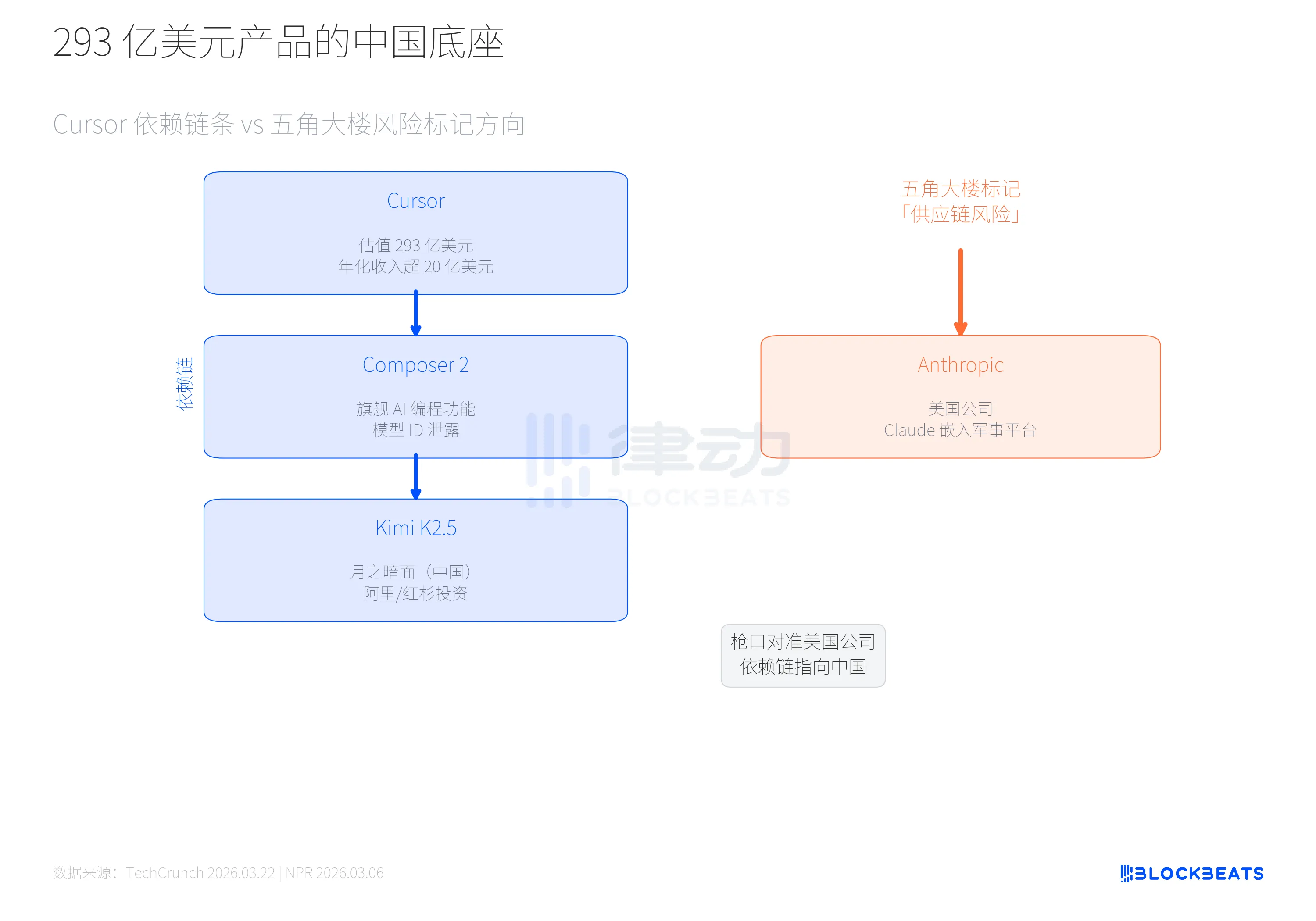

This contradiction is not an abstract policy debate. Just last week, it emerged that Cursor, an AI programming tool valued at $29.3 billion, uses Moonshot’s Kimi K2.5 as the base model for its flagship feature, Composer 2. A model from a Chinese company is powering the leading AI development tool in the United States.

Meanwhile, the Pentagon designated Anthropic, a U.S. company, a “supply chain risk.”

Regulation is pointing in exactly the opposite direction from actual dependency.

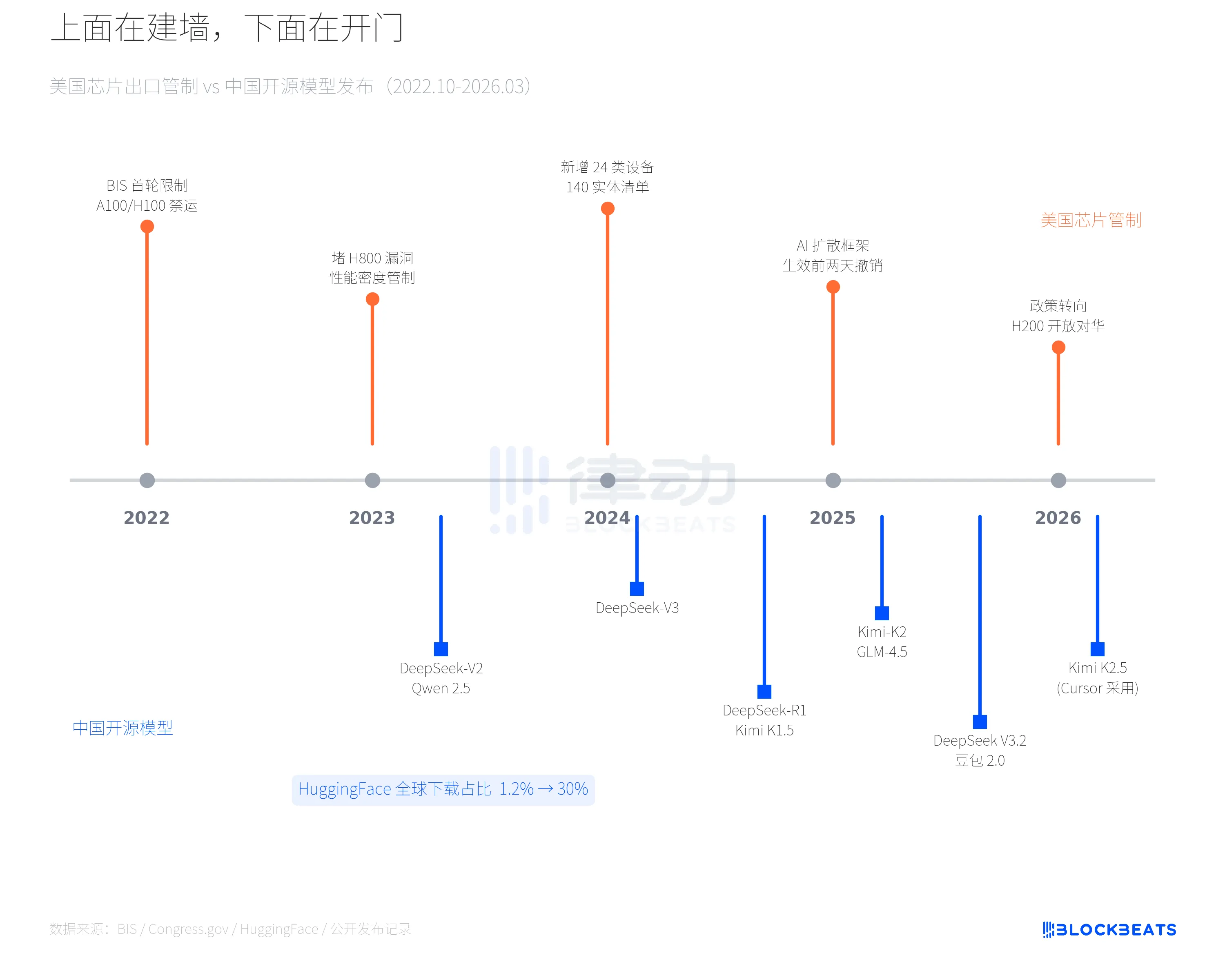

U.S. chip controls have tightened steadily since October 2022, when the Bureau of Industry and Security (BIS) first restricted exports of A100/H100-class chips. In 2023, the H800 loophole was closed and the performance-density metrics were expanded. In December 2024, a further round added controls on 24 categories of semiconductor equipment, placed 140 Chinese entities on the blacklist, and extended restrictions to high-bandwidth memory (HBM) and DRAM. In January 2025, the Commerce Department went further and introduced an "AI Diffusion Framework" intended to establish a global control system at the model level, but it was rescinded two days before it was due to take effect. In December 2025, Trump reversed course again, permitting exports of H200 chips to approved customers in China.

Over the second half of this regulatory timeline, Chinese open-source model releases kept accelerating. In 2024, DeepSeek-V2 and the Qwen 2.5 series were open-sourced in succession. On January 20, 2025, DeepSeek-R1 and Kimi K1.5 were released on the same day, with the former briefly topping the U.S. App Store download chart and overtaking ChatGPT. In the second half of 2025, Kimi K2 and GLM-4.5 followed. By early 2026, ByteDance’s Doubao 2.0 had reached 155 million weekly active users, and Kimi K2.5 had been adopted directly by Cursor. The tighter the controls became, the more models emerged.

According to official data from Hugging Face, the share of Chinese open-source models in global downloads surged from approximately 1.2% at the end of 2024 to around 30% at the beginning of 2026. The cumulative downloads of Alibaba’s Qwen series exceeded 700 million in January 2026, officially surpassing Meta’s Llama. Chip restrictions have not halted China’s software output in AI; instead, they may have accelerated the strategic shift toward open-source approaches.

This is no coincidence in the data. The USCC report describes the phenomenon with a precise framework: the “dual circulation.” In the hardware loop, China is constrained by chip supply bottlenecks. In the software loop, China works its way back into global AI infrastructure through open-source models, creating downstream dependencies. The forces in these two loops move in opposite directions but reinforce each other. Restrictions limit China’s access to top-tier computing power, yet they also drive the development of a technical path that achieves more with less compute. DeepSeek-R1, which achieves frontier performance at a fraction of the inference cost of GPT-4o, is a product of this path.

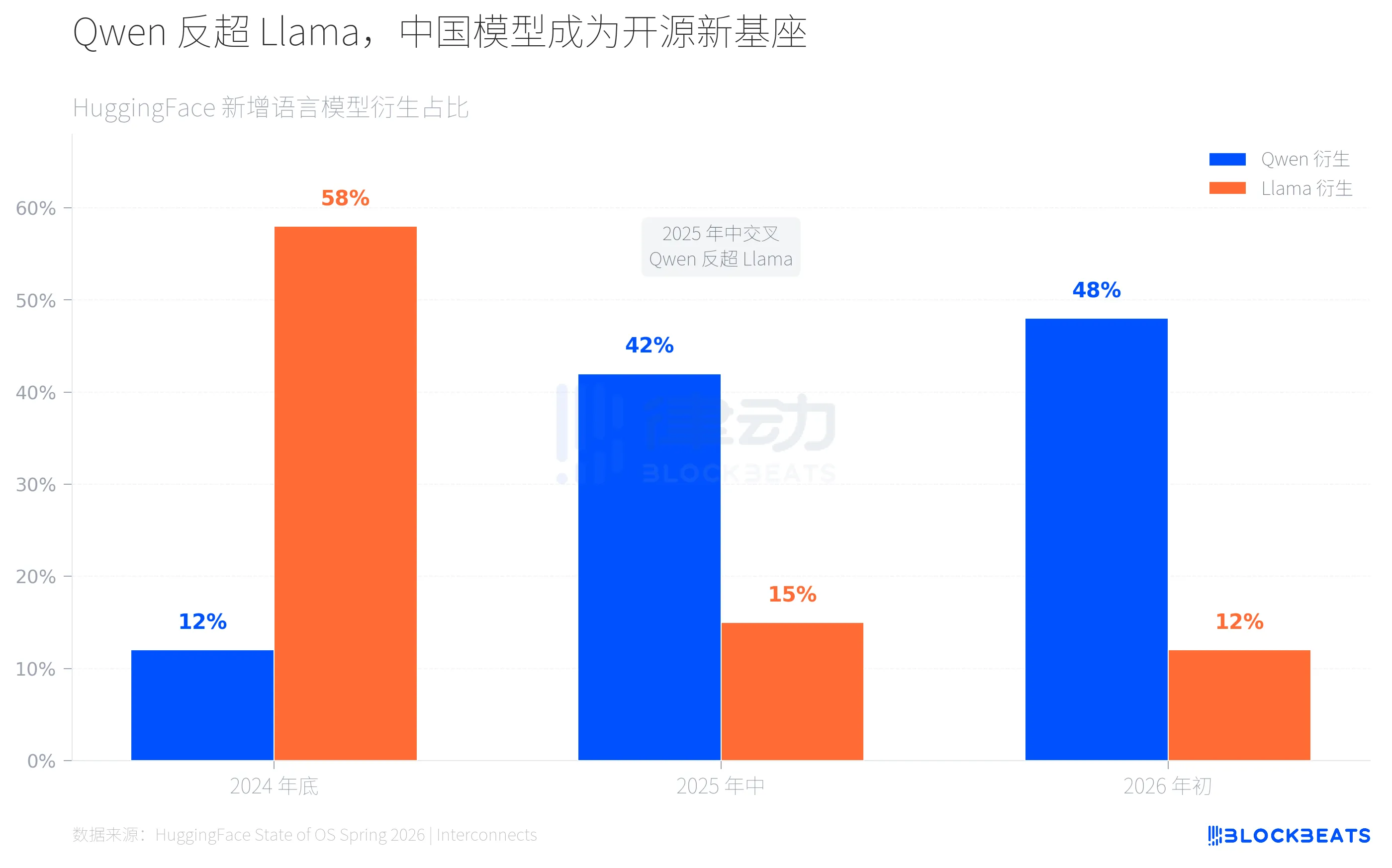

The shift is plainly visible on Hugging Face. According to platform statistics, at the end of 2024 Llama-derived models accounted for about 60% of newly added language models, while Qwen-derived models accounted for just over 10%. By mid-2025 the lines had crossed: according to the official Hugging Face blog, Qwen-derived models had surged to over 40%, while Llama had dropped to around 15%. By early 2026, Qwen-derived models approached half of the total, while Llama had shrunk further to approximately 12%.

The speed of this crossover has exceeded most people's expectations. Two years ago, open-source AI was nearly synonymous with Meta's Llama ecosystem, with developers worldwide fine-tuning, deploying, and building products on Llama. Now the same thing is happening with the Qwen ecosystem, only faster and on a broader scale.

This means that developers worldwide are increasingly choosing Chinese models as the base for building AI applications, not for political reasons but because of performance and openness. The Qwen 2.5 series covers parameter sizes from 0.5B to 72B, allowing developers to fine-tune and deploy on their own hardware without paying API fees to OpenAI or Anthropic. Open source eliminates vendor lock-in and transcends national boundaries.

A notable detail, reported by MIT Technology Review in February, is that Chinese AI companies are pursuing differentiated open-source strategies: DeepSeek focuses on extreme cost efficiency, Kimi emphasizes long-context and coding capabilities, and Qwen pursues comprehensive parameter coverage. This multi-pronged approach is expanding the choices available to developers worldwide. Chinese open-source models are redefining the global AI supply chain on the strength of demonstrated performance.

But what does the end of this supply chain look like?

On March 19, developer @fynnso discovered the model ID `accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast` in Cursor’s code. Cursor co-founder Aman Sanger later acknowledged that Composer 2 is built on Kimi K2.5. According to Cursor VP Lee Robinson, “The base model contributed only about a quarter of the compute; the rest comes from our own training.” But the base is still the base. A product valued at $29.3 billion relies on a base model from Moonshot AI, a Chinese company backed by Alibaba and Sequoia (HongShan).

Set against this dependency chain, the absurdity becomes even more apparent. On March 5, the Pentagon officially designated Anthropic a “supply chain risk.” According to NPR, the reason was Anthropic CEO Dario Amodei’s refusal to compromise on two red lines: the use of AI in autonomous weapons and large-scale surveillance of U.S. citizens. Trump gave the military six months to phase out Claude, which is deeply integrated into military and national security platforms. Anthropic sued the Pentagon on March 9.

On one side, the U.S. government is labeling its own companies a “supply chain risk”; on the other, 80% of U.S. AI startups are running on Chinese models. The former is political maneuvering; the latter is a technological reality. The two do not overlap.

Regulations are piling up at the hardware layer, while dependencies quietly grow at the software layer. On the other side of the three-year chip wall, a new reality is taking shape: China’s open-source AI is no longer a “follower,” but a supplier of global AI infrastructure.
