Although this breach did not involve the core large language model (LLM) weights or sensitive user privacy data, it directly penetrated Anthropic’s technological barrier at the “agent orchestration” layer.

Author: 0x9999in1. Source: ME News

Core Insight

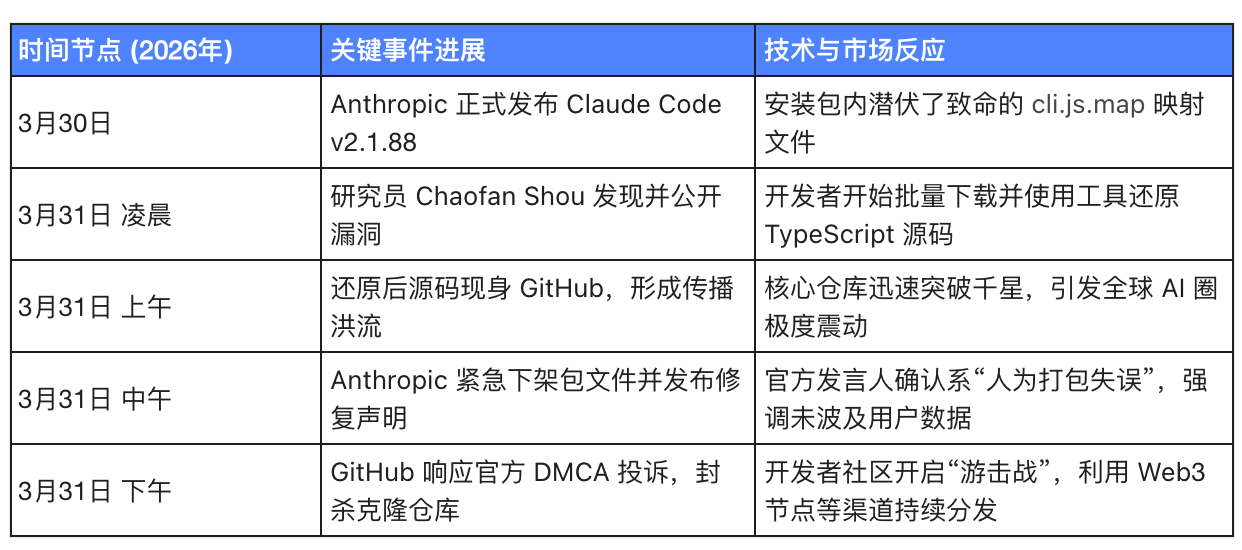

On March 31, 2026, Claude Code, Anthropic’s flagship AI agent product, suffered what may be the most dramatic source code leak in AI history. Roughly 512,000 lines of core TypeScript source code were exposed on the public npm registry because of a basic packaging configuration error. The incident was not the result of an APT attack or insider sabotage; it was a classic build-and-packaging misconfiguration.

ME News Think Tank believes that although this leak did not involve core large language model (LLM) weights or sensitive user privacy data, it directly pierced Anthropic’s technological barrier at the agent orchestration layer. As large model capabilities converge across the industry, vendors’ core competitive advantage is shifting toward agent frameworks and engineering implementation. The full exposure of the Claude Code source code amounts to handing the design blueprints of the industry’s most advanced agent operating system to every competitor and developer for free. This is likely to erode Anthropic’s premium positioning in the enterprise market, and it may also act as a powerful catalyst for standardizing the underlying technologies across the AI agent ecosystem.

Post-mortem: How were 512,000 lines of code “open-sourced with one click”?

In software engineering, the most devastating leaks often originate from the most unassuming configuration files. The leak path of Claude Code clearly illustrates a supply chain blind spot in modern agile development.

Technical root cause: The "transparency" crisis brought by Source Maps

On March 31, 2026, at 10:00 AM, researcher Chaofan Shou from the security research firm Solayer Labs first disclosed on a social platform that Anthropic’s newly released npm package @anthropic-ai/claude-code (version 2.1.88) inadvertently included a 59.8 MB cli.js.map file.

For modern JavaScript/TypeScript developers, source maps are an everyday debugging tool. Code shipped to production is typically minified and bundled, which makes error stack traces nearly unreadable. A source map bridges this gap by mapping positions in the minified output back to the original files, with variable names and comments intact. The map can also embed the full text of those files in its sourcesContent field, which is why a single .map file can be enough to rebuild a project. Such files are meant to stay in development and internal testing environments, yet they are often published alongside production builds by mistake.
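To make the mechanism concrete, here is a minimal sketch of how embedded sources can be read back out of a Source Map v3 file. It assumes the map carries a `sourcesContent` array aligned with `sources`, which is optional in the format; the function name and the two-file demo map are hypothetical and are not taken from the leaked package.

```typescript
// Minimal shape of a Source Map v3 file; only the fields used here.
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
}

// Returns original file paths mapped to their embedded source text.
// Entries with no embedded content (null or missing) are skipped.
function extractEmbeddedSources(rawMap: string): Record<string, string> {
  const map = JSON.parse(rawMap) as SourceMapV3;
  const contents = map.sourcesContent ?? [];
  const recovered: Record<string, string> = {};
  map.sources.forEach((path, i) => {
    const text = contents[i];
    if (typeof text === "string") {
      recovered[path] = text;
    }
  });
  return recovered;
}

// Hypothetical two-file map, for illustration only.
const demoMap = JSON.stringify({
  version: 3,
  sources: ["src/a.ts", "src/b.ts"],
  sourcesContent: ["export const a = 1;", null],
  mappings: "",
});
console.log(Object.keys(extractEmbeddedSources(demoMap)));
```

Only `src/a.ts` is recovered in the demo because the second entry has no embedded content. A map built without embedded sources would still reveal file paths and symbol names, but not the code itself.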

This error is closely tied to Anthropic’s tech stack. Anthropic relies heavily on Bun, a newer JavaScript runtime. According to reports, the Bun build emitted source map files by default, and the publishing configuration did not exclude *.map files through .npmignore (or an equivalent allowlist in package.json), so the proprietary source was bundled into the uploaded package. With basic reconstruction tools, anyone could use this file to restore the complete project directory: approximately 1,900 files and over 512,000 lines of TypeScript code.
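A guard against this class of mistake can be small. The sketch below is a hypothetical pre-publish check, not Anthropic’s tooling: it takes the list of files that would go into the package (for example, the list reported by `npm pack --dry-run`) and refuses to continue if any source map is present. The function names and the demo file list are assumptions made for illustration.

```typescript
// Returns every path in a package file list that looks like a source map.
function findSourceMaps(packedFiles: string[]): string[] {
  return packedFiles.filter((file) => /\.map$/i.test(file));
}

// Throws if any source map would be published; meant for a pre-publish step.
function assertNoSourceMaps(packedFiles: string[]): void {
  const leaked = findSourceMaps(packedFiles);
  if (leaked.length > 0) {
    throw new Error(`Refusing to publish source maps: ${leaked.join(", ")}`);
  }
}

// Hypothetical file list, for illustration only.
const demoFiles = ["package.json", "cli.js", "cli.js.map"];
console.log(findSourceMaps(demoFiles)); // flags only "cli.js.map"
```

An allowlist via the `files` field in package.json is generally a safer default than a denylist in .npmignore, because anything not explicitly listed stays out of the package.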

Rapid spread and a failed containment response

After the disclosure, the code spread rapidly across open-source platforms such as GitHub. The first mirror repository gathered over 1,100 stars and 1,900 forks in less than 12 hours. Anthropic pulled the affected npm package and invoked the DMCA (Digital Millennium Copyright Act) to have GitHub take down the repository, but this did not stop the spread. Many developers turned to decentralized networks and the dark web to keep backup copies of the code.

(Table 1: Timeline and Key Milestones of the Claude Code v2.1.88 Source Code Leak Incident)

Deep Dive into the Leaked Content: Anthropic's Technical Edge

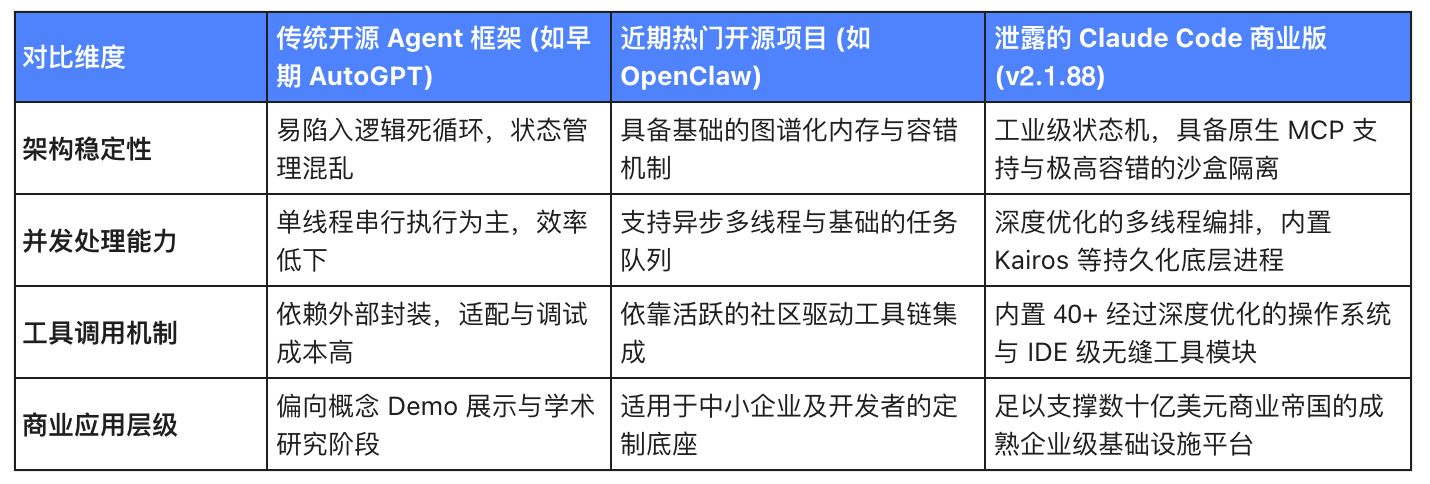

Analysis of the restored codebase reveals this is not a trivial leak of peripheral components, but a direct exposure of the core mechanisms powering Anthropic’s enterprise-grade AI Agent. The code clearly demonstrates how the system evolved from a simple chat assistant into a sophisticated, highly autonomous, and composable intelligent agent orchestration layer.

Memory management and concurrent task orchestration logic

Within the leaked codebase, the most commercially valuable component is the client-side orchestration layer. The industry has long tried to work out how Claude maintains logical coherence across long, complex contexts, and the source code provides the answer. The code details how the client orchestrates MCP (Model Context Protocol) servers and implements its memory architecture, revealing how Claude Code manages multiple concurrent tasks in the background and handles state transitions and context switching among more than forty built-in tool modules.

Unreleased "secret weapon" features and evolution roadmap

The source code also exposes 44 experimental features that are either unreleased or still in staged (gray) rollout. The most notable are a persistent process mechanism called "Kairos" and a silent background operation framework known as "Undercover Mode." These elements indirectly confirm that Anthropic is trying to free AI agents from the constraints of "single-turn Q&A" and evolve them into "digital employees" capable of long-running background operation, automated monitoring, and system-level repairs.

In addition, the codebase contains extensive hard-coded interfaces and environment detection logic for the unreleased model "Claude Mythos" (internal codename "Capybara"). Together with the March 26 incident, in which a misconfigured CMS leaked draft promotional material for the Mythos model, these back-to-back breaches point to significant weaknesses in the engineering practices of this highly valued unicorn.

Impact assessment: From individual developers to enterprise-level ecosystems

Impact on regular users: Privacy is intact, but trust is compromised and supply chain security is questionable

From a purely technical standpoint, the direct losses to individual users and enterprise clients are contained. The leaked material consists solely of the source code for the client-side command-line tool. It does not include the weights of the cloud-hosted Claude models, any user conversation history, or API keys.

However, the impact on long-term business trust is profound. Industry estimates suggest that nearly 80% of Anthropic’s annual revenue of approximately $2.5 billion heavily relies on enterprise clients who prioritize data security and compliance. A company capable of developing top-tier large models yet unable to properly manage a basic .npmignore configuration file will inevitably raise doubts among Wall Street and government procurement agencies worldwide regarding the rigor of its engineering management systems. Furthermore, the exposure of the underlying interaction logic of the MCP server significantly increases the potential risk of supply chain attacks; advanced attackers could exploit known details of the orchestration framework to design malicious local environments that bypass the large model’s security safeguards.

Impact on developers: The premier open-source textbook and technological equity

For global AI developers, this accidental leak has undoubtedly become a celebration of "technological democratization." For a long time, the orchestration logic of top-tier AI agents has been treated as a top secret by major Silicon Valley companies. How to enable large models to reliably control local systems, design elegant failure-retry mechanisms, and implement fine-grained permission isolation has been an engineering mountain blocking progress in the open-source community.
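Two of the engineering concerns named above, failure retry and permission isolation, can be illustrated with a deliberately simplified sketch. Everything here is hypothetical: the `ToolCall` type, the allowlist policy, and the function name are assumptions made for illustration and do not reflect how Claude Code implements either mechanism. Delays between attempts (for example, exponential backoff) are omitted to keep the example synchronous.

```typescript
// Hypothetical description of a tool invocation requested by a model.
interface ToolCall {
  tool: string;
  run: () => string;
}

// Runs a tool call only if its name is allowlisted, retrying on failure
// up to maxAttempts times and rethrowing the last error if all attempts fail.
function runWithPolicy(call: ToolCall, allowed: string[], maxAttempts: number): string {
  if (allowed.indexOf(call.tool) === -1) {
    throw new Error(`Tool "${call.tool}" is not permitted`);
  }
  let lastError: unknown = new Error("maxAttempts must be at least 1");
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return call.run();
    } catch (err) {
      lastError = err; // remember the failure and try again
    }
  }
  throw lastError;
}

// Hypothetical flaky tool: fails twice, then succeeds.
let demoCalls = 0;
const flakyRead: ToolCall = {
  tool: "read_file",
  run: () => {
    demoCalls += 1;
    if (demoCalls < 3) throw new Error("transient failure");
    return "ok";
  },
};
console.log(runWithPolicy(flakyRead, ["read_file"], 5)); // prints "ok" on the third attempt
```

The permission check runs before any attempt, so a disallowed tool is rejected without ever executing, while transient failures in permitted tools are absorbed up to the retry limit.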

The exposure of Claude Code's source code is like an airdrop of a perfect "model answer" to the entire industry. Similar to the open-source AI Agent project OpenClaw, which recently sparked enthusiasm in the developer community, developers now have the opportunity to dissect and absorb the architecture of the world's most sophisticated commercial Agent—such as industrial-grade state machines and sandboxed communication patterns. This will rapidly elevate the industry's engineering benchmark across the board.

(Table 2: Multi-dimensional comparison of AI Agent framework technical maturity and engineering readiness)

Industry shockwave: The Sword of Damocles over the AI Agent sector

The erosion of the valuation foundation and the abrupt shift in competitive dimensions

In the current AI narrative, as the scaling laws of foundation models show diminishing marginal returns, leading companies are shifting their valuation narratives toward the application layer, namely highly automated AI agent frameworks. Claude Code was one of Anthropic’s core competitive advantages in the developer ecosystem against OpenAI’s ecosystem.

ME News Think Tank analysis indicates that this leak fundamentally undermines Anthropic’s exclusive technological advantage in the agent orchestration layer. When competitors and the open-source community can absorb Claude’s engineering design into their own products, capital markets will be forced to reassess the true defensive strength of a moat built on “software engineering tricks” rather than “pure model intelligence.” The demystification of the underlying orchestration code means industry competition will accelerate into its next brutal phase: who can most quickly integrate these now-standardized agent frameworks with domain-specific data and workflows.

Pushing the industry toward a unified standard ecosystem

From another perspective, major code leaks often serve as catalysts for de facto technical standards. Claude Code’s deep, industrial-grade integration with MCP (Model Context Protocol) and its forward-thinking code organization set a high engineering benchmark for future agent interactions. As developers worldwide study, deconstruct, and absorb the leaked code, a wave of open-source “Claude-like” products with comparable engineering rigor is likely to emerge over the coming months. This would accelerate the adoption of AI productivity tools by small and medium-sized enterprises and push the AI industry from fragmented, isolated efforts toward an ecosystem built on unified interoperability standards.

Source Citation

- Borish, D. (2026, March 31). Anthropic's Claude Code Source Code Leaked — What 512K Lines Reveal. David Borish Tech Insights.

- The Economic Times. (2026, March 31). Claude Code source code leak: Did Anthropic just expose its AI secrets, hidden models, and undercover coding strategy to the world?

- Analyst Uttam. (2026, March 31). The Claude Code Leak: 512,000 Lines of TypeScript and What They Reveal. Medium.

- Franzen, C. (2026, March 31). Claude Code's source code appears to have leaked: here's what we know. VentureBeat.

- Securities Times. (2026, March 31). Claude Code Over 510,000 Lines of Source Code Leaked. Securities Times Website.

- 36Kr. (2026, March 31). Claude Code source code leaked; the next trump card is prematurely revealed.

- Sina Finance. (2026, March 31). Fork Going Viral Online! Just Now, the Claude Code Source Code Has Been Leaked and Open-Sourced