Onsite: about sixty people—entrepreneurs, engineers, product managers, investors, recent graduates, and a few who described themselves as "here to listen before figuring things out."

Speaker: Alan Walker, a serial entrepreneur from Silicon Valley and a firsthand witness to three cycles, who now drinks only black coffee and never uses question marks.

Time: April 2026, one week after the release of Project Glasswing.

Not a methodology, not a career skill.

How to survive and thrive in a species-level transformation.

Intro · ALAN WALKER

Someone messaged before arriving, asking, “Alan, now that AI has arrived, is there still a chance for ordinary people?” Alan didn’t respond, because the question itself is misguided.

Before the invention of the Gutenberg printing press in 1440, the most valuable profession in Europe was the scribe. In monasteries, a master scribe held a status equivalent to today’s senior engineer, controlling the production and dissemination of knowledge. After the printing press emerged, some of them disappeared, while others became editors, publishers, authors, and teachers. They did not vanish—they migrated.

Everyone here today is a descendant of those scribes. Your ancestors were not wiped out by the printing press—that’s why you’re sitting here asking this question. Those of you who can sit here and ask this question are among the luckiest people in human history. The question isn’t “Is there an opportunity?”—the question is, “Are you willing to see where the opportunity lies?”

“I’m giving you ten today. No fluff. I’ve thought through each one carefully.” - Silicon Valley’s Alan Walker

LAW I · The opponent is not AI, but people who use AI.

It's not the profession that becomes obsolete—it's those who believe "this doesn't concern me."

Let’s start with a counterintuitive fact: no technological revolution simply eliminates jobs; it eliminates those who refuse to learn. This isn’t motivational fluff; it’s historical record. In 1900, roughly 20 million horses were at work in the U.S. When cars arrived, stable hands disappeared, but mechanics, gas station workers, highway engineers, auto insurance actuaries, and traffic police all emerged. Net gain, not net loss.

In 1997, Deep Blue defeated Kasparov, and many thought professional chess was dead. Instead, a new form of competition called "centaur chess" emerged, and in a 2005 freestyle tournament a team of amateur players running ordinary PCs beat grandmasters paired with computers as well as a dedicated chess supercomputer. It wasn’t the strongest human who won, nor the strongest machine, but the one best at collaborating with the machine. This conclusion applies unchanged to every industry in 2026.

ALAN · On-site

Your competitor today isn’t Claude, isn’t GPT, isn’t Gemini. It’s the person sitting next to you who’s already using these tools—and you’re still wondering if they’re reliable. The adoption curve for technological tools never waits. After the printing press emerged, those who adopted it first in the first five years defined the landscape of knowledge production for the next two centuries. The window today may be even shorter than five years.

It’s not AI replacing you—it’s people who use AI replacing you. These two statements sound similar, but they lead to completely different strategies for how you respond.

LAW II · AI Can't Steal the Mistakes You've Made

Large language models can learn all the knowledge that has been written down. They cannot learn the part you haven’t written down—and that part is what truly makes you valuable.

Philosopher Michael Polanyi wrote a slim hundred-page book in 1966 titled "The Tacit Dimension" (Polanyi, 1966). Its central proposition is simply this: "We can know more than we can tell." He gave an example: you can recognize a face, but you cannot explain to me how you do it. This ability resides in your nervous system, cannot be articulated in language, and therefore cannot be taught or replicated.

The essence of large language models is the extreme compression and retrieval of knowledge that humans have already expressed. They absorb everything that has been written: textbooks, papers, code, conversations. But there is a type of knowledge they cannot access: the judgment you’ve built through eighteen failed projects, the intuition you develop after encountering a certain situation three times, the instinct for human nature you gain after years of navigating an industry. These things have never been recorded in any document—they exist in your brain as neural circuits, triggered only by experience and impossible to convey through language.

So, the experiences you thought were useless are actually your true moat in the AI era. The detours you took, the pitfalls you encountered, and the wrong bets you made are assembling into a rare asset that AI cannot replicate, provided that you consciously systematize them: write them down, share them, and teach them to others.

ALAN · On-site

I know someone who has been in the restaurant business for eighteen years—he doesn’t know Excel, can’t code, and speaks Mandarin haltingly. But thirty minutes before a new restaurant opens, he walks through the space and tells you which dish will fail today, which staff member is off their game, and roughly what the table turnover rate will be tonight. How does he know? He can’t explain it. But that “can’t explain it” is worth millions. AI can generate a complete restaurant management manual, but it hasn’t walked through the eighteen years of pitfalls he has.

Systematize the pitfalls you've encountered. Articulate your failure cases. This isn't writing a memoir—it's forging the most underappreciated moat of the AI era.

LAW III · Depth is the credential; cross-domain capability is the weapon

AI can be "good enough" in any single field. What it cannot do is overlay the underlying logic of two fields to see a third possibility.

In economics, there is a concept called "comparative advantage," introduced by Ricardo in 1817. It means you don’t need to be better than others at everything—you only need to be more efficient at a particular combination of skills. Today, the source of comparative advantage has shifted from single skills to interdisciplinary combinations—your background in biology, combined with your financial intuition and product thinking, creates a perspective that AI cannot replicate using any single training dataset.

Truly paradigm-shifting innovations in human history have almost never occurred within disciplines—they happen at the boundaries. Mendel, a monk, used statistics to study peas and laid the foundation for genetics. Shannon, a mathematician, applied the concept of entropy from thermodynamics to understand communication and created information theory. Jobs, a Zen practitioner and aesthetician, fused humanities and engineering to define consumer technology. In an era where AI can rapidly master any single field, the ability to connect across disciplines remains one of humanity’s last cognitive advantages.

Find your deepest area of expertise—this is your anchor; without it, everything else is just drifting seaweed.

Deliberately acquire sufficient knowledge in two to three adjacent or opposing areas; mastery is not required.

Train "connection intuition": Can the underlying logic of this field explain phenomena in that field?

AI helps you search, and you make the connections—that’s division of labor, not competition.

ALAN · On-site

The most impressive investor I’ve ever seen isn’t the one with the strongest finance background, but the one with solid financial acumen, genuine technical intuition, deep insight into human nature, and a memory of history. These four dimensions together cannot be replicated by AI today—because the core of “insight” is integration, and integration requires having been confronted by diverse systems in the real world, not merely pattern-matching retrieved from training data. Your complex experiences are a domain AI cannot yet colonize.

Without breadth, only depth, you are a well. With cross-domain connections, you become a network. AI is water—it flows into all wells, but the network is one you weave yourself.

LAW IV · Attention is the only truly scarce resource in the AI era

AI has driven the cost of producing information close to zero, meaning information itself is approaching zero value. Its scarce complement—focused attention—is becoming the most valuable currency of this era.

Herbert Simon wrote in 1971: "A wealth of information creates a poverty of attention." He said this before the internet was born, relying solely on basic economic logic: when anything becomes extremely abundant, its own value declines, while the value of its scarce complements rises.

Today, the amount of content generated by AI daily exceeds the total produced by humans over the past several hundred years. Your brain hasn’t been upgraded, and your total attention is fixed. What you give your attention to is what you’re voting for—and what you’re cultivating. Someone who spends three hours a day drifting through fragmented information isn’t just wasting time; they’re actively downgrading their cognitive system into a consumption terminal—capable only of receiving, not creating; only reacting, not thinking.

Here is a counterintuitive conclusion: In the AI era, deep reading ability is rarer and more valuable than programming skill. AI can write code, retrieve information, and generate reports—but it cannot replace your ability to truly understand a book and integrate it into your own system of judgment. A person who can focus for long periods, think independently, and make autonomous judgments is a collaborator with AI. Someone who only consumes fragmented content is merely an AI consumption terminal. Terminals don’t think—they only receive.

ALAN · On-site

I have a test: pick a book you consider important, sit down, and read it for two hours without touching your phone. If you can’t do it, your attention has been colonized. This isn’t a moral judgment—it’s an assessment of cognitive ability. In an era where AI levels everyone’s productivity, those who can maintain deep focus are cognitive nobles—not because they’re smarter, but because they’ve preserved what most people have already given up.

Protecting your attention is protecting your cognitive sovereignty. Surrendering your attention is voluntarily downgrading yourself to a consumption terminal for AI, rather than a collaborator with AI.

LAW V · Trust is the only thing AI cannot mass-produce.

AI can generate your resume, mimic your writing style, and forge your voice. But it cannot replicate the trust you’ve built over time through consistent follow-through in real relationships.

What is the essence of trust? From a game theory perspective, trust is the result of repeated interactions (Axelrod, 1984). When two individuals engage in sufficiently many interactions and consistently verify that each other’s actions match their promises, they are willing to reduce their defensive costs and enter a more efficient state of cooperation. This process cannot be compressed, forged, or mass-produced, because its essence lies in a record of promises kept over time.

When AI can generate any content and mimic any style, genuine human trust experiences a paradoxical increase in value. The more AI proliferates, the rarer and more valuable it becomes to be "a real person—and reliable." Your reputation is your only anti-counterfeiting label in the age of AI.

On a deeper level, trust isn’t just “you do what you say you’ll do”; trust is “others are willing to place uncertainty on you.” When someone entrusts you with something whose outcome is unknown, it’s not because they’re certain you’ll succeed, but because they believe you’ll give it your all, provide honest feedback, and never disappear. This relationship of trust is a private contract that AI cannot enter: it is offline, emotional, and built over time.

ALAN · On-site

I know someone with no elite school background, no experience at a big tech company, and broken English. The only thing he has is that over the past fifteen years, he has never failed to follow through on a promise he made. Now, every time he sends a message, fifty people prioritize replying to him. In the age of AI, this is called signal penetration. In a world saturated with infinite noise generated by AI, his signal is clear. None of these fifty people respond to him because his resume looks impressive.

Every time you keep your promise, you're making the most valuable investment in the AI era. Every time you break it, you're destroying assets that AI cannot help you rebuild.

LAW VI · Answers are depreciating; good questions are appreciating.

AI can answer any question in three seconds. It doesn’t know which question is worth asking. That “not knowing” is where you stand.

For three hundred years, the entire human education system has trained people in one thing: answering standard questions. Exams test answers, interviews test problem-solving, and performance evaluations test output. The underlying assumption of this system was that questions are fixed and answers are scarce. With the emergence of AI, this assumption has been completely overturned: answers are no longer scarce—good questions have become the rare commodity.

A line often attributed to Einstein holds that if he had one hour to solve a life-or-death problem, he would spend fifty-five minutes defining the problem and five minutes finding the solution. In 2026, the meaning has changed: those five minutes, you can outsource to AI. Those fifty-five minutes, only you can do.

What makes a good question? A good question has three characteristics: first, it reveals things you hadn’t seen before; second, it prompts the other person in the conversation to reexamine their assumptions; third, it opens up a new space of possibilities rather than narrowing the boundaries of an existing answer. Developing this ability comes from extensive reading, deep conversations, and constantly shifting between different systems—until you develop an instinctive skepticism toward what seems obvious.

ALAN · On-site

In the age of AI, the most competitive way to work is this: you start with a good question, AI generates ten answers, and then you use an even better question to extract an eleventh answer—the direction AI never thought of. In this feedback loop, you are the director, and AI is the actor. If you merely accept AI’s output, you are an audience member. Audience members don’t get paid like directors. The world always lacks great directors—and never lacks audience members.

Learning to ask questions is more valuable than learning to answer them. AI can answer anything, but it doesn’t know what to ask. That “unknown” is your domain.

LAW VII · Find the places where value comes from people

Not all efficiency is worth optimizing. There is a type of value that becomes more expensive precisely because it is inefficient and requires human involvement.

Veblen described in 1899 a category of unusual goods—Veblen goods—where demand increases with price, because the high price itself is part of the value. Today, human participation is becoming a Veblen attribute for a certain class of services: they are valuable because real people are involved; the scarcer they are, the more valuable they become.

Think about it: the difference in value between a doctor who truly understands your situation and an AI-generated diagnosis report. The irreplaceable nature of a friend sitting across from you during your hardest moments versus any AI companion app. The fundamental distinction between a decision-maker who can make a call in person and take immediate responsibility for the consequences, and an AI-optimized recommendation. The common thread in these scenarios is that human presence itself is part of the value—and an inseparable part.

From an evolutionary perspective, this is not surprising. Humans are super-social animals, and our nervous systems are designed to respond to real human presence. Oxytocin, mirror neurons, facial recognition systems—these mechanisms do not respond to AI. When an AI tells you, “I understand how you feel,” your limbic system knows it’s fake, even if your rational mind is momentarily convinced. Humans have a biological need for human presence that cannot be replaced by digital substitutes.

ALAN · On-site

I predict an industry that will surge against the tide in the AI era: end-of-life care. It’s not because AI can’t provide information or companionship, but because no one wants to spend their final moments facing a screen. This is an extreme case of the “human premium,” yet it illustrates a universal principle: find the areas where increased automation makes people feel more empty—that’s your opportunity. The colder and more efficient a place becomes, the more valuable human warmth is.

Ask yourself: If this entire task were done by AI, what would the customer lose? That "thing lost" is your permanent moat.

LAW VIII · Uncertainty is not your enemy; it is your final advantage.

Evolution never rewards the strongest; it rewards those who survive the longest through change. Those who keep the ability to act under high uncertainty are the truly strong of the AI era.

Nassim Taleb, in Antifragile (Taleb, 2012), introduced a framework that transformed my worldview: there are three types of systems in the world. Fragile systems break under stress; robust systems endure stress; antifragile systems grow stronger under stress. He argues that nature rewards not robustness, but antifragility. Muscles grow under stress, the immune system strengthens through infection, and economies advance through creative destruction.

The uncertainty of the AI era is structural and will not disappear. Every few months, new models emerge, new capability boundaries are pushed, and entire industries are reshaped. This is not temporary chaos—it is the new normal. You cannot predict the next card. What you can do is train yourself to act, learn, and maintain direction even when you don’t know what the next card will be.

A more fundamental truth: uncertainty is the last weapon ordinary people have against large institutions. Corporations, governments, and big capital hold absolute advantages in a world of certainty—they have resources, scale, and moats. But in a rapidly changing, uncertain environment, their size becomes a burden, their processes become shackles, and their history becomes a liability. Meanwhile, you, as an individual who can make decisions within 72 hours and fully pivot within a week, possess a flexibility that no large institution can ever replicate.

ALAN · On-site

Be more specific: Place small bets, iterate quickly, and never go all-in on any single assumption. Build a life structure that absorbs mistakes, not one that must always be right. Keep the cost of failure within what you can afford, and maximize your learning speed to the highest level you can sustain. You can’t predict which industry AI will disrupt next. But you can train yourself to feel excitement, not panic, when it happens. Large institutions fear uncertainty because they’re too heavy to turn quickly. You’re light—you can turn. This is your final structural advantage; don’t waste it with anxiety.

Uncertainty is the only structural advantage ordinary people have against large institutions. Institutions fear it; you should embrace it.

LAW IX · Keep outputting, turning your knowledge into public assets.

AI enables everyone to "create content." But content and opinions are two different things. Those who have unique perspectives and consistently express them will gain exponential visibility amid the noise of AI.

In economics, there is a concept called "network effects," often summarized by Metcalfe's law (Metcalfe, 1980): the value of a network is proportional to the square of the number of its nodes. Your public expressions are nodes in the network of human knowledge. Each article, each speech, each idea increases the number of your connections. But the value of a node comes from its uniqueness, not from its quantity.

Before AI made content production cost nearly zero, scarcity lay in production capacity. Afterward, scarcity lies in unique perspectives worthy of trust. Anyone can use AI to generate an article titled “A Survival Guide for the AI Era,” but not everyone can write an article that leaves readers feeling, “This person has seen the real world.” The latter requires real-life experience, independent judgment, and sustained reflection—three things AI cannot replace.

The more fundamental logic is: if you don’t output, you don’t exist. In the digital age, existence means being seen—only when seen can value flow. Someone who holds many great ideas in their mind but never expresses them is equivalent in the world’s information stream to someone who knows nothing—they are both invisible. Turning your knowledge into public assets is the most underappreciated compounding behavior in the AI era.

ALAN · On-site

I know someone who manages a factory in a second-tier city, with no elite education or impressive resume. Three years ago, he began writing online about his real-world experiences running a factory—not theoretical methods, but raw, painful failure stories and the lessons he learned from them. Today, he has 200,000 readers, three factories have reached out to him for consultation, and publishers have approached him to write a book. He didn’t become smarter—he simply took what was inside his head and put it out into the world. The world saw it, and value flowed to him. If you don’t output, the world doesn’t know you exist.

Put what’s in your mind out into the world—not to perform, but so the world knows you exist, and so value knows where to find you.

LAW X · Manage Your Energy, Not Your Time

Time management is a logic from the industrial era—factories required steady output, so you traded time for products. In the AI era, what’s needed is creative cognitive breakthroughs, so you need to manage energy, not time.

The core assumption of the industrial era is that output is a function of time: work eight hours, produce eight hours' worth of value. This logic holds on an assembly line because assembly line work is linear, additive, and does not require peak performance. But creative work is not linear. Two hours in peak condition can produce something that twenty hours of exhaustion cannot.

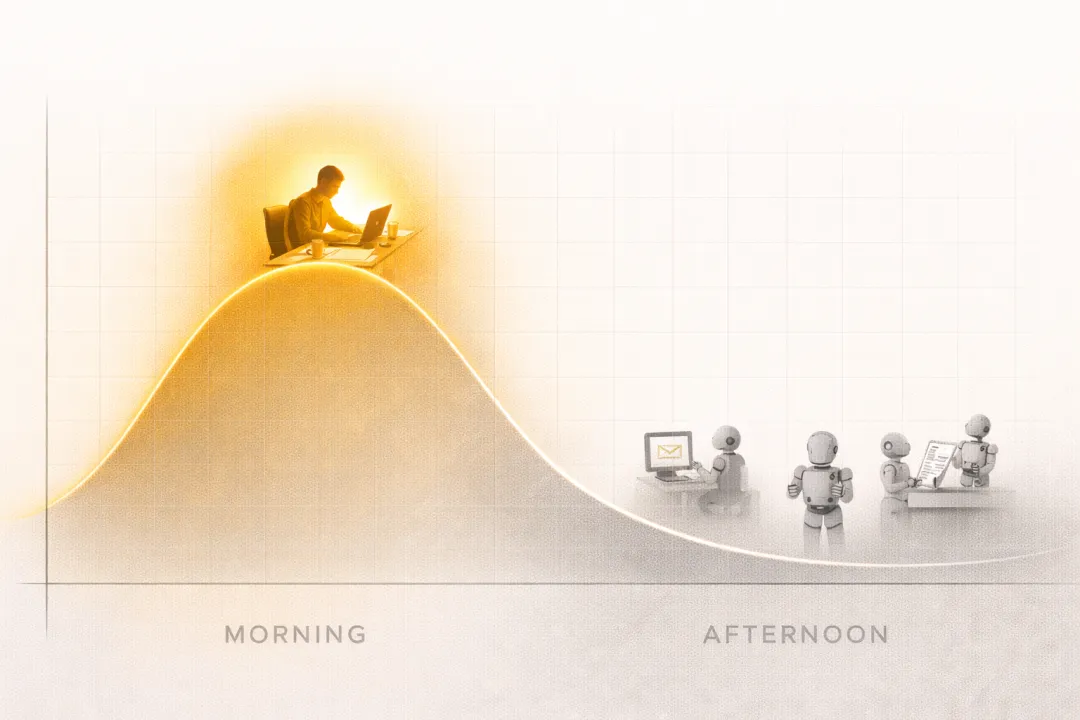

Cognitive science supports this (Kahneman, 2011): higher cognitive functions (deep analysis, creative connections, complex judgment) rely on high levels of activity in the prefrontal cortex. This state is extremely energy-intensive and available only in limited daily windows. Most people spend this most expensive time window on emails, scrolling through social media, and attending low-quality meetings, then use their remaining exhausted state to tackle tasks requiring deep thinking, only to complain about low productivity and lack of creativity.

In the age of AI, this mistake becomes even more deadly. AI can now handle all low-cognitive-effort tasks—information retrieval, formatting, data aggregation, and standard writing. What it cannot replace is your judgment, insight, connection, and creativity produced during your peak cognitive states. If you spend your peak hours on low-value tasks, you’re using your most expensive resource to do the cheapest work, while leaving the tasks that most need you to your lowest state.

ALAN · Final Round

I have about three peak hours every morning. During those three hours, I don’t check messages, attend meetings, or reply to emails. I do only one thing: think about the most important question for the day. Everything else—including a large volume of work—I delegate to AI or save for the afternoon. This isn’t laziness; it’s rational allocation. The value of your three most precious hours each day depends entirely on how you use them. Since AI arrived, the answer to this question has become even more extreme: use them right, and your peak output can be ten times that of an average person; use them wrong, and your low points are no different from AI’s. Asimov wrote three laws of robotics to set boundaries for machines. Today, I give you these ten principles to help humans reclaim their place. Your place is in your peak—not on the assembly line.

You don’t need more time. You need to protect your best time for doing only what you can do.

AI is not your ceiling—it's your leverage.

Your place is at your peak, not on the assembly line.

I Your opponent is never AI; it's the person who uses AI.

II AI can't steal the mistakes you've already made.

III Depth is the credential; cross-domain capability is the weapon.

IV Attention is the only truly scarce resource in the AI era.

V Trust is the only thing AI cannot mass-produce.

VI Answers are depreciating; good questions are appreciating.

VII Find the places where value comes from people.

VIII Uncertainty is not your enemy; it is your final advantage.

IX Keep outputting, turning your knowledge into public assets.

X Manage your energy, not your time.

-Melly